Model providers give your workspace access to AI models. Every application you build needs models to function, and configuring providers at the workspace level means all team members can use them across all projects.

System vs Custom Providers

System Providers are managed by Dify. You get immediate access to models without setup, billing through your Dify subscription, and automatic updates when new models become available. Best for getting started quickly.

Custom Providers use your own API keys for direct access to model providers like OpenAI, Anthropic, or Google. You get full control, direct billing, and often higher rate limits. Best for production applications.

You can use both simultaneously: system providers for prototyping, custom providers for production.

Configure Custom Providers

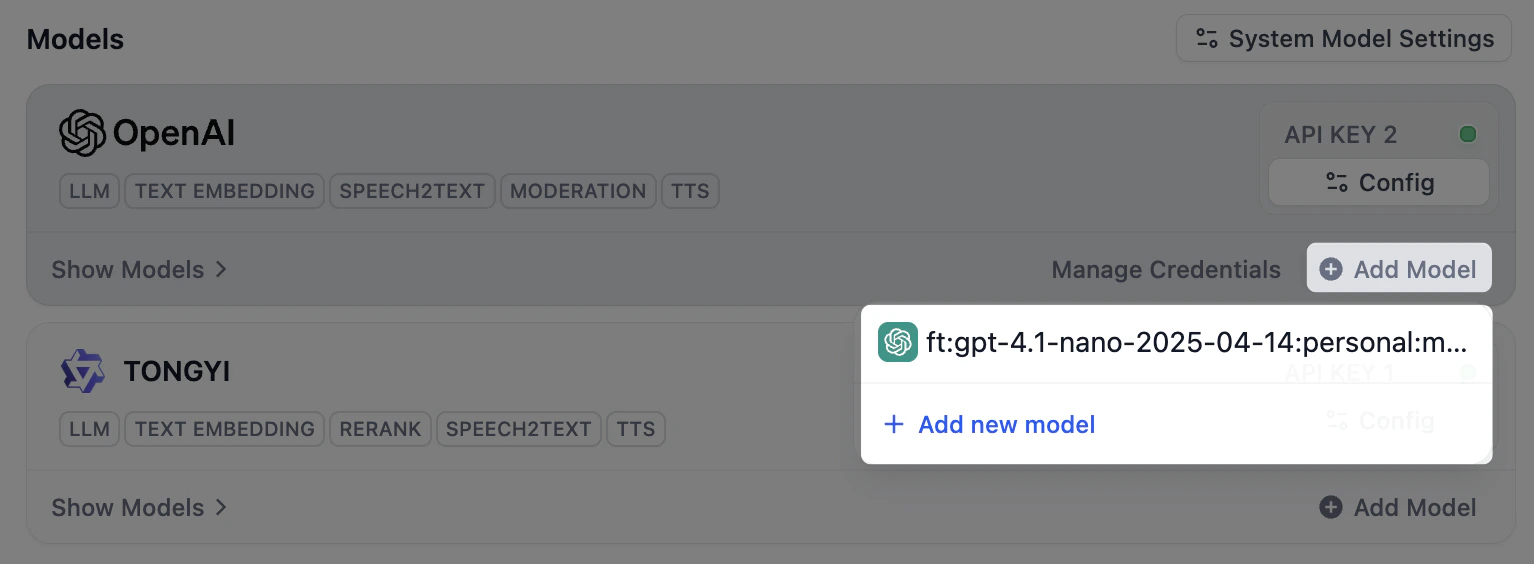

Only workspace admins and owners can configure model providers. The process is consistent across providers:

- Navigate to Settings → Model Providers to access the model provider configuration in your workspace settings.

Supported Providers

Large Language Models:

- OpenAI (GPT-4, GPT-3.5-turbo)

- Anthropic (Claude)

- Google (Gemini)

- Cohere

- Local models via Ollama

Embedding Models:

- OpenAI Embeddings

- Cohere Embeddings

- Azure OpenAI

- Local embedding models

Other Model Types:

- Image generation (DALL-E, Stable Diffusion)

- Speech (Whisper, ElevenLabs)

- Moderation APIs

Provider Configuration Examples

- OpenAI

- Anthropic

- Local (Ollama)

Required: API Key from OpenAI Platform

Optional: Custom base URL for Azure OpenAI or proxies, Organization ID for organization-scoped usage

Available Models: GPT-4, GPT-3.5-turbo, DALL-E, Whisper, Text embeddings
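To make the required and optional fields concrete, here is a minimal Python sketch of how such a configuration might be represented and turned into HTTP request headers. The `OpenAIProviderConfig` class and its methods are hypothetical and are not part of Dify. The default base URL and the `Authorization` and `OpenAI-Organization` headers follow the public OpenAI API; Azure OpenAI and some proxies may authenticate differently, so treat the header logic as an assumption.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Public OpenAI API endpoint; a custom base URL replaces it.
DEFAULT_BASE_URL = "https://api.openai.com/v1"


@dataclass
class OpenAIProviderConfig:
    """Hypothetical container for the fields listed above (not a Dify class)."""

    api_key: str                            # required
    base_url: str = DEFAULT_BASE_URL        # optional
    organization_id: Optional[str] = None   # optional

    def __post_init__(self) -> None:
        if not self.api_key.strip():
            raise ValueError("api_key is required")
        # Drop trailing slashes so endpoint paths can be appended safely.
        self.base_url = self.base_url.rstrip("/")

    def headers(self) -> Dict[str, str]:
        """Build the headers used by the public OpenAI API."""
        headers = {"Authorization": f"Bearer {self.api_key}"}
        if self.organization_id:
            headers["OpenAI-Organization"] = self.organization_id
        return headers

    def endpoint(self, path: str) -> str:
        """Join the base URL with an API path such as 'models'."""
        return f"{self.base_url}/{path.lstrip('/')}"


# Placeholder values for illustration only.
config = OpenAIProviderConfig(
    api_key="sk-placeholder",
    base_url="https://proxy.example.com/v1/",
    organization_id="org-placeholder",
)
models_url = config.endpoint("/models")
```

Keeping the organization ID optional mirrors the field list: the header is only sent when organization-scoped usage is wanted.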

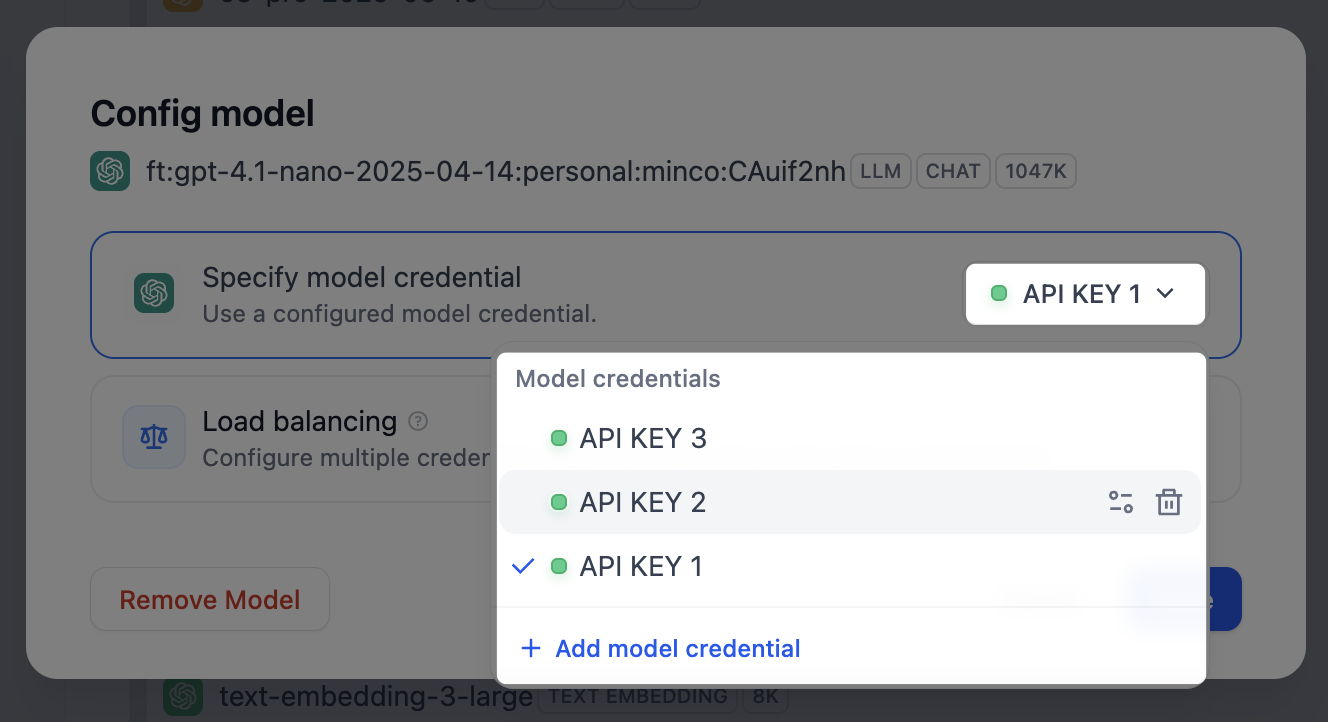

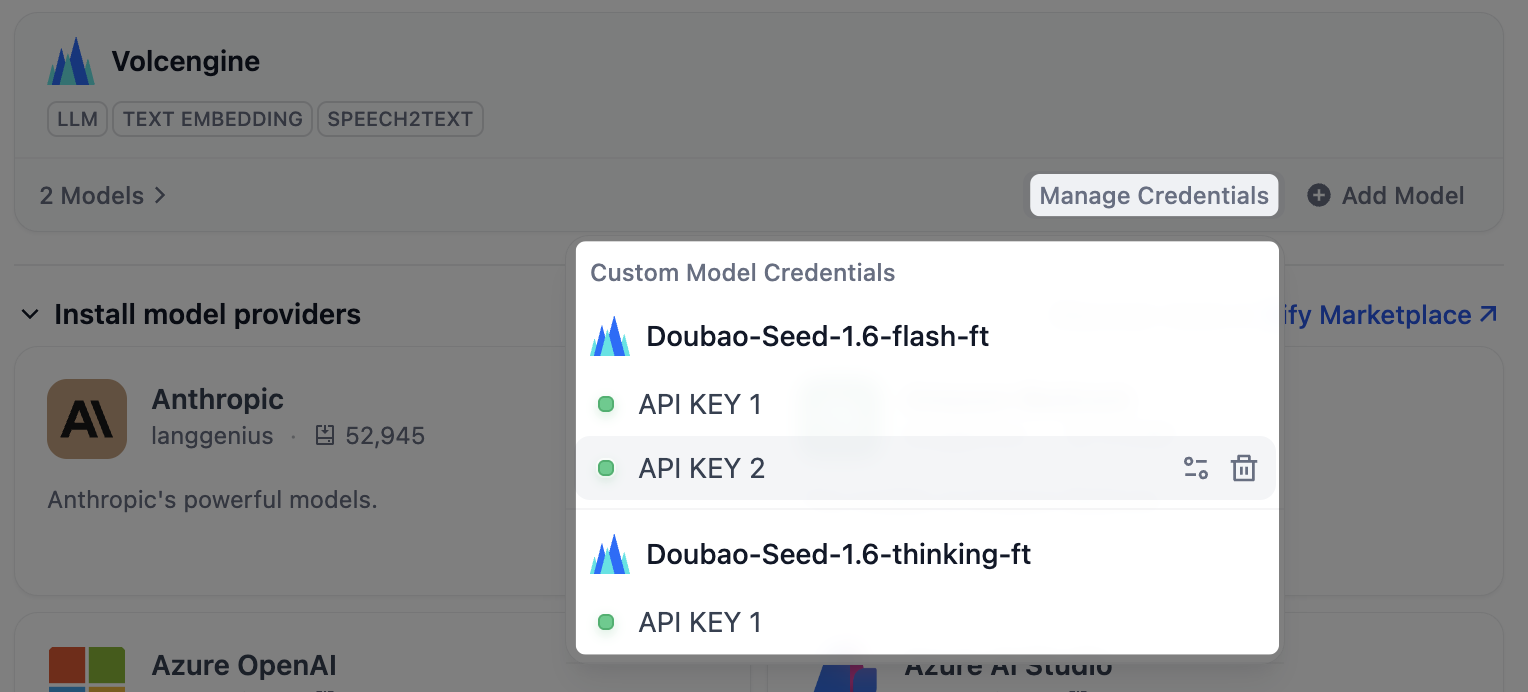

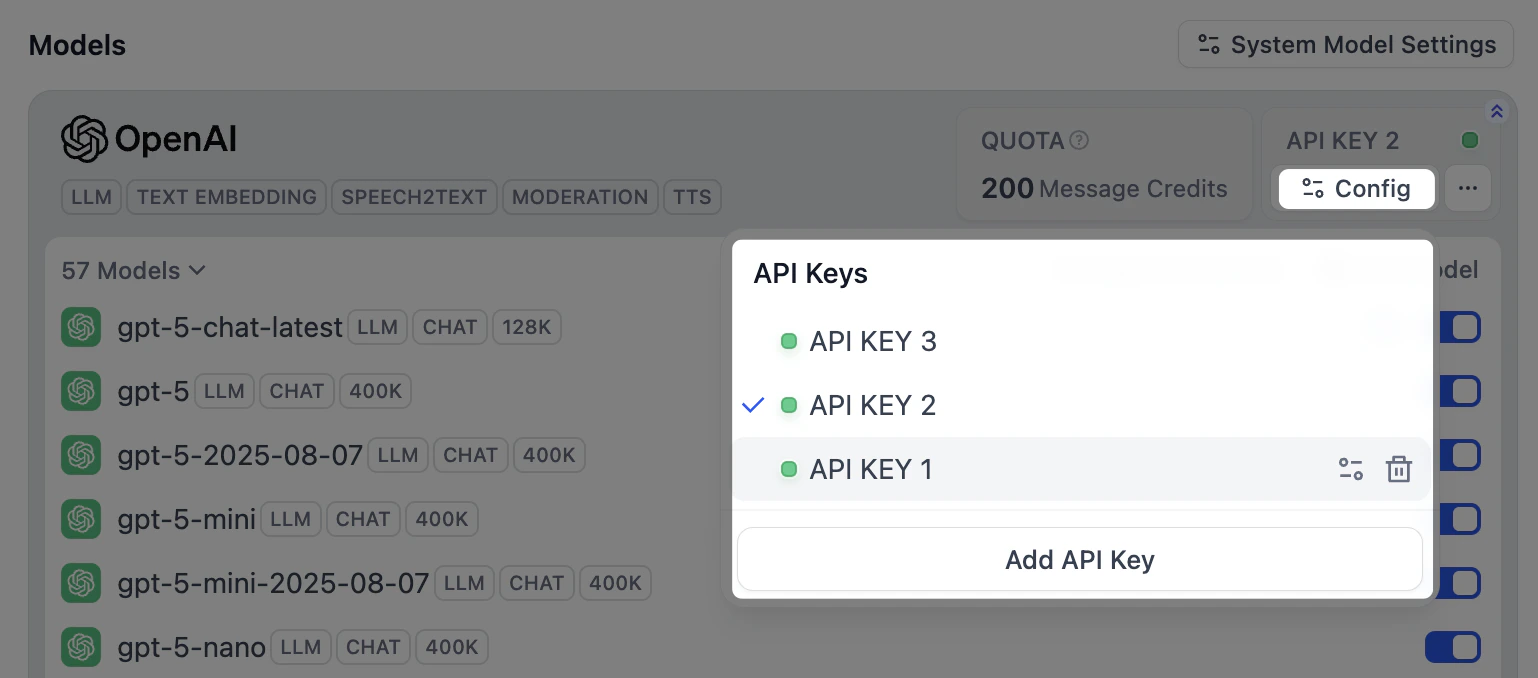

Manage Model Credentials

Add multiple credentials for a model provider’s predefined and custom models, and easily switch between, delete, or modify these credentials. Here are some scenarios where adding multiple credentials is particularly helpful:

- Environment Isolation: Configure separate model credentials for different environments, such as development, testing, and production. For example, use a rate-limited credential in the development environment for debugging, and a paid credential with stable performance and a sufficient quota in the production environment to ensure service quality.

- Cost Optimization: Add and switch between multiple credentials from different accounts or model providers to maximize the use of free or low-cost quotas, thereby reducing application development and operational costs.

- Model Testing: During model fine-tuning or iteration, you may create multiple model versions. By adding credentials for these different versions, you can quickly switch between them to test and evaluate their performance.

- Predefined Model

- Custom Model

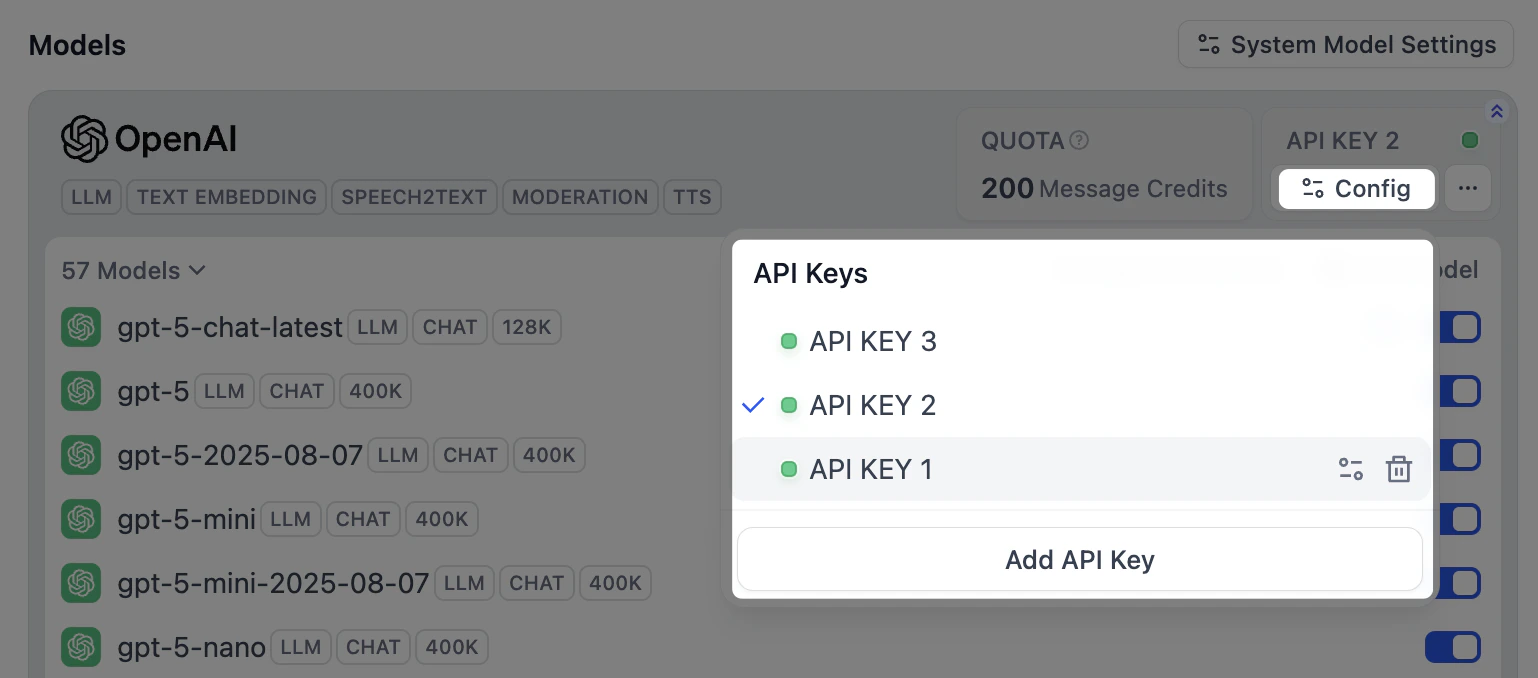

After installing a model provider and configuring the first credential, click Config in the upper-right corner to perform the following actions:

- Add a new credential

- Select a credential as the default for all predefined models

- Edit a credential

- Delete a credential

If the default credential is deleted, you must manually specify a new one.
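The four actions above, and the rule about deleting the default, can be modeled with a small in-memory sketch. `CredentialStore` and its method names are hypothetical and only illustrate the behavior described here; they do not reflect how Dify stores credentials internally. The credential names and keys are placeholders.

```python
from typing import Dict, Optional


class CredentialStore:
    """Hypothetical in-memory model of one provider's credentials."""

    def __init__(self) -> None:
        self._credentials: Dict[str, str] = {}  # credential name -> API key
        self.default: Optional[str] = None

    def add(self, name: str, api_key: str) -> None:
        if name in self._credentials:
            raise ValueError(f"credential {name!r} already exists")
        self._credentials[name] = api_key

    def set_default(self, name: str) -> None:
        if name not in self._credentials:
            raise KeyError(name)
        self.default = name

    def edit(self, name: str, api_key: str) -> None:
        if name not in self._credentials:
            raise KeyError(name)
        self._credentials[name] = api_key

    def delete(self, name: str) -> None:
        del self._credentials[name]
        if self.default == name:
            # No automatic fallback: a new default must be chosen manually.
            self.default = None

    def resolve(self) -> str:
        """Return the API key of the current default credential."""
        if self.default is None:
            raise LookupError("no default credential selected")
        return self._credentials[self.default]


store = CredentialStore()
store.add("development", "sk-dev-placeholder")
store.add("production", "sk-prod-placeholder")
store.set_default("production")

store.delete("production")      # the default is now unset
store.set_default("development")  # manual re-selection
```

Separate named credentials per environment also cover the environment-isolation scenario: switching from development to production is a single change of default.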

Configure Model Load Balancing

Load balancing is a paid feature. You can enable it through a paid SaaS subscription or an Enterprise license.

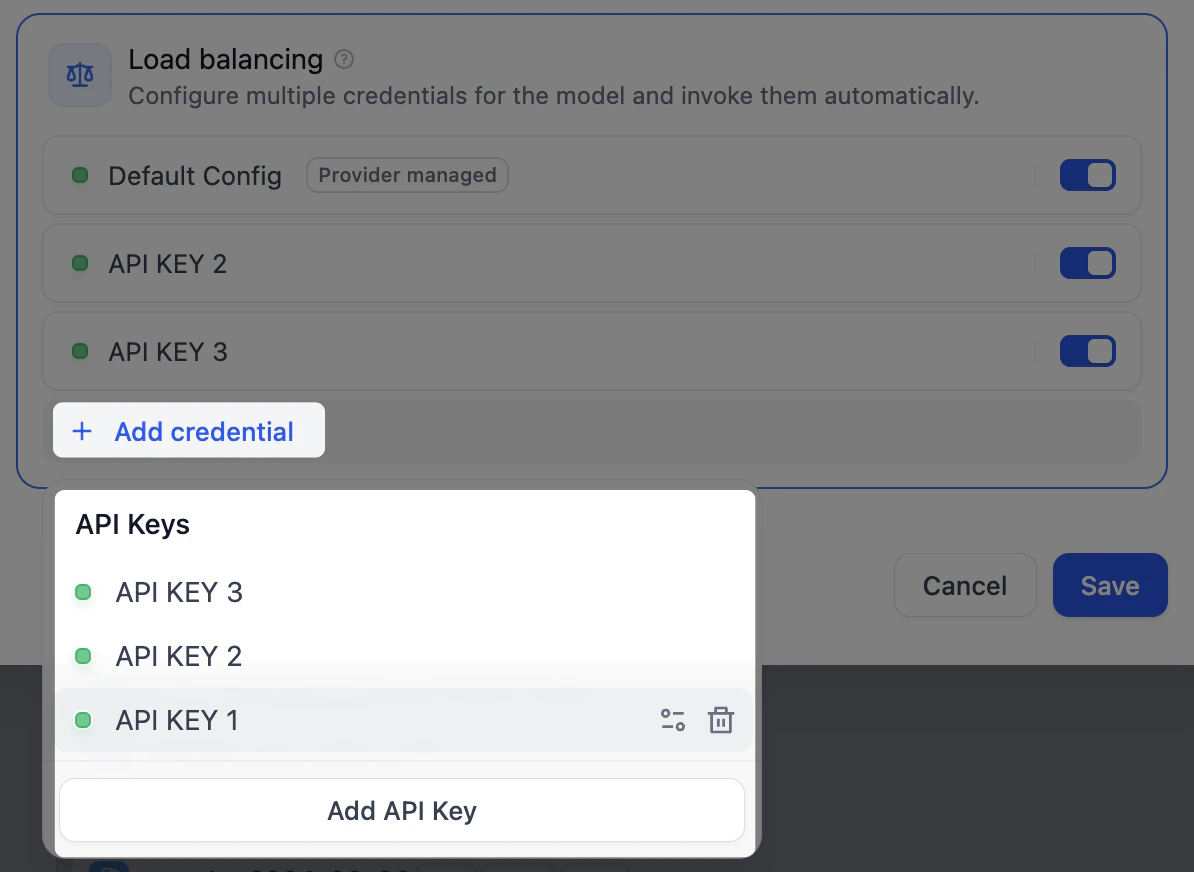

- In the model list, find the target model, click the corresponding Config, and select Load balancing.

- In the load balancing pool, click Add credential to select from existing credentials or add a new one. Default Config refers to the default credential currently specified for that model.

- Enable at least two credentials in the load balancing pool, then click Save. Models with load balancing enabled will be marked with a special icon.

When you switch from load-balancing mode back to the default single-credential mode, your load-balancing configuration is preserved for future use.
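As a rough illustration of what a load balancing pool does, the sketch below rotates requests across enabled credentials in round-robin order. This section does not state which strategy Dify uses, so round-robin is an assumption chosen for simplicity, and `round_robin` is a hypothetical helper. The two-credential minimum mirrors the requirement above.

```python
from itertools import cycle
from typing import Iterator, List


def round_robin(credentials: List[str]) -> Iterator[str]:
    """Return an endless iterator that cycles through the credentials."""
    if len(credentials) < 2:
        raise ValueError("enable at least two credentials for load balancing")
    return cycle(credentials)


# Placeholder credential names for illustration only.
pool = round_robin(["credential-a", "credential-b", "credential-c"])

# Each request takes the next credential in rotation.
picks = [next(pool) for _ in range(5)]
```

Spreading requests this way keeps any single credential from absorbing all traffic, which is the usual motivation for pooling credentials with separate rate limits.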

Access and Billing

System providers are billed through your Dify subscription with usage limits based on your plan. Custom providers bill you directly through the provider (OpenAI, Anthropic, etc.) and often provide higher rate limits.

Team access follows workspace permissions:

- Owners/Admins can configure, modify, and remove providers

- Editors/Members can view available providers and use them in applications
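The permission split above can be expressed as a simple role-to-action table. The `Role` and `Action` enums and the `is_allowed` helper are hypothetical names used for illustration and are not Dify's API; only the mapping itself comes from the list above.

```python
from enum import Enum


class Role(Enum):
    OWNER = "owner"
    ADMIN = "admin"
    EDITOR = "editor"
    MEMBER = "member"


class Action(Enum):
    CONFIGURE = "configure"
    MODIFY = "modify"
    REMOVE = "remove"
    VIEW = "view"
    USE = "use"


# Viewing and using providers is available to every role listed above.
READ_ONLY = {Action.VIEW, Action.USE}
# Owners and admins can additionally manage providers.
FULL_ACCESS = READ_ONLY | {Action.CONFIGURE, Action.MODIFY, Action.REMOVE}

PERMISSIONS = {
    Role.OWNER: FULL_ACCESS,
    Role.ADMIN: FULL_ACCESS,
    Role.EDITOR: READ_ONLY,
    Role.MEMBER: READ_ONLY,
}


def is_allowed(role: Role, action: Action) -> bool:
    """Check whether a workspace role may perform a provider action."""
    return action in PERMISSIONS[role]


can_admin_remove = is_allowed(Role.ADMIN, Action.REMOVE)
can_editor_configure = is_allowed(Role.EDITOR, Action.CONFIGURE)
```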