- Connect to external APIs

- Process different types of data

- Perform specialized calculations

- Execute real-world actions

Start here

Choose a plugin type

A short decision guide for picking between Tool, Model, Agent Strategy, Extension, Datasource, and Trigger plugins.

Install the CLI

Set up `dify` on your machine and scaffold a new plugin project in minutes.

Types of Plugins

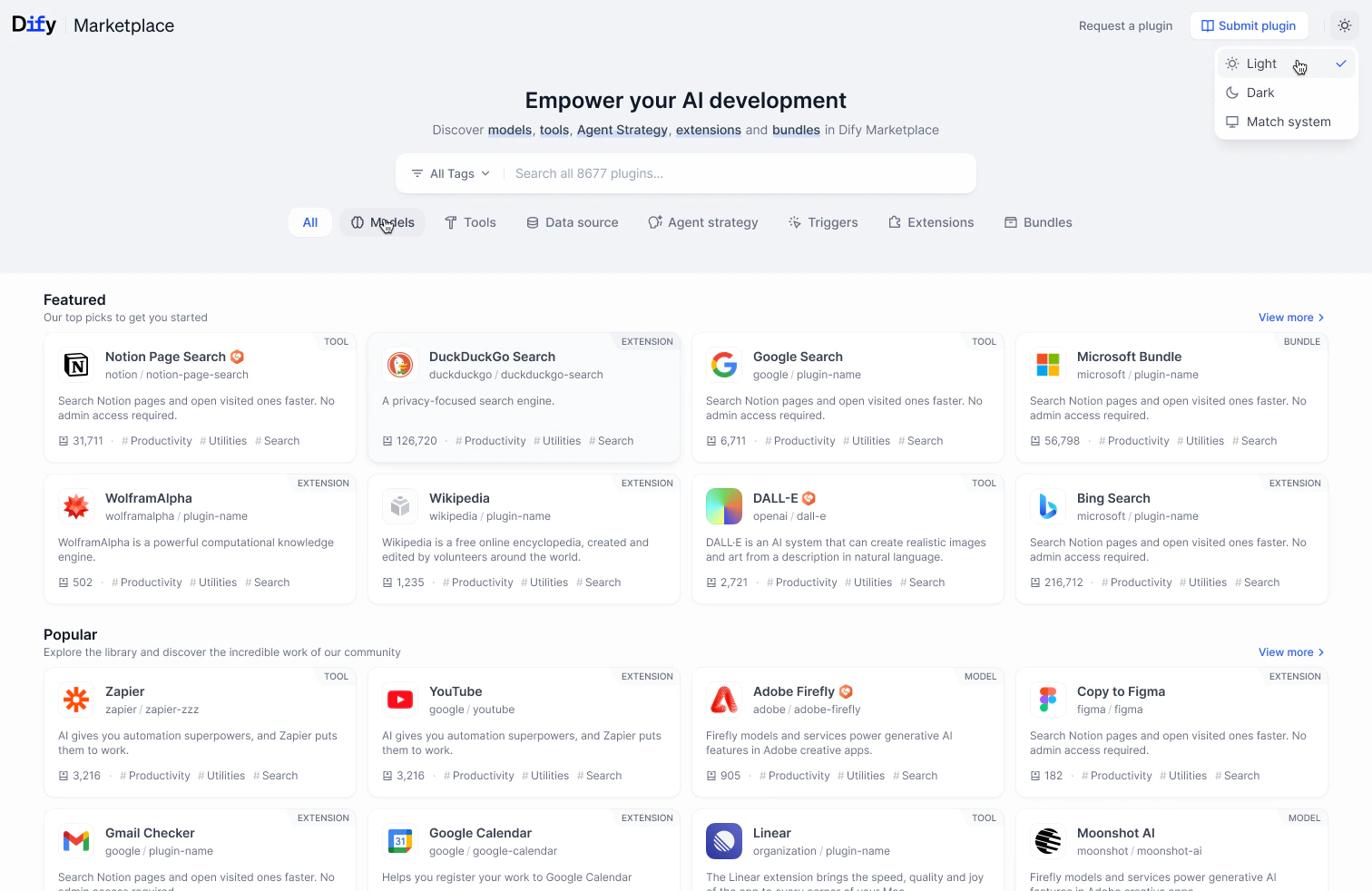

Models

Package and manage AI models as plugins

Tools

Build specialized capabilities for Agents and workflows

Agent Strategies

Create custom reasoning strategies for autonomous Agents

Extensions

Integrate with external services through HTTP webhooks

Datasources

Feed external content into Dify’s Knowledge Pipeline

Triggers

Start workflows from third-party platform events received via webhooks

Additional Resources

Development & Debugging

Tools and techniques for efficient plugin development

Publishing & Marketplace

Package and share your plugins with the Dify community

API & SDK Reference

Technical specifications and documentation

Community & Contributions

Communicate with other developers and contribute to the ecosystem
