# App Toolkit

Source: https://docs.dify.ai/en/use-dify/build/additional-features

Optional features that make your Dify apps more useful

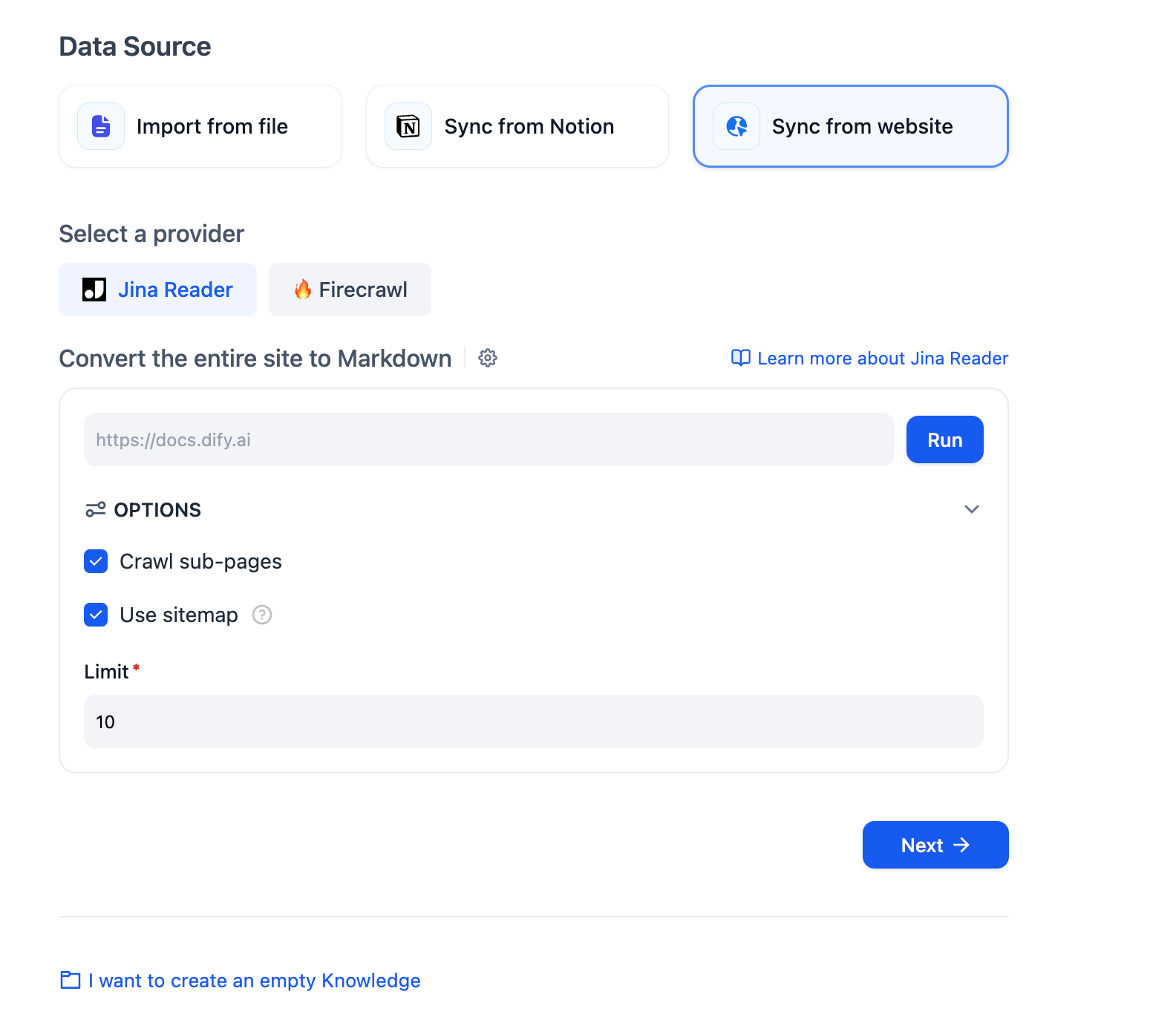

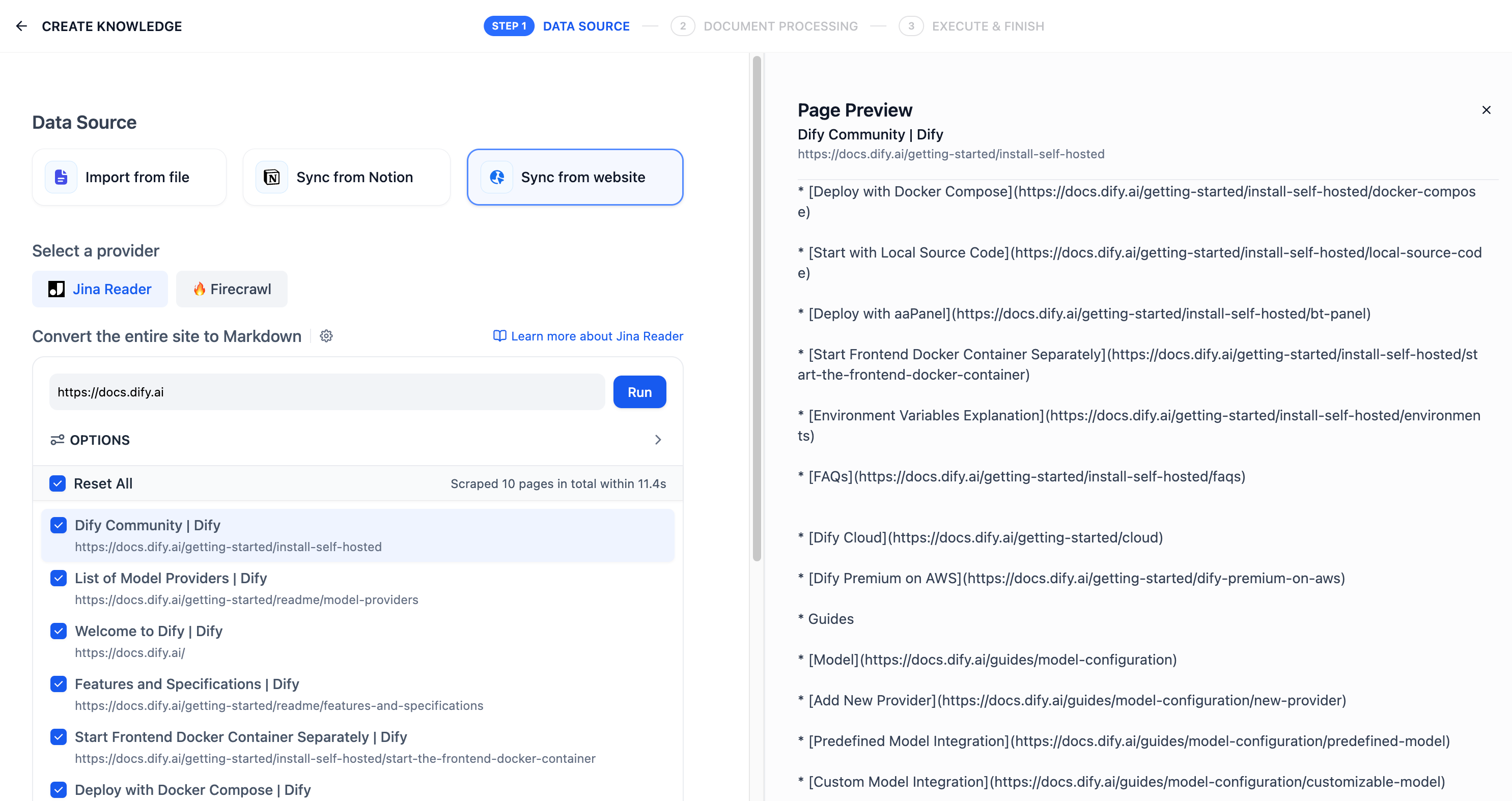

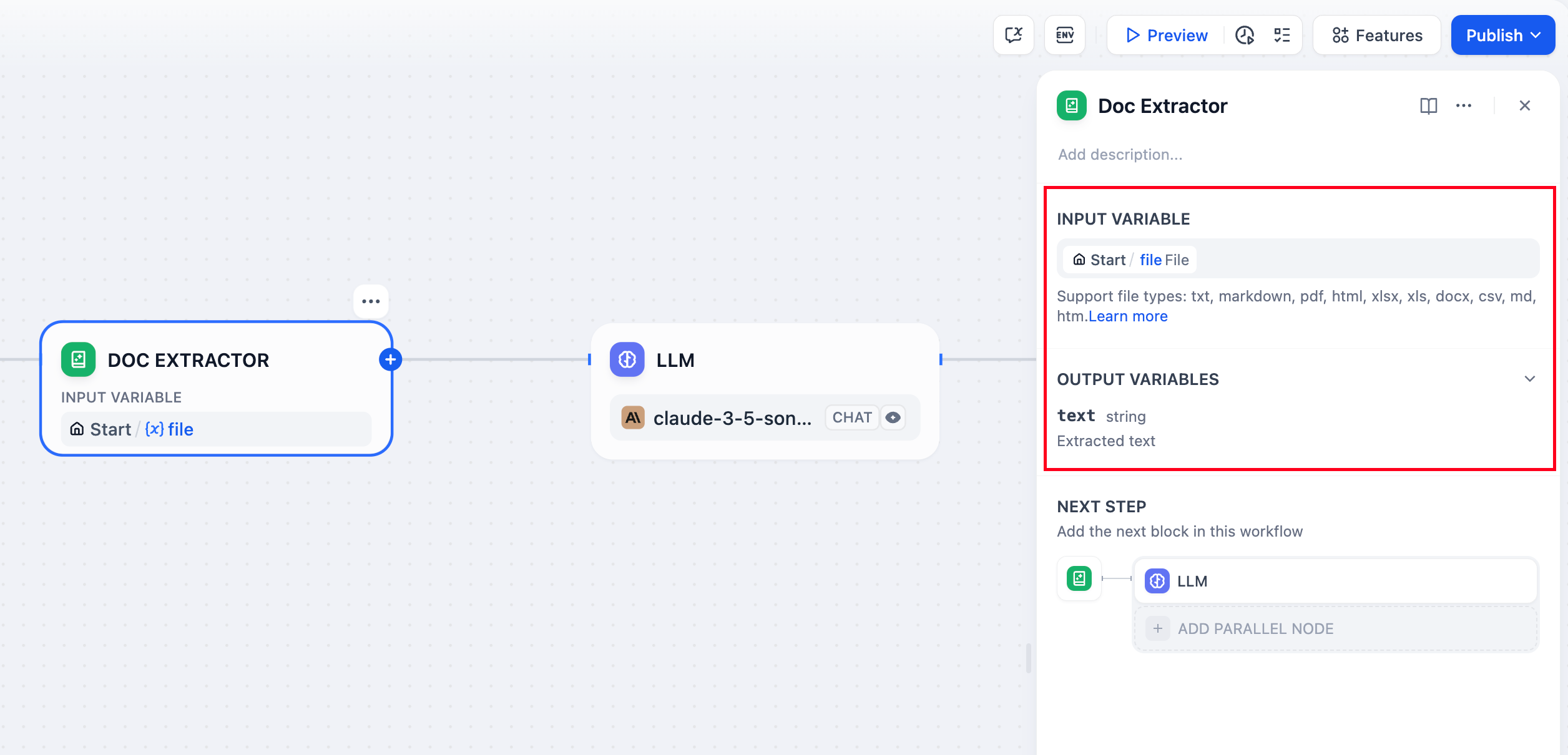

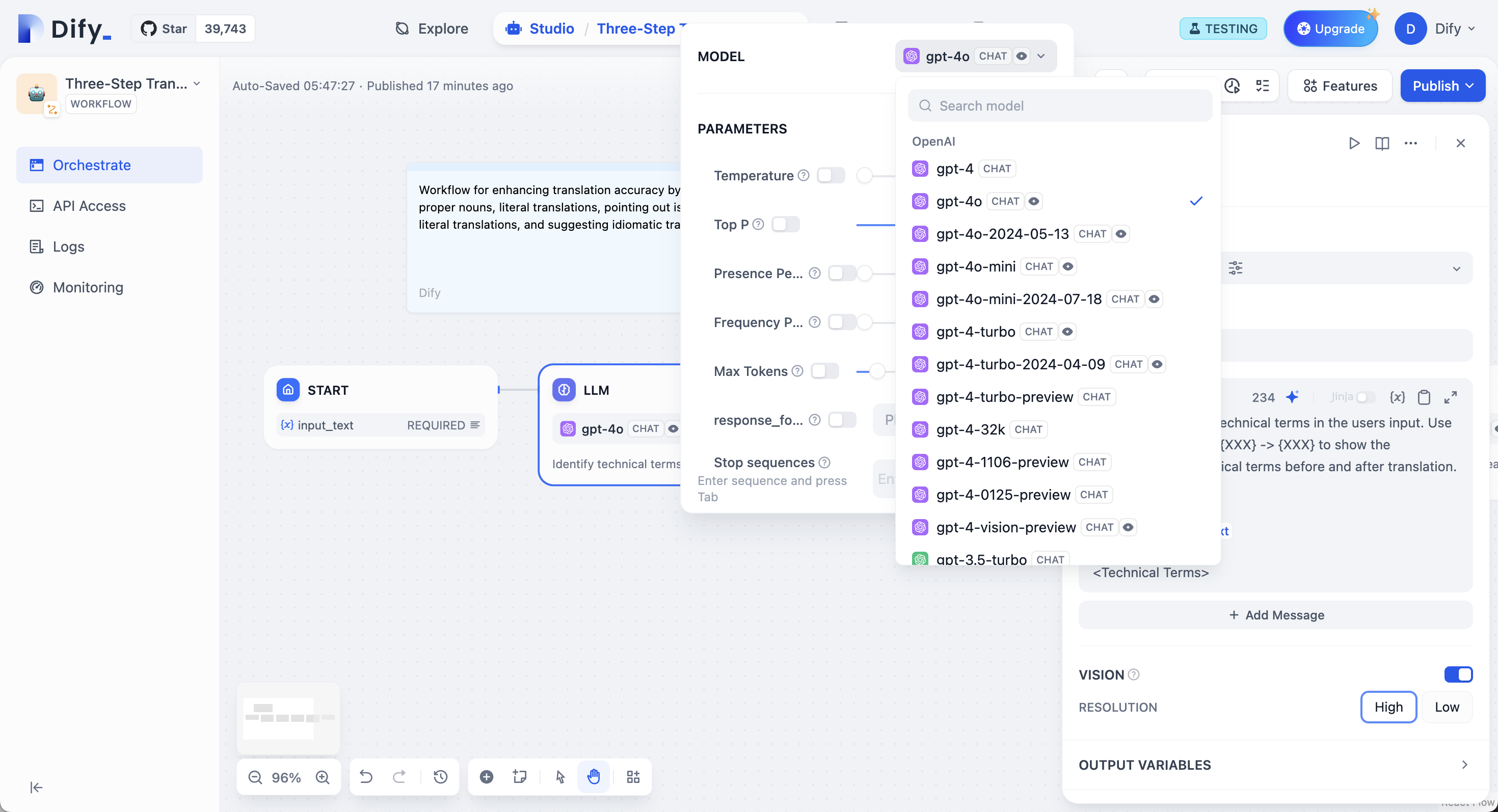

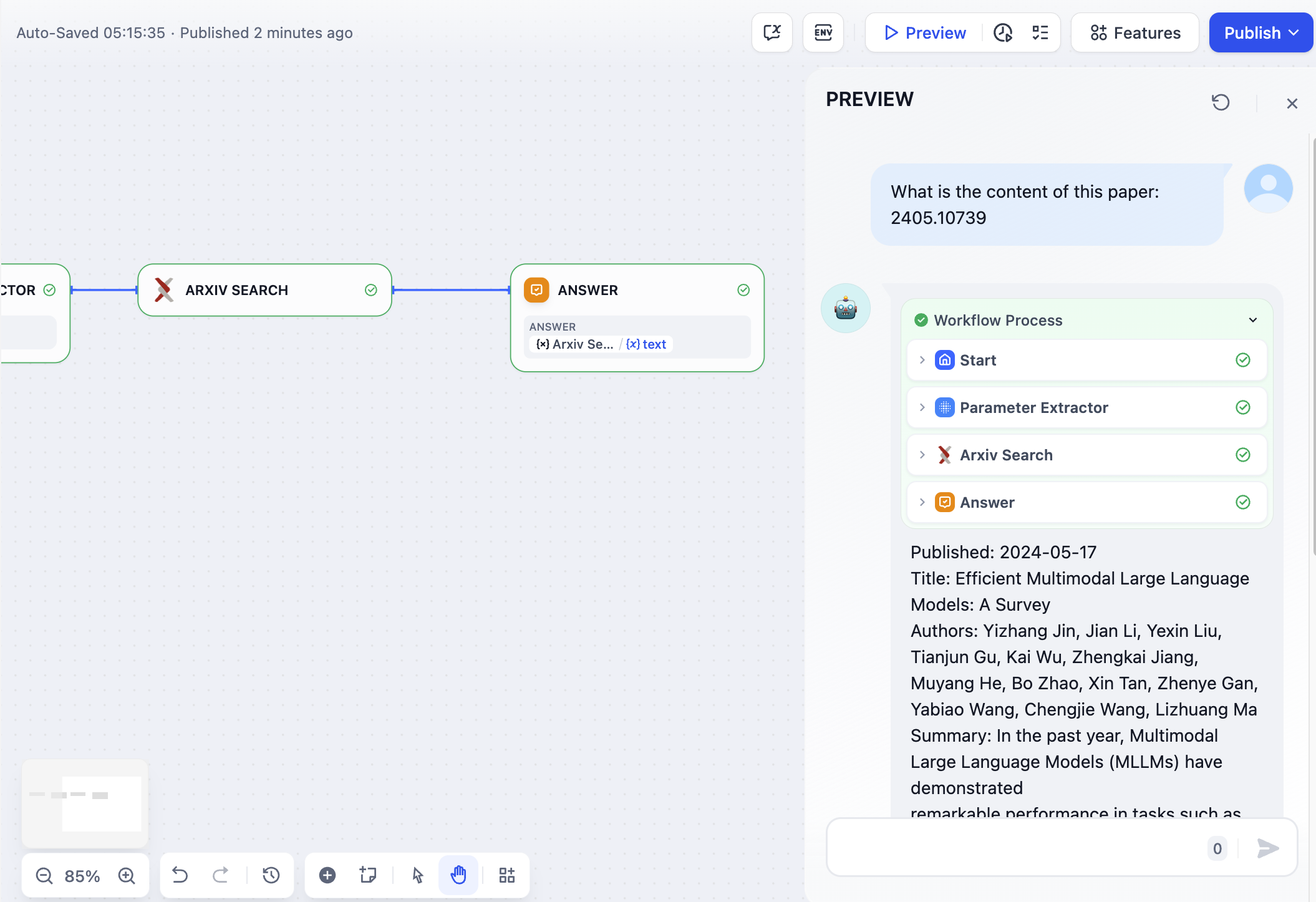

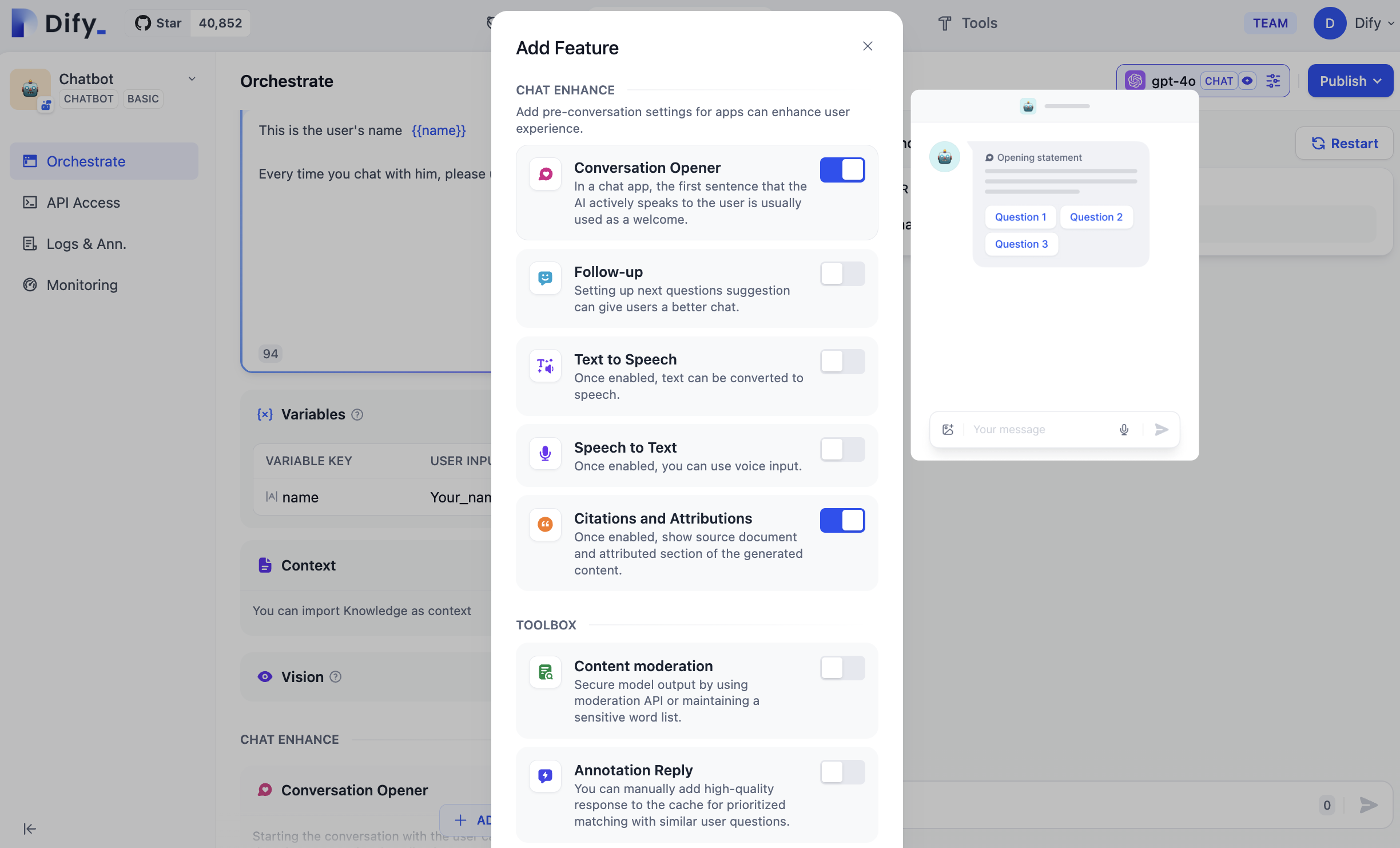

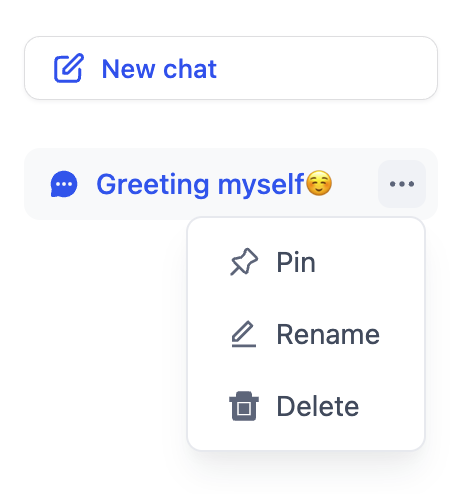

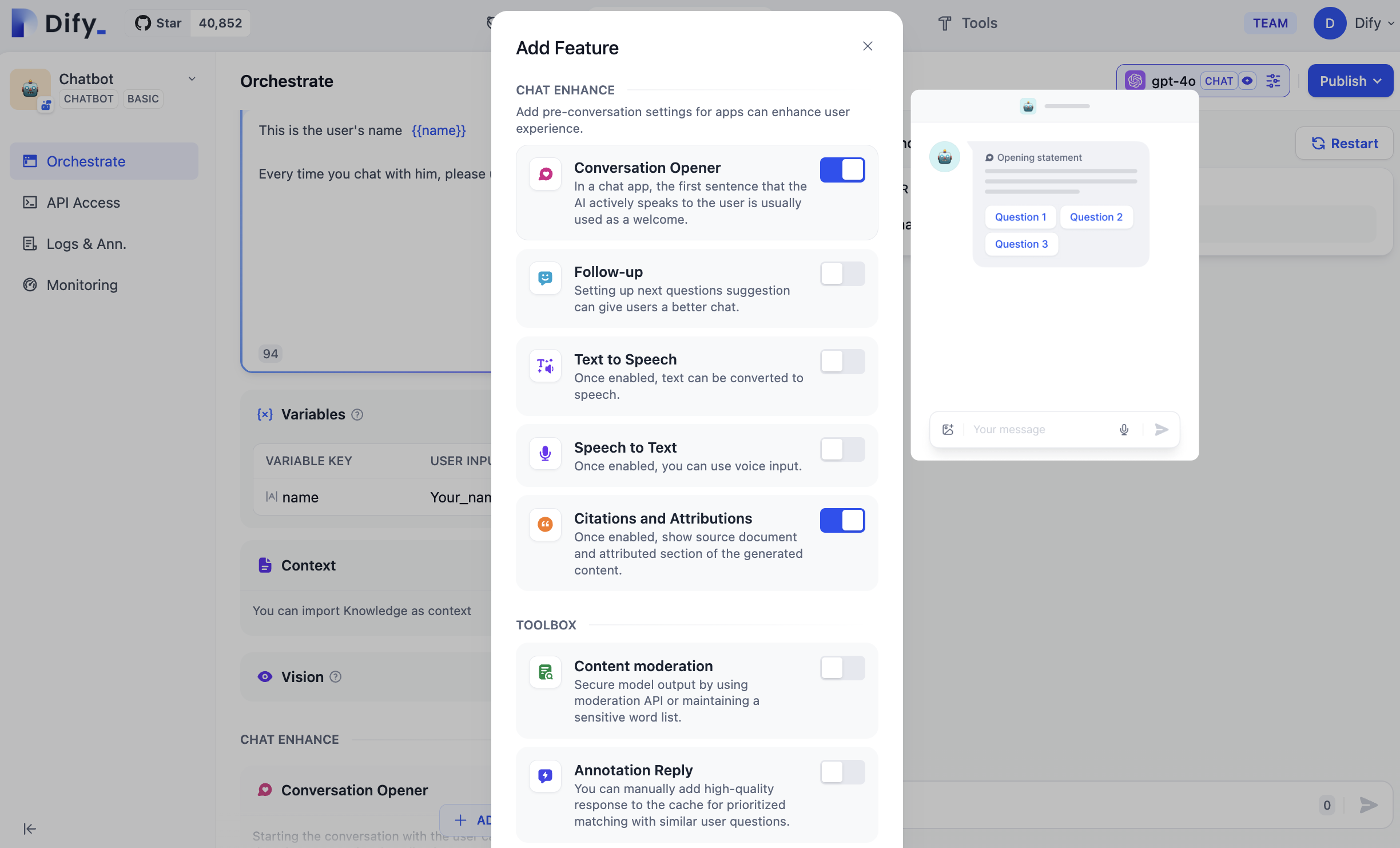

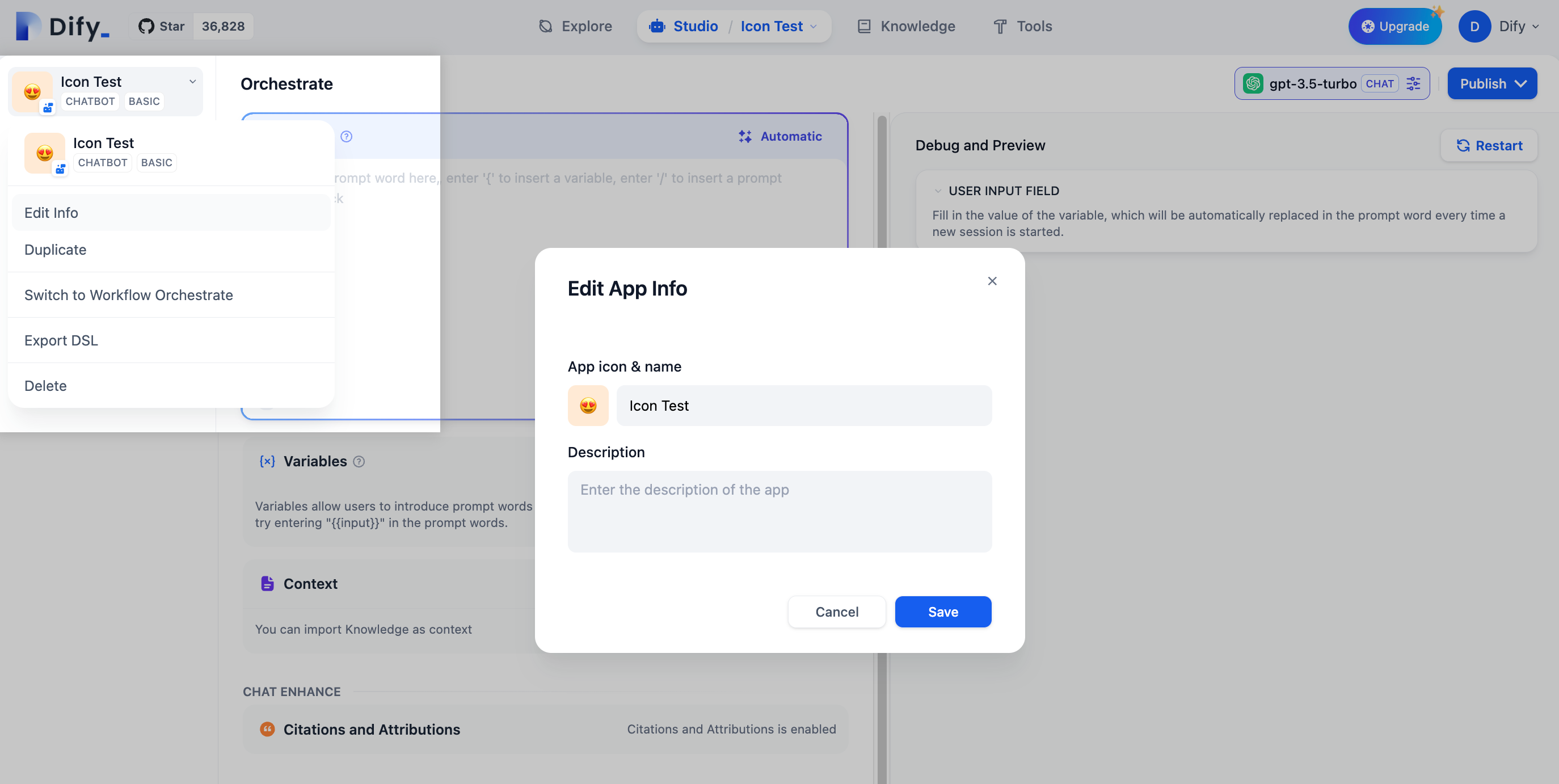

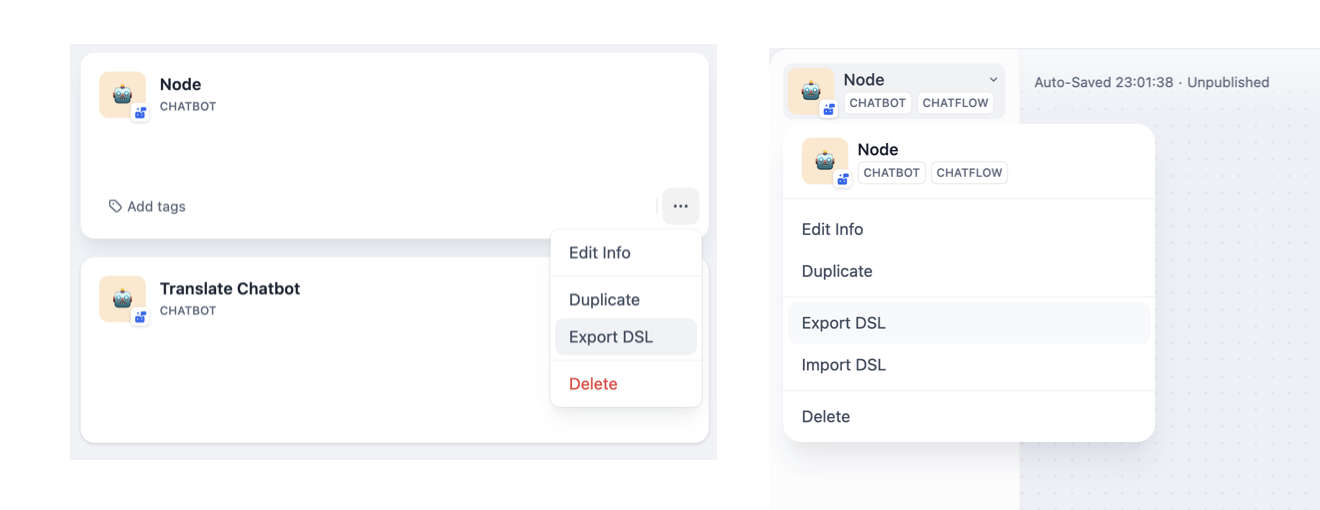

Dify apps come with optional features you can enable to improve the end-user experience. Open the **Features** panel of the builder to see what's available for your app type.

![Features Panel in Chatbots, Agents, and Text Generators]()

![Features Panel in Chatflows]() ## Conversation Opener

Set an opening message that greets users at the start of each conversation, with optional suggested questions to guide them toward what the app does well.

You can insert variables into the opening message and suggested questions to personalize the experience.

* In the opening message, type `{` or `/` to insert variables from the picker.

* In suggested questions, type variable names manually in `{{variable_name}}` format.

## Conversation Opener

Set an opening message that greets users at the start of each conversation, with optional suggested questions to guide them toward what the app does well.

You can insert variables into the opening message and suggested questions to personalize the experience.

* In the opening message, type `{` or `/` to insert variables from the picker.

* In suggested questions, type variable names manually in `{{variable_name}}` format.

![Configuration]()

![WebApp]() ## Follow-up

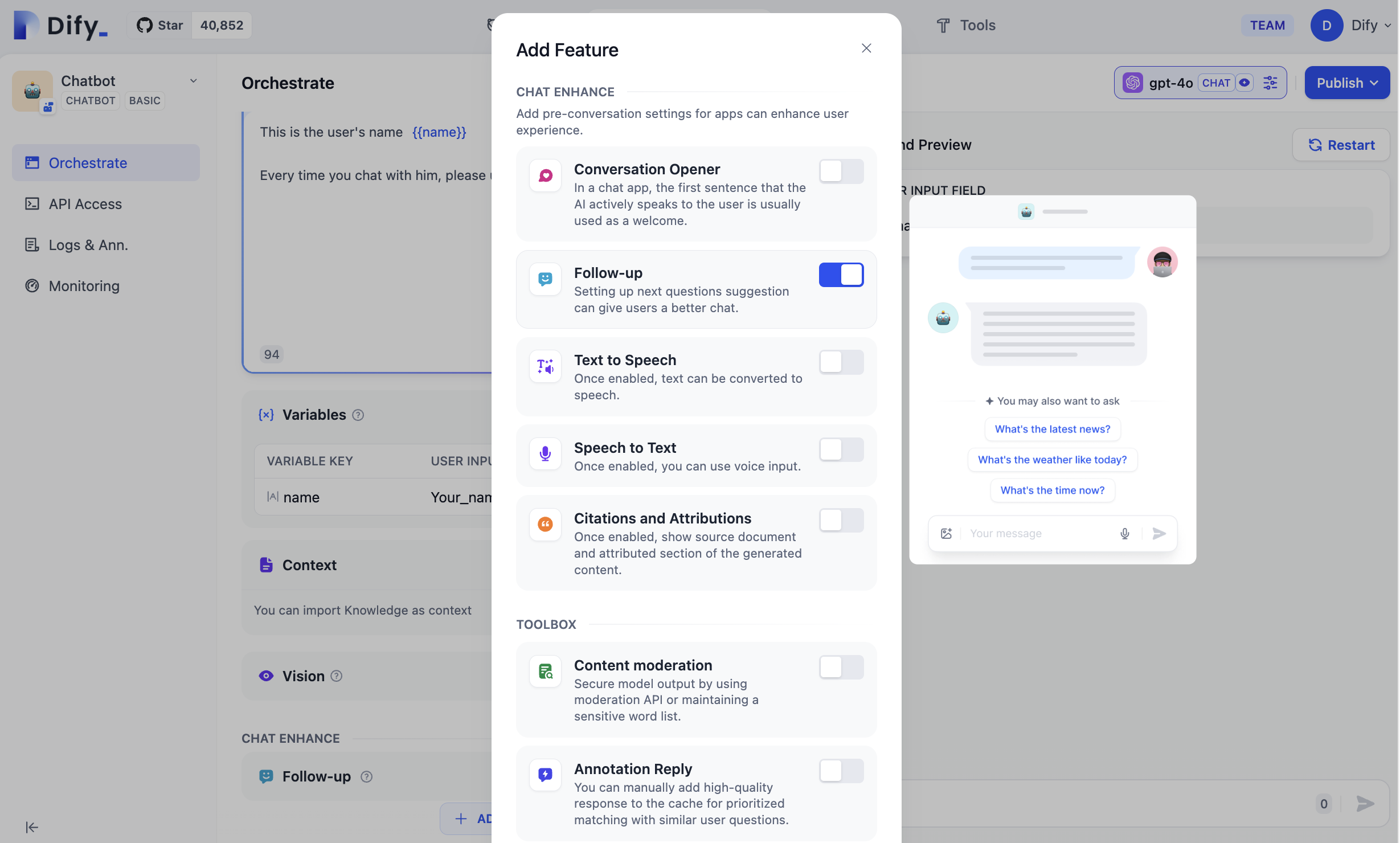

When enabled, the LLM automatically suggests 3 follow-up questions after each response, helping users continue the conversation. This feature is a simple toggle—no additional configuration is needed.

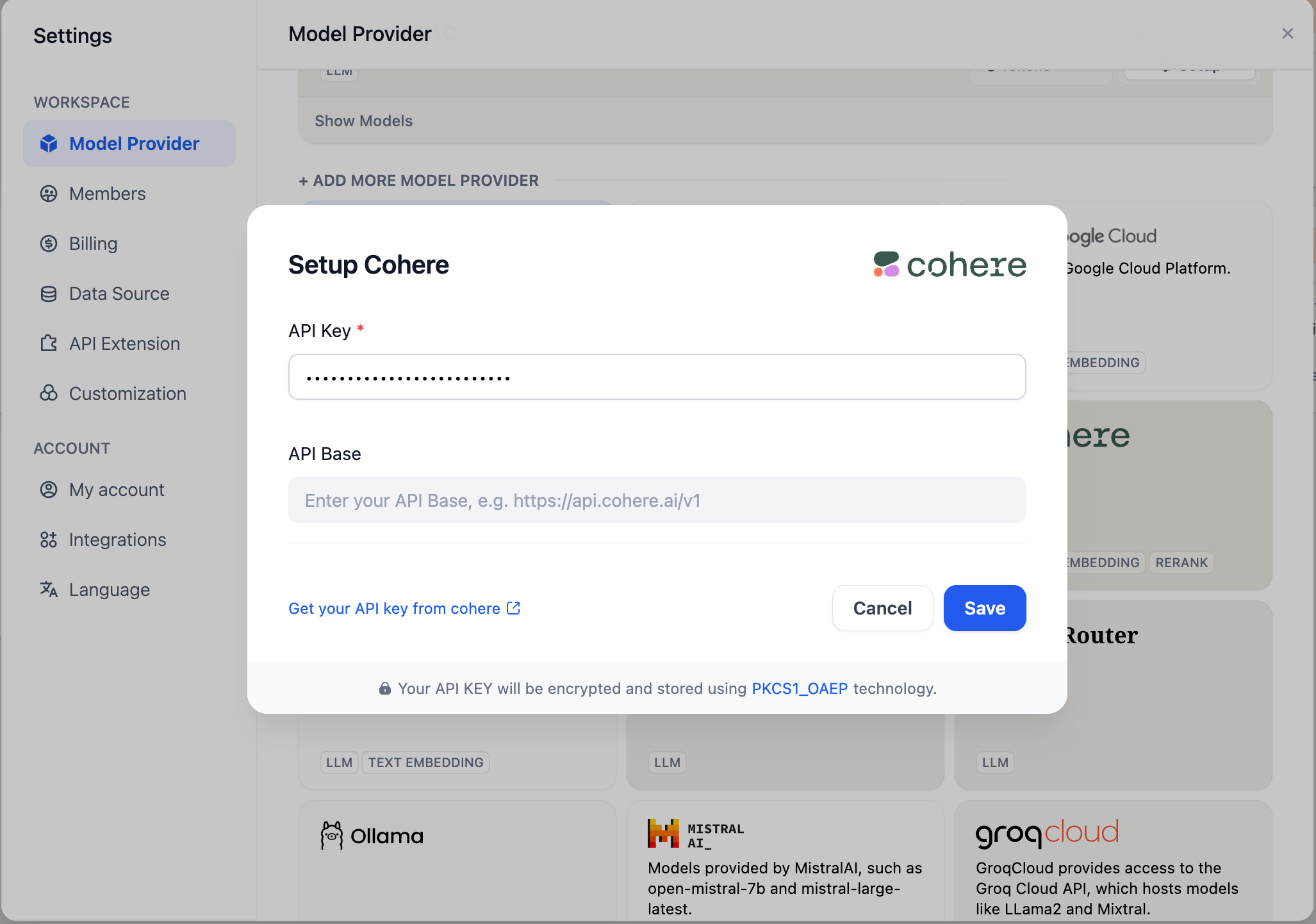

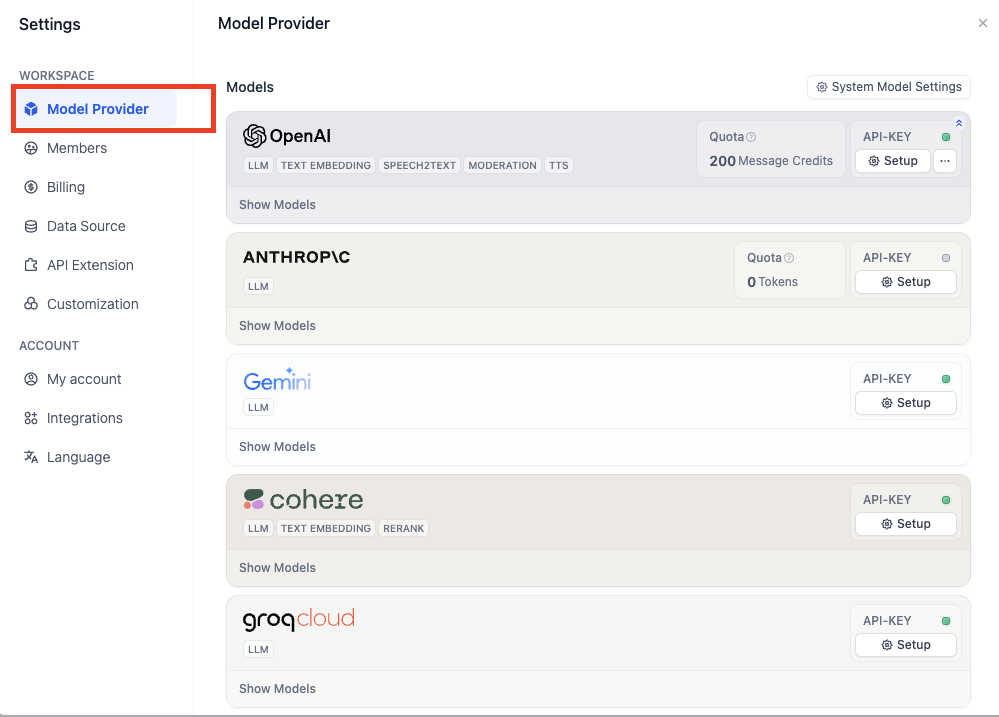

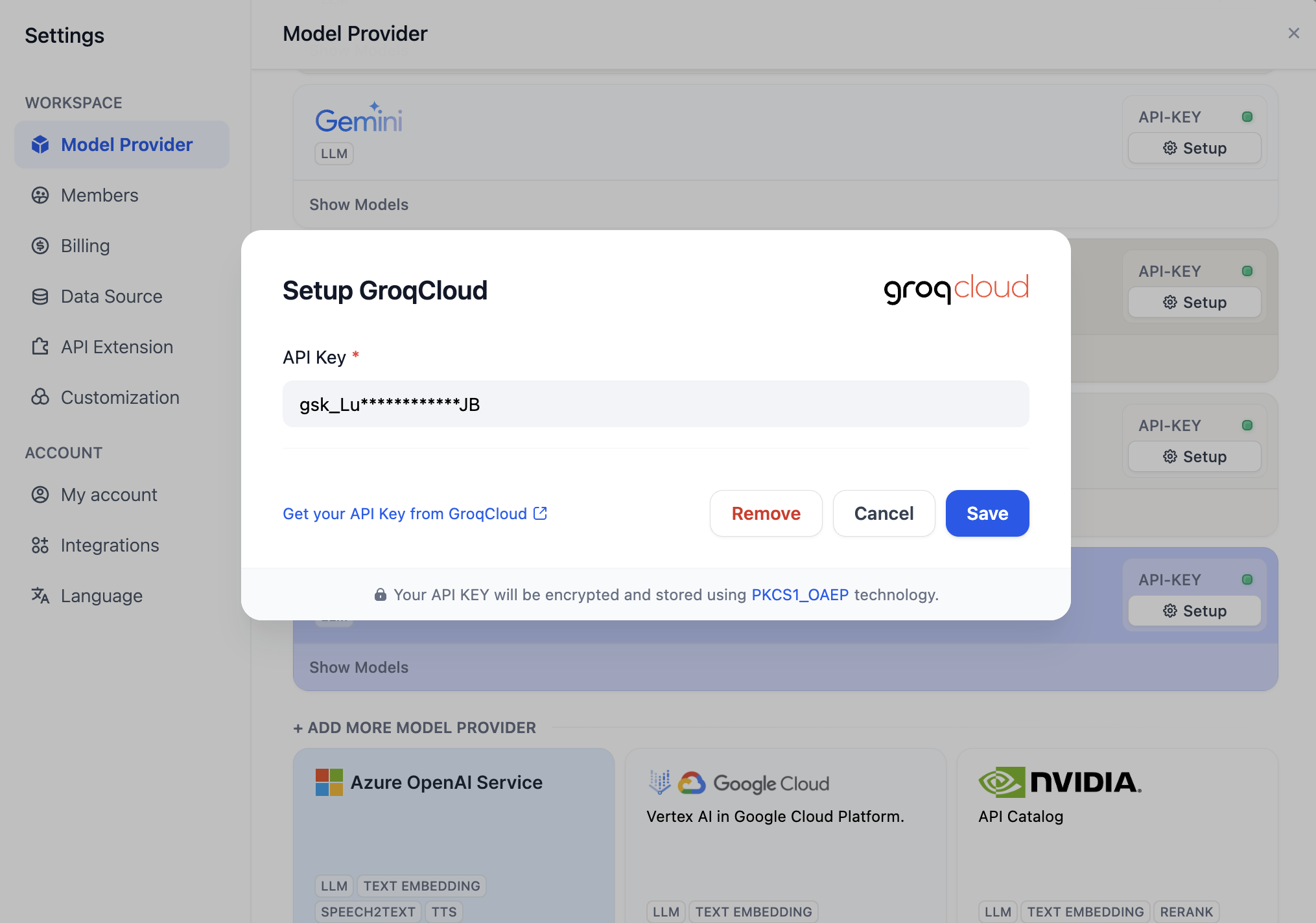

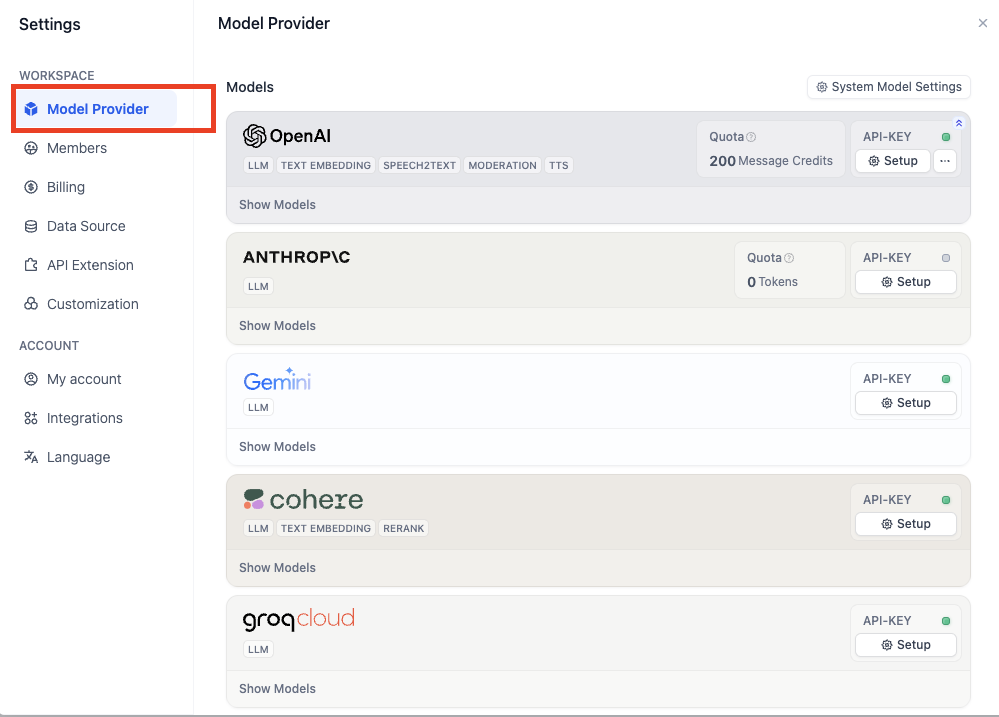

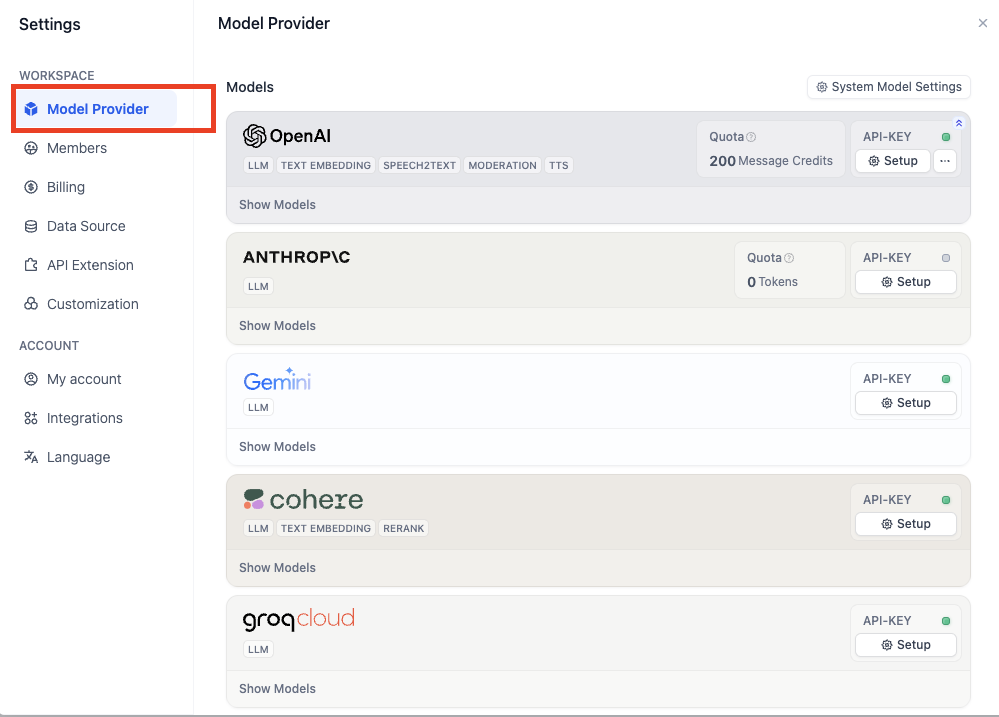

Follow-up questions are generated by a separate LLM call using your workspace's system reasoning model (set in **Settings** > **Model Provider** > **Default Model Settings**), not the model configured in your app.

## Text to Speech

Convert AI responses to audio. You can configure the language and voice to match your app's audience, and enable **Auto Play** to stream audio automatically as the AI responds.

**Text to Speech** uses your workspace's text-to-speech model (set in **Settings** > **Model Provider** > **Default Model Settings**).

The feature only appears in the **Features** panel when a default TTS model is configured.

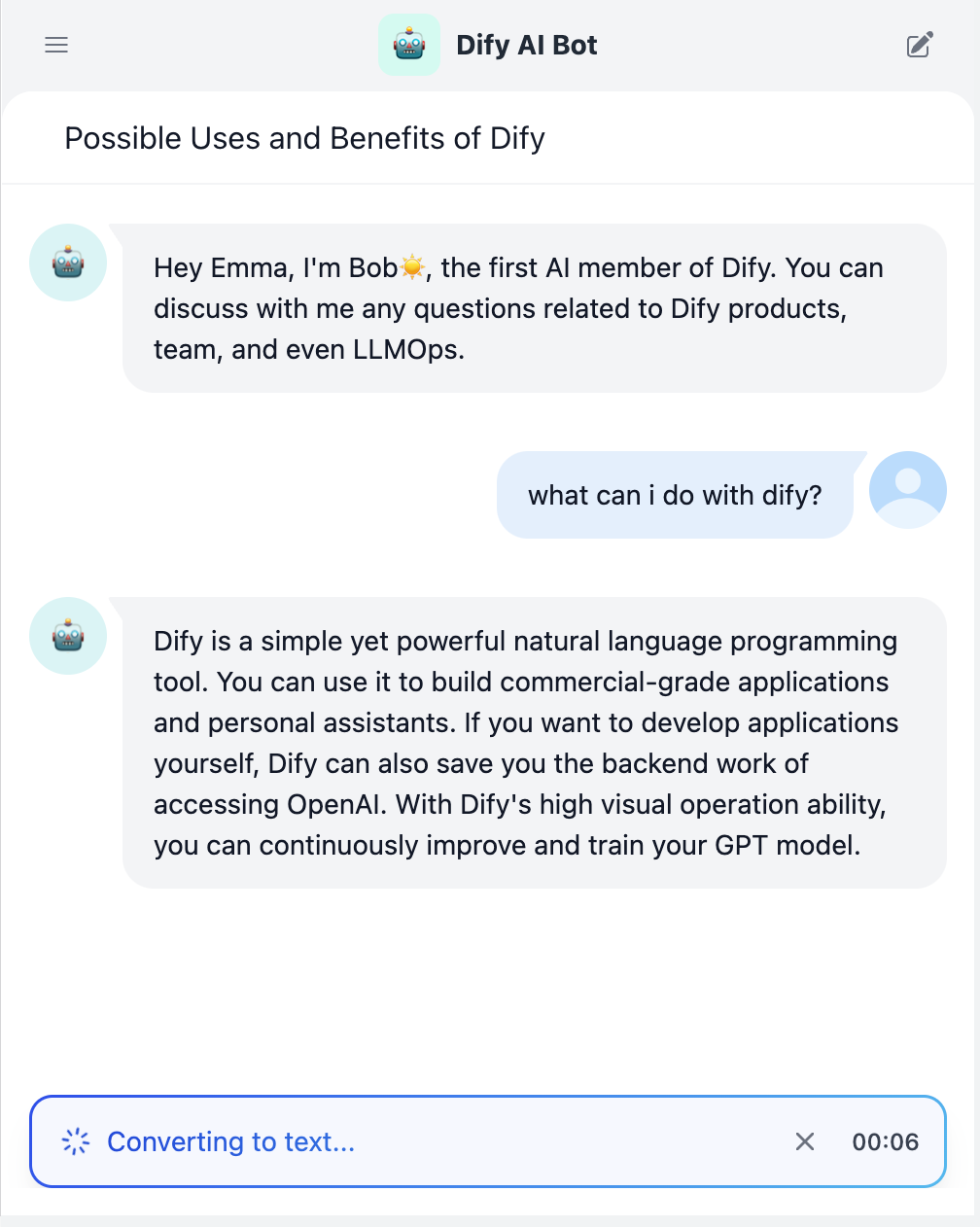

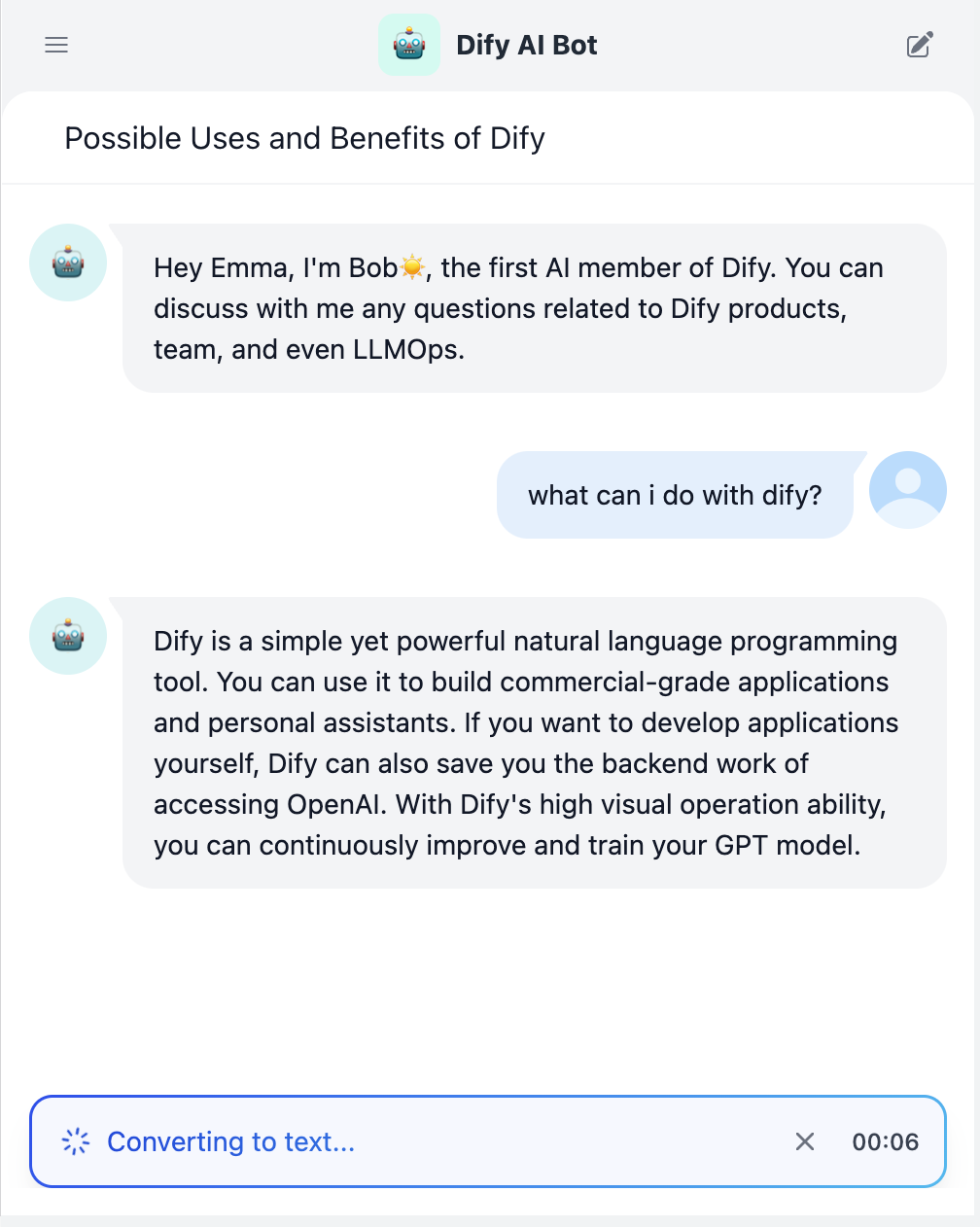

## Speech to Text

Enable voice input for the chat interface. When enabled, your end users can dictate messages instead of typing by clicking the microphone button.

**Speech to Text** uses your workspace's speech-to-text model (set in **Settings** > **Model Provider** > **Default Model Settings**).

The feature only appears in the **Features** panel when a default STT model is configured.

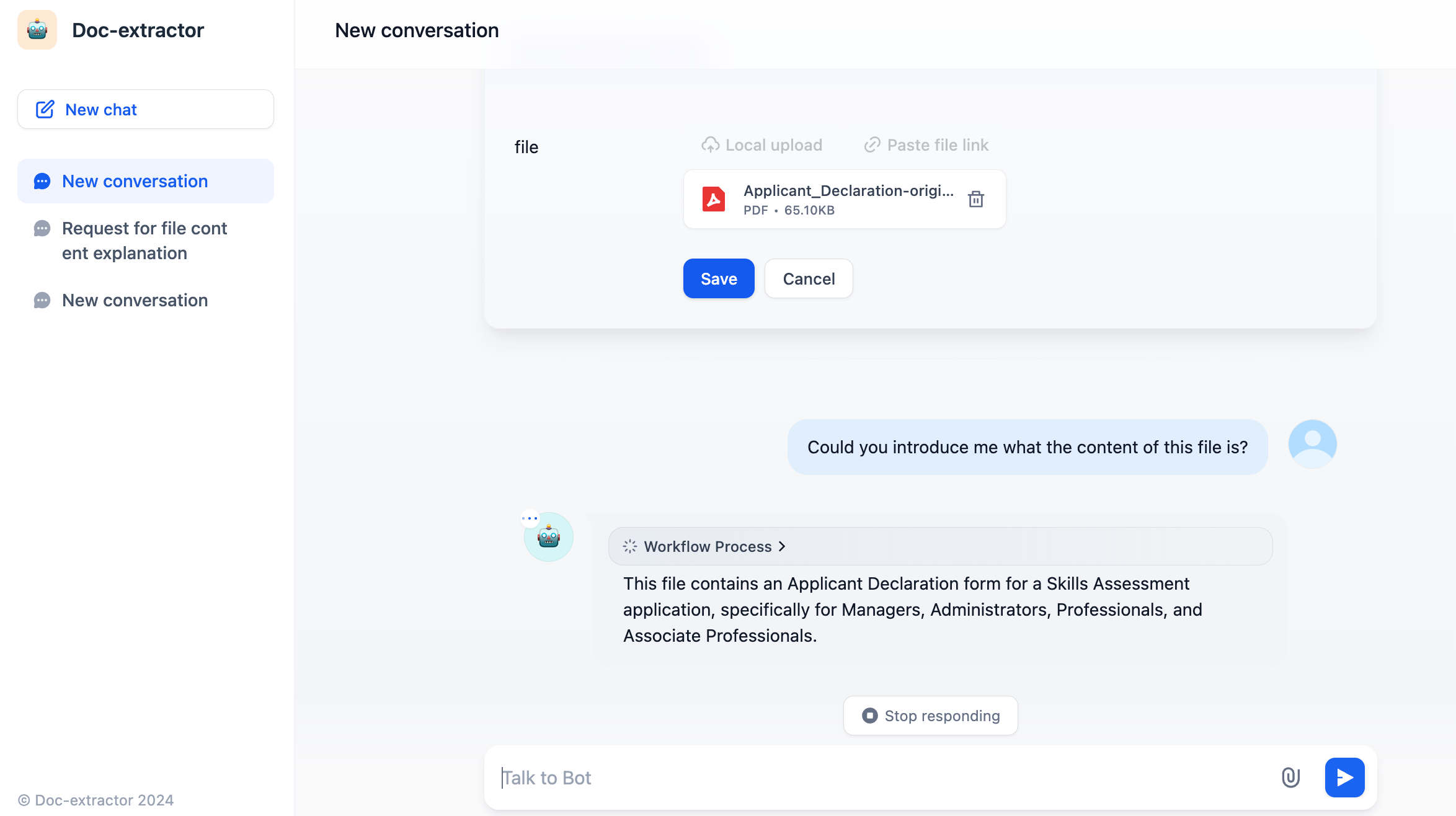

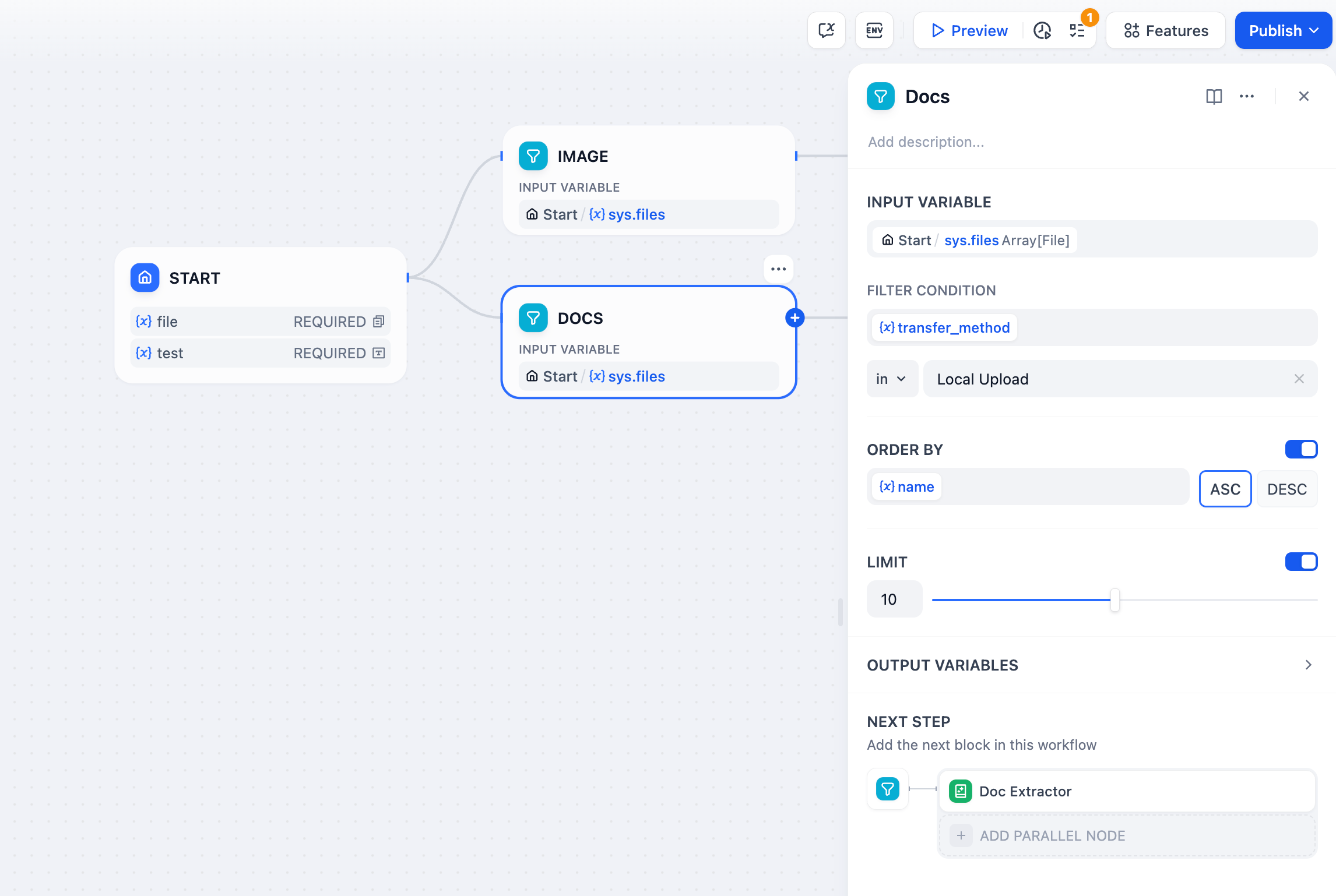

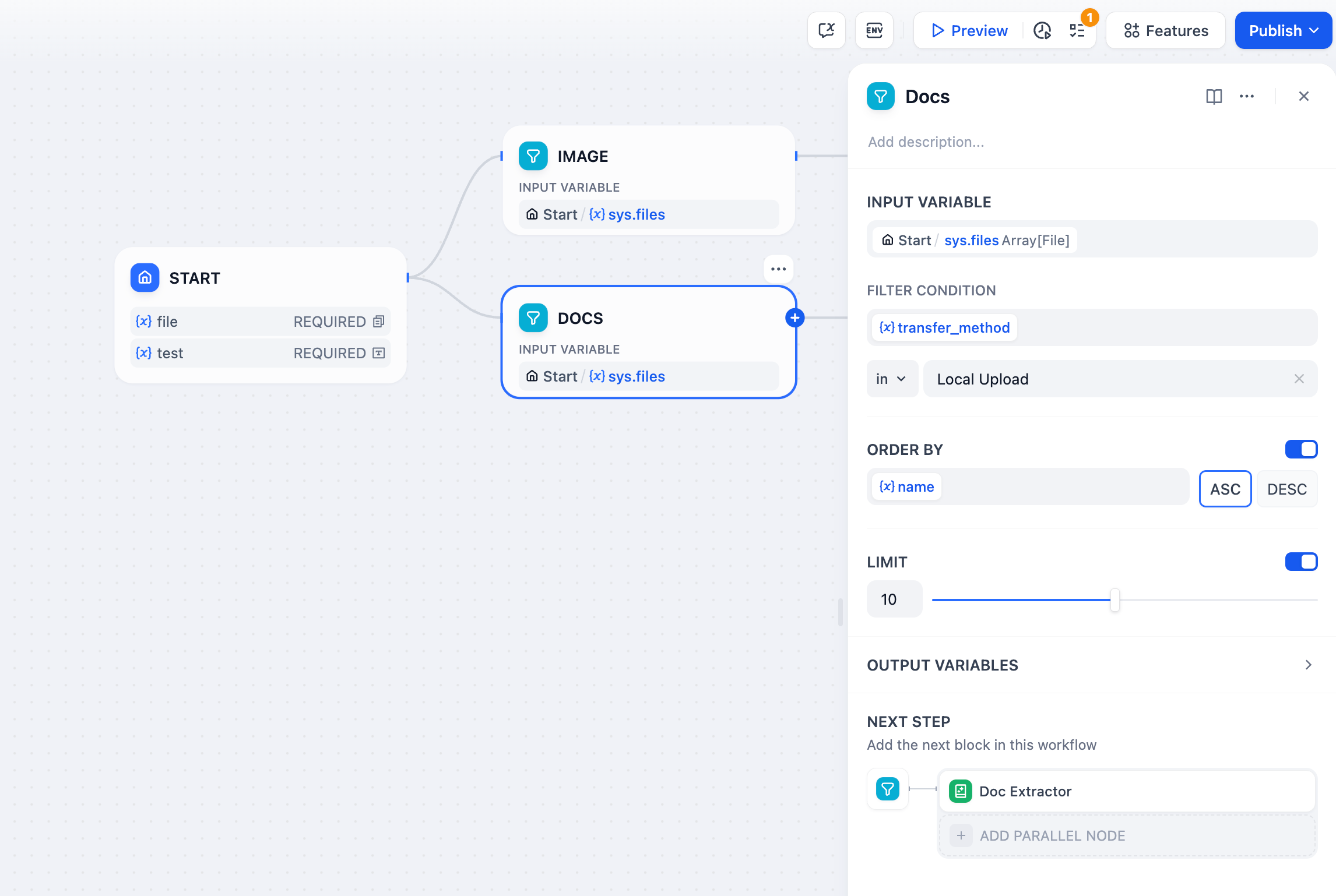

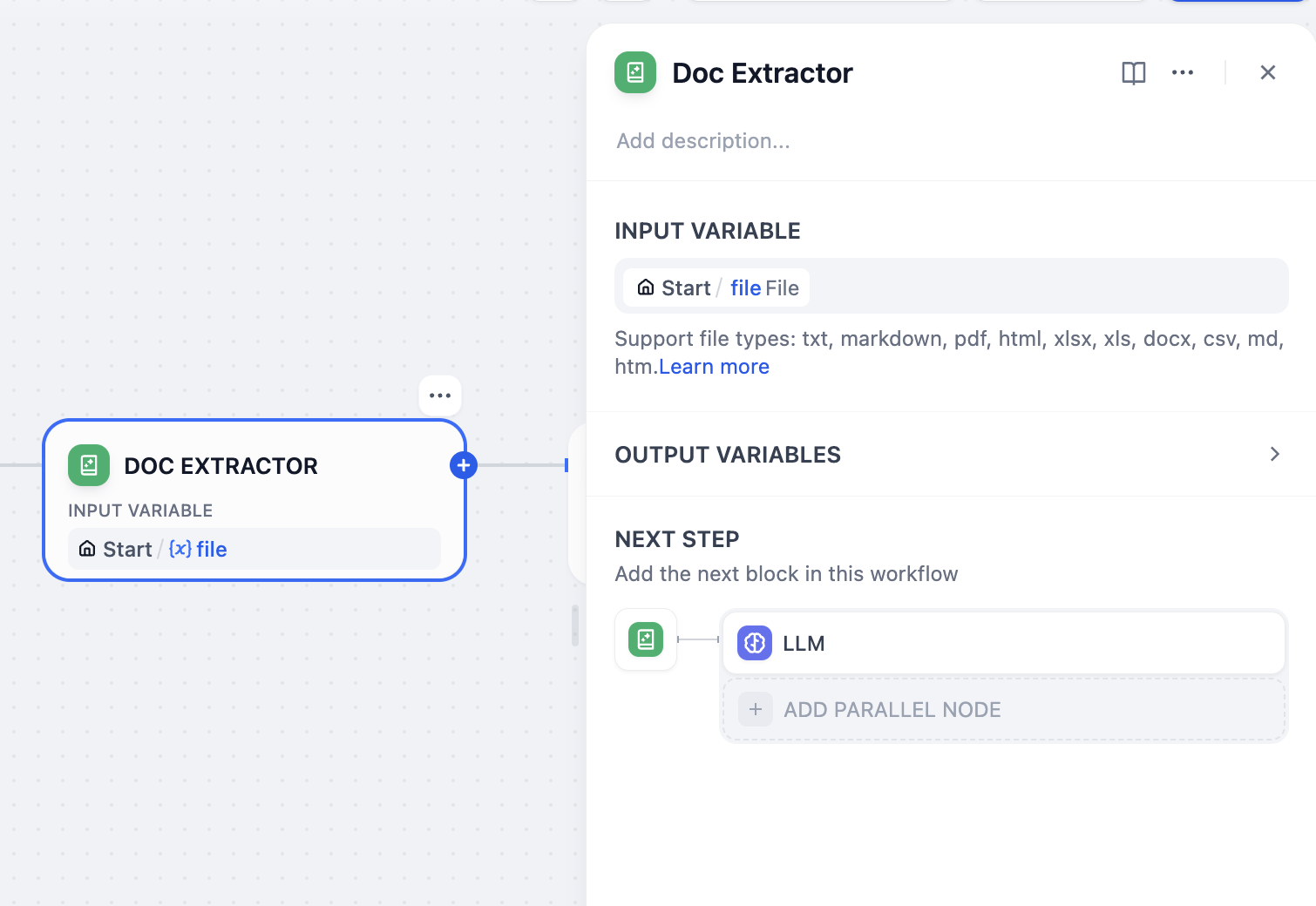

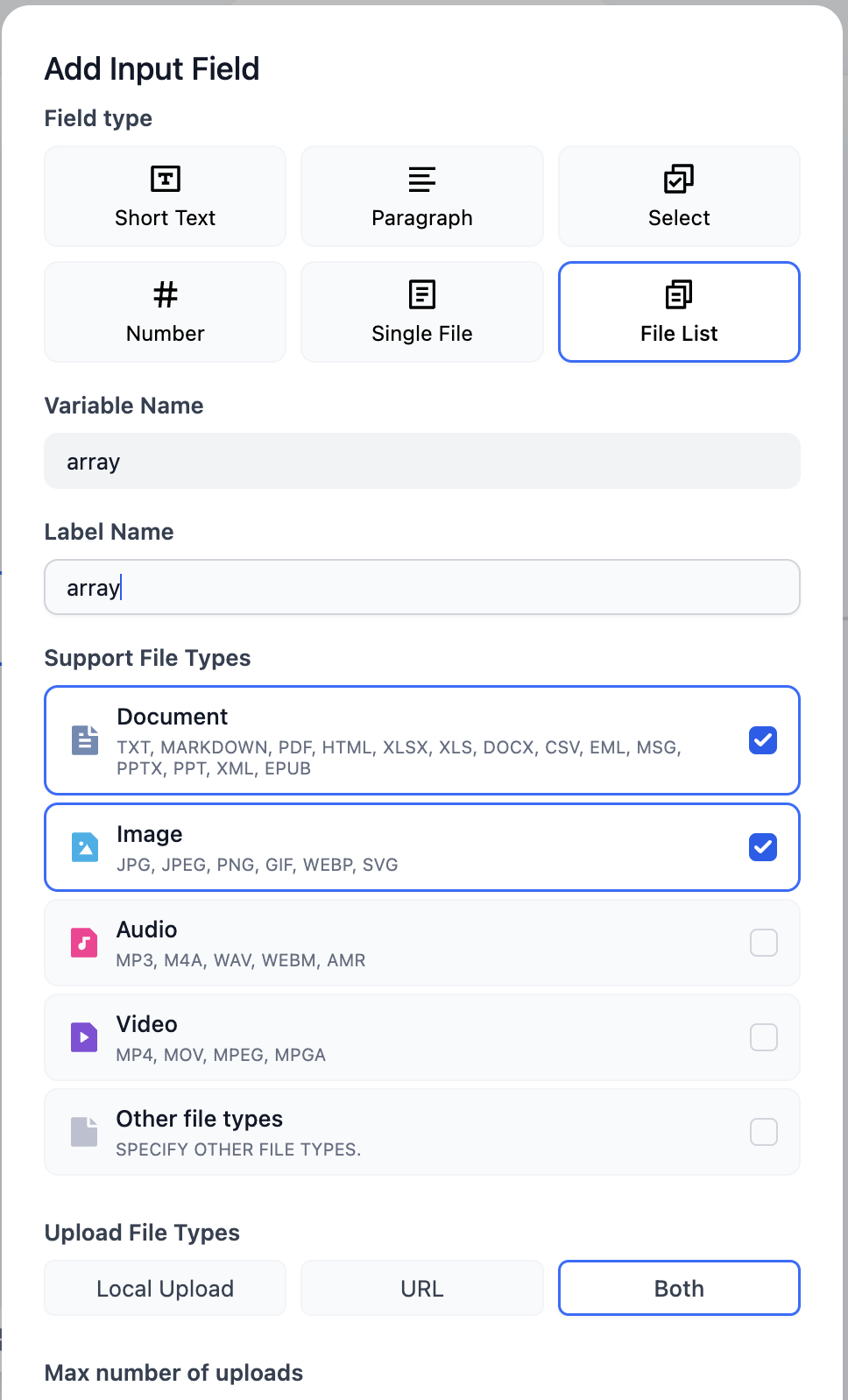

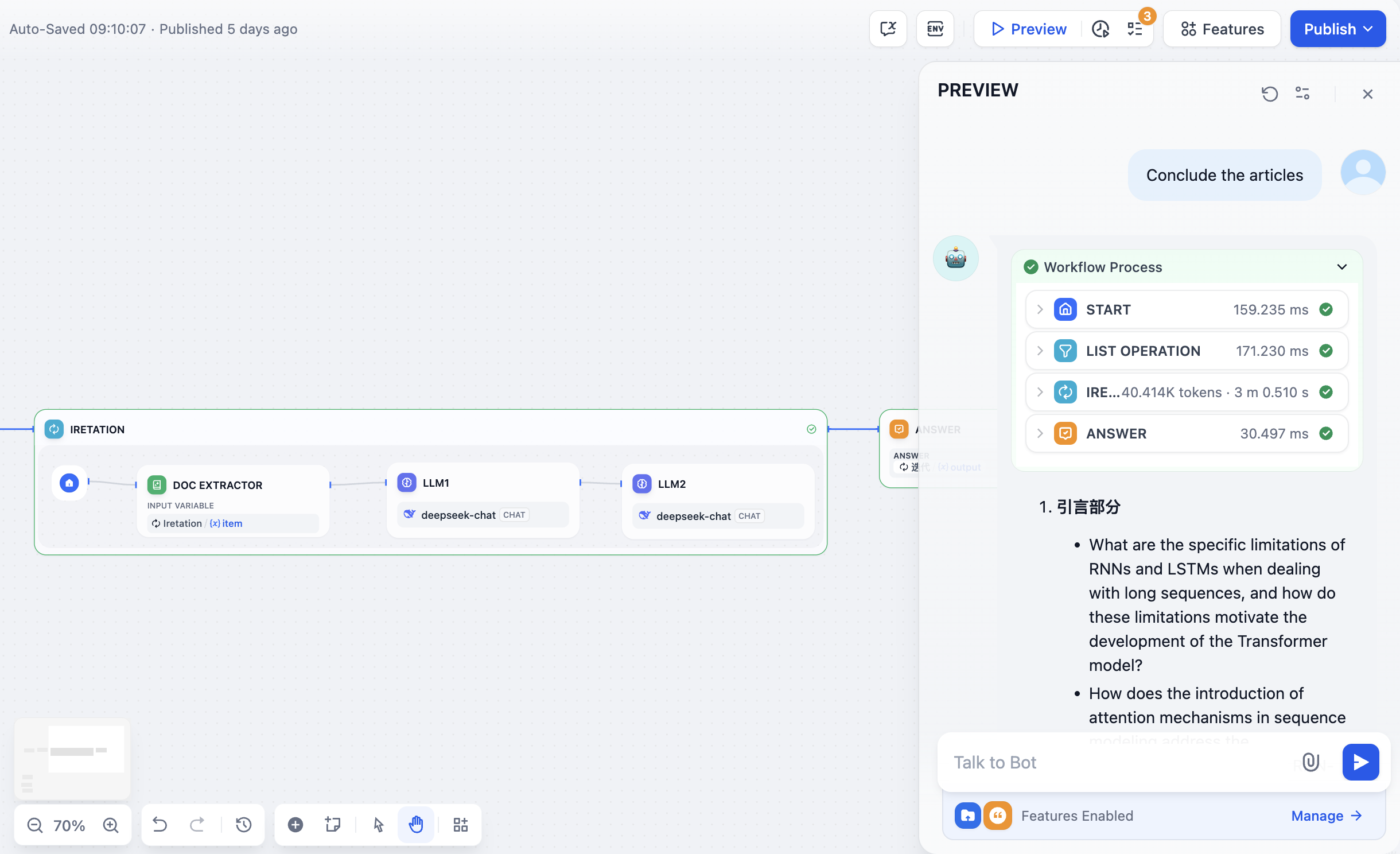

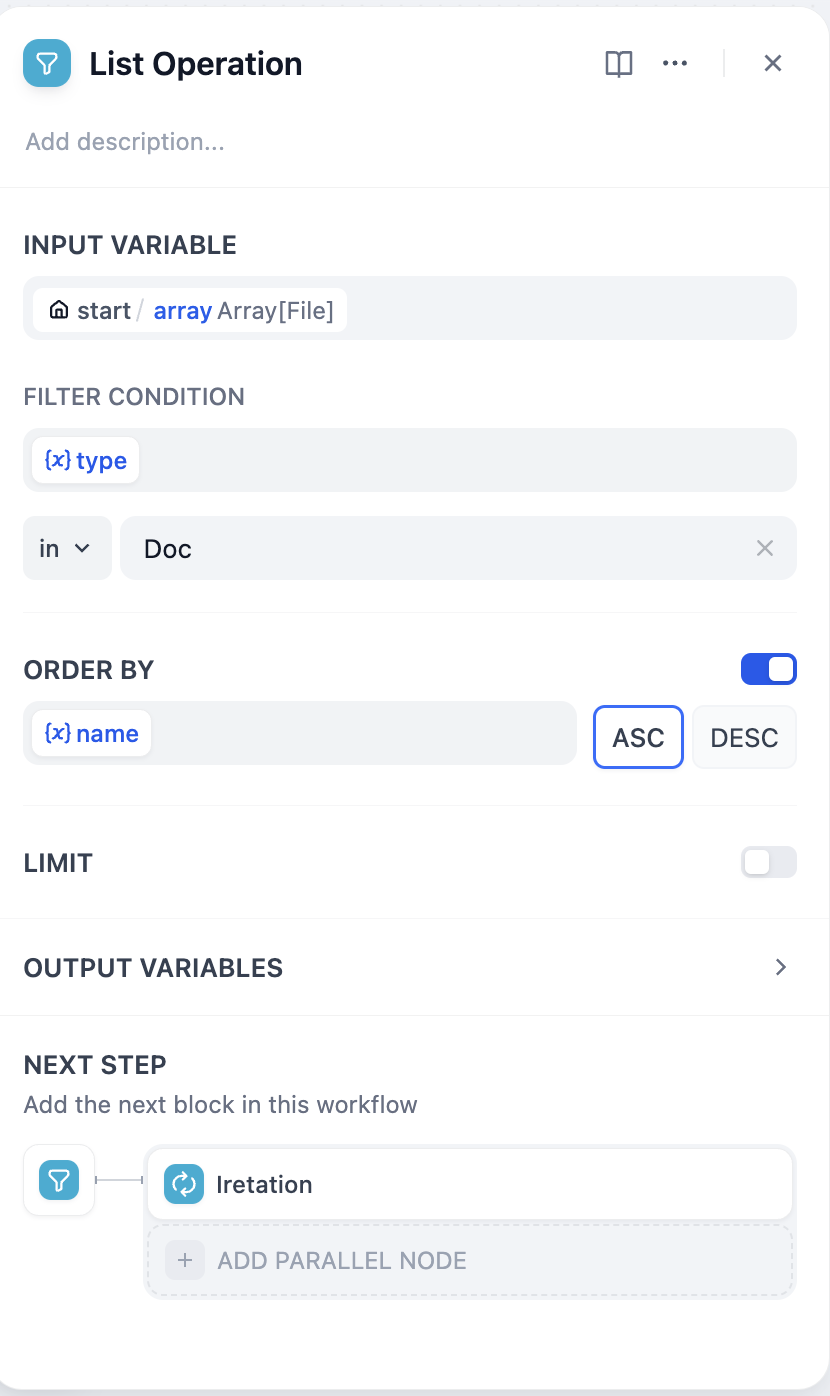

## File Upload

Allow end users to send files at any point during a conversation. You can configure which file types to accept, the upload method, and the maximum number of files per message.

For self-hosted deployments, you can adjust file size limits via the following environment variables:

* `UPLOAD_IMAGE_FILE_SIZE_LIMIT` (default: 10 MB)

* `UPLOAD_FILE_SIZE_LIMIT` (default: 15 MB)

* `UPLOAD_AUDIO_FILE_SIZE_LIMIT` (default: 50 MB)

* `UPLOAD_VIDEO_FILE_SIZE_LIMIT` (default: 100 MB)

See [Environment Variables](/en/self-host/configuration/environments) for details.

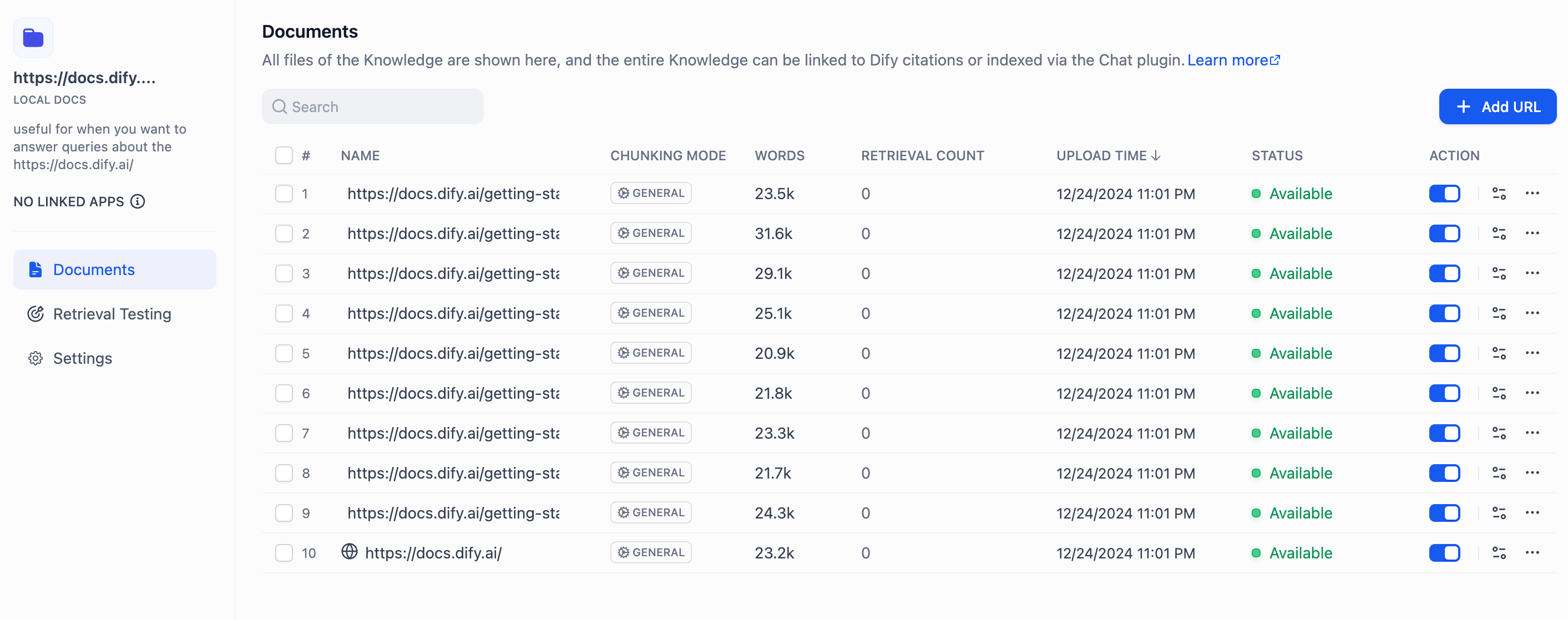

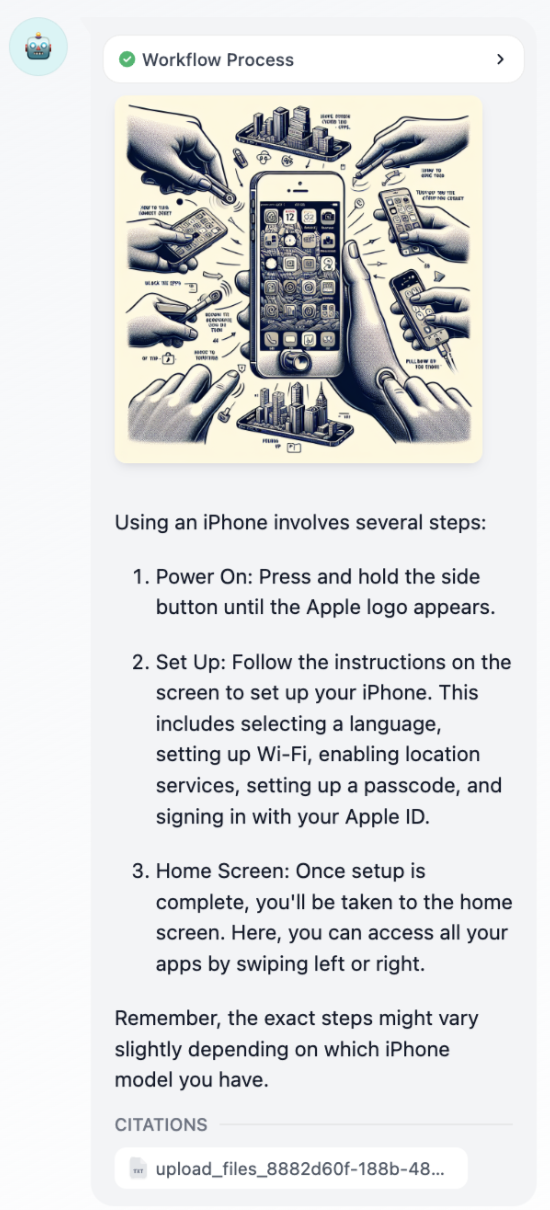

## Citations and Attributions

Show the source documents behind AI responses. When enabled, responses that draw from a connected knowledge base display numbered citations linking back to the original documents and chunks.

## Follow-up

When enabled, the LLM automatically suggests 3 follow-up questions after each response, helping users continue the conversation. This feature is a simple toggle—no additional configuration is needed.

Follow-up questions are generated by a separate LLM call using your workspace's system reasoning model (set in **Settings** > **Model Provider** > **Default Model Settings**), not the model configured in your app.

## Text to Speech

Convert AI responses to audio. You can configure the language and voice to match your app's audience, and enable **Auto Play** to stream audio automatically as the AI responds.

**Text to Speech** uses your workspace's text-to-speech model (set in **Settings** > **Model Provider** > **Default Model Settings**).

The feature only appears in the **Features** panel when a default TTS model is configured.

## Speech to Text

Enable voice input for the chat interface. When enabled, your end users can dictate messages instead of typing by clicking the microphone button.

**Speech to Text** uses your workspace's speech-to-text model (set in **Settings** > **Model Provider** > **Default Model Settings**).

The feature only appears in the **Features** panel when a default STT model is configured.

## File Upload

Allow end users to send files at any point during a conversation. You can configure which file types to accept, the upload method, and the maximum number of files per message.

For self-hosted deployments, you can adjust file size limits via the following environment variables:

* `UPLOAD_IMAGE_FILE_SIZE_LIMIT` (default: 10 MB)

* `UPLOAD_FILE_SIZE_LIMIT` (default: 15 MB)

* `UPLOAD_AUDIO_FILE_SIZE_LIMIT` (default: 50 MB)

* `UPLOAD_VIDEO_FILE_SIZE_LIMIT` (default: 100 MB)

See [Environment Variables](/en/self-host/configuration/environments) for details.

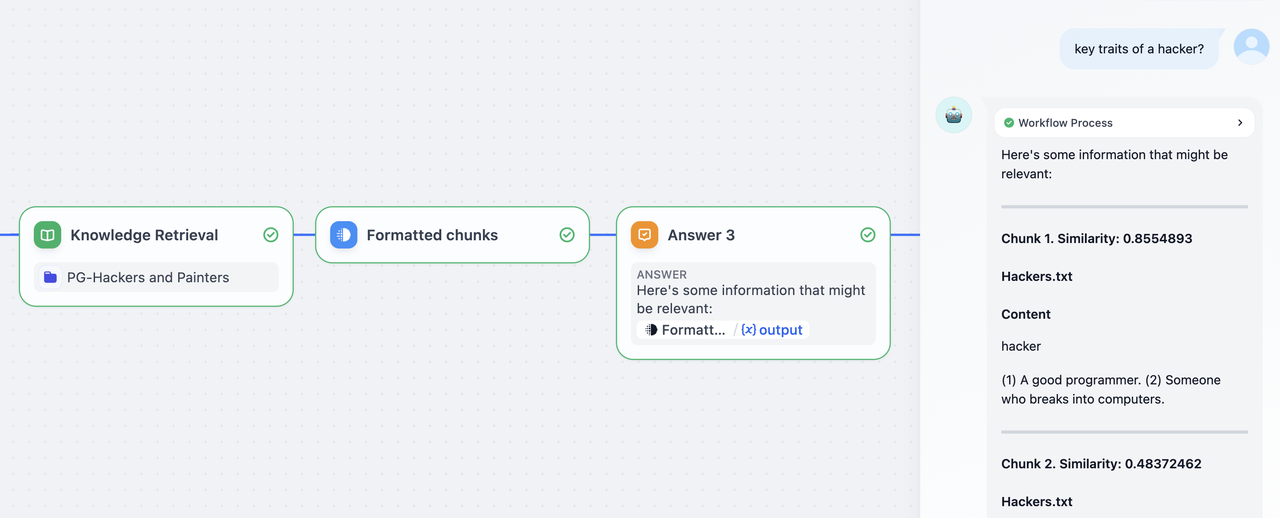

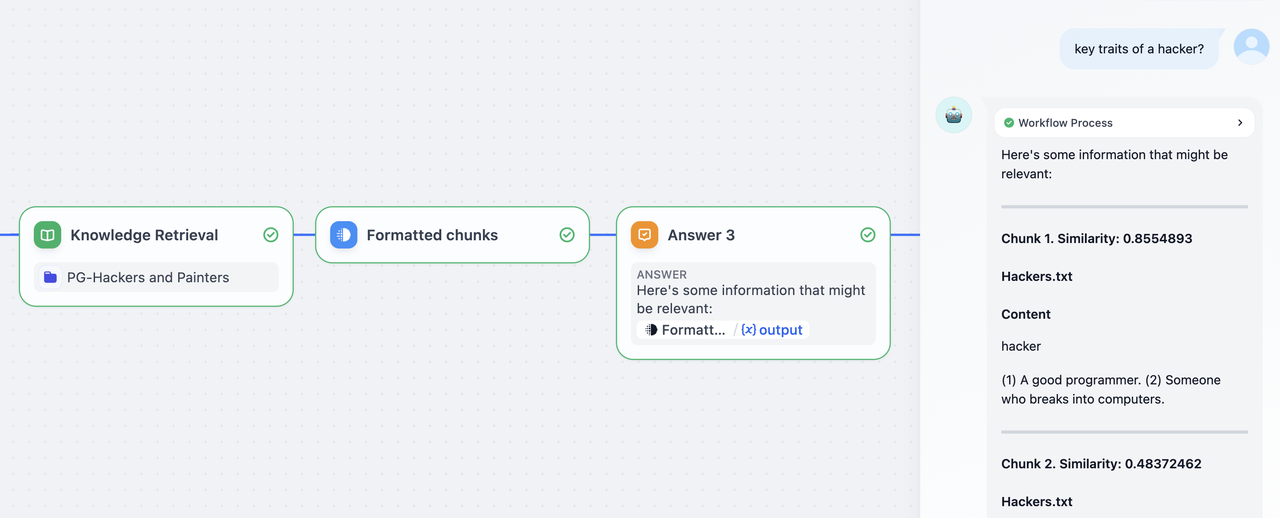

## Citations and Attributions

Show the source documents behind AI responses. When enabled, responses that draw from a connected knowledge base display numbered citations linking back to the original documents and chunks.

![Citations and Attributions]() ## Content Moderation

Filter inappropriate content in user inputs, AI outputs, or both. Choose a moderation provider based on your needs:

* **OpenAI Moderation**: Use OpenAI's dedicated moderation model to detect harmful content across multiple categories.

* **Keywords**: Define a list of blocked terms. Any match triggers the preset response.

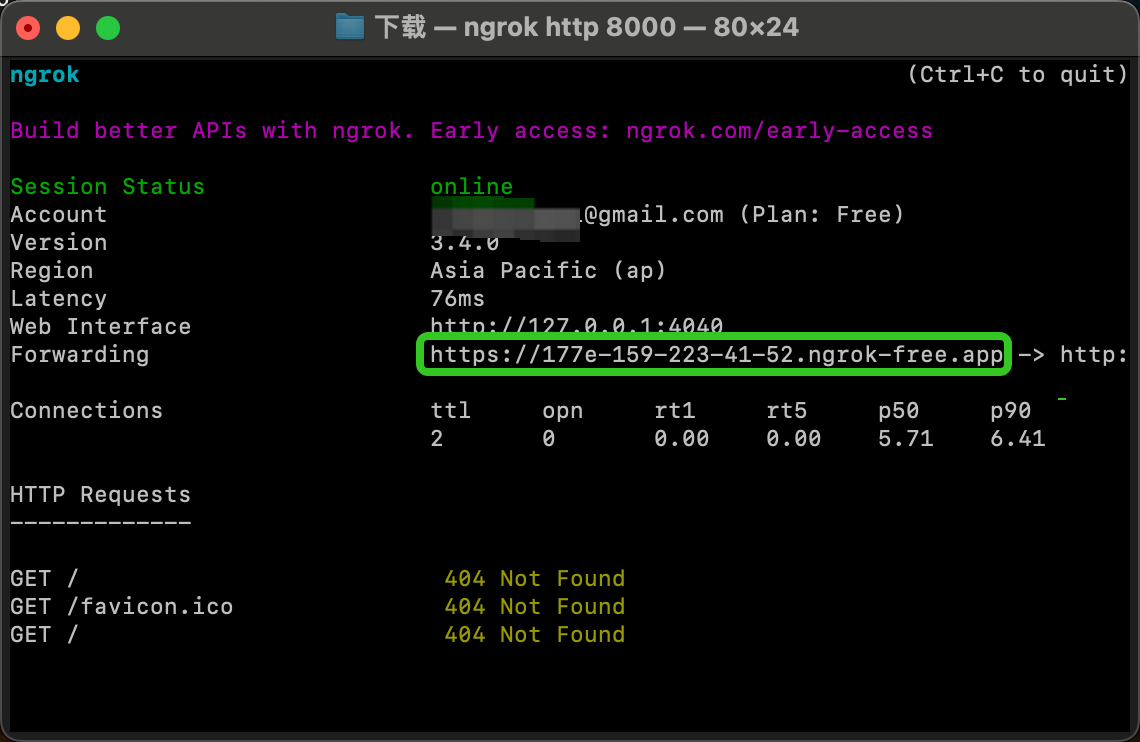

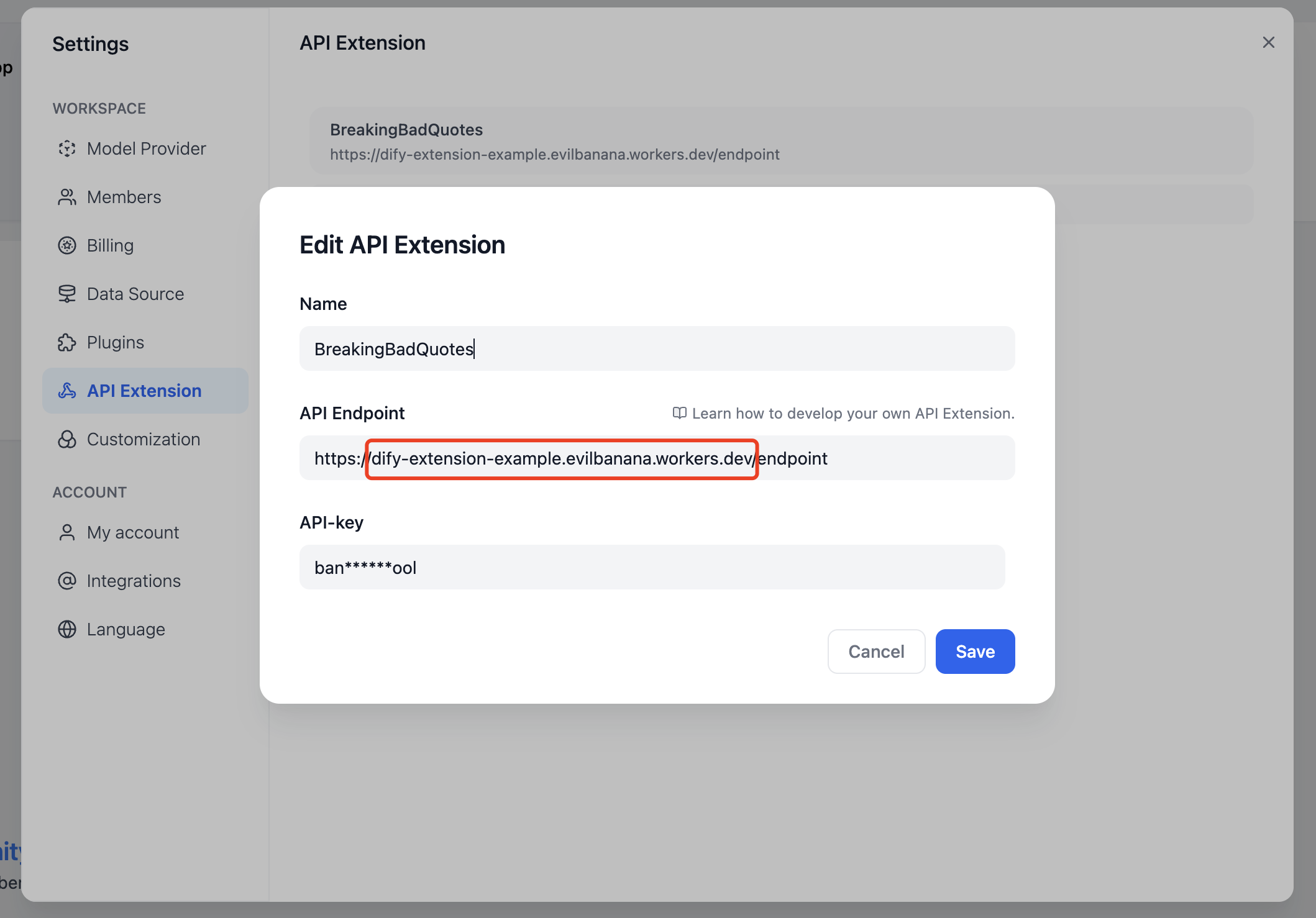

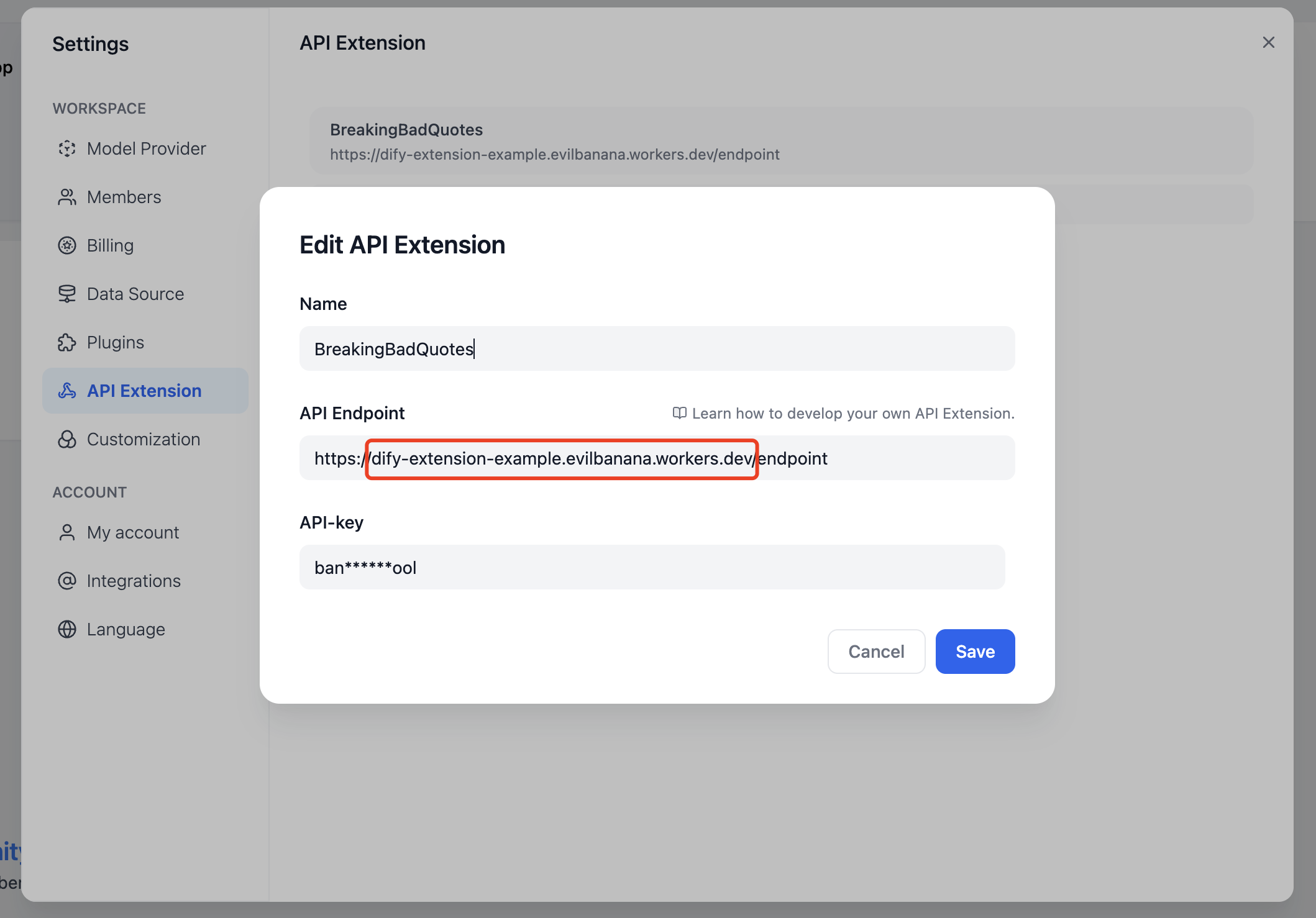

* **API Extension**: Connect a custom moderation endpoint for your own filtering logic.

When content is flagged, the app replaces it with a preset response that you define.

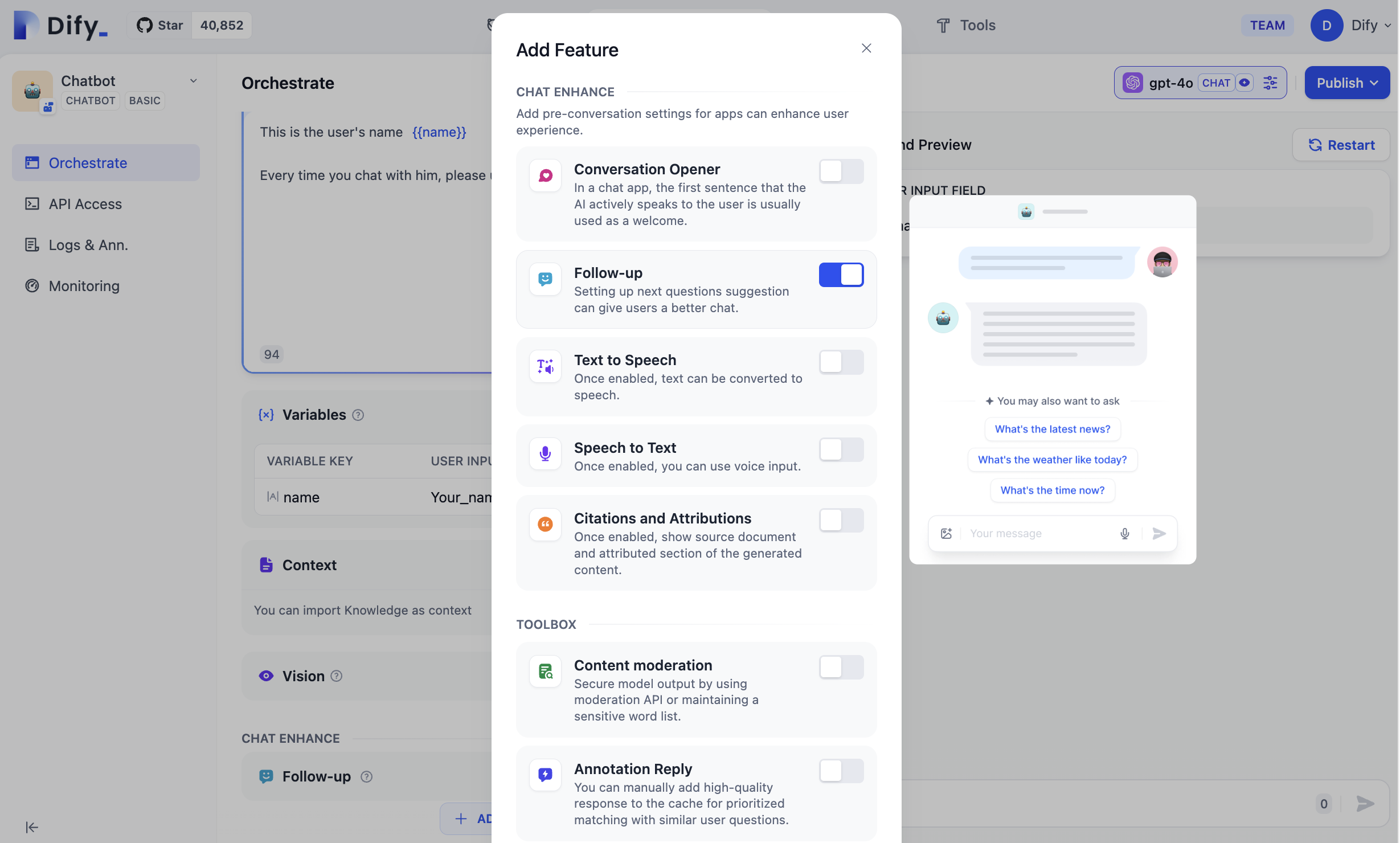

## Annotation Reply

Define curated Q\&A pairs that take priority over LLM responses. When a user's query **semantically** matches an annotation above the score threshold (how closely a query must match), the curated answer is returned directly without calling the LLM.

You can configure the score threshold and the embedding model used for semantic matching.

To create and manage your annotations:

* Convert existing conversations into annotations directly from **Debug & Preview** or **Logs** by clicking the **Add Annotation** icon on any LLM response.

Once a message is annotated, the icon changes to **Edit**, so you can modify the annotation in place.

## Content Moderation

Filter inappropriate content in user inputs, AI outputs, or both. Choose a moderation provider based on your needs:

* **OpenAI Moderation**: Use OpenAI's dedicated moderation model to detect harmful content across multiple categories.

* **Keywords**: Define a list of blocked terms. Any match triggers the preset response.

* **API Extension**: Connect a custom moderation endpoint for your own filtering logic.

When content is flagged, the app replaces it with a preset response that you define.

## Annotation Reply

Define curated Q\&A pairs that take priority over LLM responses. When a user's query **semantically** matches an annotation above the score threshold (how closely a query must match), the curated answer is returned directly without calling the LLM.

You can configure the score threshold and the embedding model used for semantic matching.

To create and manage your annotations:

* Convert existing conversations into annotations directly from **Debug & Preview** or **Logs** by clicking the **Add Annotation** icon on any LLM response.

Once a message is annotated, the icon changes to **Edit**, so you can modify the annotation in place.

![Add Annotation Icon]() * In the **Logs & Annotations** > **Annotations** tab, manually add new Q\&A pairs, manage existing annotations, and view hit history. Click `...` to bulk import or bulk export.

* In the **Logs & Annotations** > **Annotations** tab, manually add new Q\&A pairs, manage existing annotations, and view hit history. Click `...` to bulk import or bulk export.

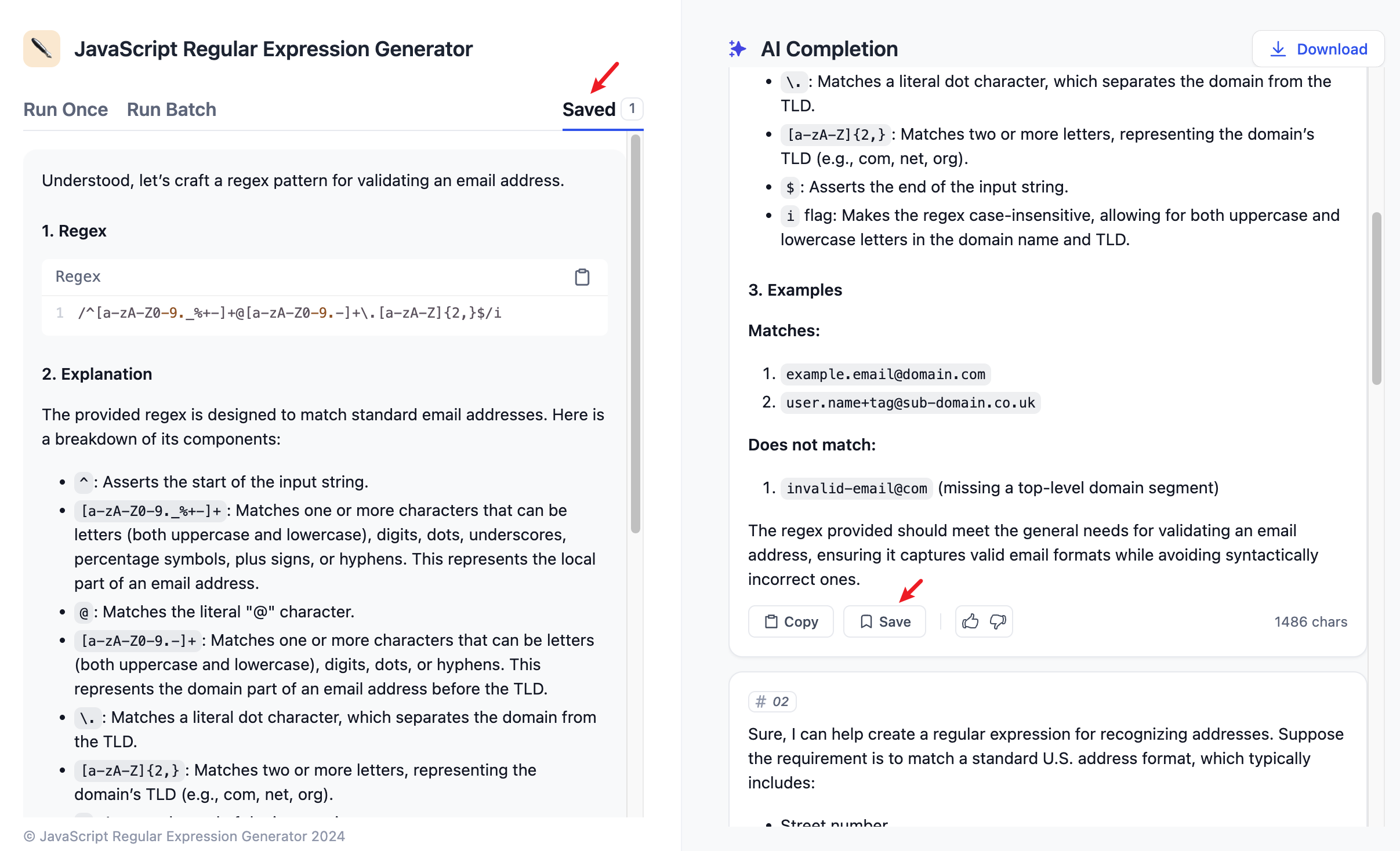

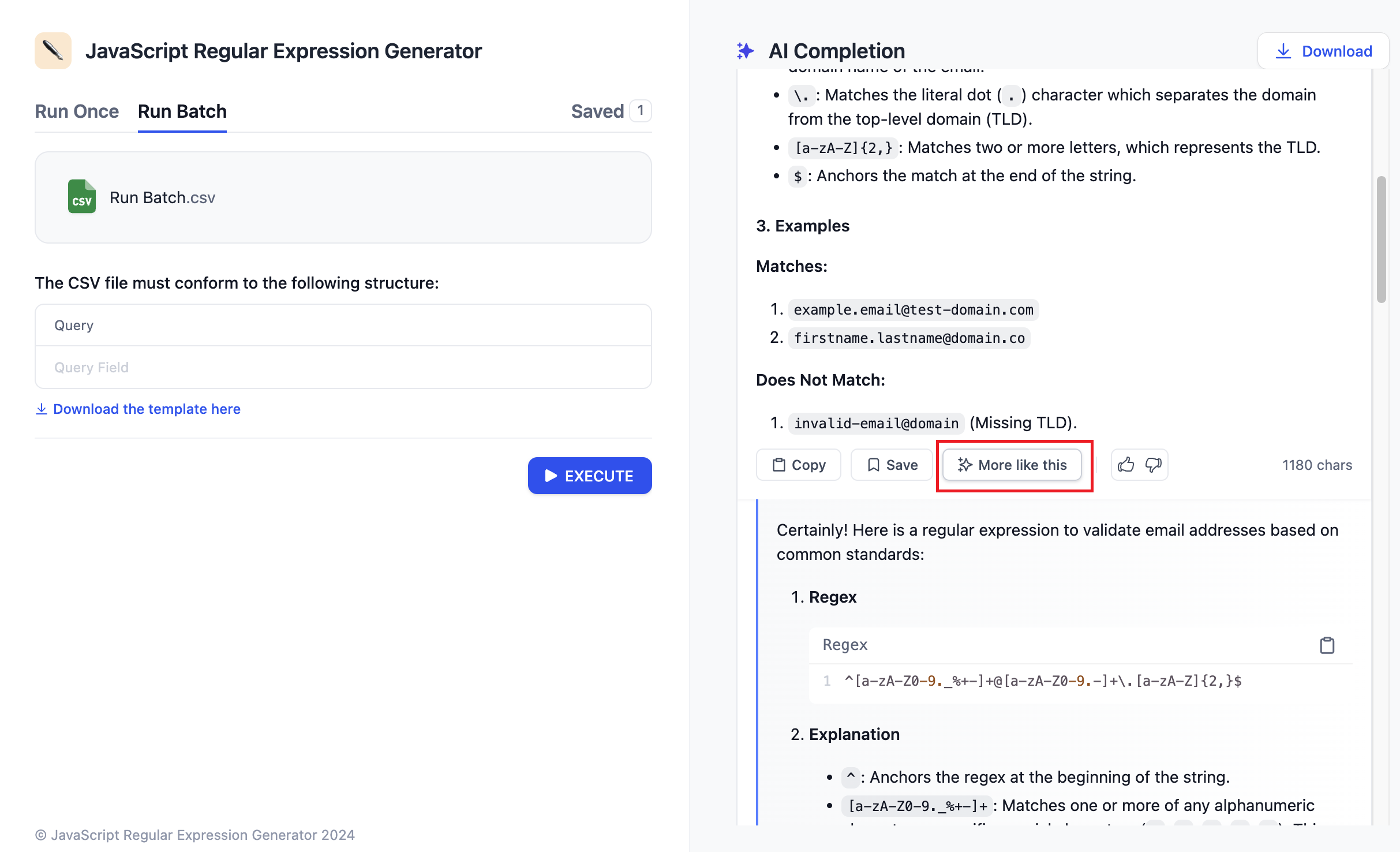

![Bulk Annotation Operation]() ## More Like This

Generate alternative outputs for the same input. Once enabled, each generated result includes a button to produce a variation, so you can explore different responses without re-entering your query.

## More Like This

Generate alternative outputs for the same input. Once enabled, each generated result includes a button to produce a variation, so you can explore different responses without re-entering your query.

![More Like This]() You can generate up to 2 variations per result. Each variation uses additional tokens.

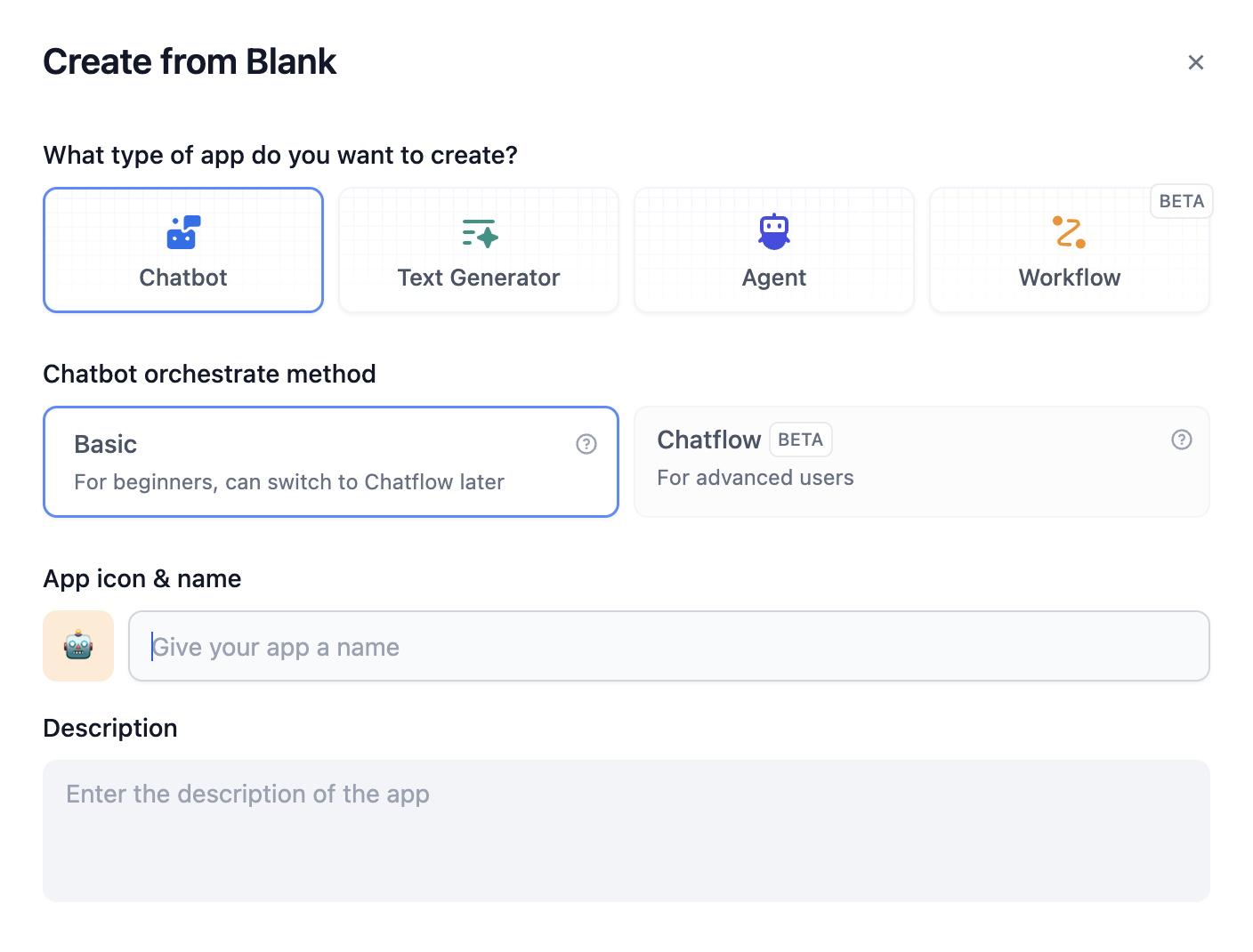

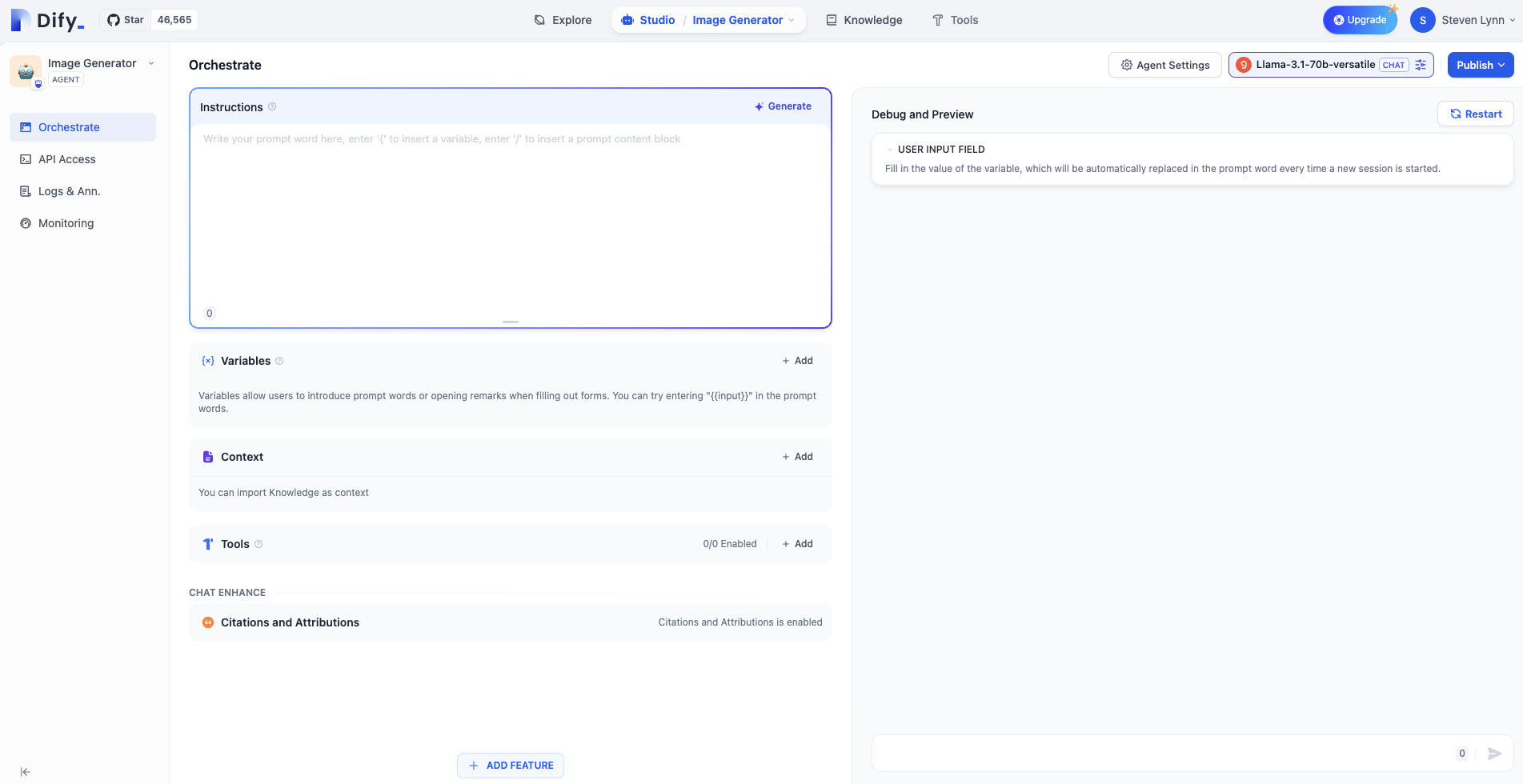

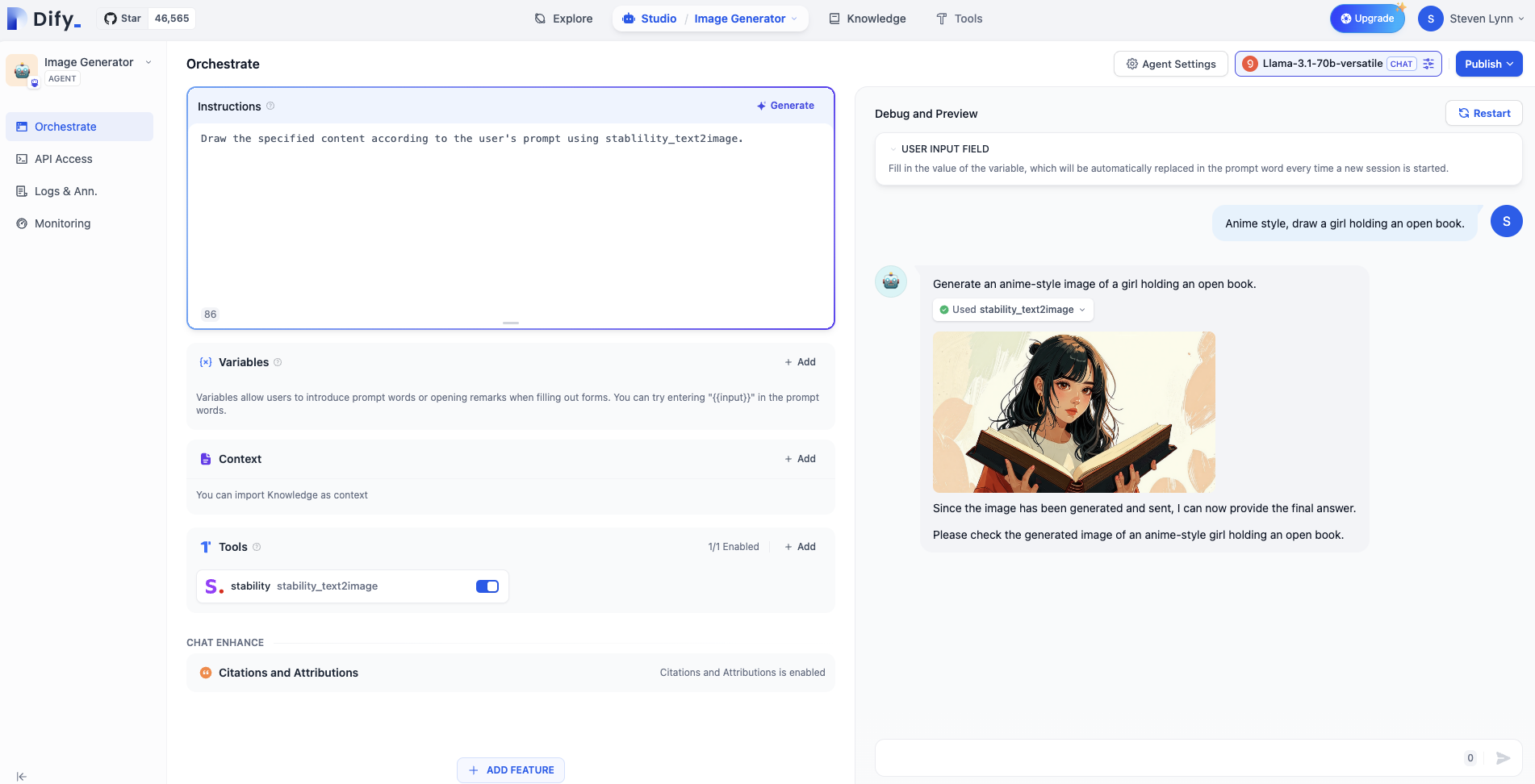

# Agent

Source: https://docs.dify.ai/en/use-dify/build/agent

Chat-style apps where the model can reason, make decisions, and use tools autonomously

Agents are chat-style apps where the model can reason through a task, decide what to do next, and use tools when needed to complete the user's request.

Use it when you want the model to autonomously decide how to approach a task using available tools, without designing a multi-step workflow. For example, building a data analysis assistant that can fetch live data, generate charts, and summarize findings on its own.

Agents keep up to 500 messages or 2,000 tokens of history per conversation. If either limit is exceeded, the oldest messages will be removed to make room for new ones.

Agents support optional features like conversation openers, follow-up suggestions, and more. See [App Toolkit](/en/use-dify/build/additional-features) for details.

## Configure

### Write the Prompt

The prompt tells the model what to do, how to respond, and what constraints to follow. For an agent, the prompt also guides how the model reasons through tasks and decides when to use tools, so be specific about the workflow you expect.

Here are some tips for writing effective prompts:

* **Define the persona**: Describe who the model should act as and the expertise it should draw on.

* **Specify the output format**: Describe the structure, length, or style you expect.

* **Set constraints**: Tell the model what to avoid or what rules to follow.

* **Guide tool usage**: Mention specific tools by name and describe when they should be used.

* **Outline the workflow**: Break down complex tasks into logical steps the model should follow.

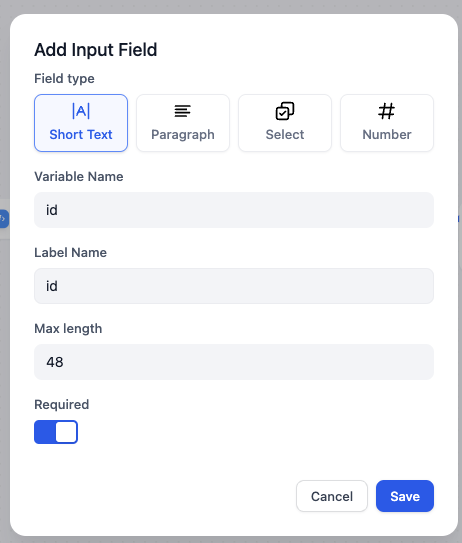

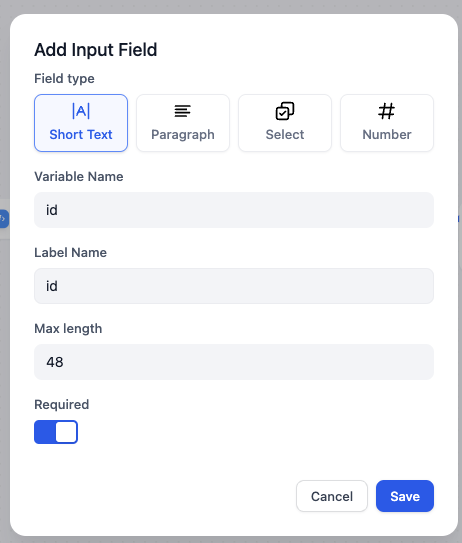

#### Create Dynamic Prompts with Variables

To adapt the agent to different users or contexts without rewriting the prompt each time, add variables to collect the necessary information upfront.

Variables are placeholders in the prompt—each one appears as an input field that users fill in before the conversation starts, and their values are injected into the prompt at runtime. Users can also update variable values mid-conversation, and the prompt will adjust accordingly.

For example, a data analysis agent might use a domain variable so users can specify which area to focus on:

```text wrap theme={null}

You are a data analyst specializing in {{domain}}. Help users explore and understand their data.

When asked a question, use available data tools to fetch the relevant information. If the result suits a visual format, generate a chart. Explain your findings in plain language.

Keep responses concise. If a question is ambiguous, ask for clarification before fetching data.

```

While drafting the prompt, type `/` > **New Variable** to quickly insert a named placeholder. You can configure its details in the **Variables** section later.

Choose the variable type that matches the input you expect:

Accepts up to 256 characters. Use it for names, email addresses, titles, or any brief text input that fits on a single line.

Allows long-form text without length restrictions. It gives users a multi-line text area for detailed descriptions.

Displays a dropdown menu with predefined options.

Restricts input to numerical values only—ideal for quantities, ratings, IDs, or any data requiring mathematical processing.

Provides a simple yes/no option. When a user checks the box, the output is `true`; otherwise, it's `false`. Use it for confirmations or any case that requires a binary choice.

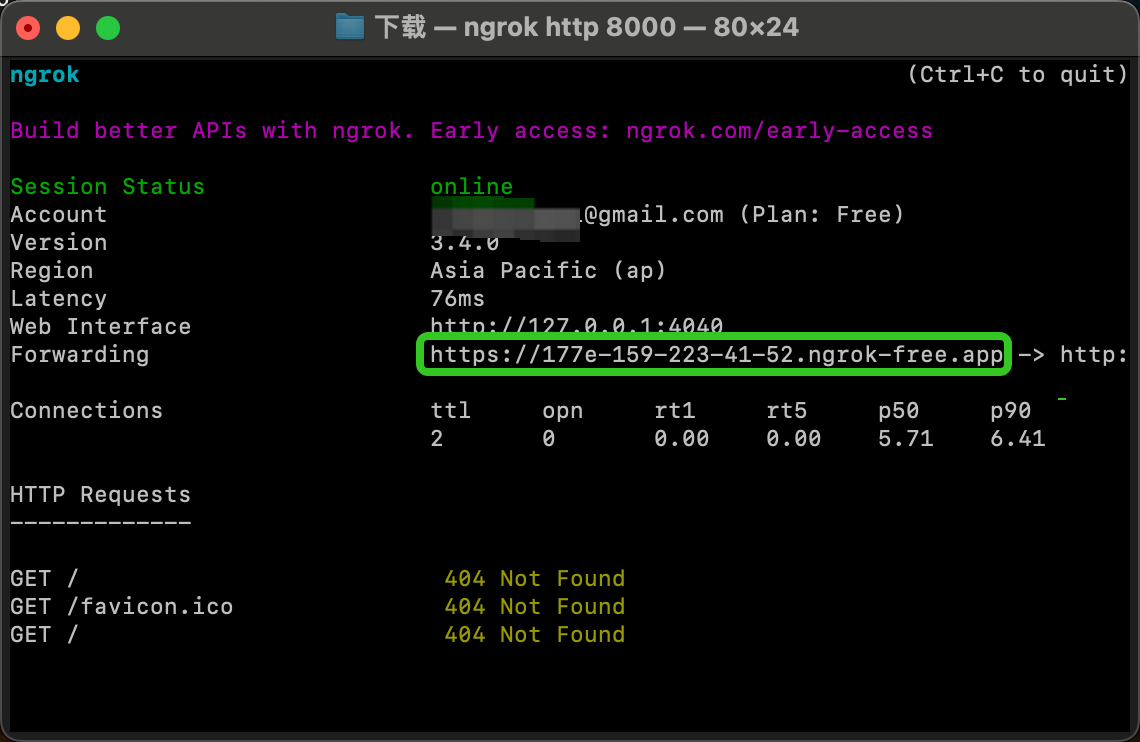

Fetches variable values from an external API at runtime instead of collecting them from users.

Use it when your prompt needs dynamic data from an external source, such as live weather conditions or database records. See [API Extension](/en/use-dify/workspace/api-extension/api-extension) for details.

**Label Name** is what end users see for each input field.

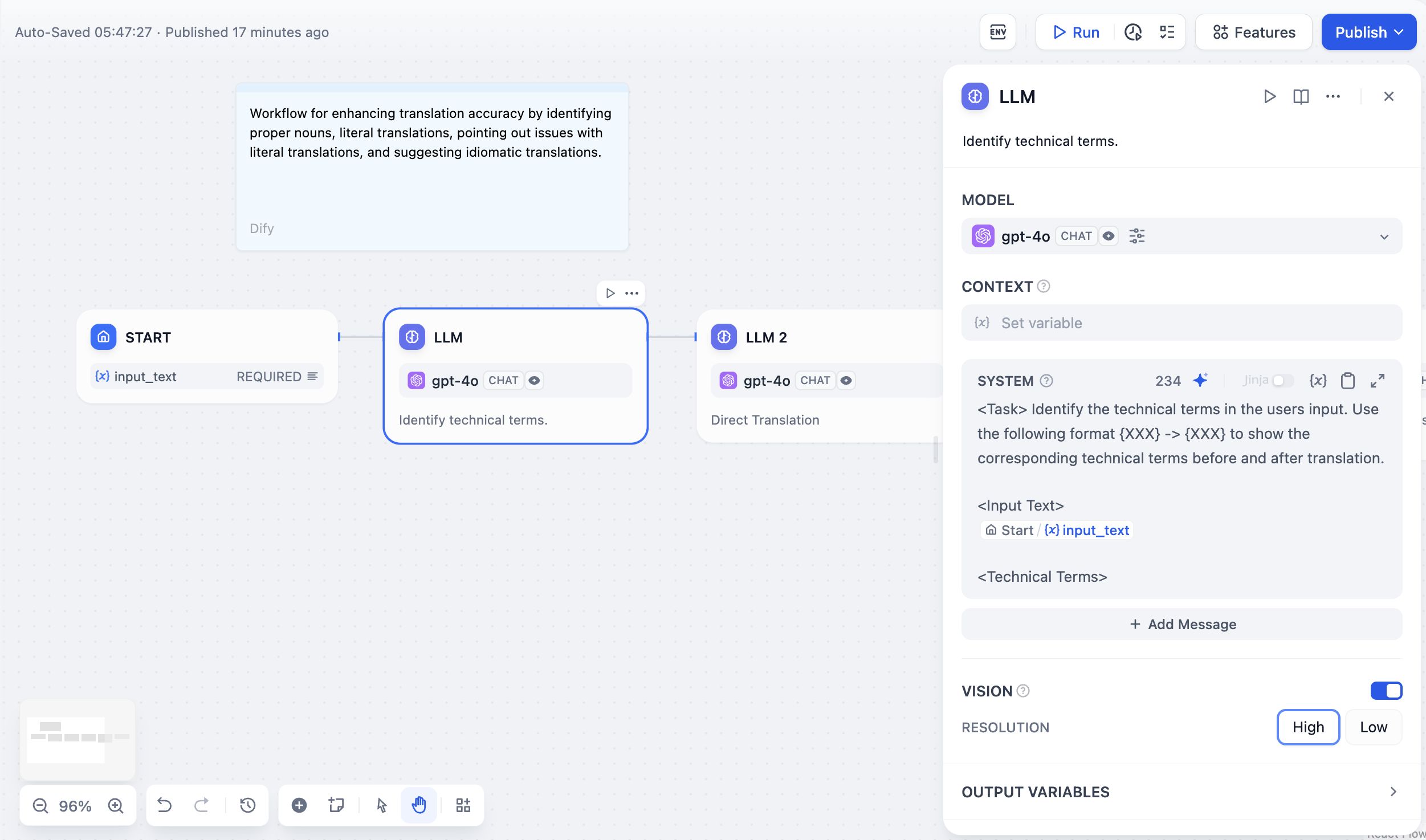

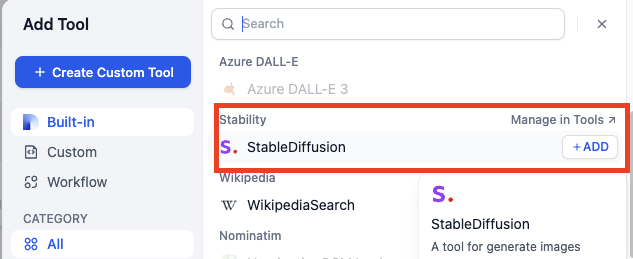

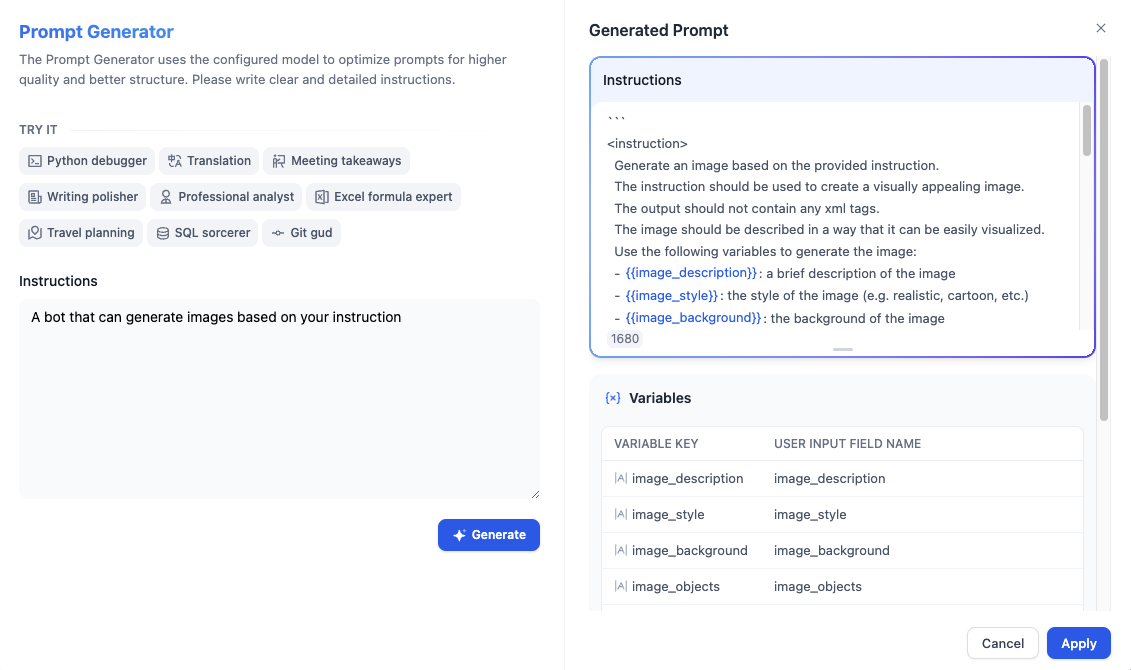

#### Generate or Improve the Prompt with AI

If you're unsure where to start or want to refine the existing prompt, click **Generate** to let an LLM help you draft it.

Describe what you want from scratch, or reference `current_prompt` and specify what to improve. For more targeted results, add an example in **Ideal Output**.

Each generation is saved as a version, so you can experiment and roll back freely.

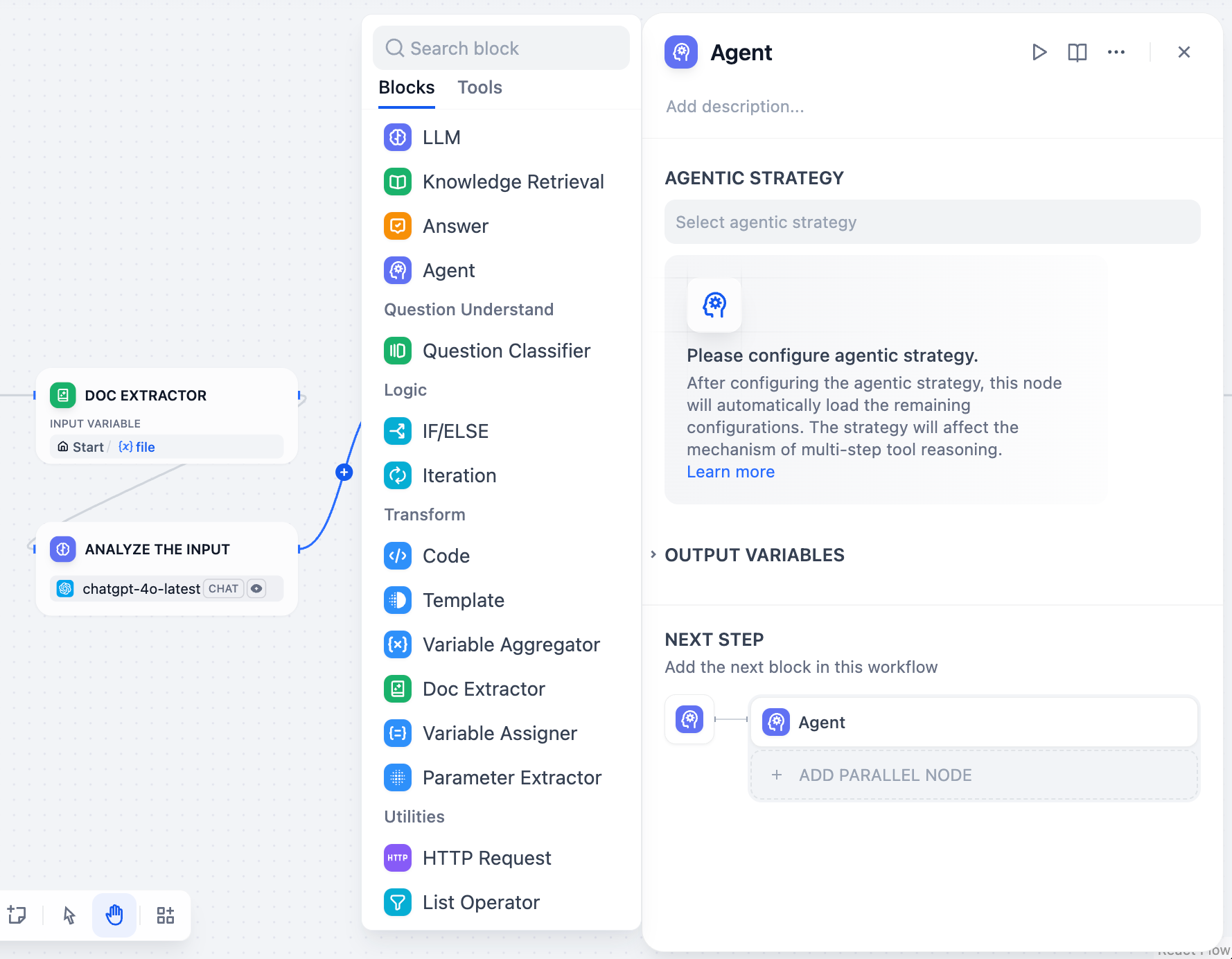

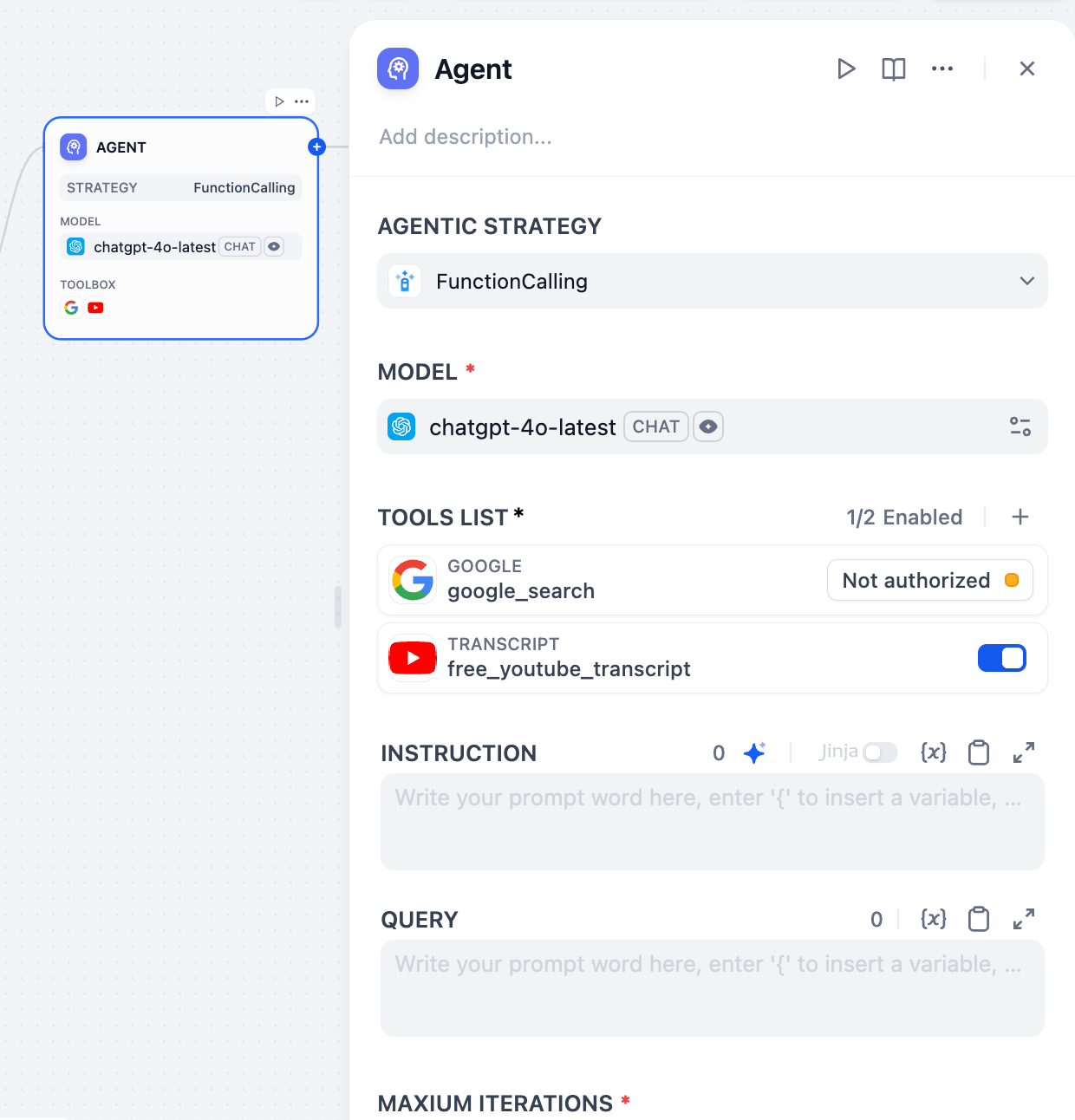

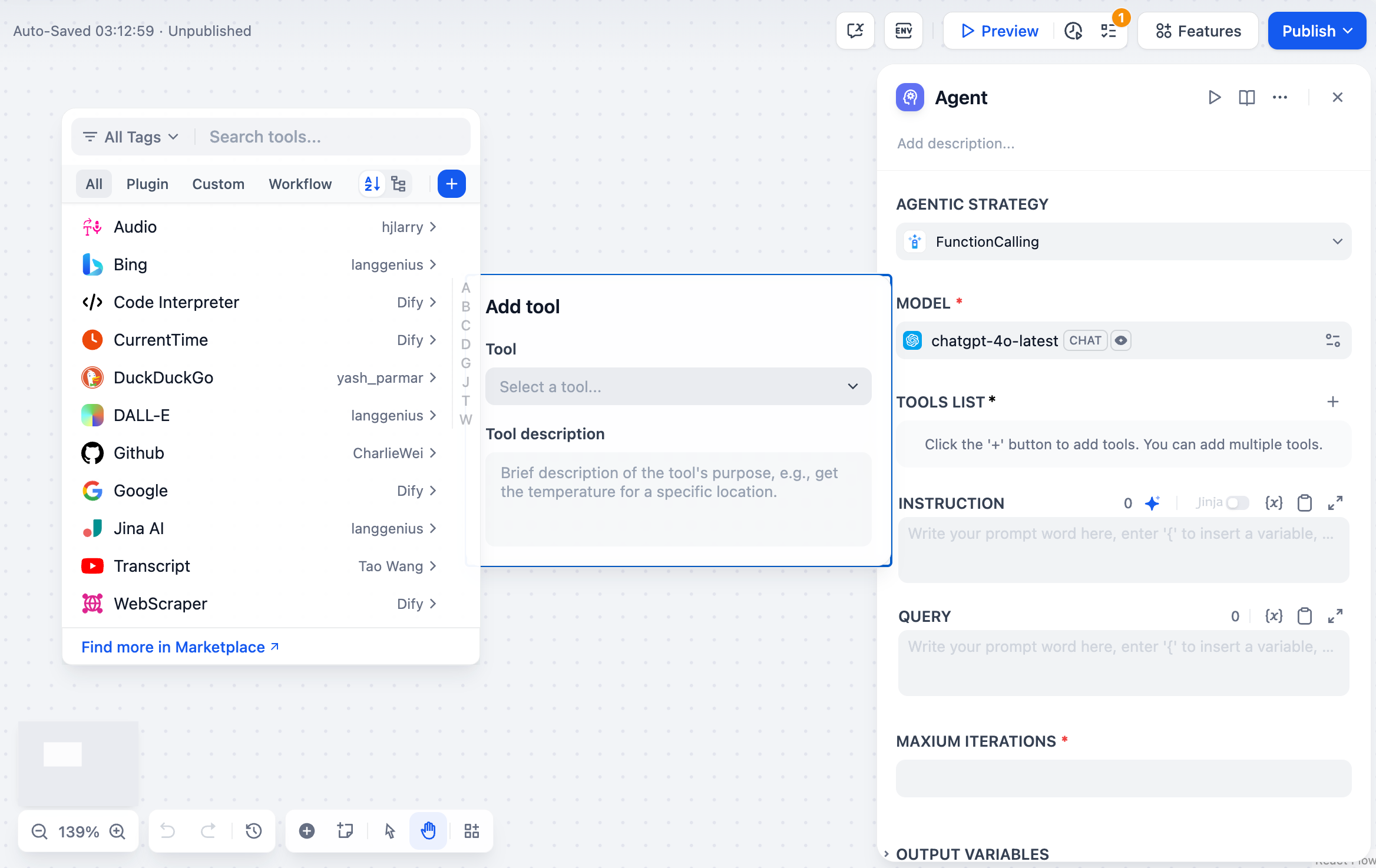

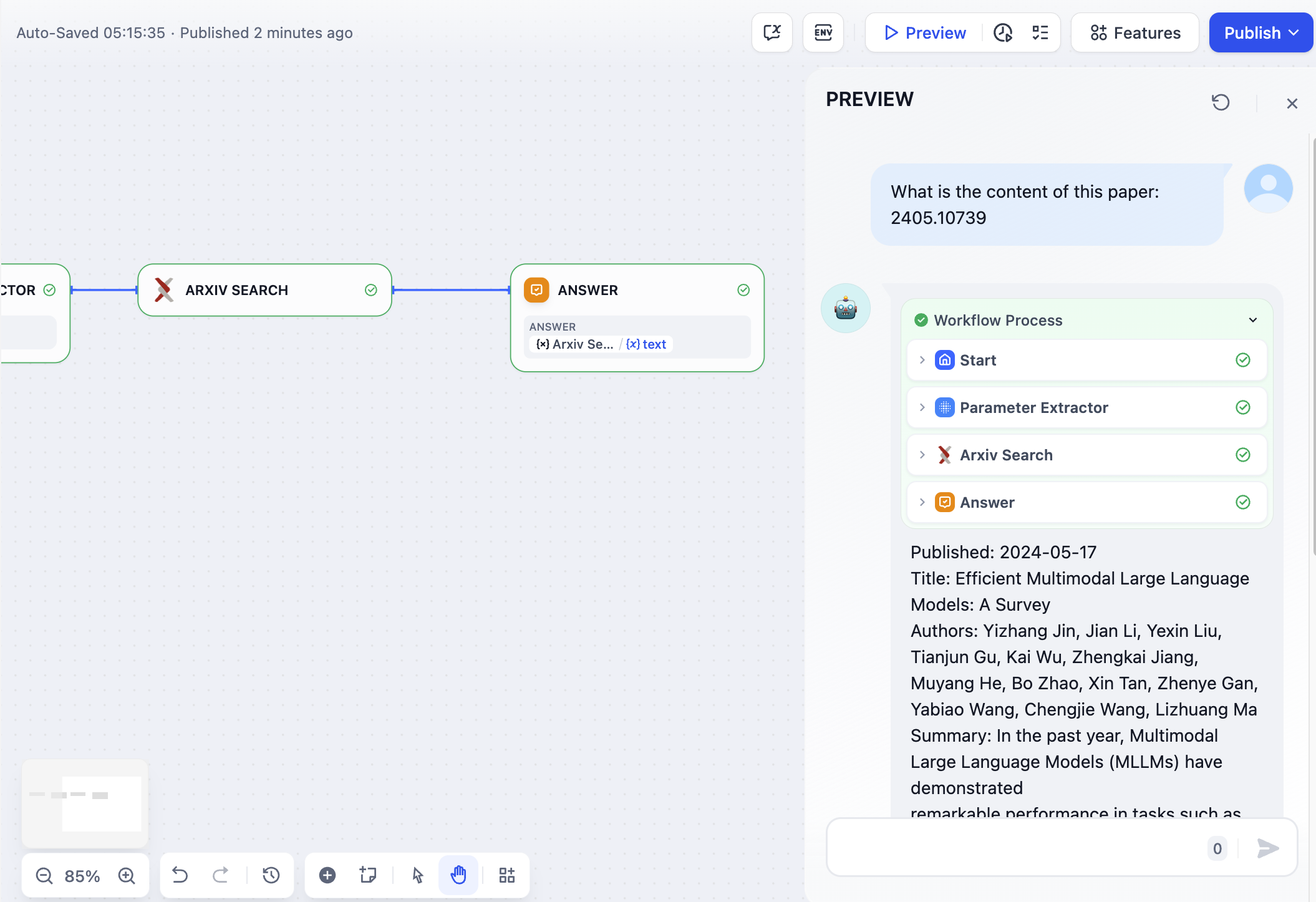

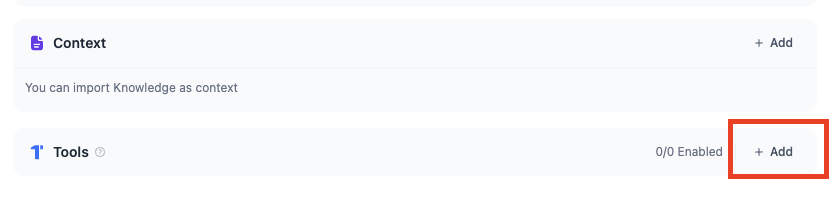

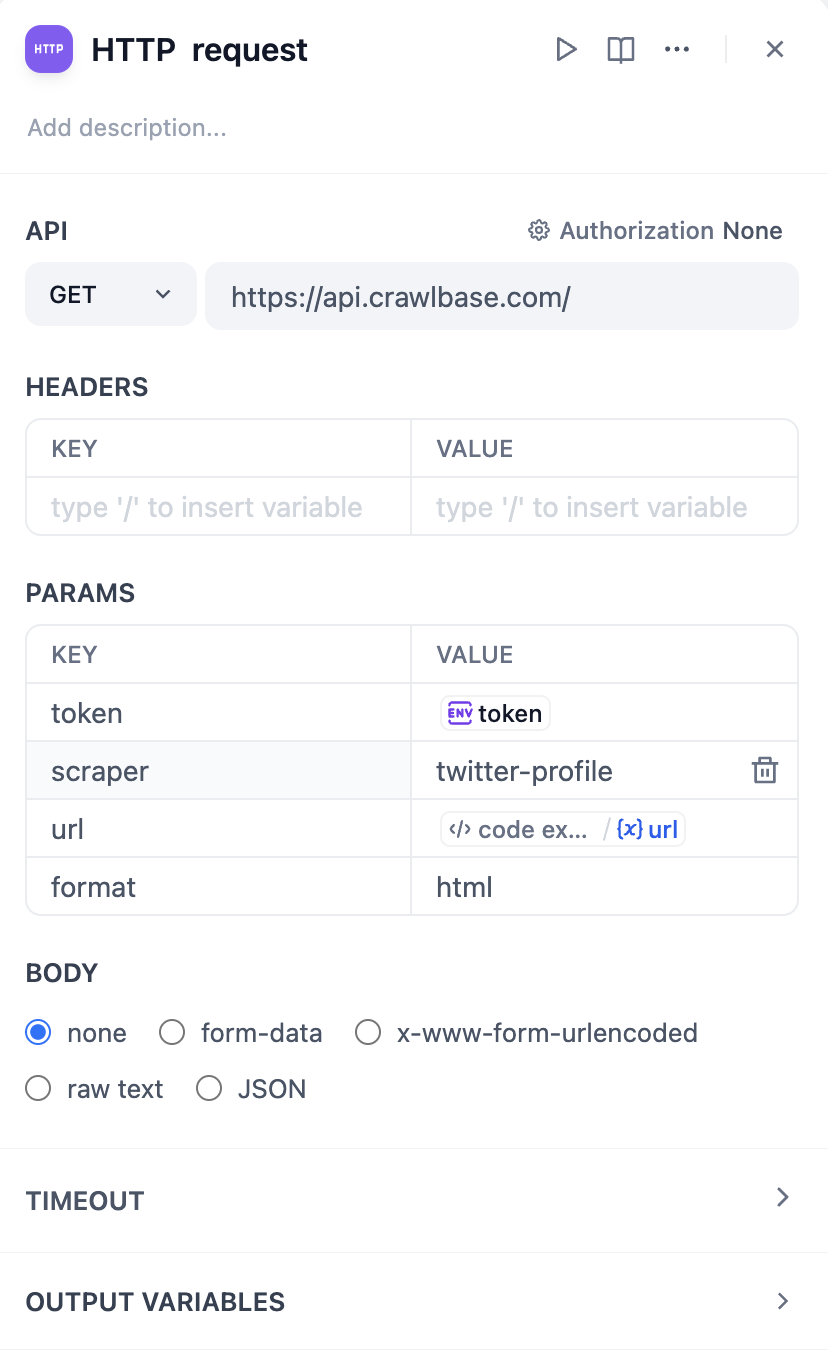

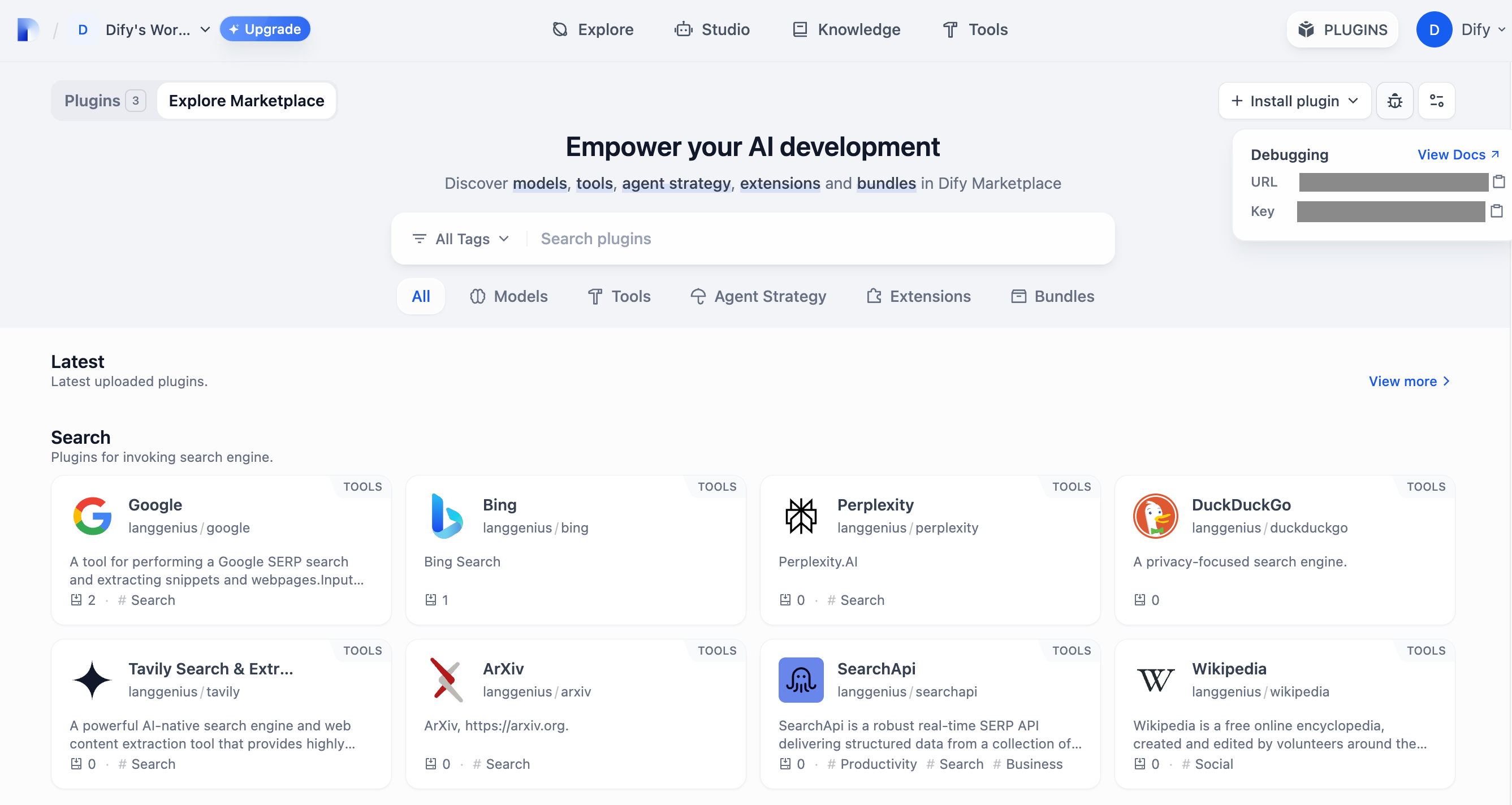

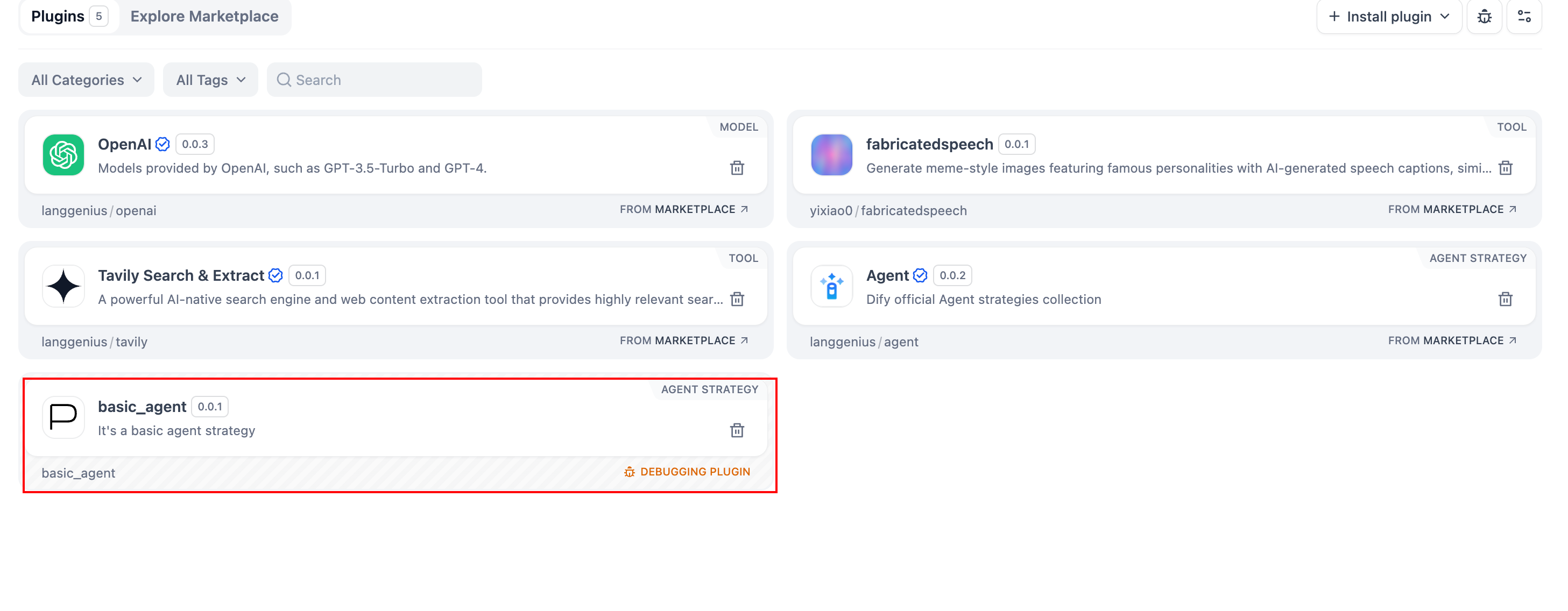

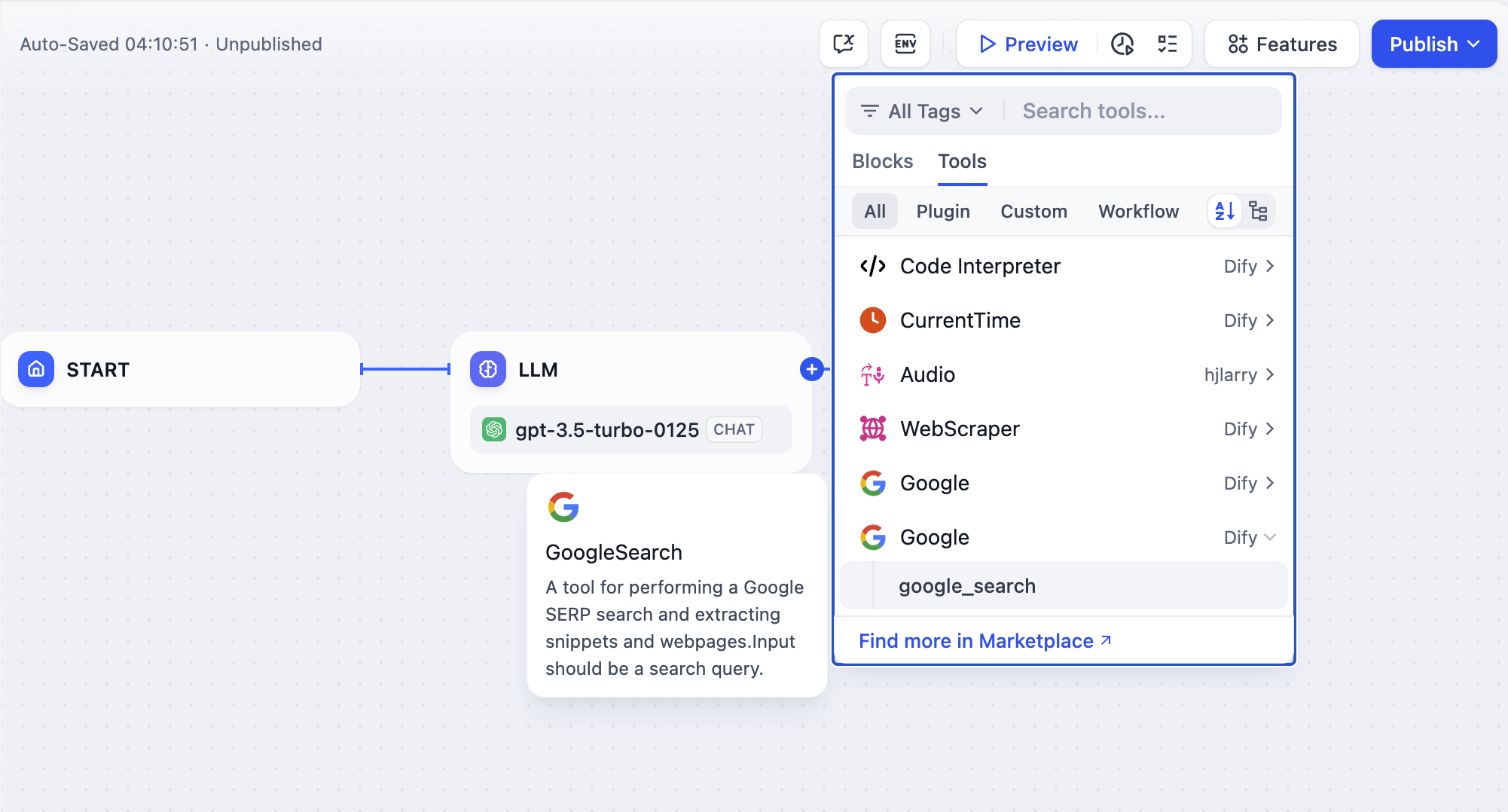

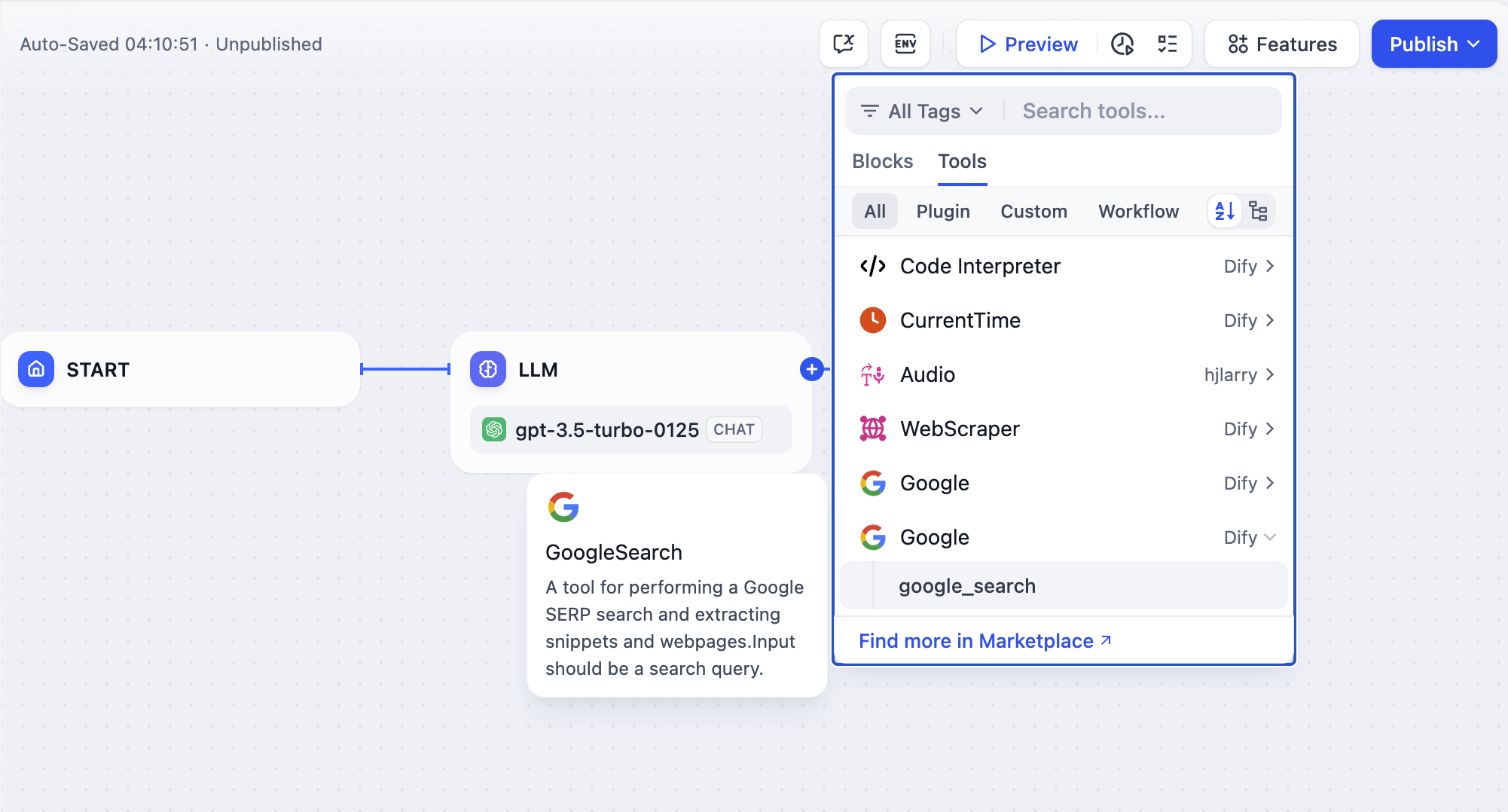

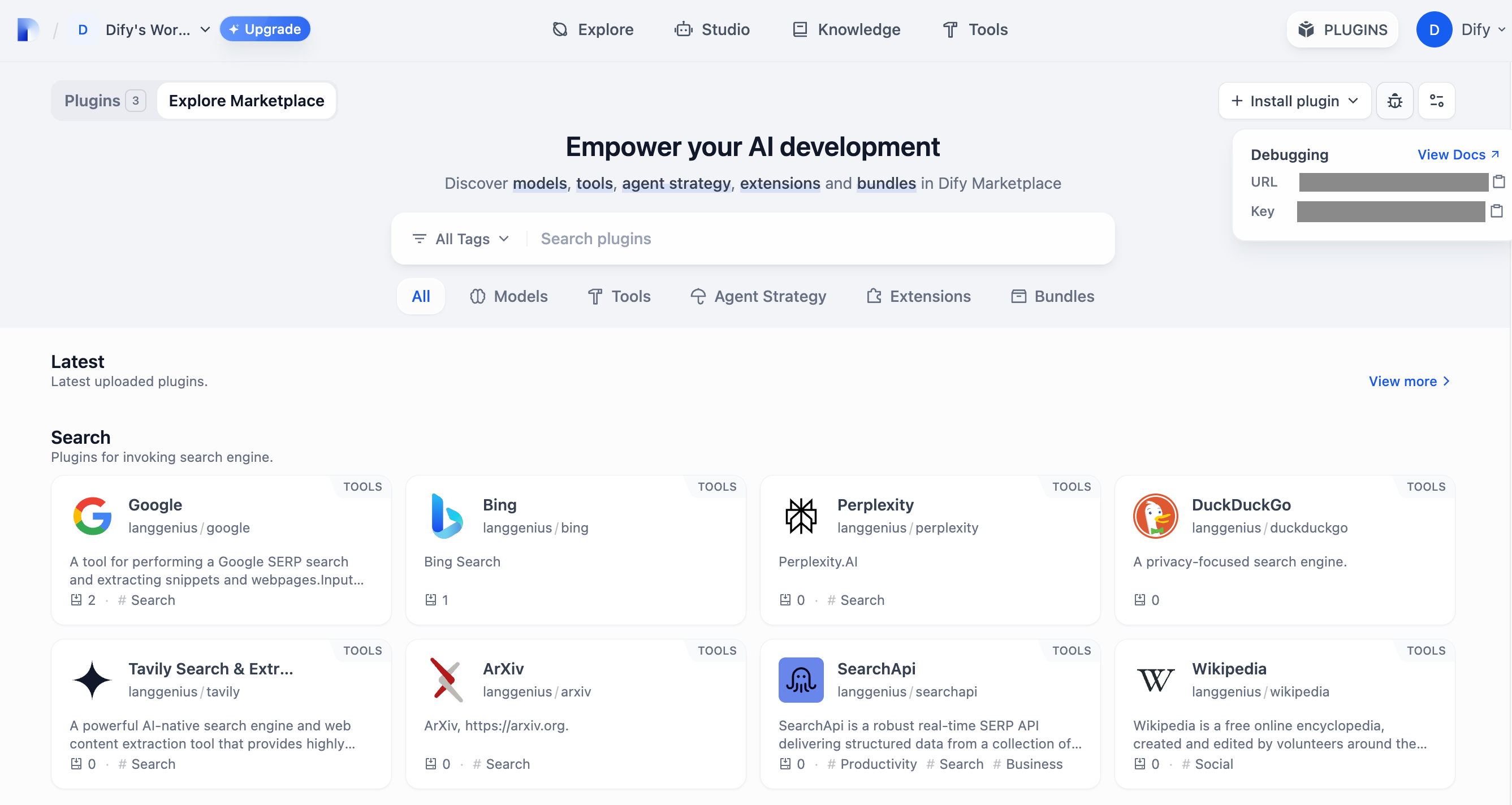

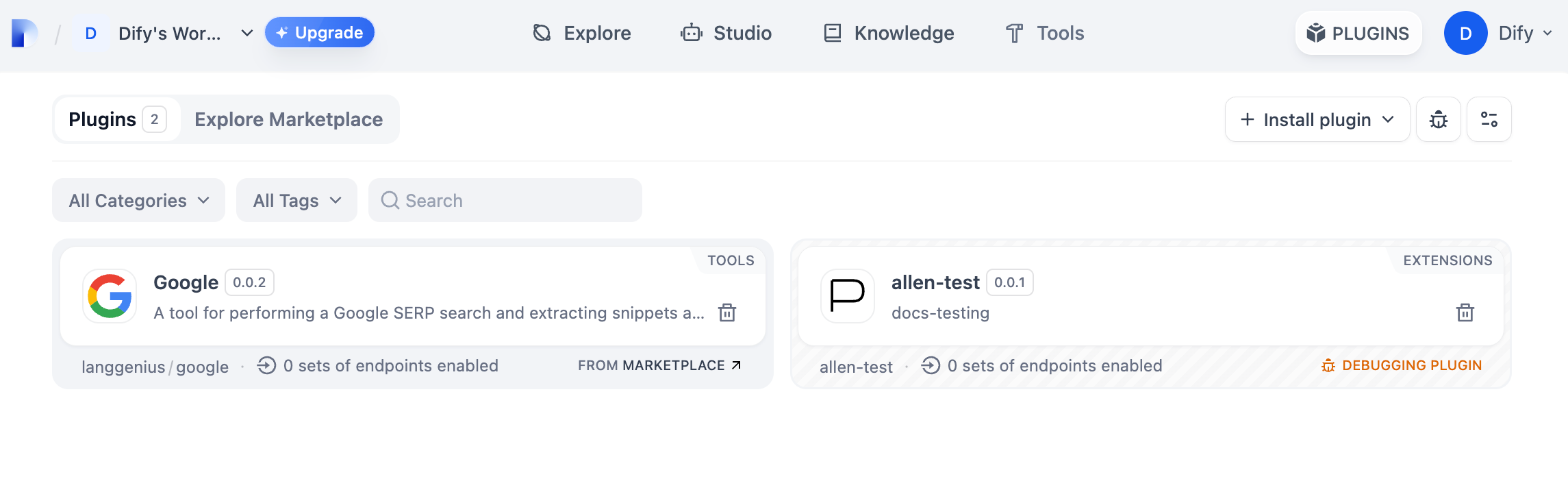

### Extend the Agent with Dify Tools

Add [Dify tools](/en/use-dify/workspace/tools) to enable the model to interact with external services and APIs for tasks beyond text generation, such as fetching live data, searching the web, or querying databases.

The model decides when and which tools to use based on each query. To guide this more precisely, mention specific tool names in your prompt and describe when they should be used.

You can generate up to 2 variations per result. Each variation uses additional tokens.

# Agent

Source: https://docs.dify.ai/en/use-dify/build/agent

Chat-style apps where the model can reason, make decisions, and use tools autonomously

Agents are chat-style apps where the model can reason through a task, decide what to do next, and use tools when needed to complete the user's request.

Use it when you want the model to autonomously decide how to approach a task using available tools, without designing a multi-step workflow. For example, building a data analysis assistant that can fetch live data, generate charts, and summarize findings on its own.

Agents keep up to 500 messages or 2,000 tokens of history per conversation. If either limit is exceeded, the oldest messages will be removed to make room for new ones.

Agents support optional features like conversation openers, follow-up suggestions, and more. See [App Toolkit](/en/use-dify/build/additional-features) for details.

## Configure

### Write the Prompt

The prompt tells the model what to do, how to respond, and what constraints to follow. For an agent, the prompt also guides how the model reasons through tasks and decides when to use tools, so be specific about the workflow you expect.

Here are some tips for writing effective prompts:

* **Define the persona**: Describe who the model should act as and the expertise it should draw on.

* **Specify the output format**: Describe the structure, length, or style you expect.

* **Set constraints**: Tell the model what to avoid or what rules to follow.

* **Guide tool usage**: Mention specific tools by name and describe when they should be used.

* **Outline the workflow**: Break down complex tasks into logical steps the model should follow.

#### Create Dynamic Prompts with Variables

To adapt the agent to different users or contexts without rewriting the prompt each time, add variables to collect the necessary information upfront.

Variables are placeholders in the prompt—each one appears as an input field that users fill in before the conversation starts, and their values are injected into the prompt at runtime. Users can also update variable values mid-conversation, and the prompt will adjust accordingly.

For example, a data analysis agent might use a domain variable so users can specify which area to focus on:

```text wrap theme={null}

You are a data analyst specializing in {{domain}}. Help users explore and understand their data.

When asked a question, use available data tools to fetch the relevant information. If the result suits a visual format, generate a chart. Explain your findings in plain language.

Keep responses concise. If a question is ambiguous, ask for clarification before fetching data.

```

While drafting the prompt, type `/` > **New Variable** to quickly insert a named placeholder. You can configure its details in the **Variables** section later.

Choose the variable type that matches the input you expect:

Accepts up to 256 characters. Use it for names, email addresses, titles, or any brief text input that fits on a single line.

Allows long-form text without length restrictions. It gives users a multi-line text area for detailed descriptions.

Displays a dropdown menu with predefined options.

Restricts input to numerical values only—ideal for quantities, ratings, IDs, or any data requiring mathematical processing.

Provides a simple yes/no option. When a user checks the box, the output is `true`; otherwise, it's `false`. Use it for confirmations or any case that requires a binary choice.

Fetches variable values from an external API at runtime instead of collecting them from users.

Use it when your prompt needs dynamic data from an external source, such as live weather conditions or database records. See [API Extension](/en/use-dify/workspace/api-extension/api-extension) for details.

**Label Name** is what end users see for each input field.

#### Generate or Improve the Prompt with AI

If you're unsure where to start or want to refine the existing prompt, click **Generate** to let an LLM help you draft it.

Describe what you want from scratch, or reference `current_prompt` and specify what to improve. For more targeted results, add an example in **Ideal Output**.

Each generation is saved as a version, so you can experiment and roll back freely.

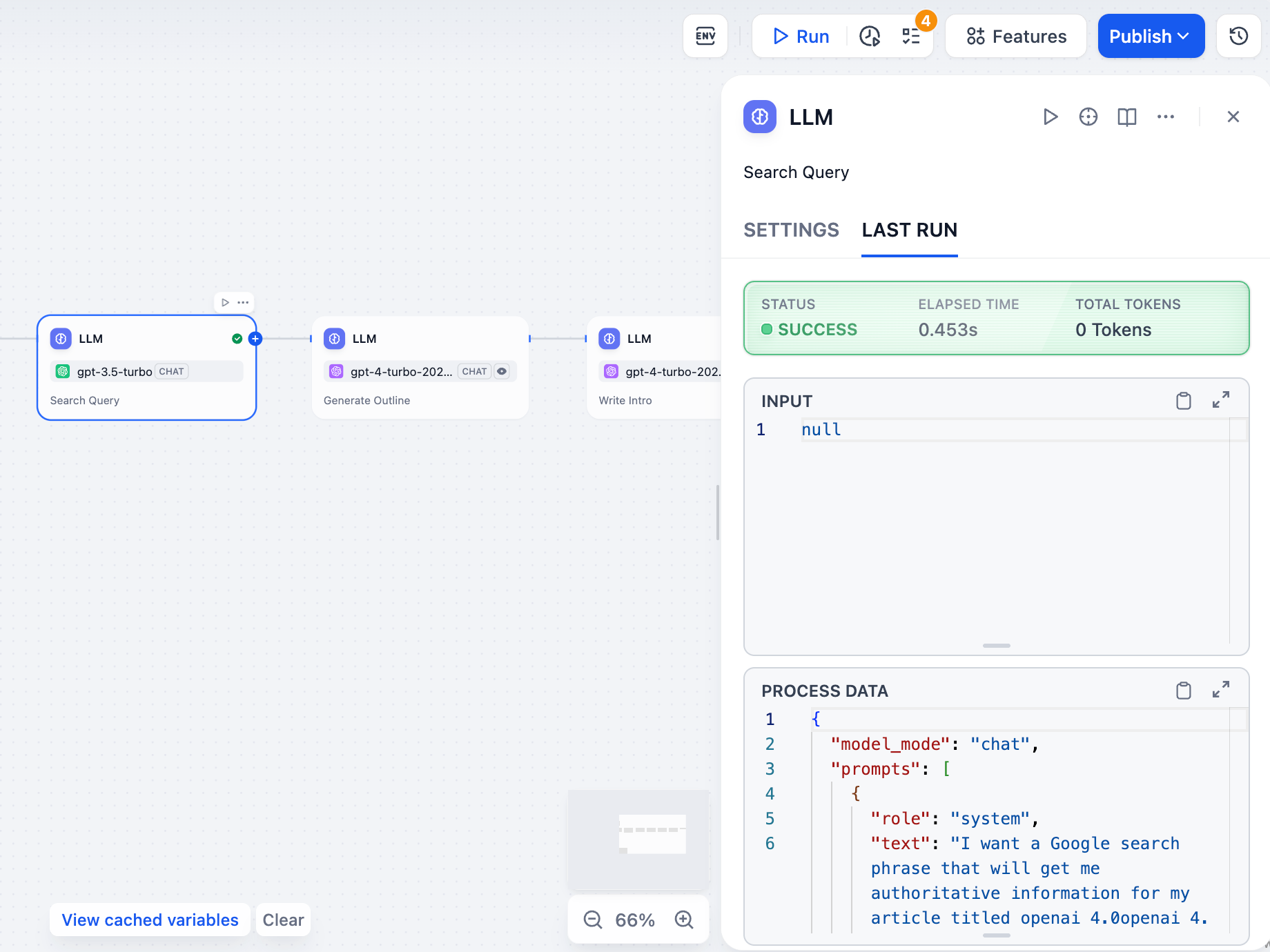

### Extend the Agent with Dify Tools

Add [Dify tools](/en/use-dify/workspace/tools) to enable the model to interact with external services and APIs for tasks beyond text generation, such as fetching live data, searching the web, or querying databases.

The model decides when and which tools to use based on each query. To guide this more precisely, mention specific tool names in your prompt and describe when they should be used.

![Tool Name]() You can disable or remove added tools, and modify their configuration. If a tool requires authentication, select an existing credential or create a new one.

To change the default credential, go to **Tools** or **Plugins**.

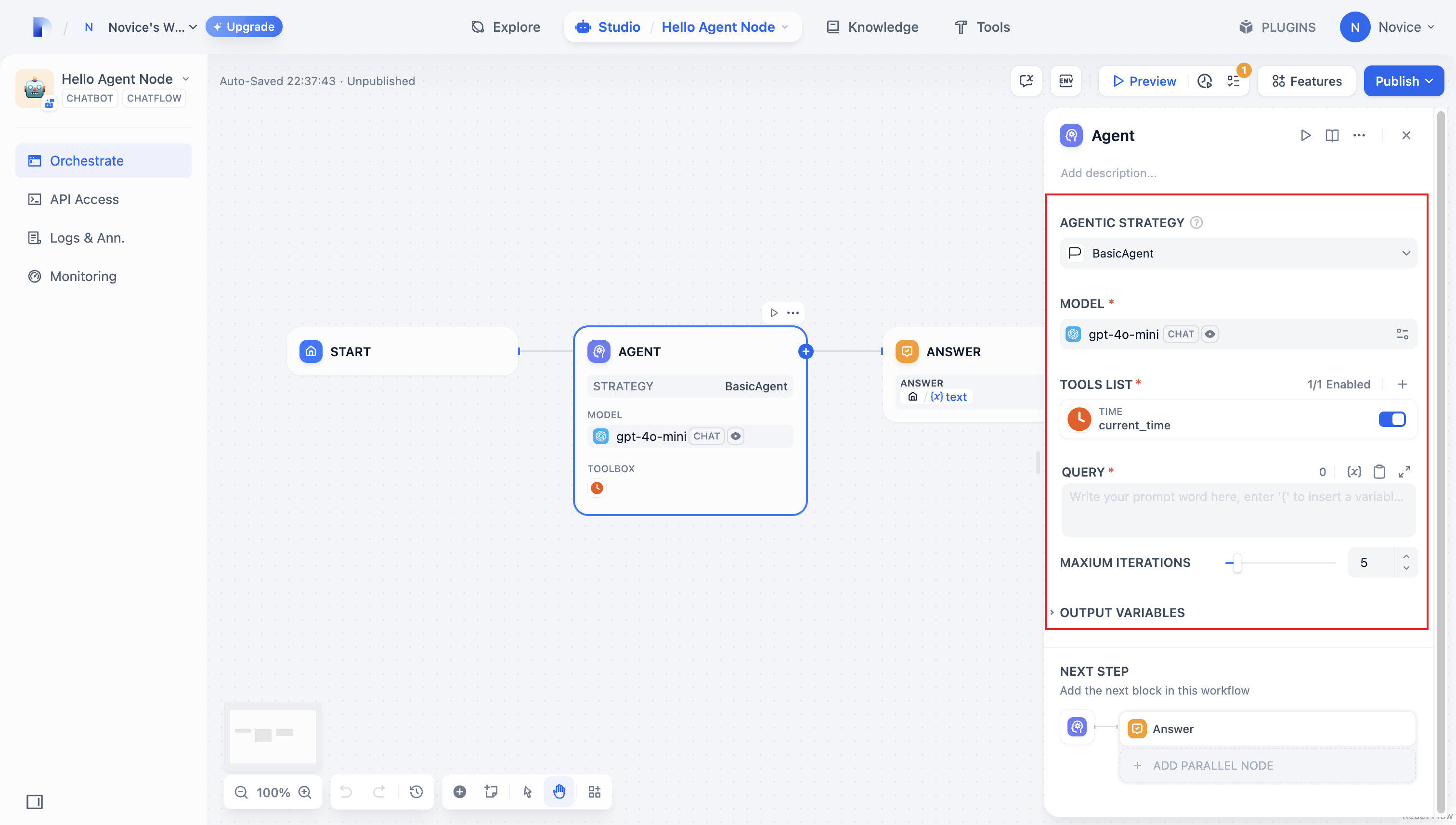

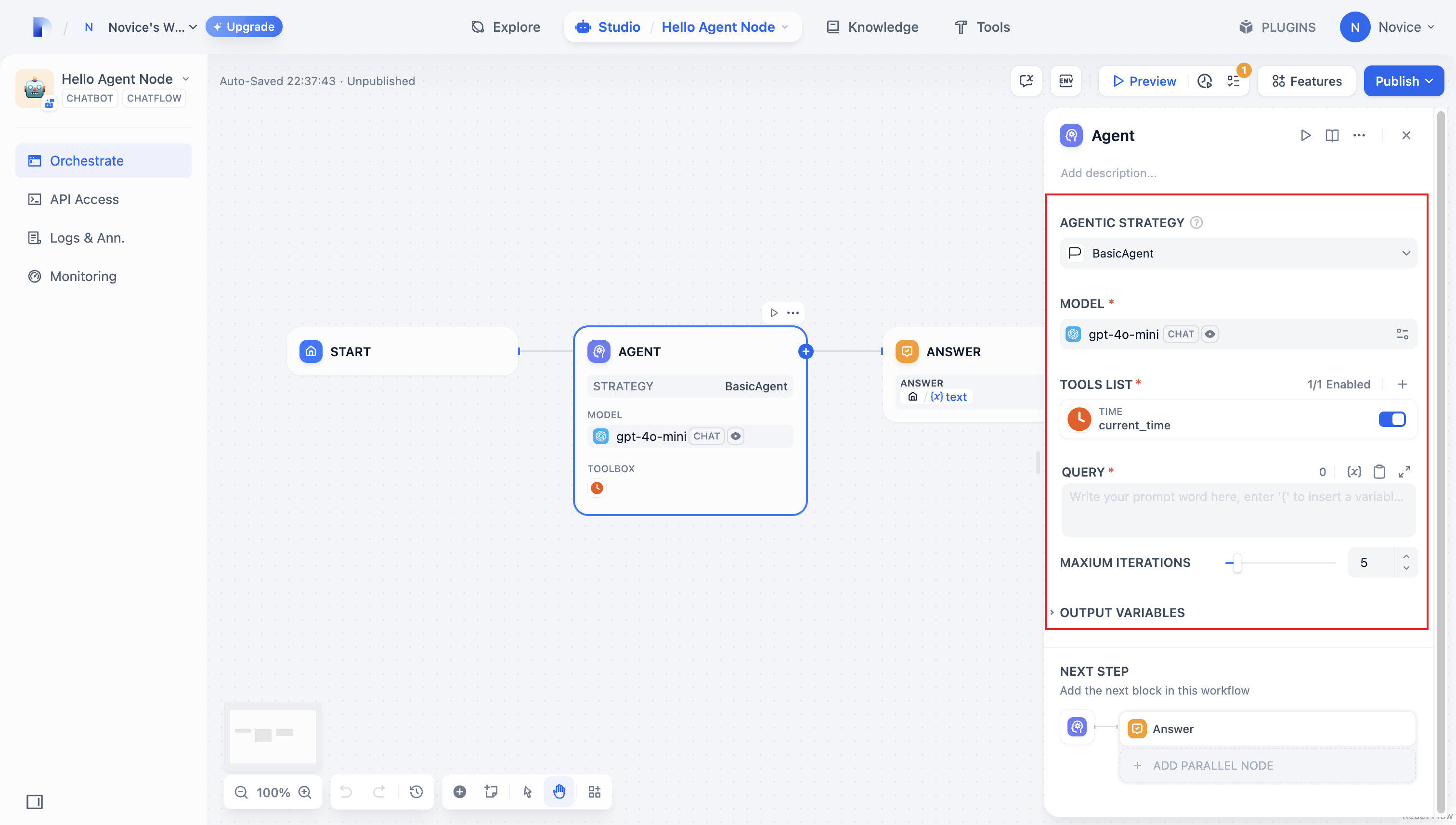

#### Maximum Iterations

**Maximum Iterations** in **Agent Settings** limits how many times the model can repeat its reasoning-and-action cycle (think, call a tool, process the result) for a single request.

Increase this value for complex, multi-step tasks that require multiple tool calls. Higher values increase latency and token costs.

### Ground Responses in Your Own Data

To ground the model's responses in your own data rather than general knowledge, add a knowledge base.

The model evaluates each user query against your knowledge base descriptions and decides whether retrieval is needed—you don't need to mention knowledge bases in your prompt.

**The more detailed your knowledge base description, the better the model can determine relevance**, leading to more accurate and targeted retrieval.

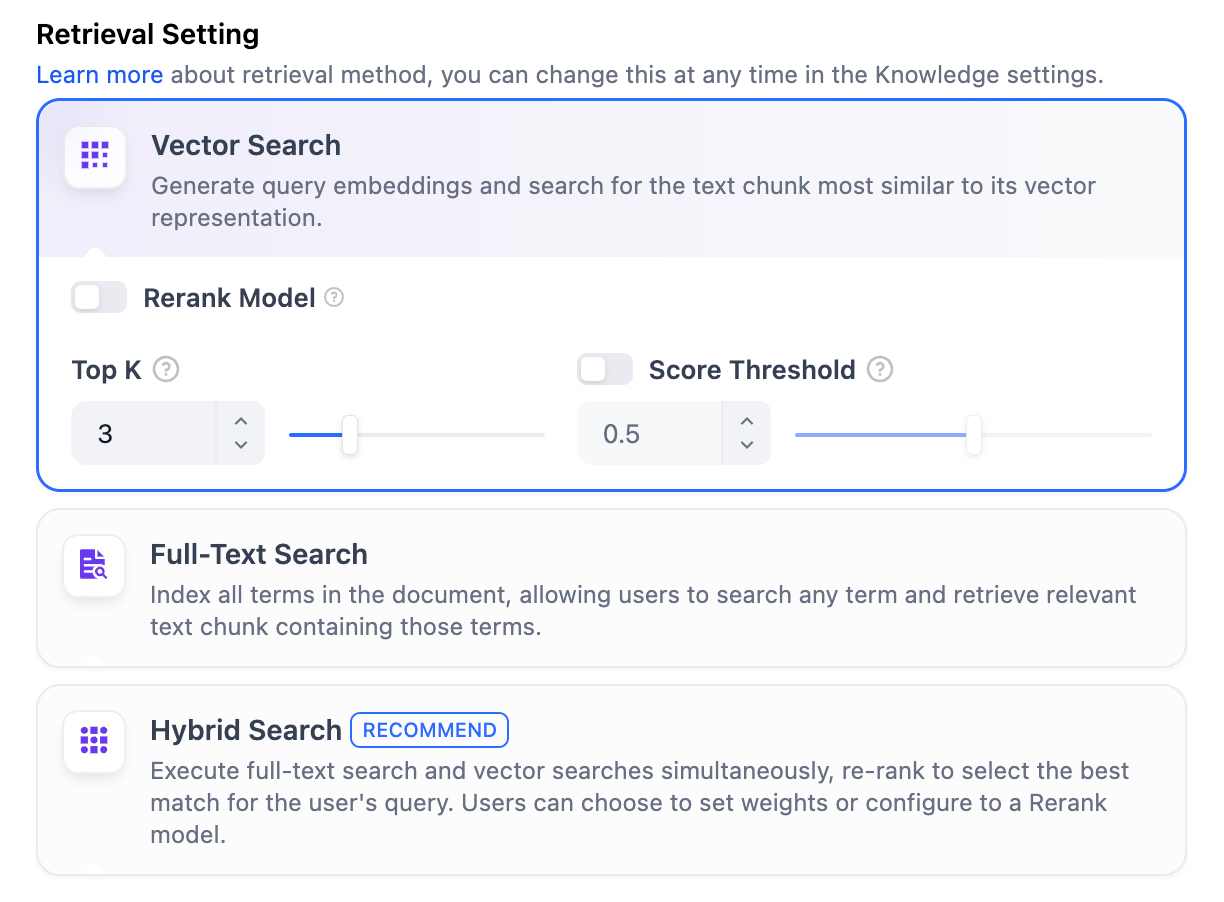

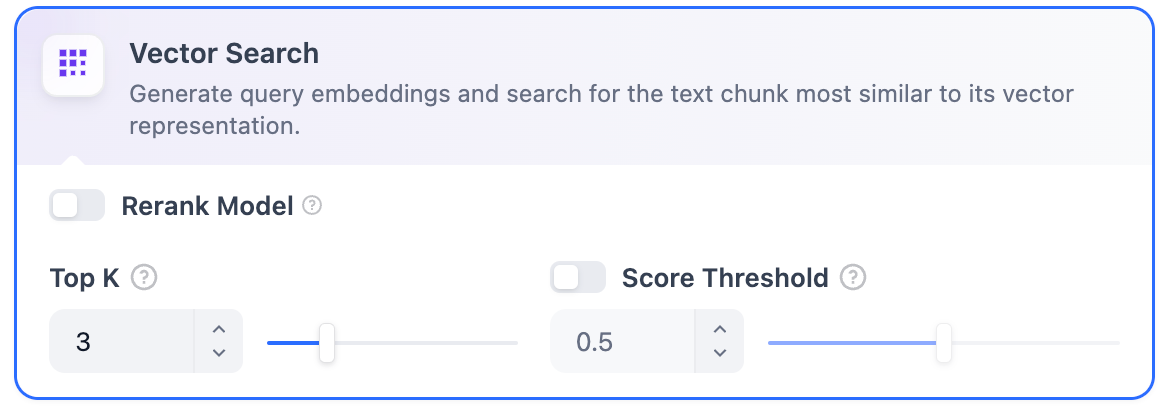

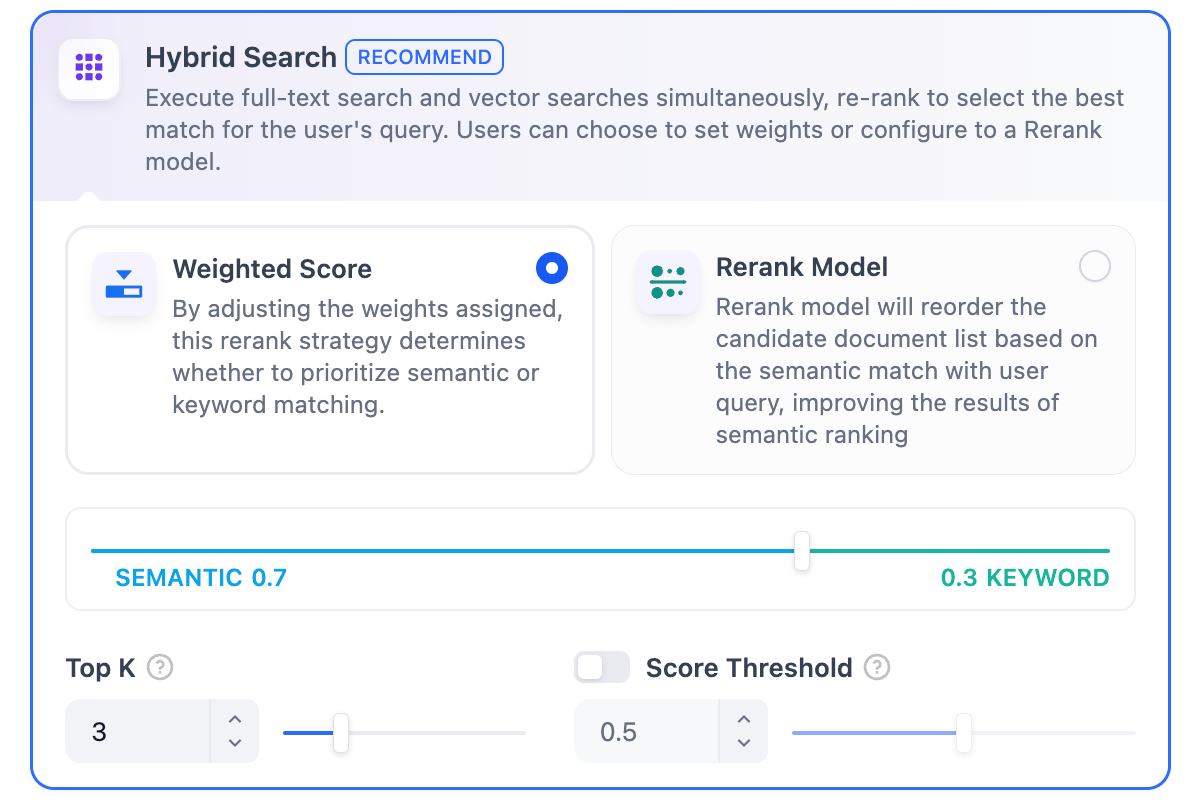

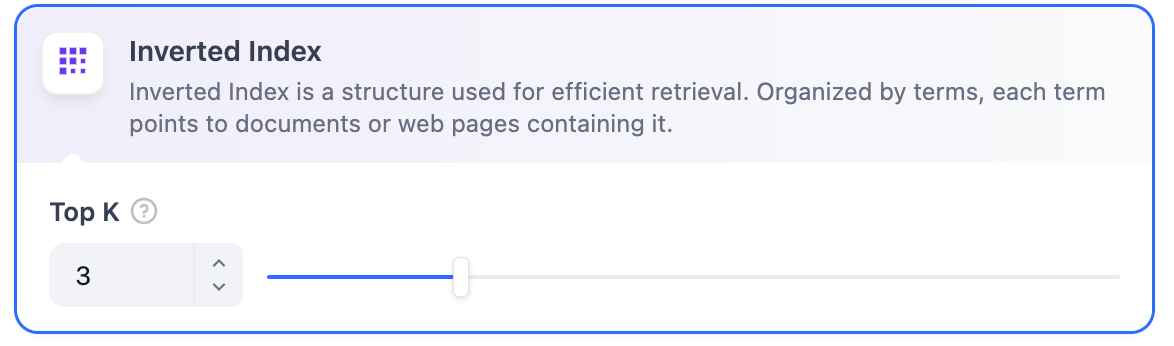

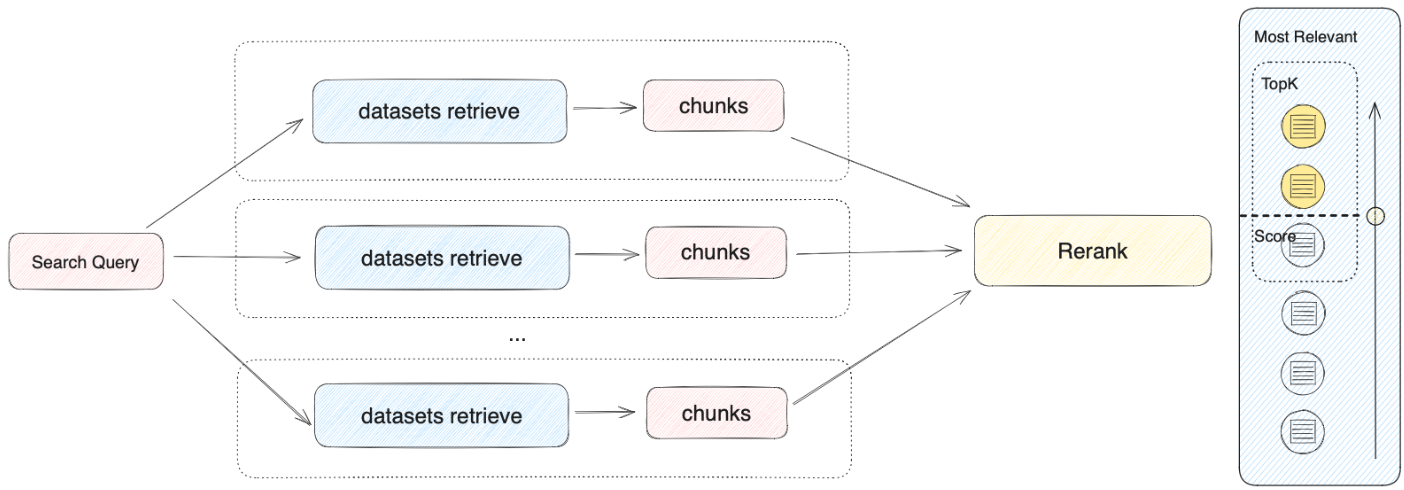

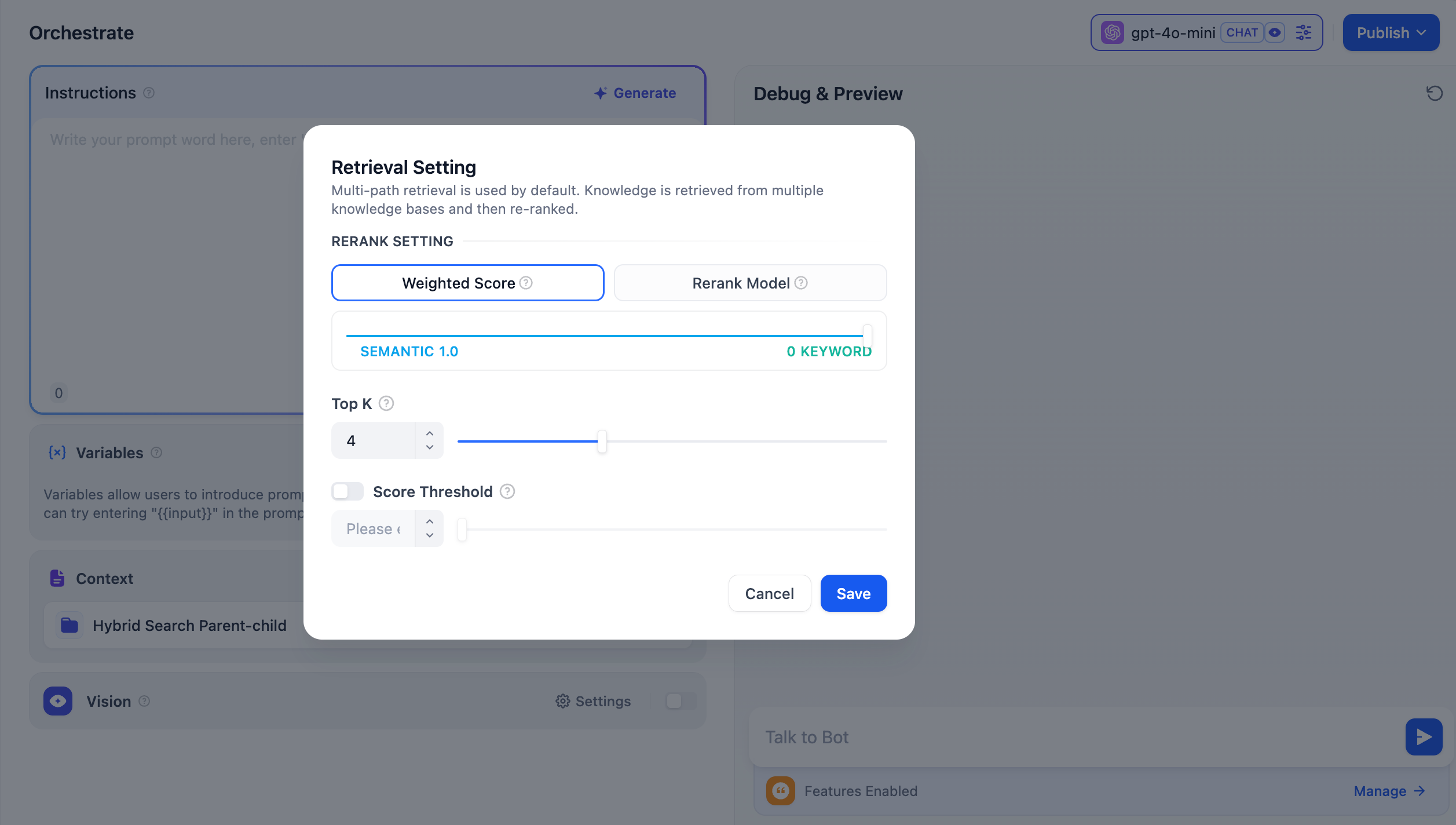

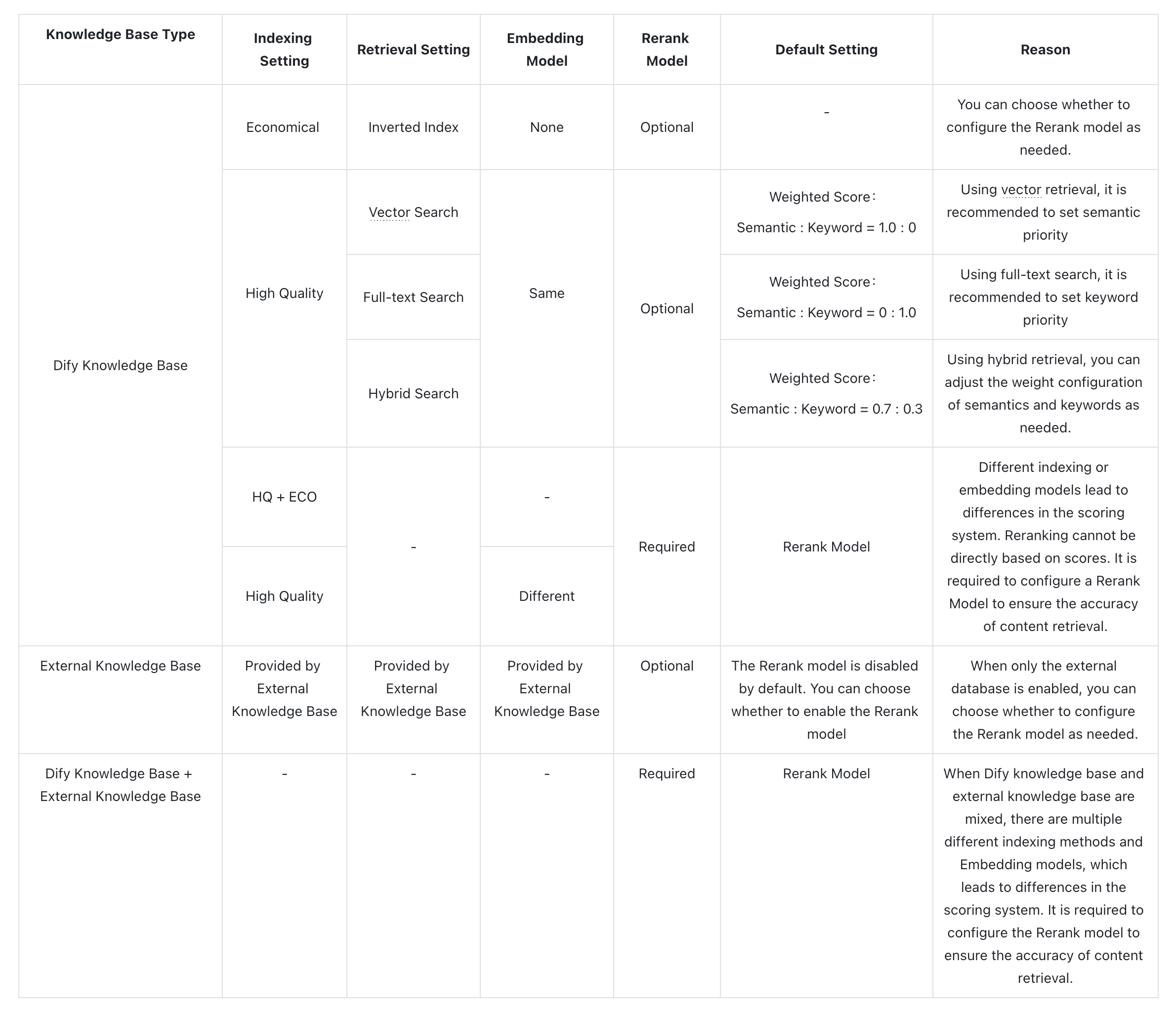

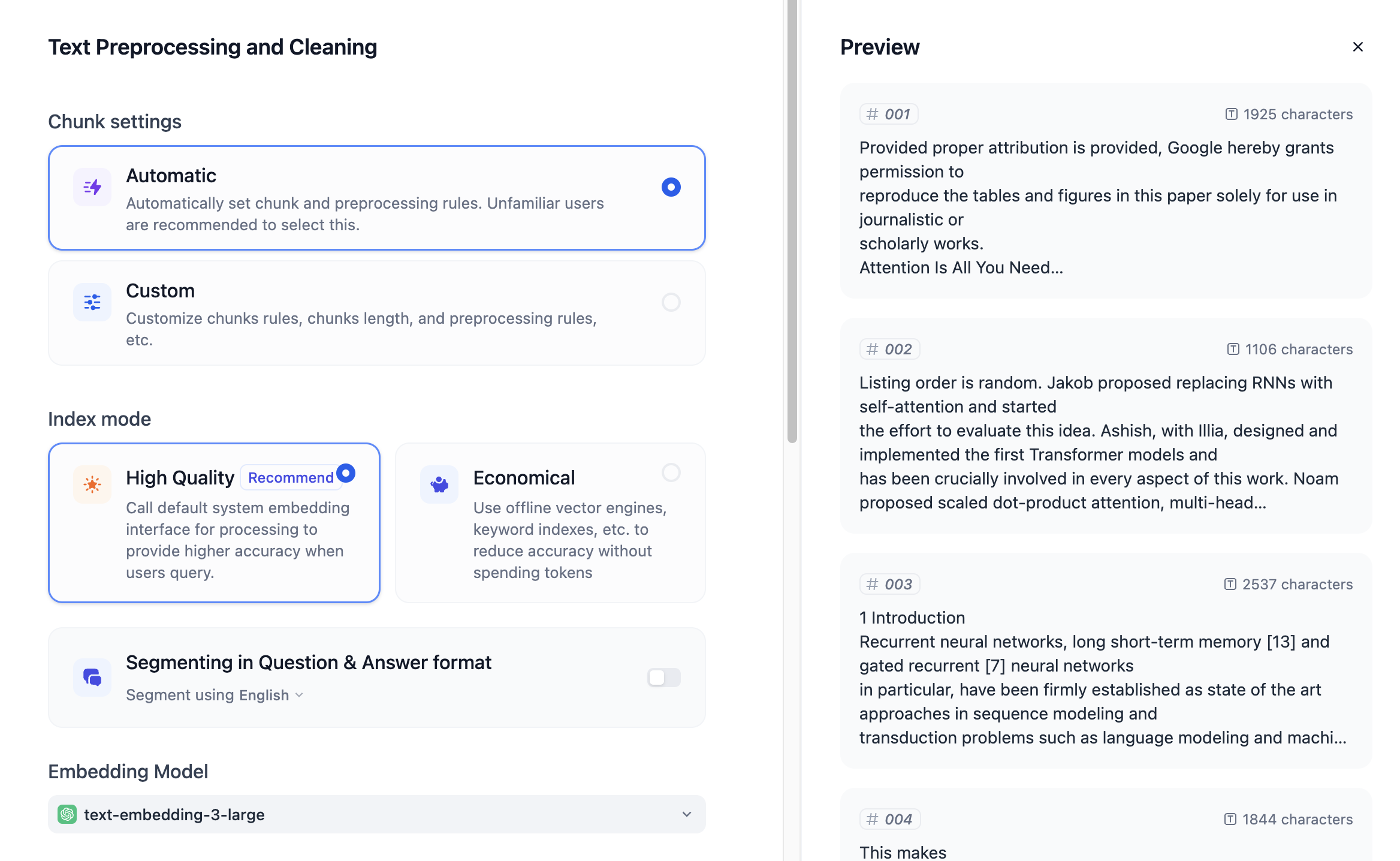

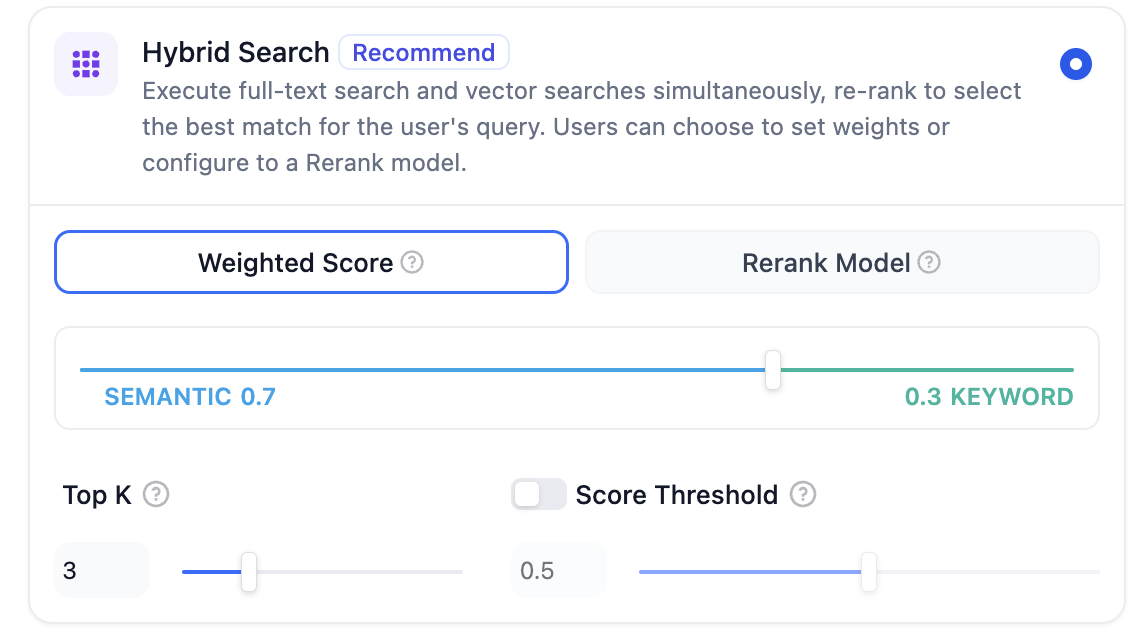

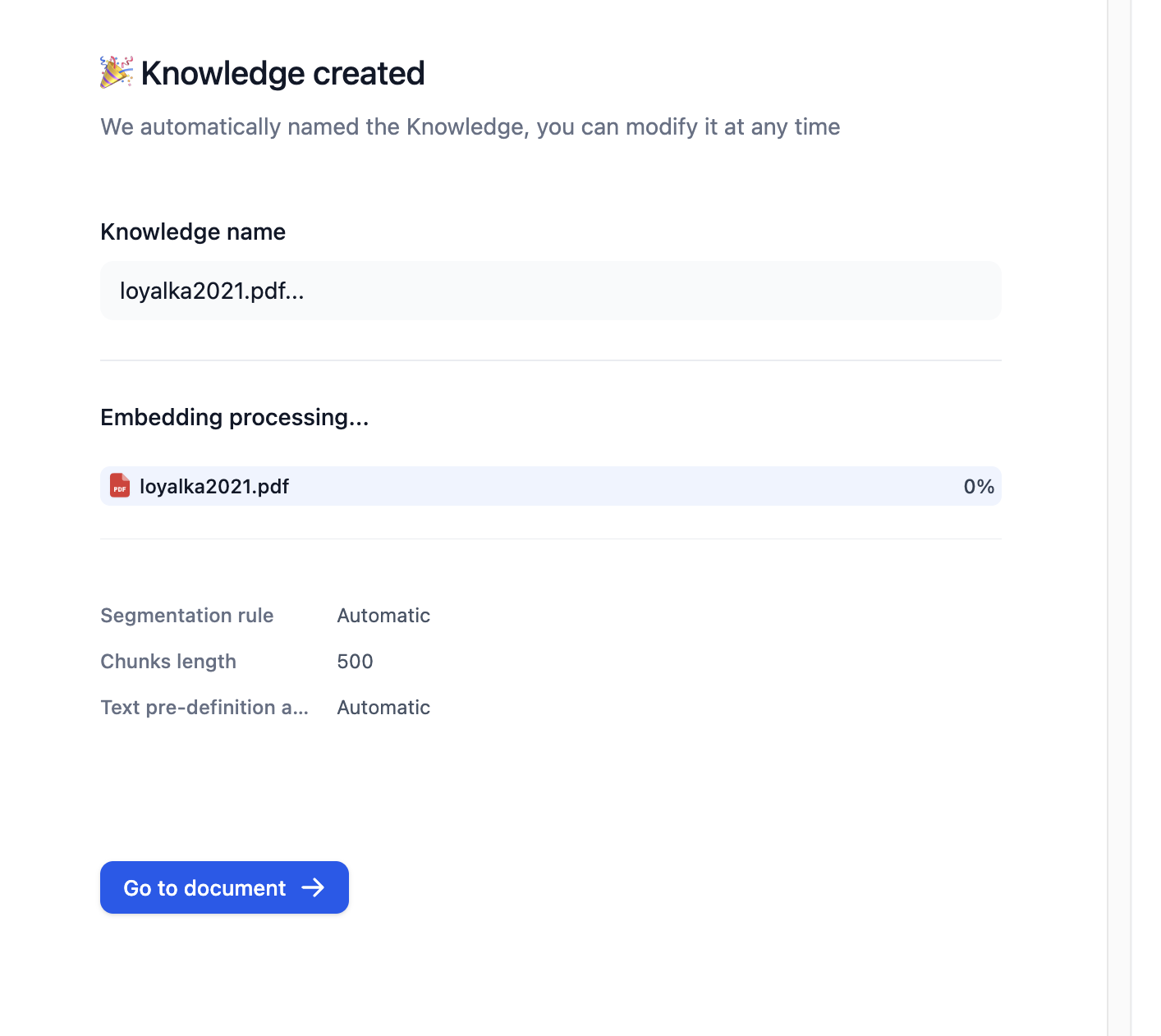

#### Configure App-Level Retrieval Settings

To fine-tune how retrieval results are processed, click **Retrieval Setting**.

There are two layers of retrieval settings—the knowledge base level and the app level.

Think of them as two consecutive filters: the knowledge base settings determine the initial pool of results, and the app settings further rerank the results or narrow down the pool.

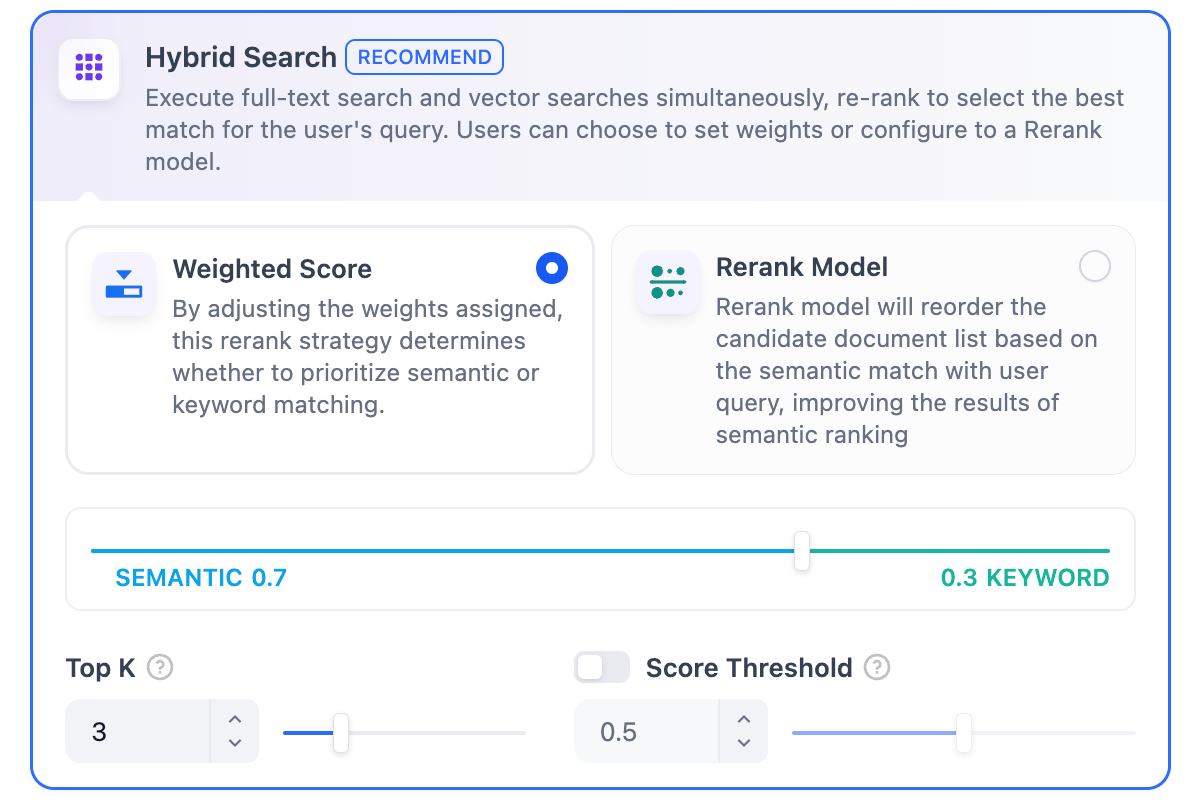

* **Rerank Settings**

* **Weighted Score**

The relative weight between semantic similarity and keyword matching during reranking. Higher semantic weight favors meaning relevance, while higher keyword weight favors exact matches.

Weighted Score is available only when all added knowledge bases are indexed with **High Quality** mode.

* **Rerank Model**

The rerank model to re-score and reorder all the results based on their relevance to the query.

If any multimodal knowledge bases are added, select a multimodal rerank model (marked with a **Vision** tag) as well. Otherwise, retrieved images will be excluded from reranking and the final output.

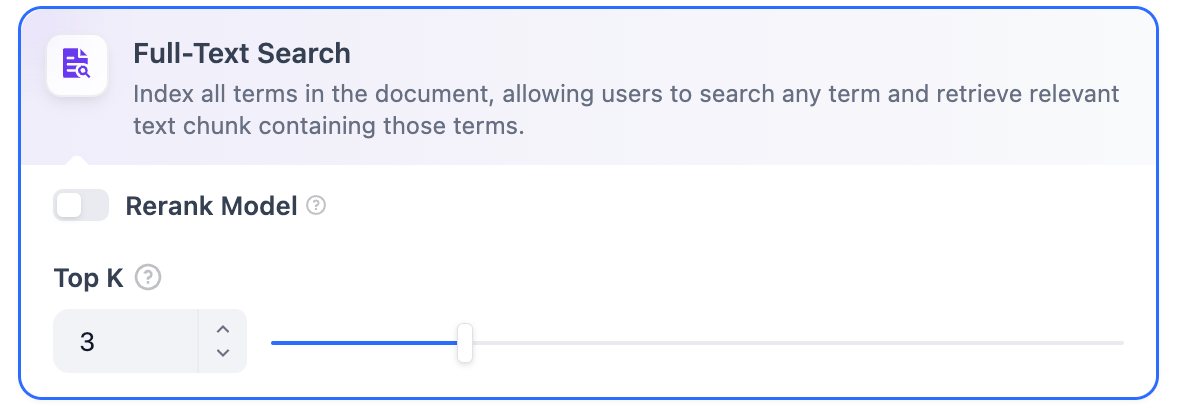

* **Top K**

The maximum number of top results to return after reranking.

When a rerank model is selected, this value will be automatically adjusted based on the model's maximum input capacity (how much text the model can process at once).

* **Score Threshold**

The minimum similarity score for returned results. Results scoring below this threshold are excluded. Use higher thresholds for stricter relevance or lower thresholds to include broader matches.

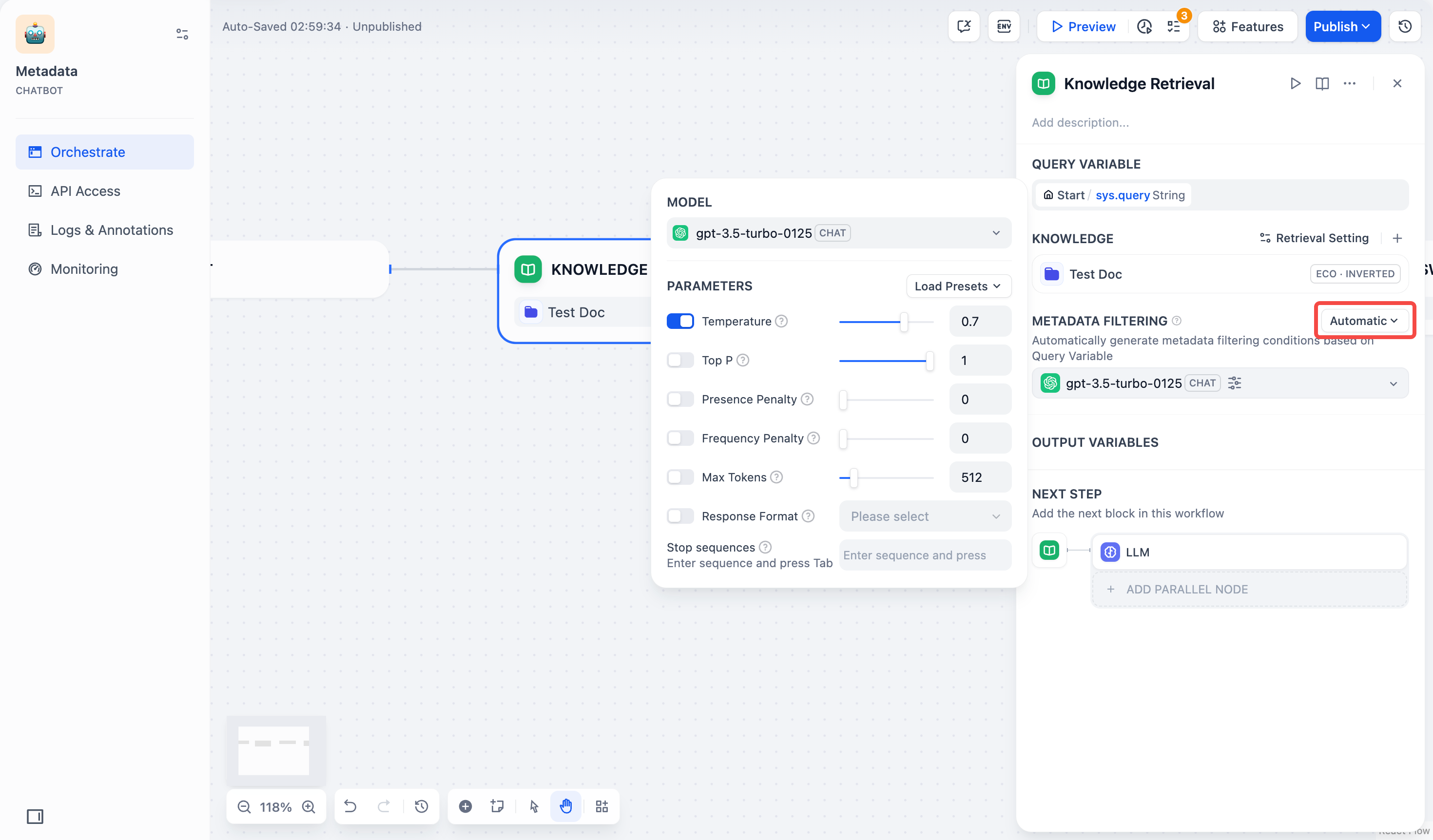

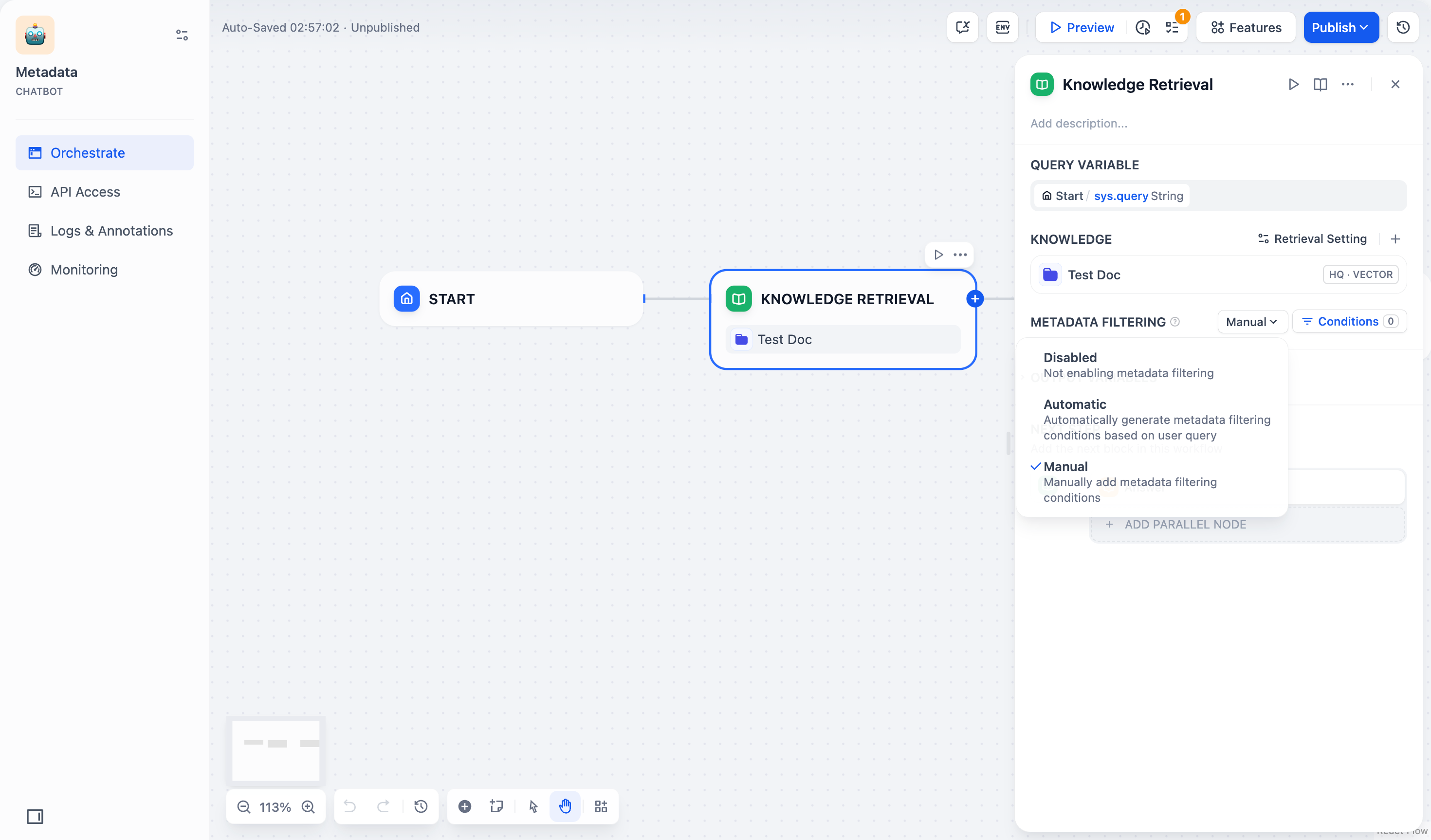

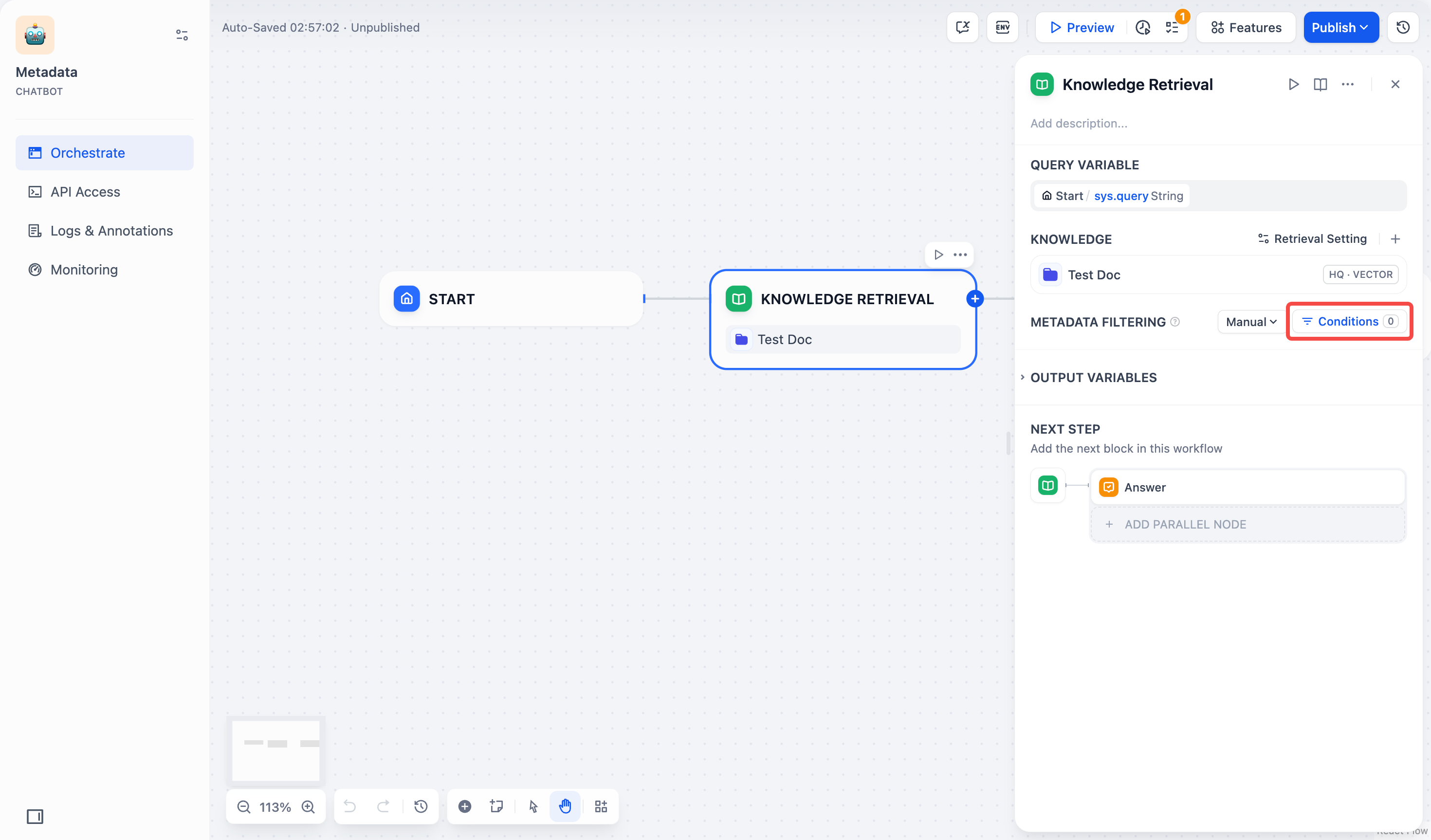

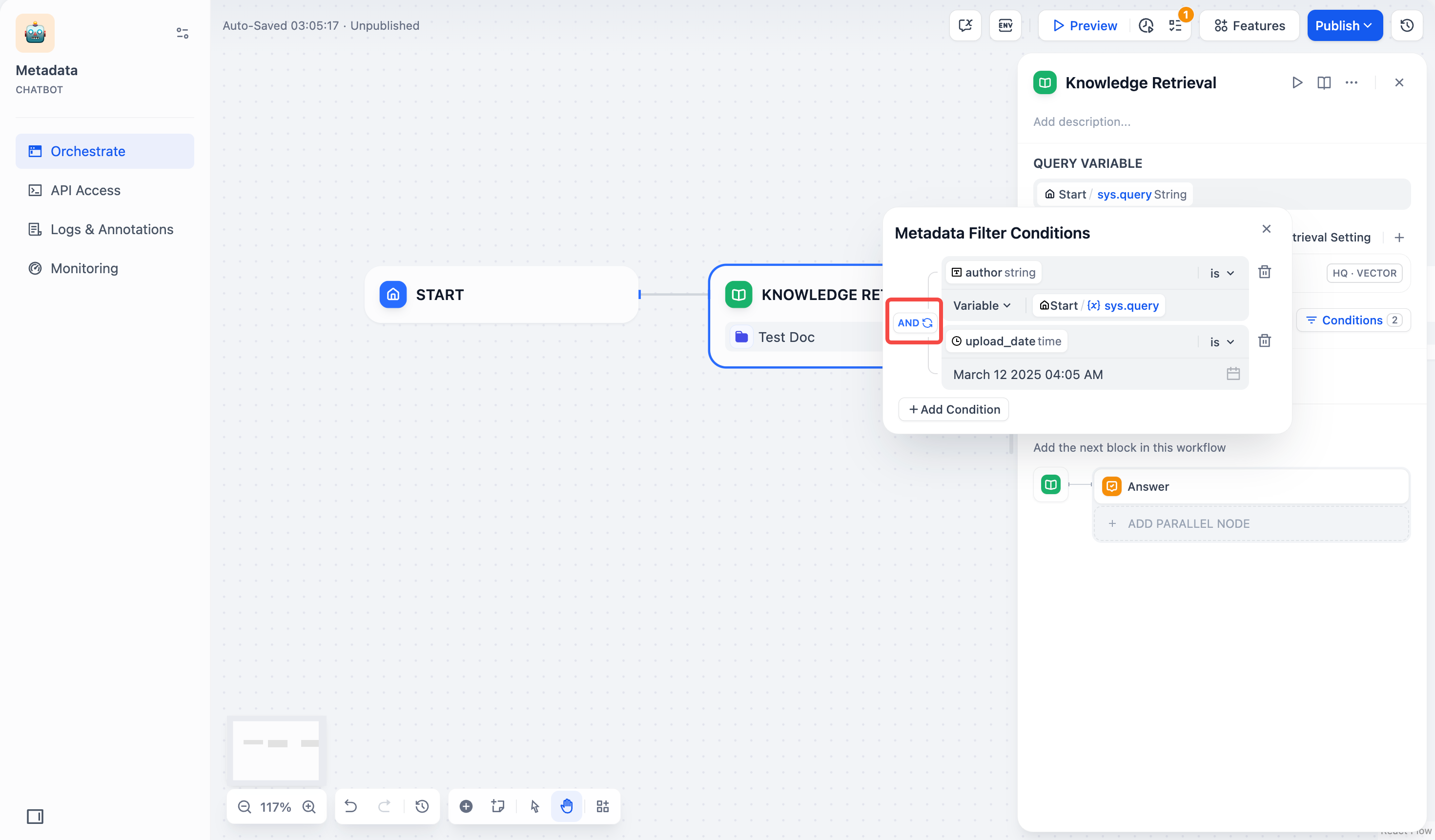

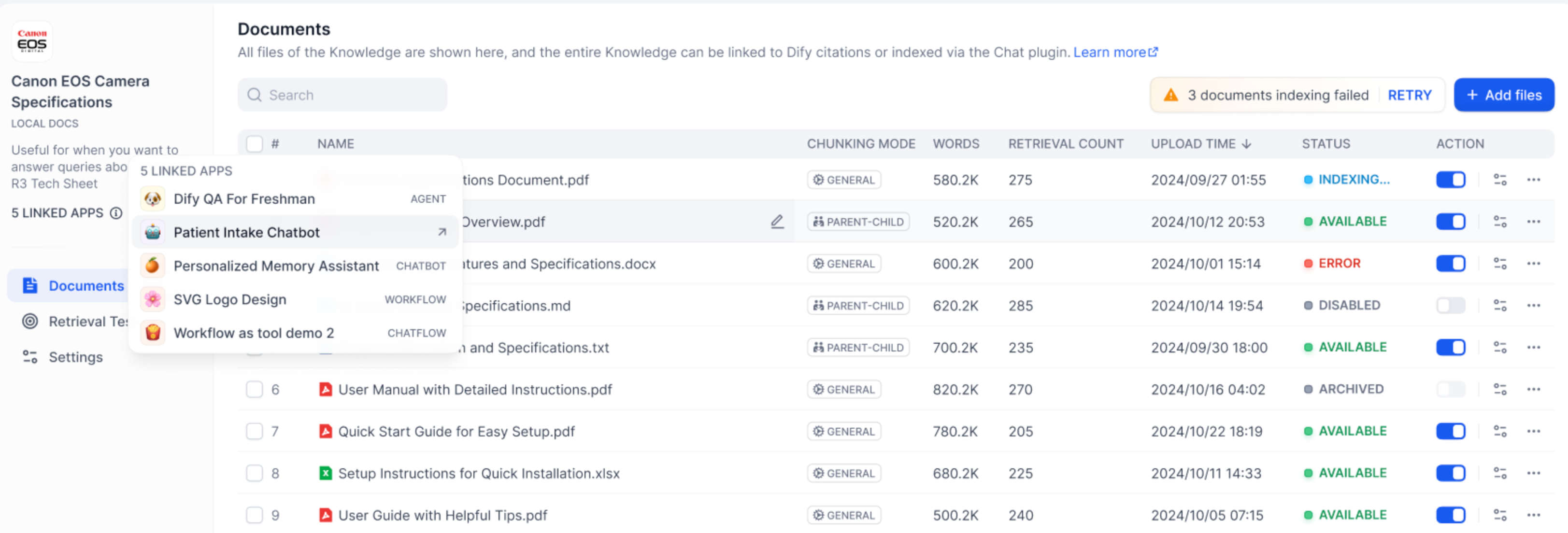

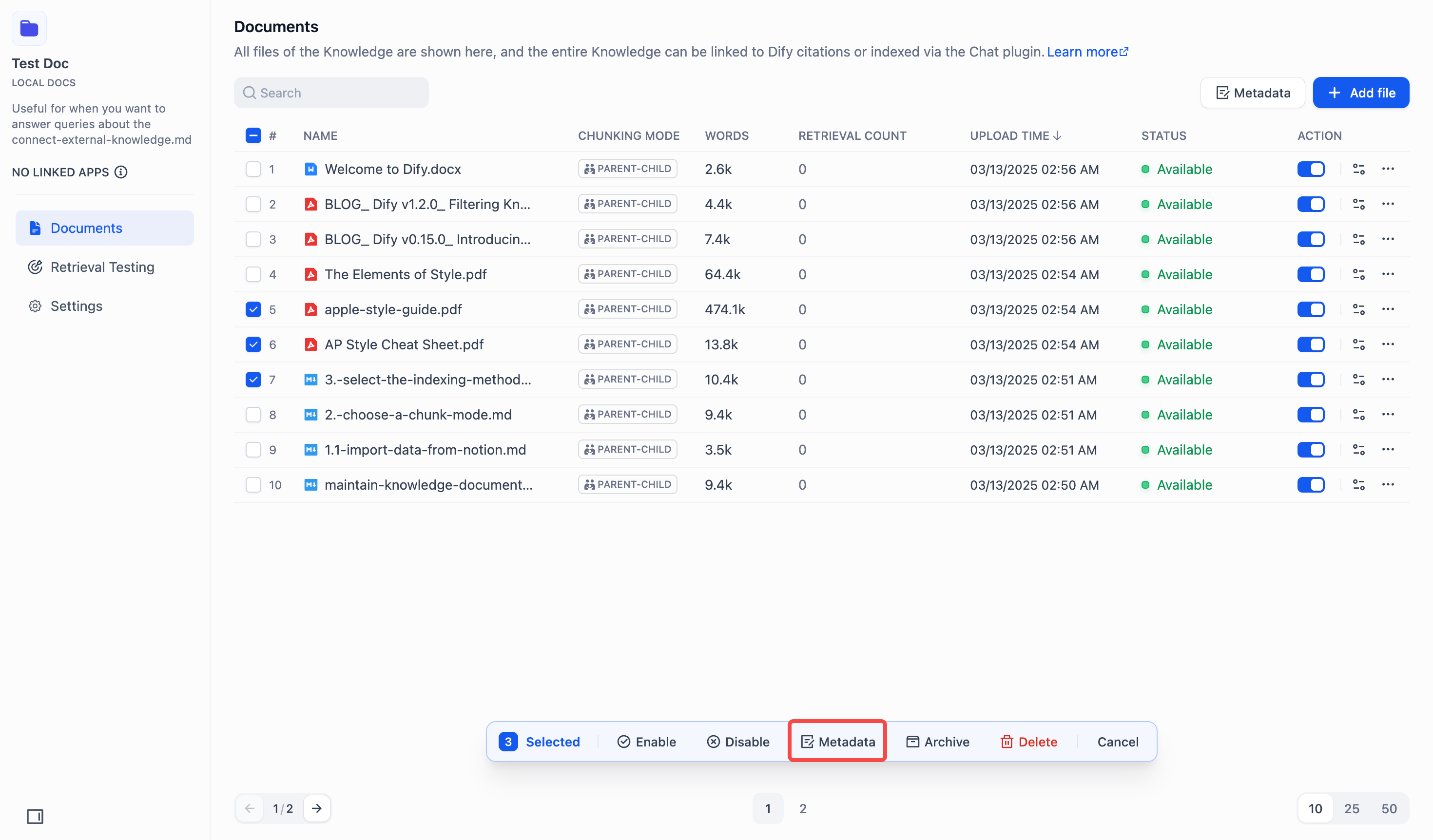

#### Search Within Specific Documents

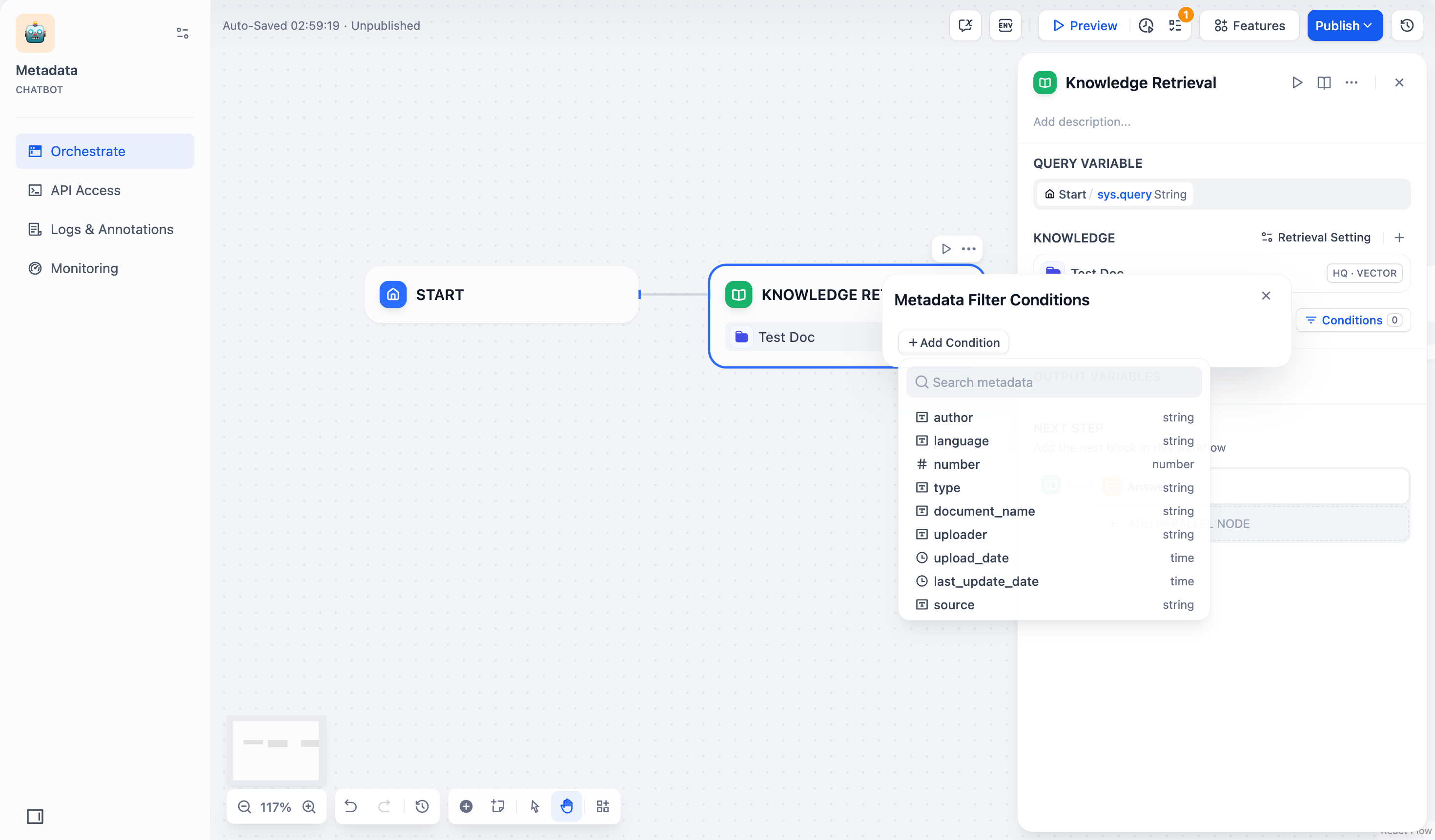

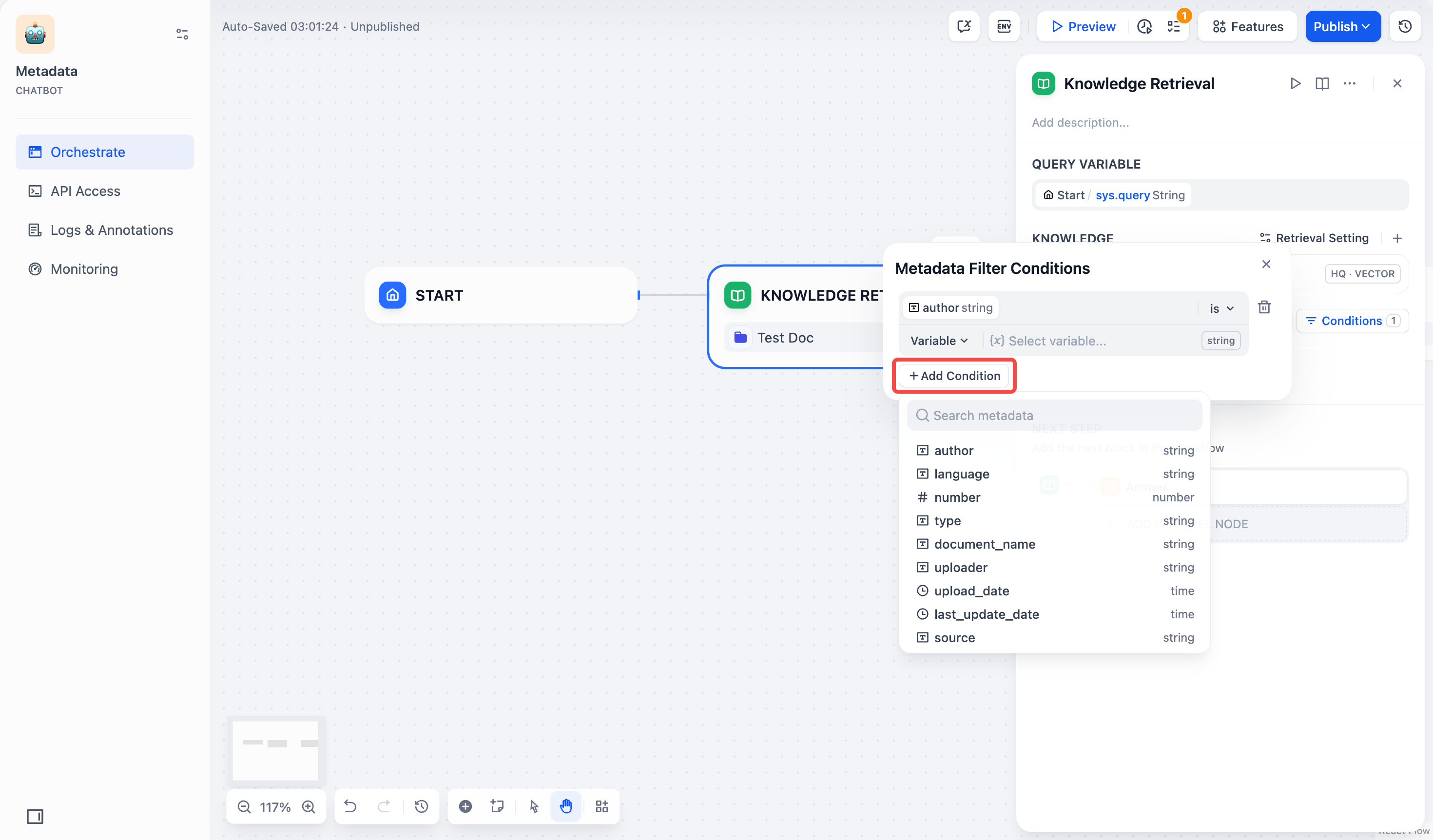

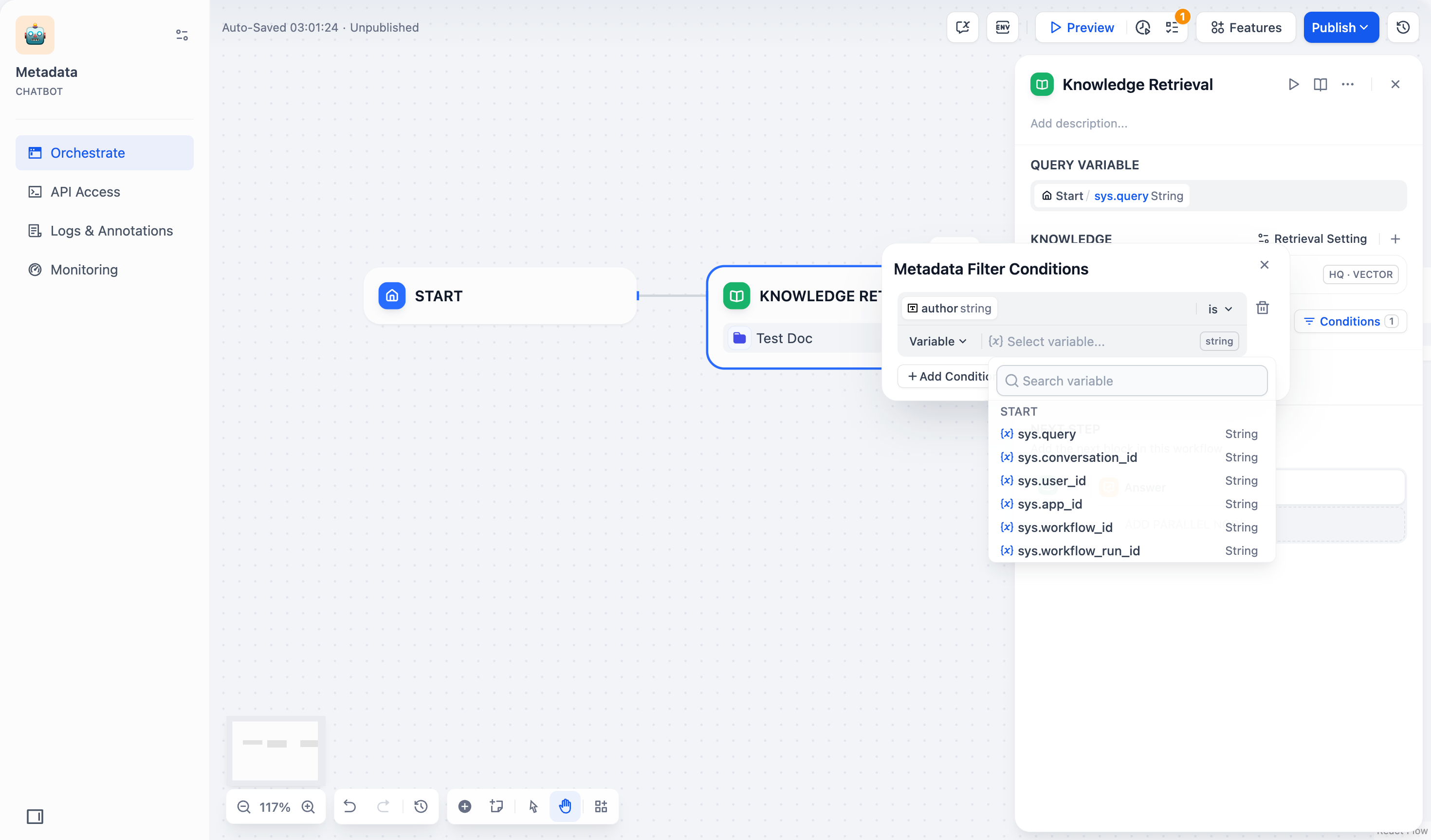

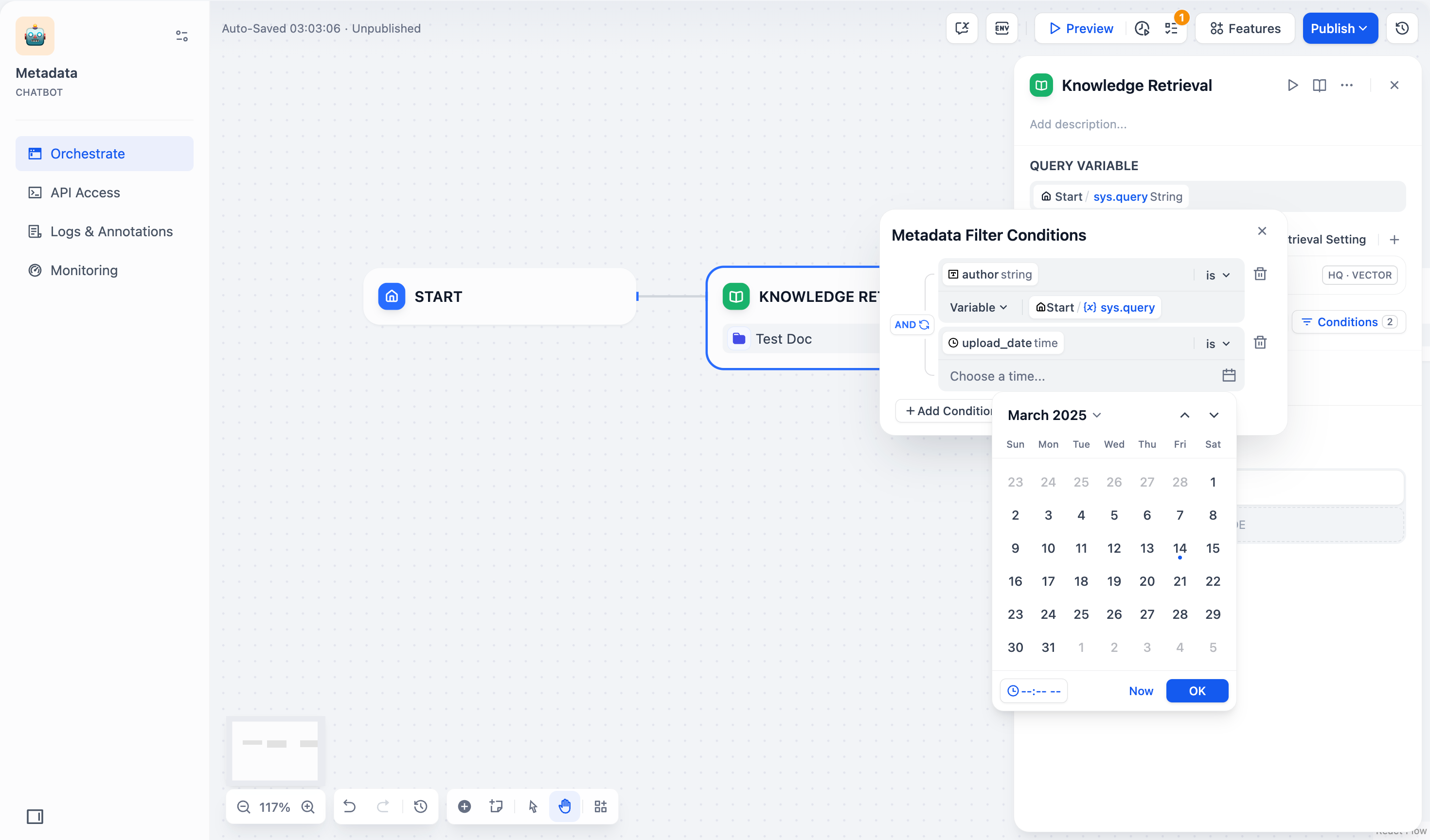

By default, retrieval searches across the entire knowledge base. To restrict retrieval to specific documents, enable manual or automatic metadata filtering.

This improves retrieval precision, especially when your knowledge base is large or contains content for different contexts.

For creating and managing document metadata, see [Metadata](/en/use-dify/knowledge/metadata).

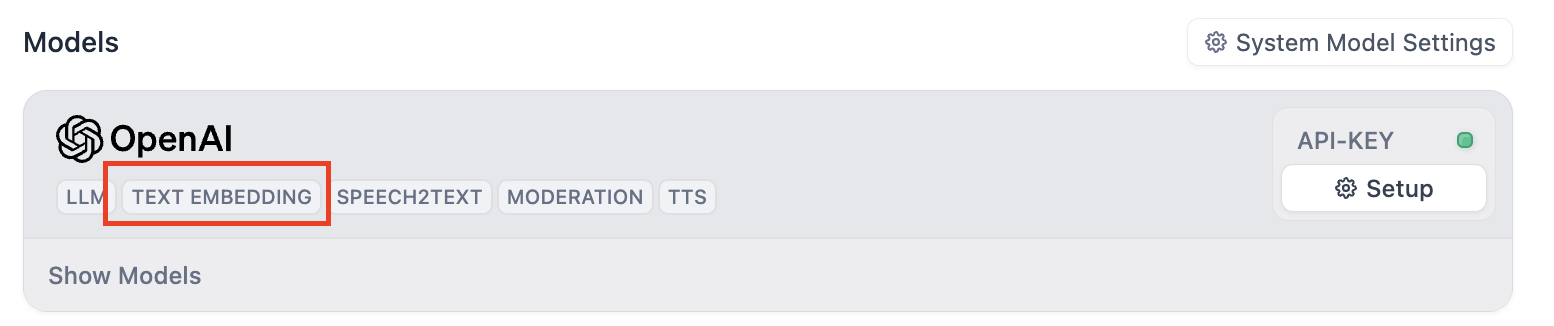

### Process Multimodal Inputs

To allow end users to upload files, select a model with the corresponding multimodal capabilities. The relevant file type toggles—**Vision**, **Audio**, or **Document**—appear once the model supports them, and you can enable each as needed.

You can quickly identify a model's supported modalities by its tags.

You can disable or remove added tools, and modify their configuration. If a tool requires authentication, select an existing credential or create a new one.

To change the default credential, go to **Tools** or **Plugins**.

#### Maximum Iterations

**Maximum Iterations** in **Agent Settings** limits how many times the model can repeat its reasoning-and-action cycle (think, call a tool, process the result) for a single request.

Increase this value for complex, multi-step tasks that require multiple tool calls. Higher values increase latency and token costs.

### Ground Responses in Your Own Data

To ground the model's responses in your own data rather than general knowledge, add a knowledge base.

The model evaluates each user query against your knowledge base descriptions and decides whether retrieval is needed—you don't need to mention knowledge bases in your prompt.

**The more detailed your knowledge base description, the better the model can determine relevance**, leading to more accurate and targeted retrieval.

#### Configure App-Level Retrieval Settings

To fine-tune how retrieval results are processed, click **Retrieval Setting**.

There are two layers of retrieval settings—the knowledge base level and the app level.

Think of them as two consecutive filters: the knowledge base settings determine the initial pool of results, and the app settings further rerank the results or narrow down the pool.

* **Rerank Settings**

* **Weighted Score**

The relative weight between semantic similarity and keyword matching during reranking. Higher semantic weight favors meaning relevance, while higher keyword weight favors exact matches.

Weighted Score is available only when all added knowledge bases are indexed with **High Quality** mode.

* **Rerank Model**

The rerank model to re-score and reorder all the results based on their relevance to the query.

If any multimodal knowledge bases are added, select a multimodal rerank model (marked with a **Vision** tag) as well. Otherwise, retrieved images will be excluded from reranking and the final output.

* **Top K**

The maximum number of top results to return after reranking.

When a rerank model is selected, this value will be automatically adjusted based on the model's maximum input capacity (how much text the model can process at once).

* **Score Threshold**

The minimum similarity score for returned results. Results scoring below this threshold are excluded. Use higher thresholds for stricter relevance or lower thresholds to include broader matches.

#### Search Within Specific Documents

By default, retrieval searches across the entire knowledge base. To restrict retrieval to specific documents, enable manual or automatic metadata filtering.

This improves retrieval precision, especially when your knowledge base is large or contains content for different contexts.

For creating and managing document metadata, see [Metadata](/en/use-dify/knowledge/metadata).

### Process Multimodal Inputs

To allow end users to upload files, select a model with the corresponding multimodal capabilities. The relevant file type toggles—**Vision**, **Audio**, or **Document**—appear once the model supports them, and you can enable each as needed.

You can quickly identify a model's supported modalities by its tags.

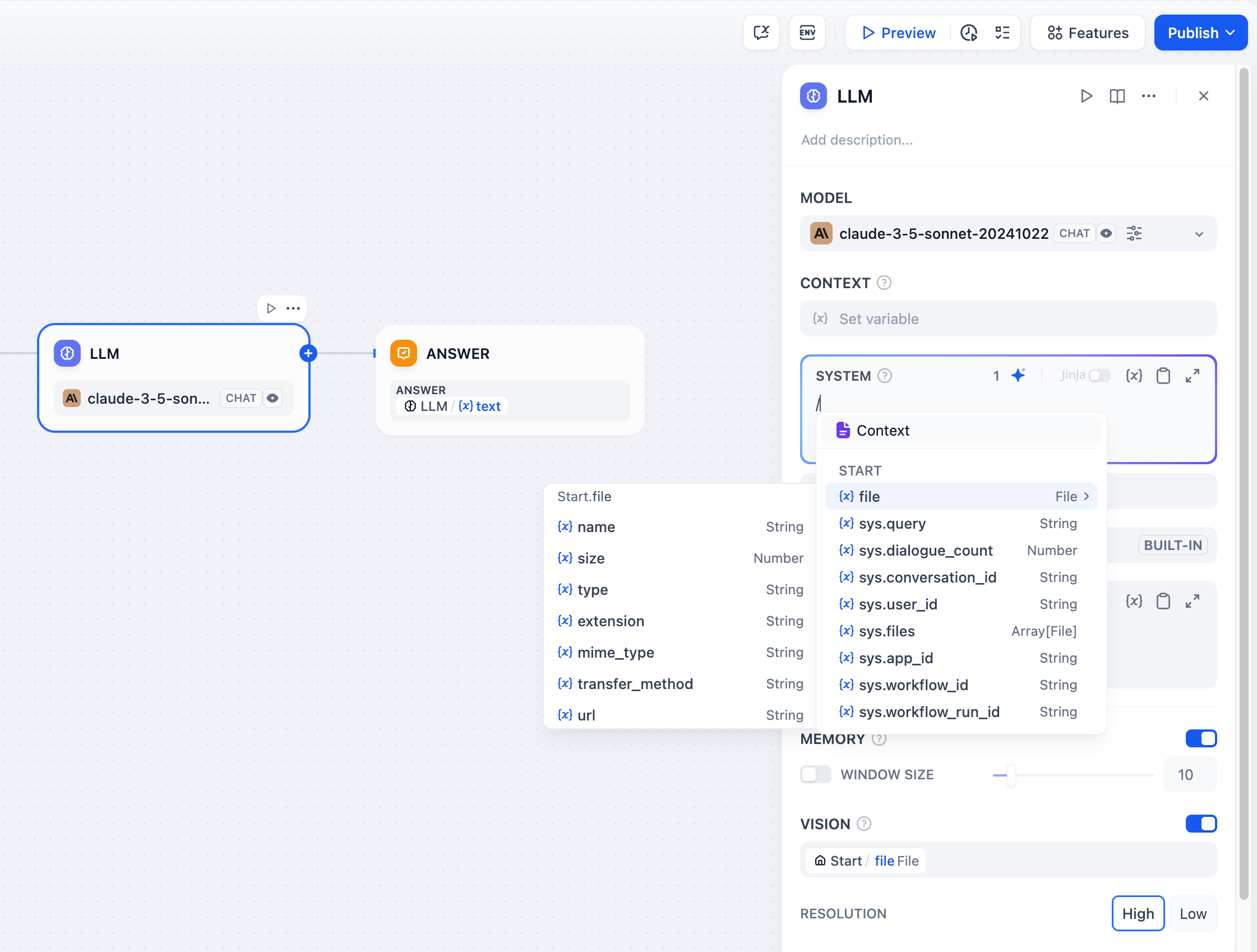

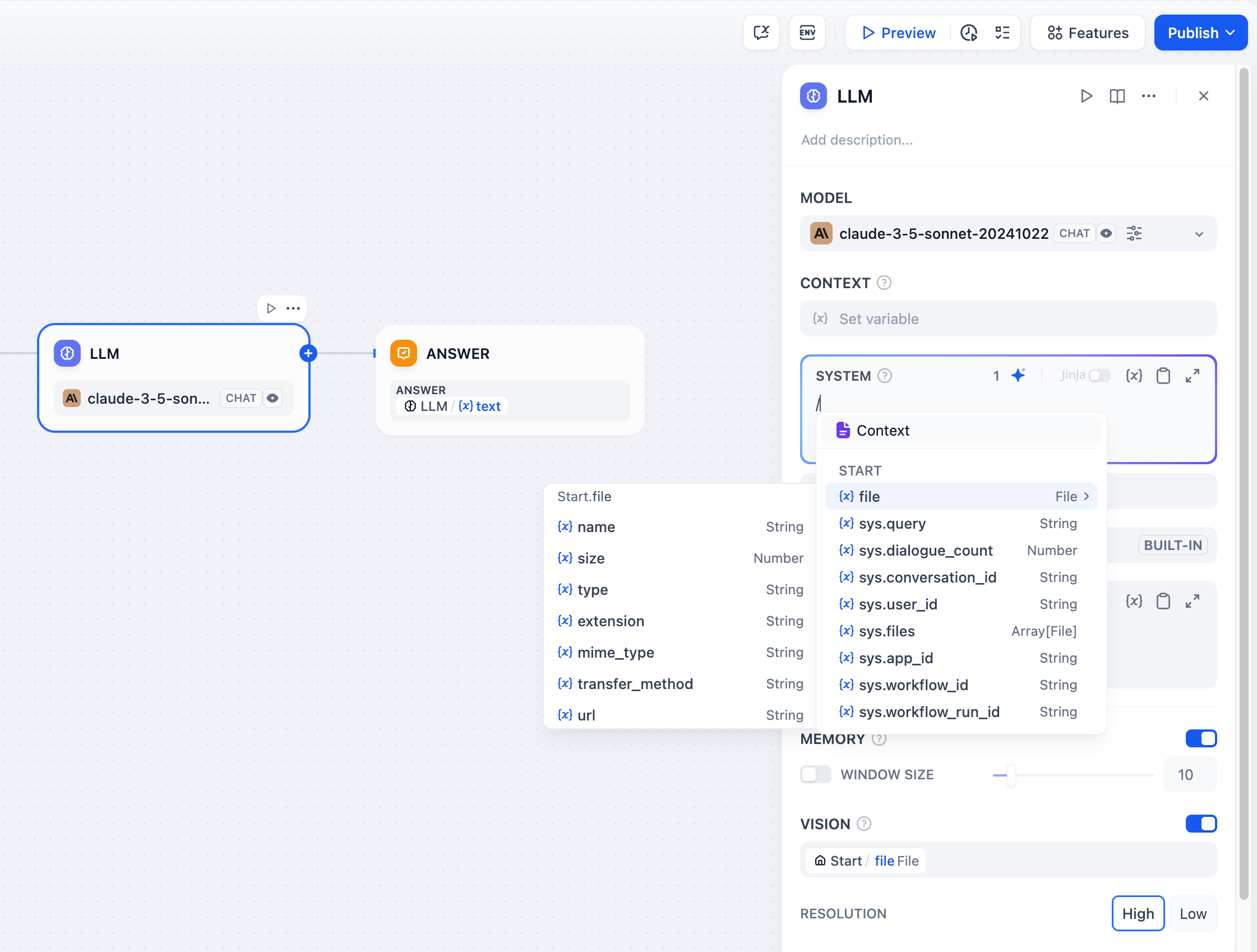

![Model Tags]() Click **Settings** under **Vision** to configure how files are accepted and processed. Upload settings apply across all enabled file types.

* **Resolution**: Controls the detail level for **image** processing only.

* **High**: Better accuracy for complex images but uses more tokens

* **Low**: Faster processing with fewer tokens for simple images

* **Upload Method**: Choose whether users can upload from their device, paste a URL, or both.

* **Upload Limit**: The maximum number of files a user can upload per message.

For self-hosted deployments, you can adjust file size limits via the following environment variables:

* `UPLOAD_IMAGE_FILE_SIZE_LIMIT` (default: 10 MB)

* `UPLOAD_FILE_SIZE_LIMIT` (default: 15 MB)

* `UPLOAD_AUDIO_FILE_SIZE_LIMIT` (default: 50 MB)

See [Environment Variables](/en/self-host/configuration/environments) for details.

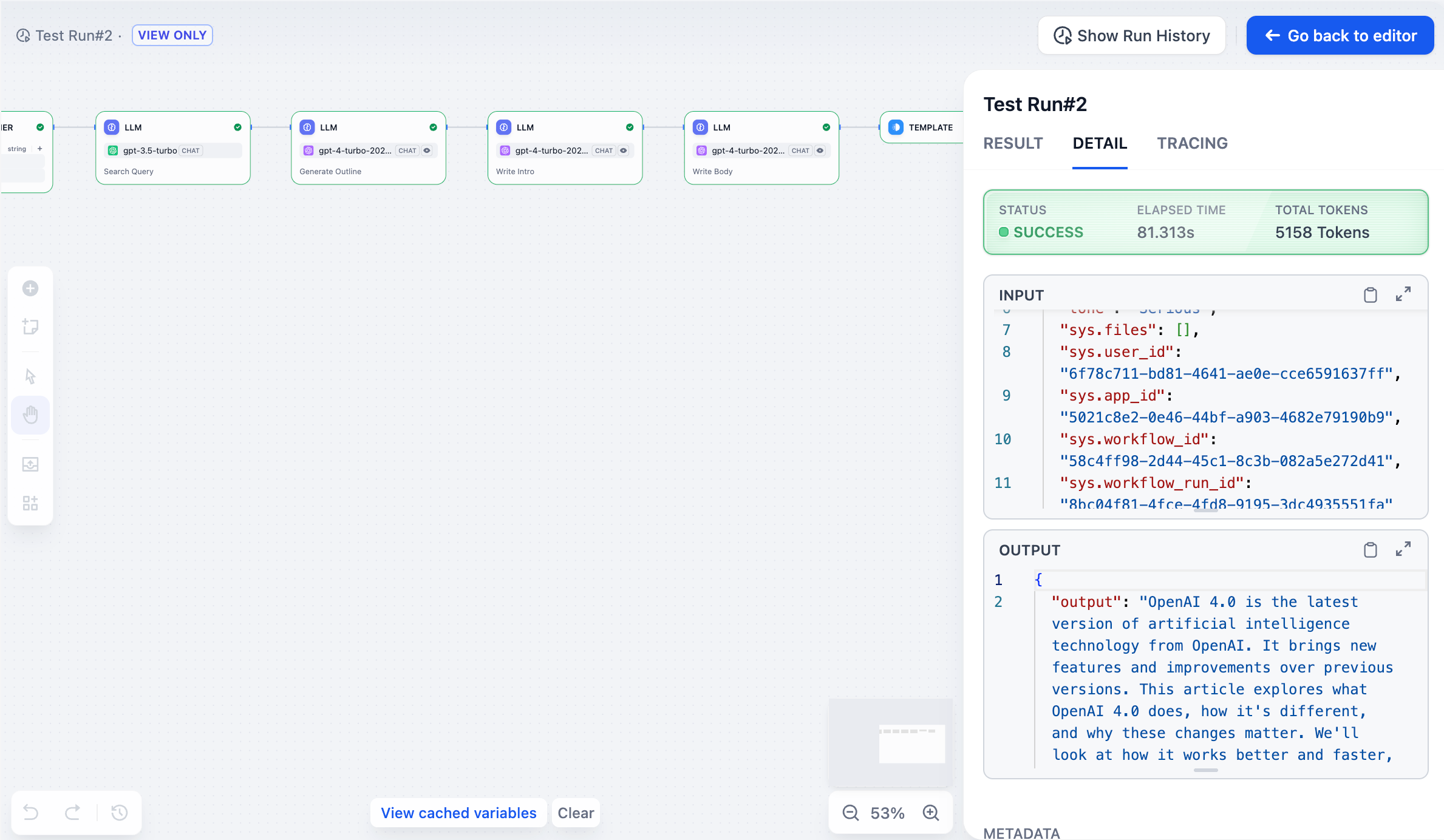

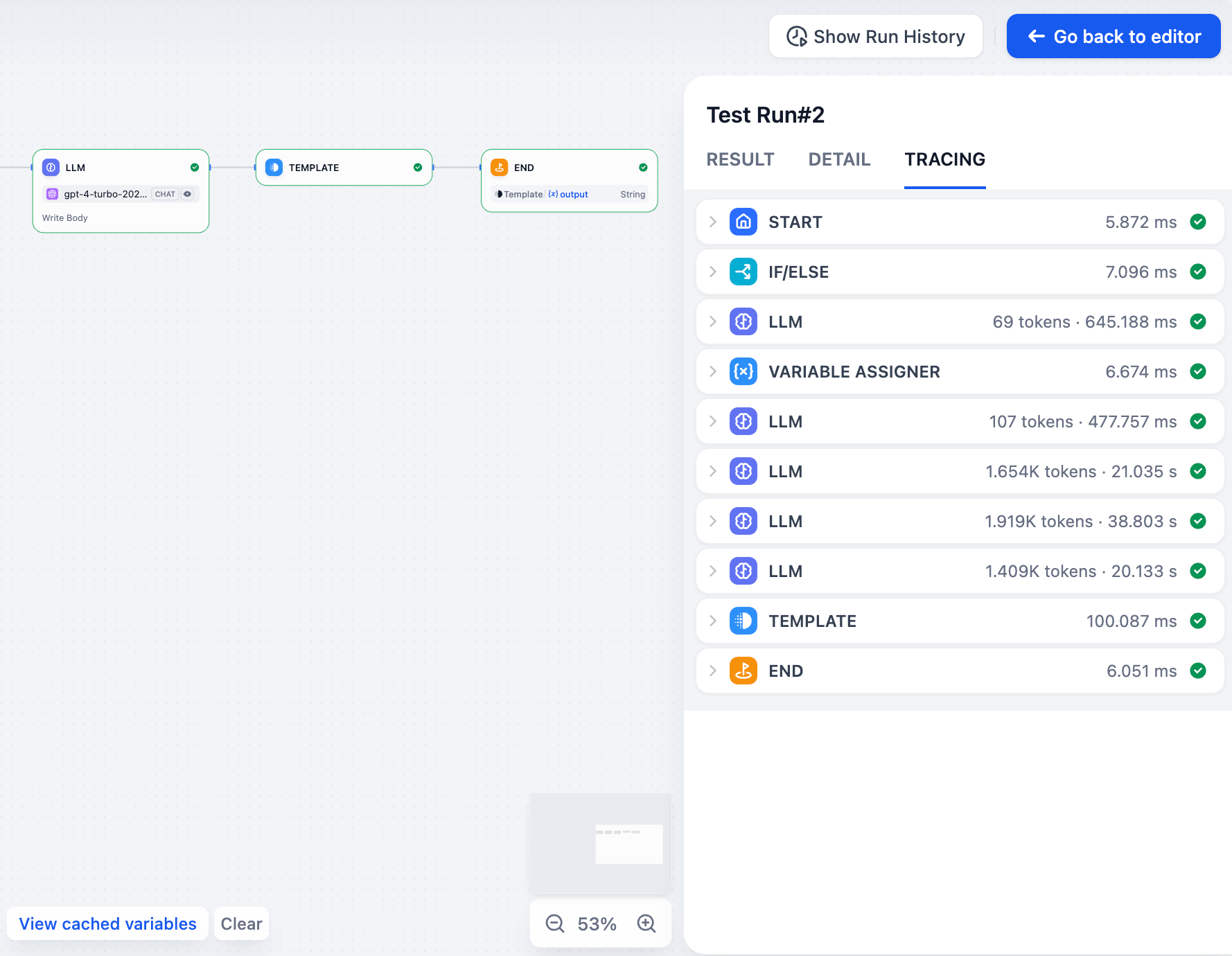

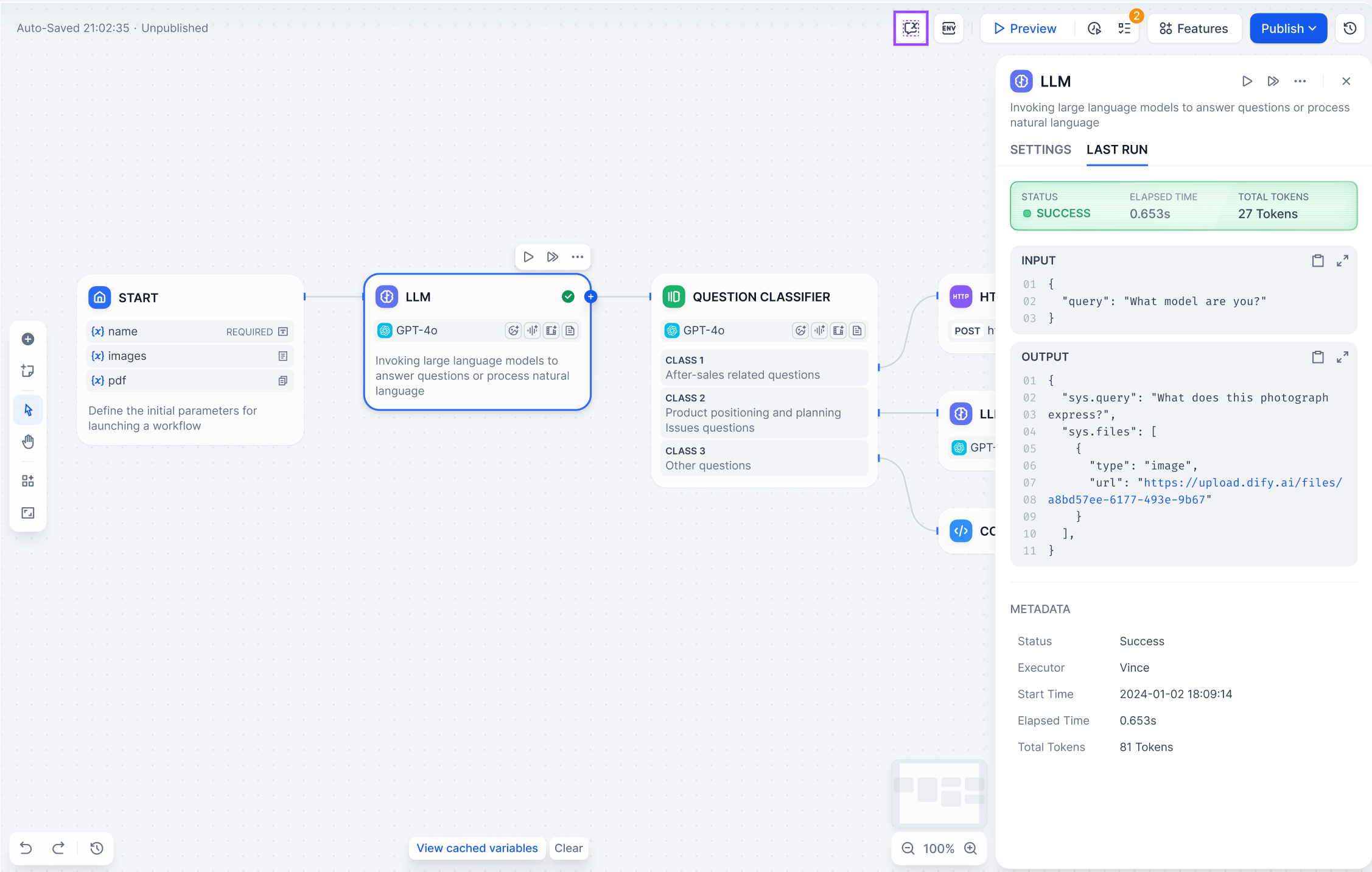

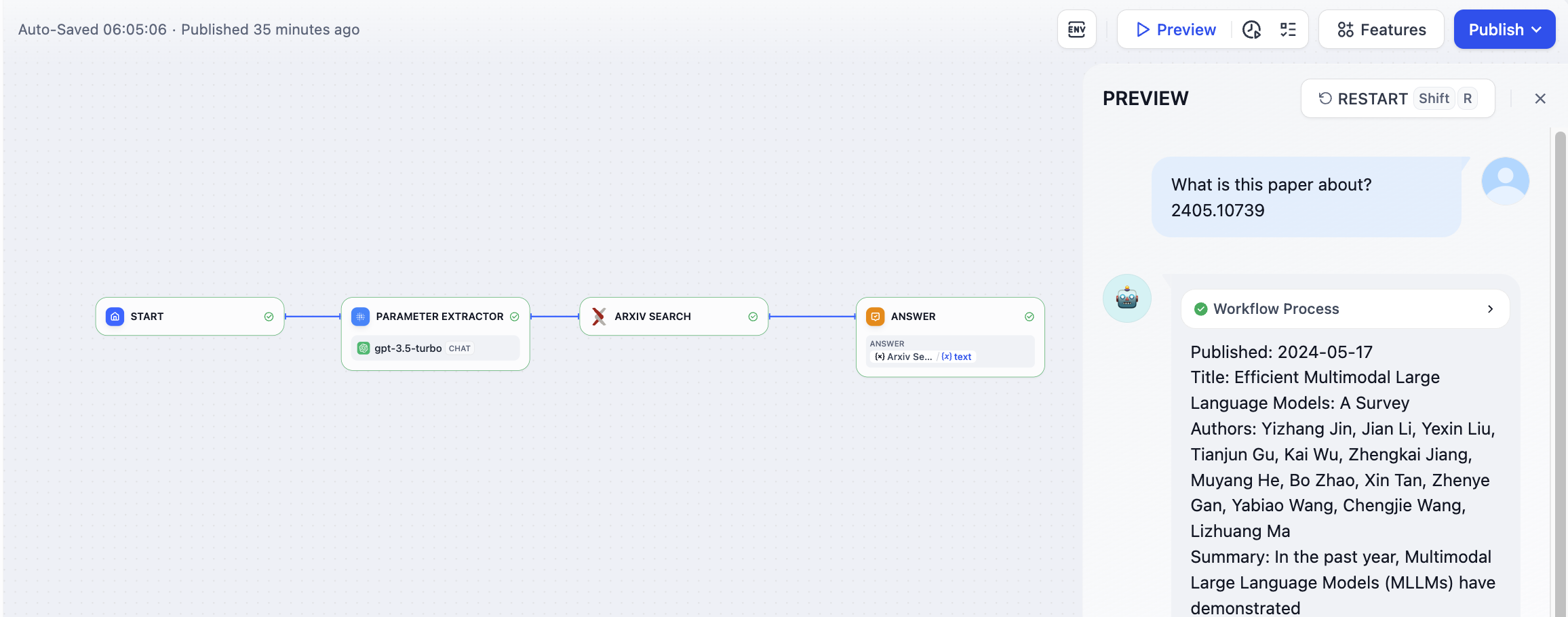

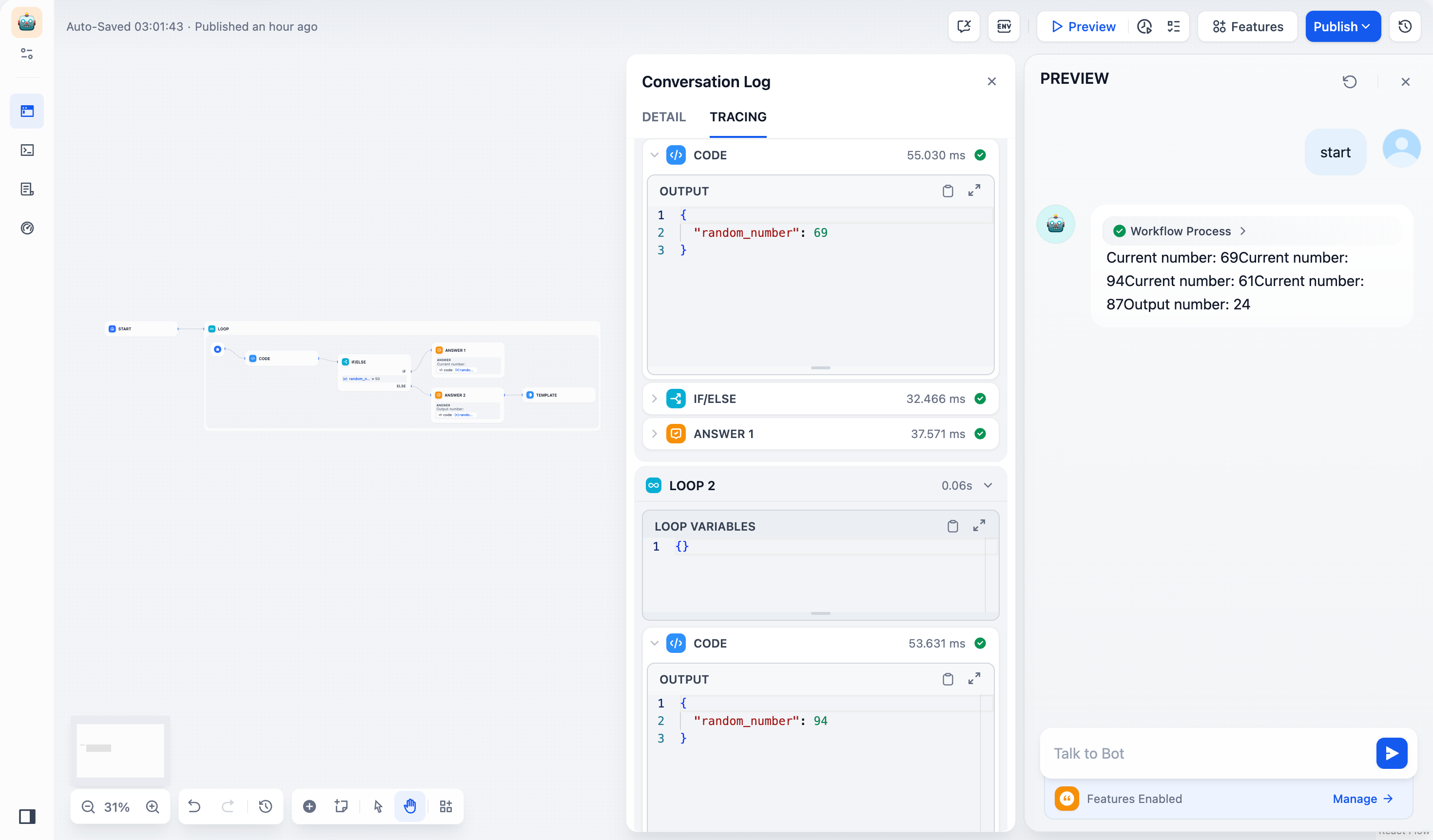

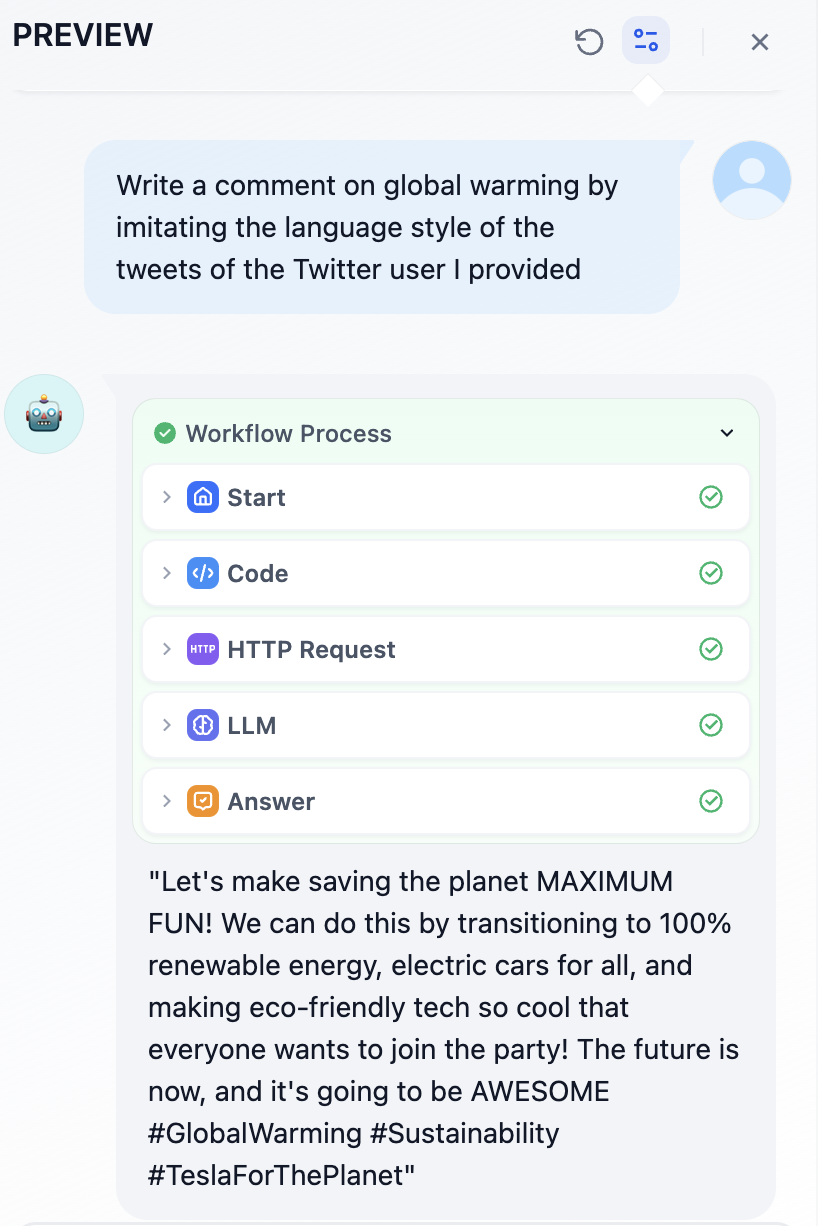

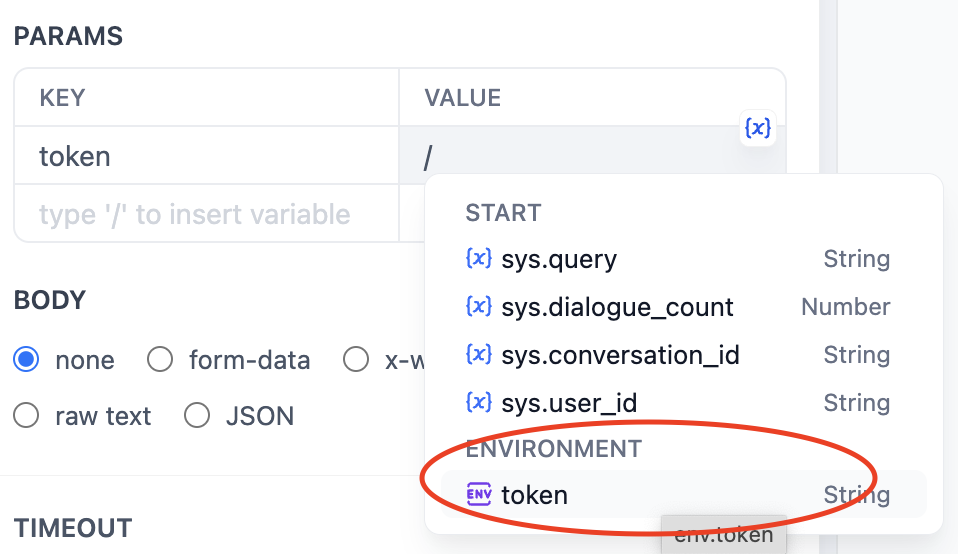

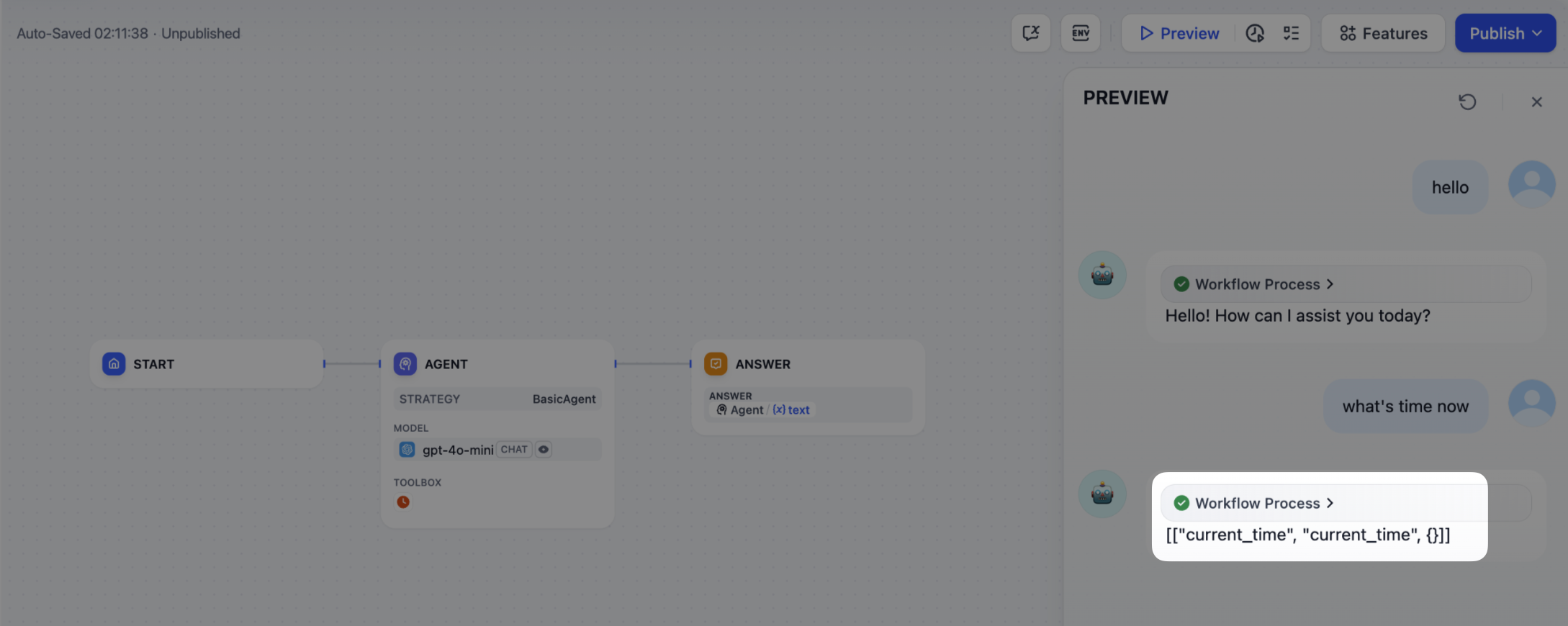

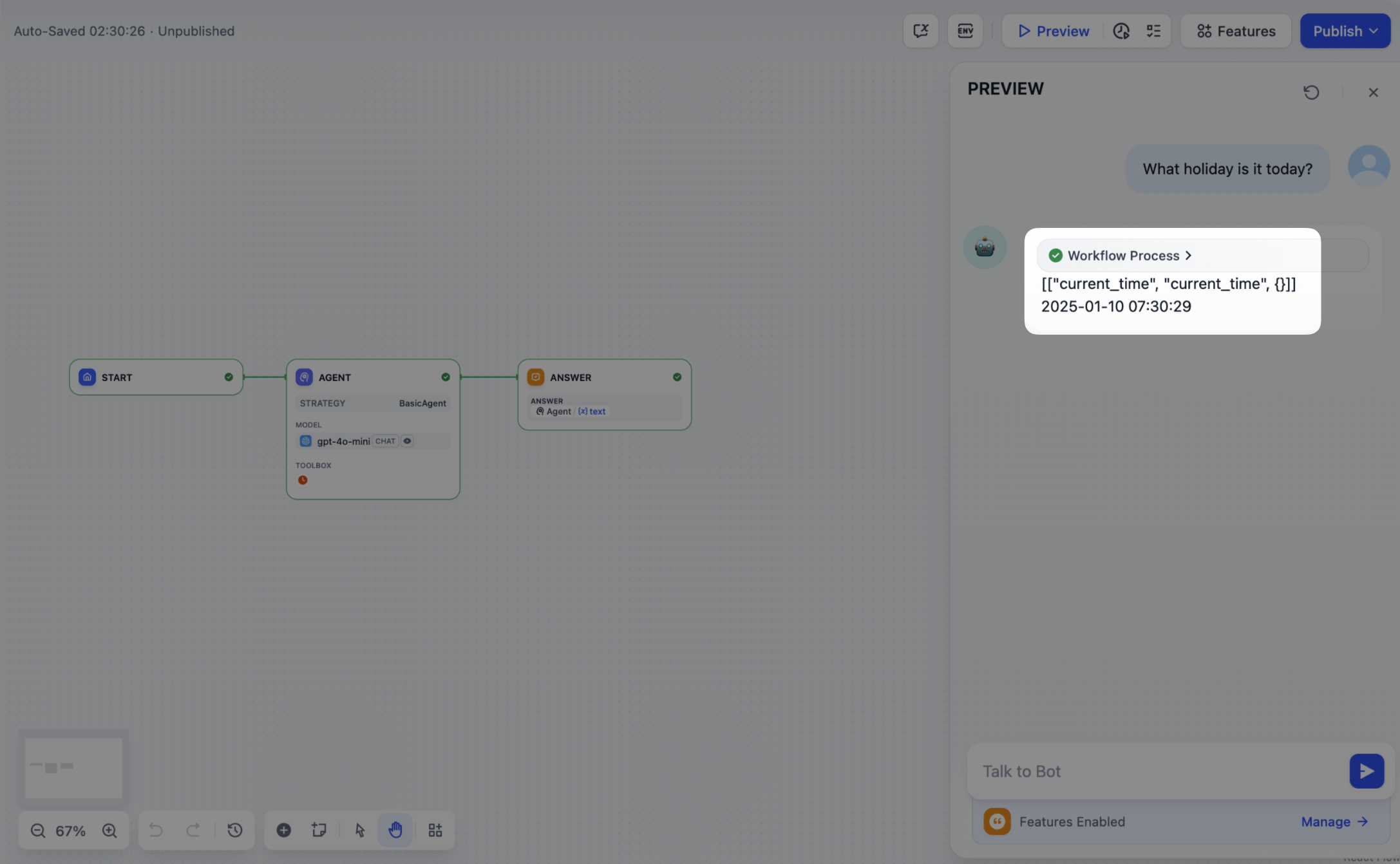

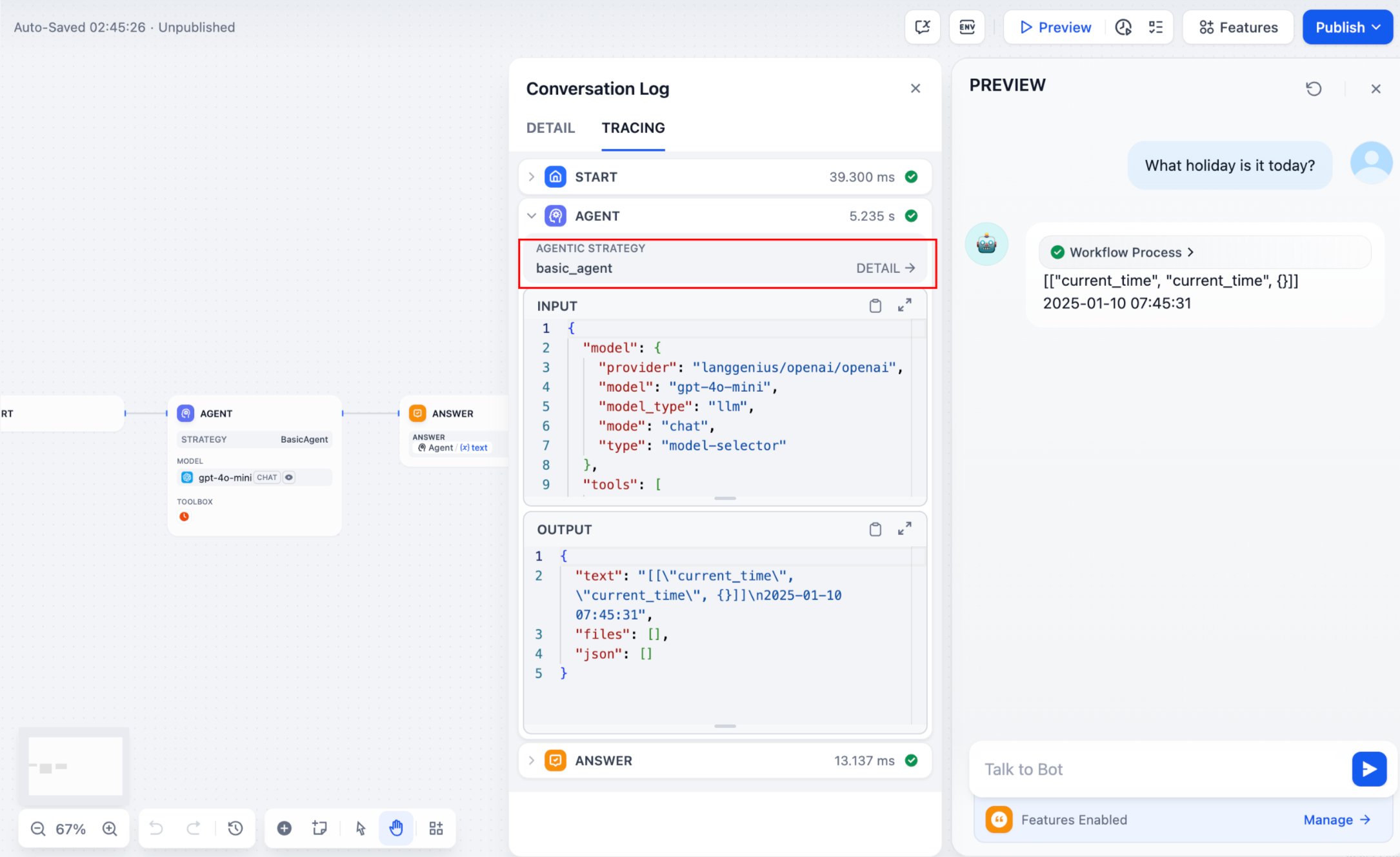

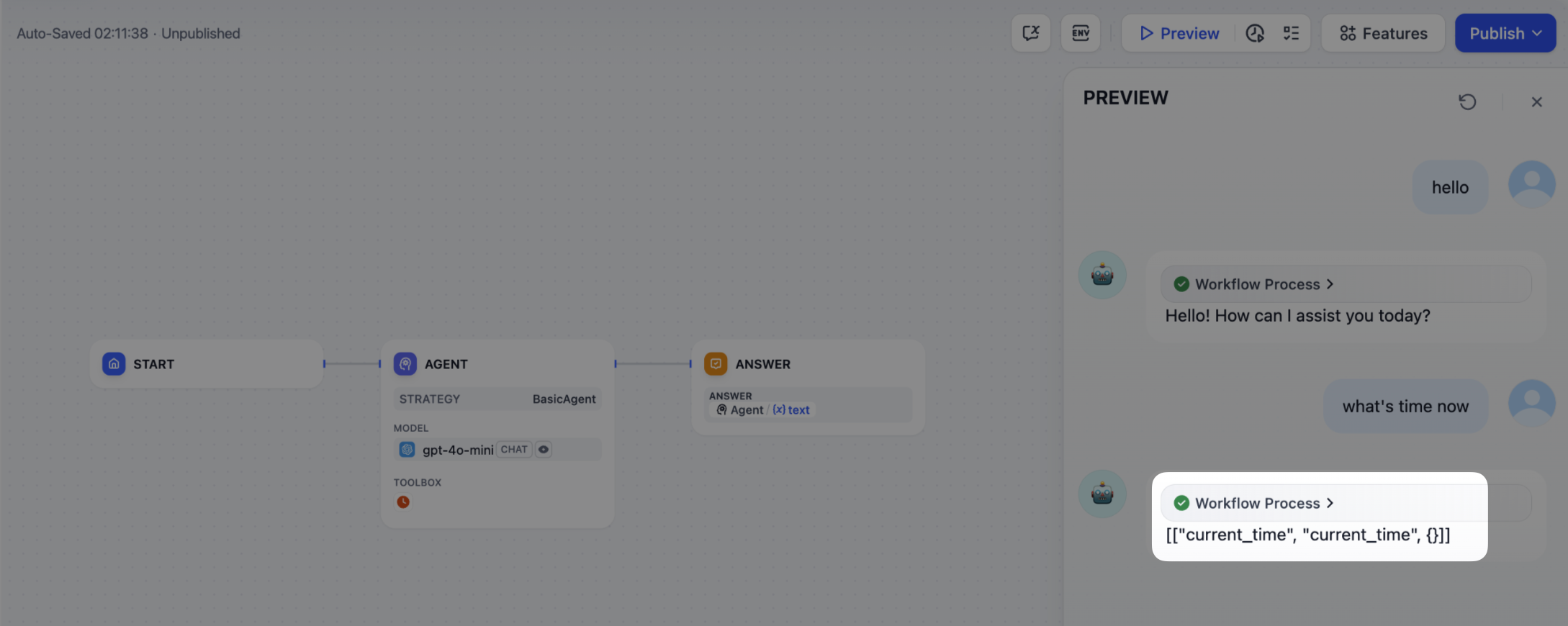

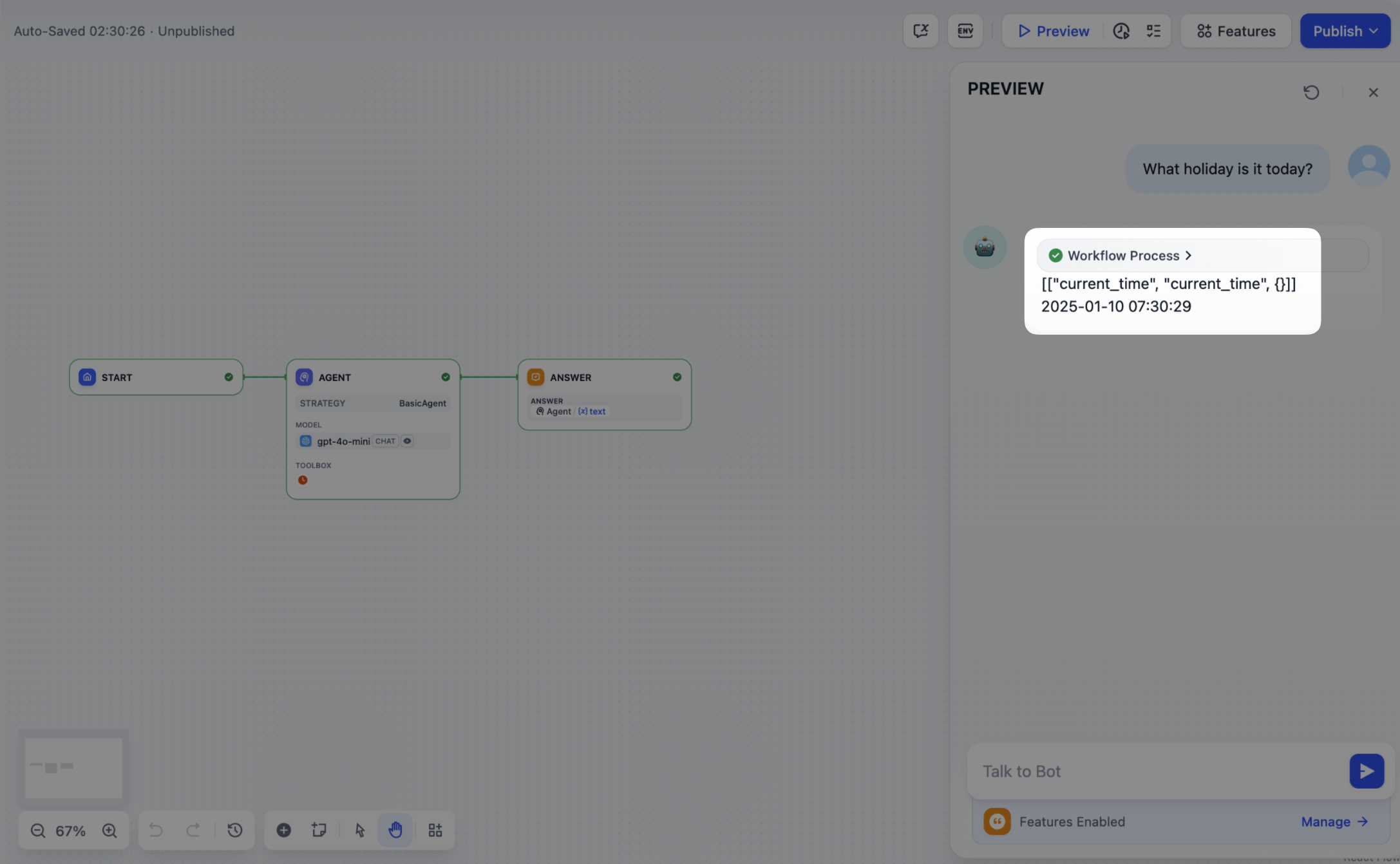

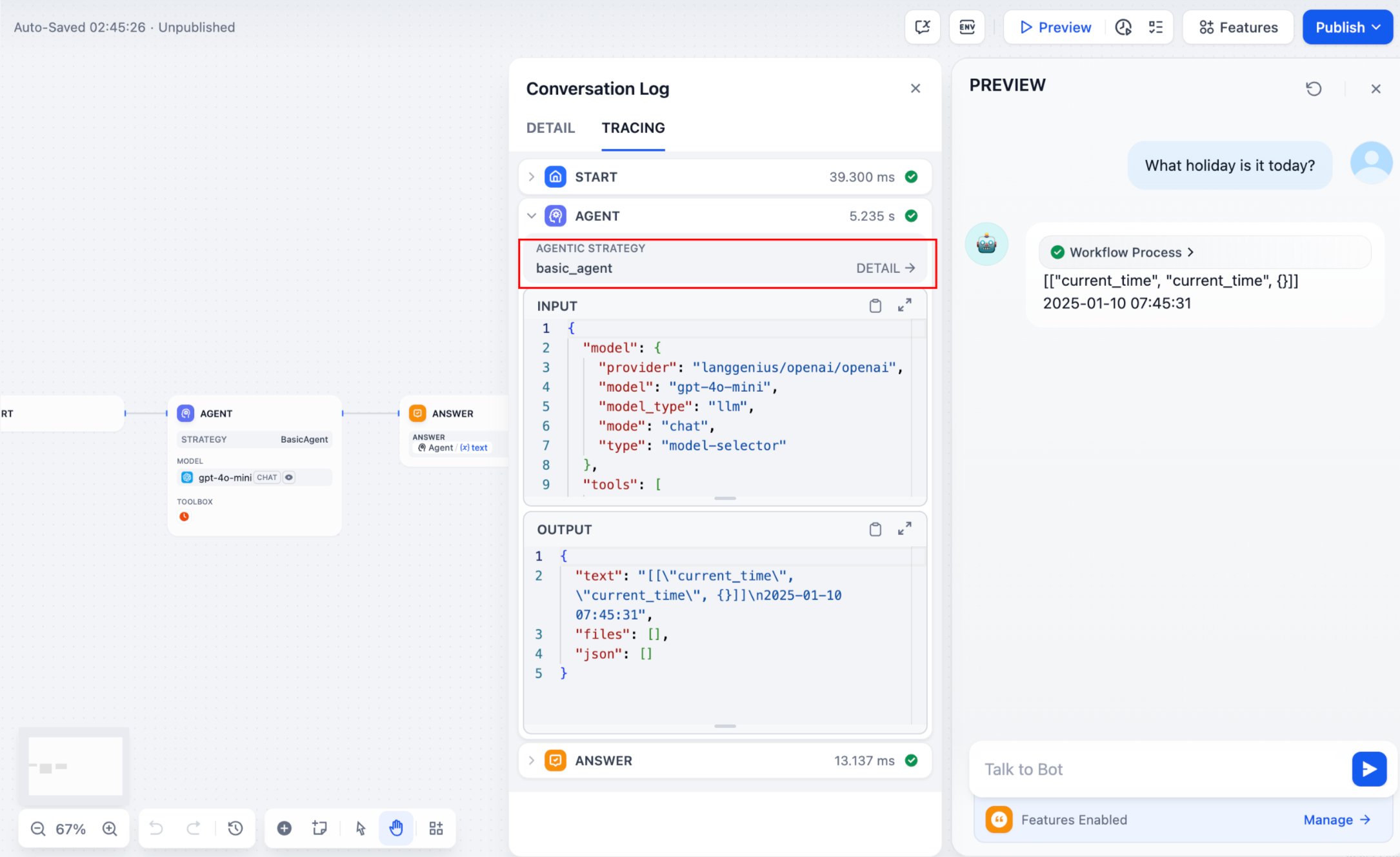

## Debug & Preview

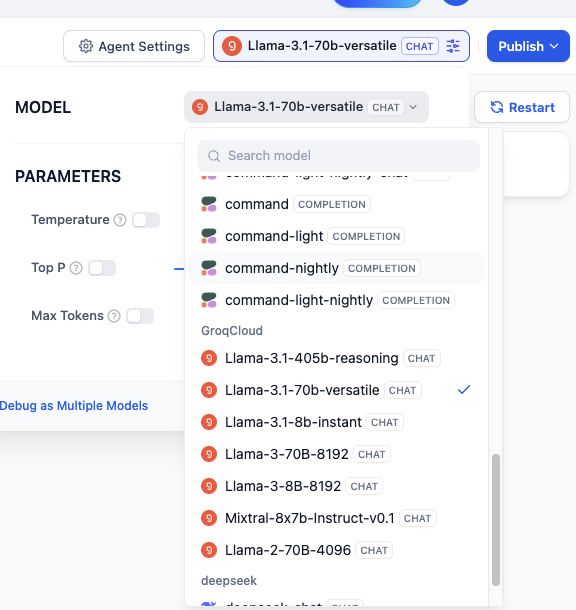

In the preview panel on the right, test your agent in real time. Select a model, type a message, and send it to see how the agent responds.

You can adjust a model's parameters to control how it generates responses. Available parameters and presets vary by model.

To compare outputs across different models, click **Debug as Multiple Models** to run up to 4 models simultaneously.

Click **Settings** under **Vision** to configure how files are accepted and processed. Upload settings apply across all enabled file types.

* **Resolution**: Controls the detail level for **image** processing only.

* **High**: Better accuracy for complex images but uses more tokens

* **Low**: Faster processing with fewer tokens for simple images

* **Upload Method**: Choose whether users can upload from their device, paste a URL, or both.

* **Upload Limit**: The maximum number of files a user can upload per message.

For self-hosted deployments, you can adjust file size limits via the following environment variables:

* `UPLOAD_IMAGE_FILE_SIZE_LIMIT` (default: 10 MB)

* `UPLOAD_FILE_SIZE_LIMIT` (default: 15 MB)

* `UPLOAD_AUDIO_FILE_SIZE_LIMIT` (default: 50 MB)

See [Environment Variables](/en/self-host/configuration/environments) for details.

## Debug & Preview

In the preview panel on the right, test your agent in real time. Select a model, type a message, and send it to see how the agent responds.

You can adjust a model's parameters to control how it generates responses. Available parameters and presets vary by model.

To compare outputs across different models, click **Debug as Multiple Models** to run up to 4 models simultaneously.

![Debug with Multiple Models]() We recommend selecting models that are strong at **reasoning** and **natively support tool calling**.

An agent needs to judge *when* to use a tool, *which tool* fits the task, and *how* to interpret the result—this depends on the model's reasoning ability. Models with built-in tool-call support also execute these decisions more reliably.

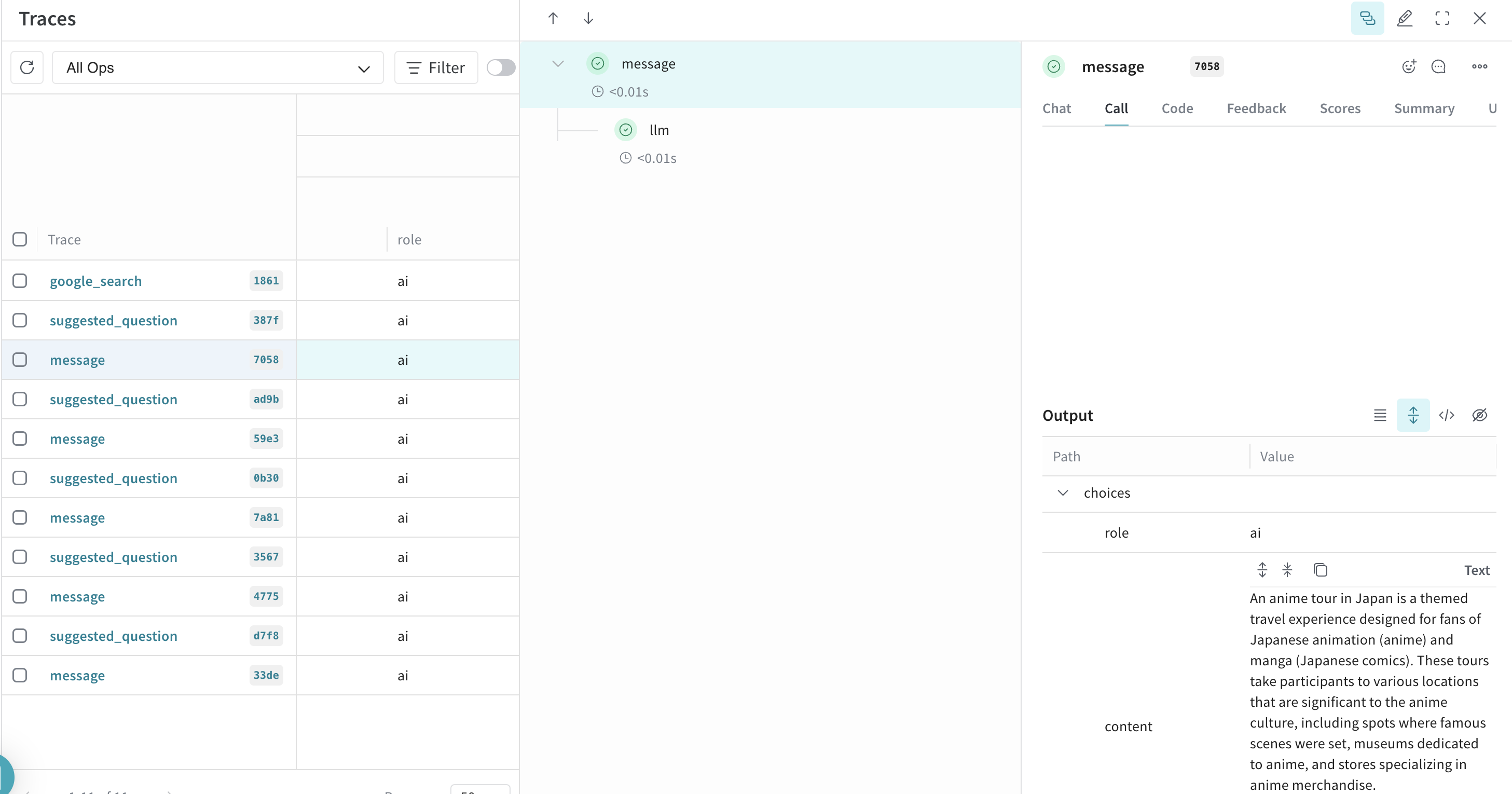

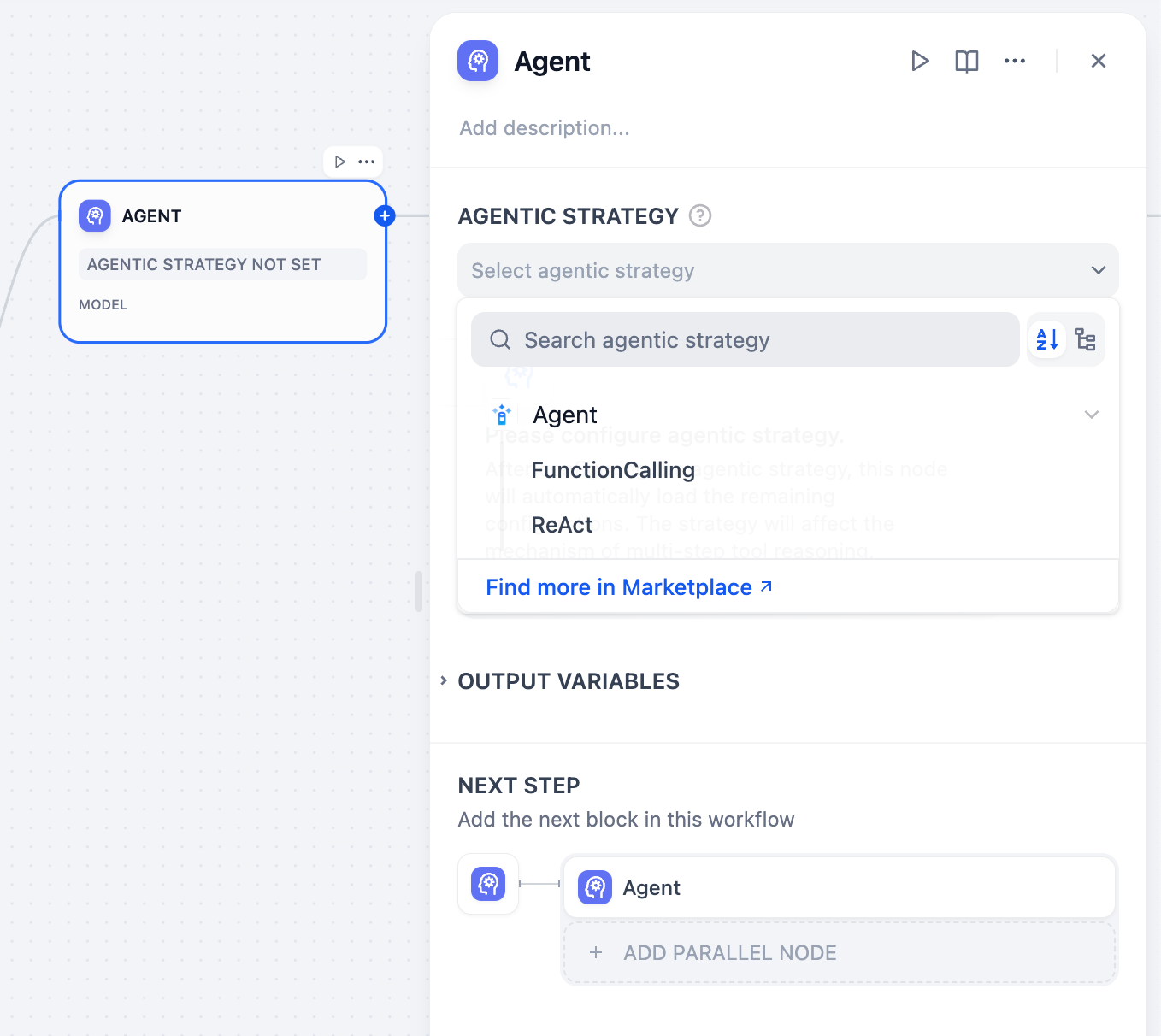

You can verify your model's tool-call support in **Agent Settings**, where the system automatically displays the agent mode:

* **Function Calling** for models with native support, meaning they can call tools directly.

* **ReAct** for others, so Dify guides them to use tools through a prompting strategy.

## Publish

When you're happy with the results, click **Publish** to make your app available. See [Publish](/en/use-dify/publish/README) for the full list of publishing options.

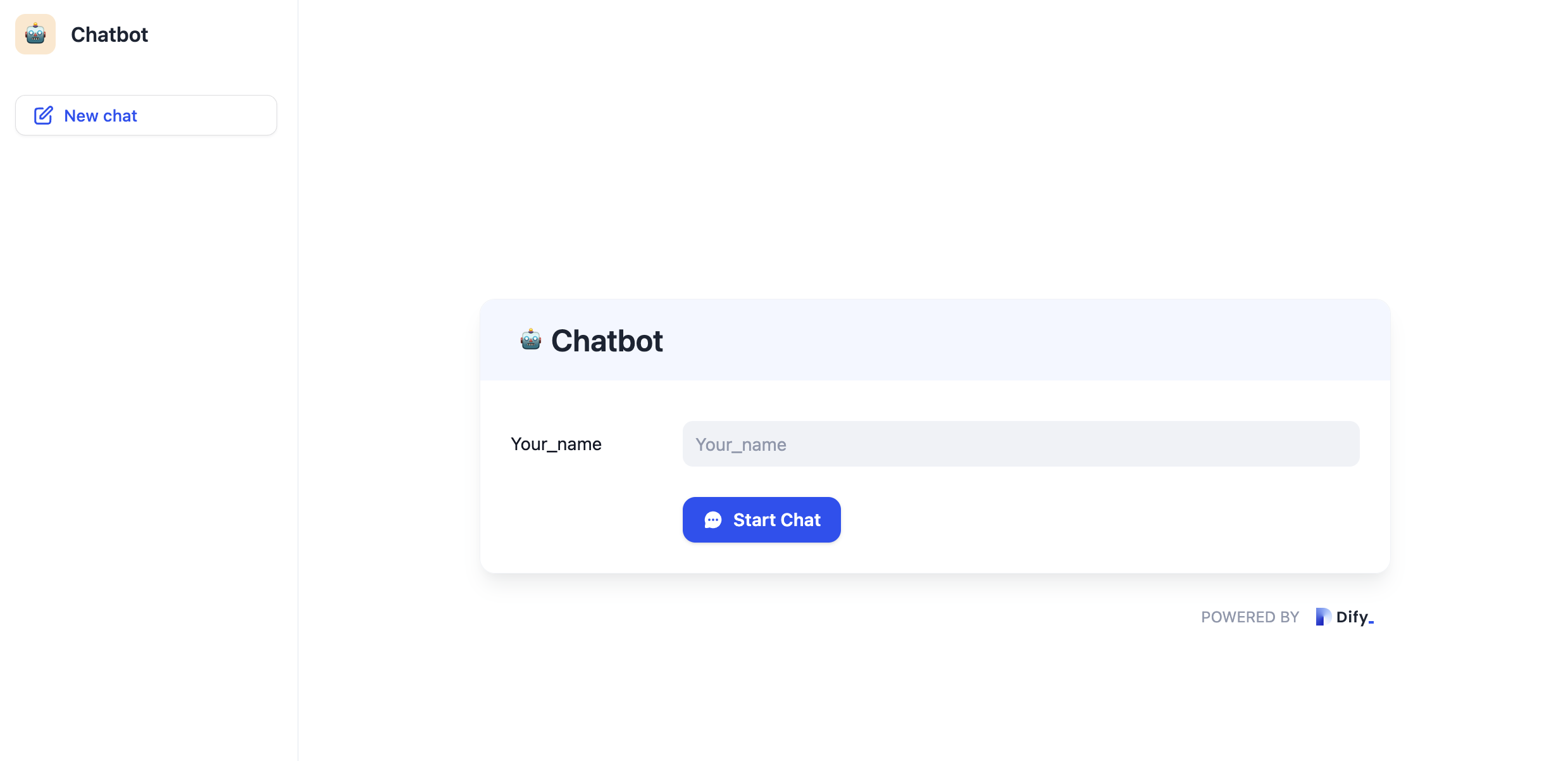

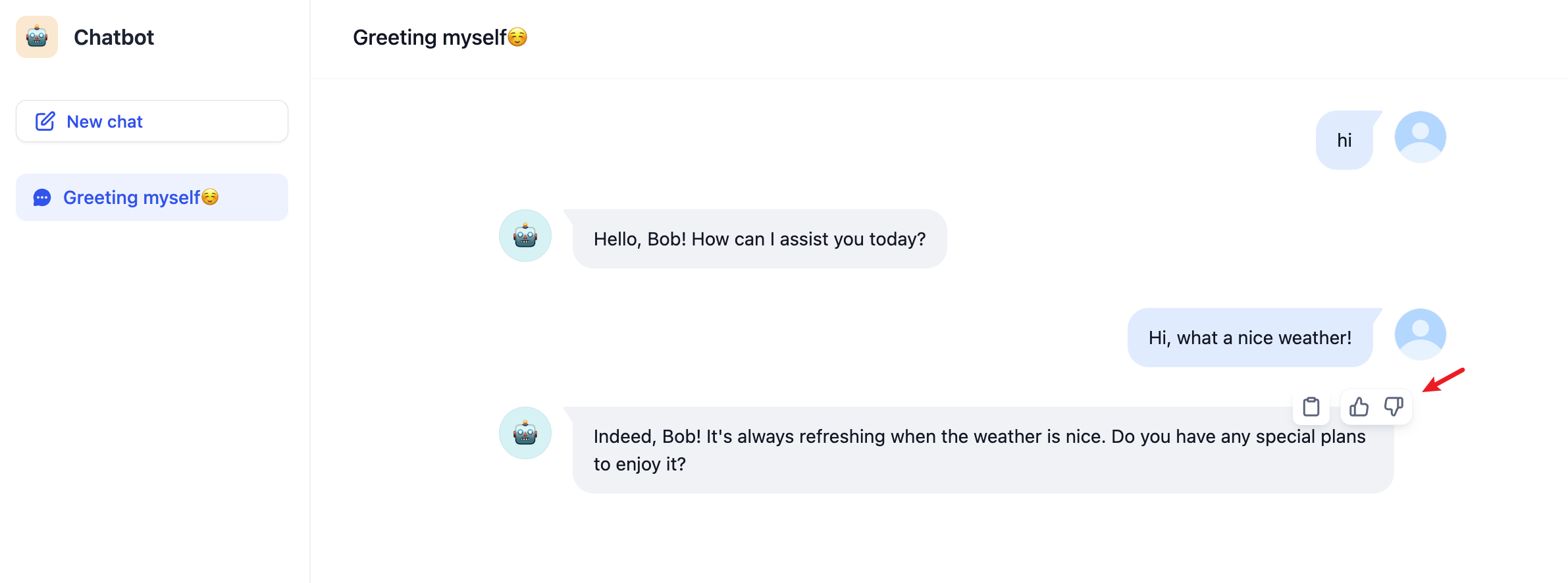

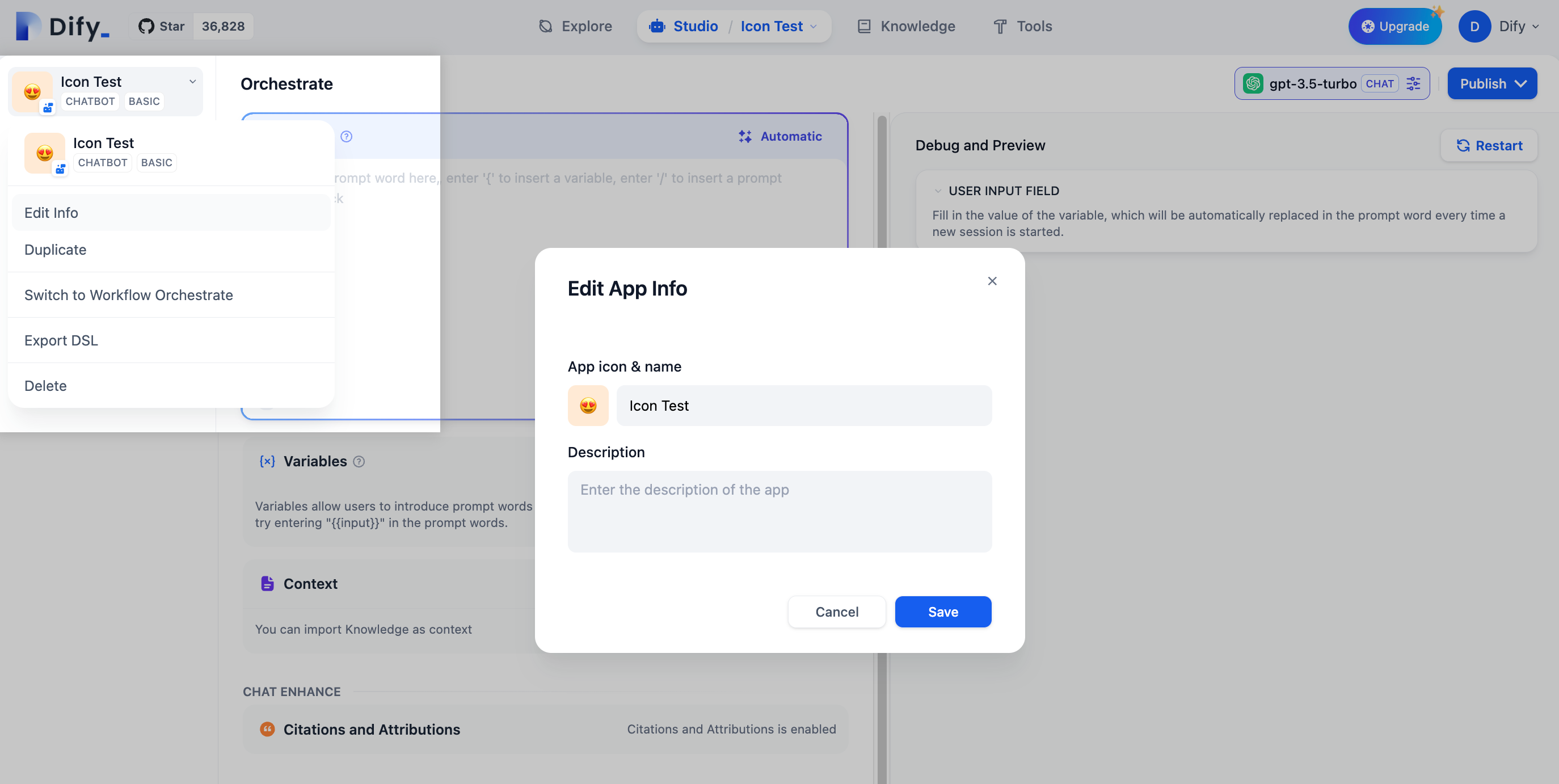

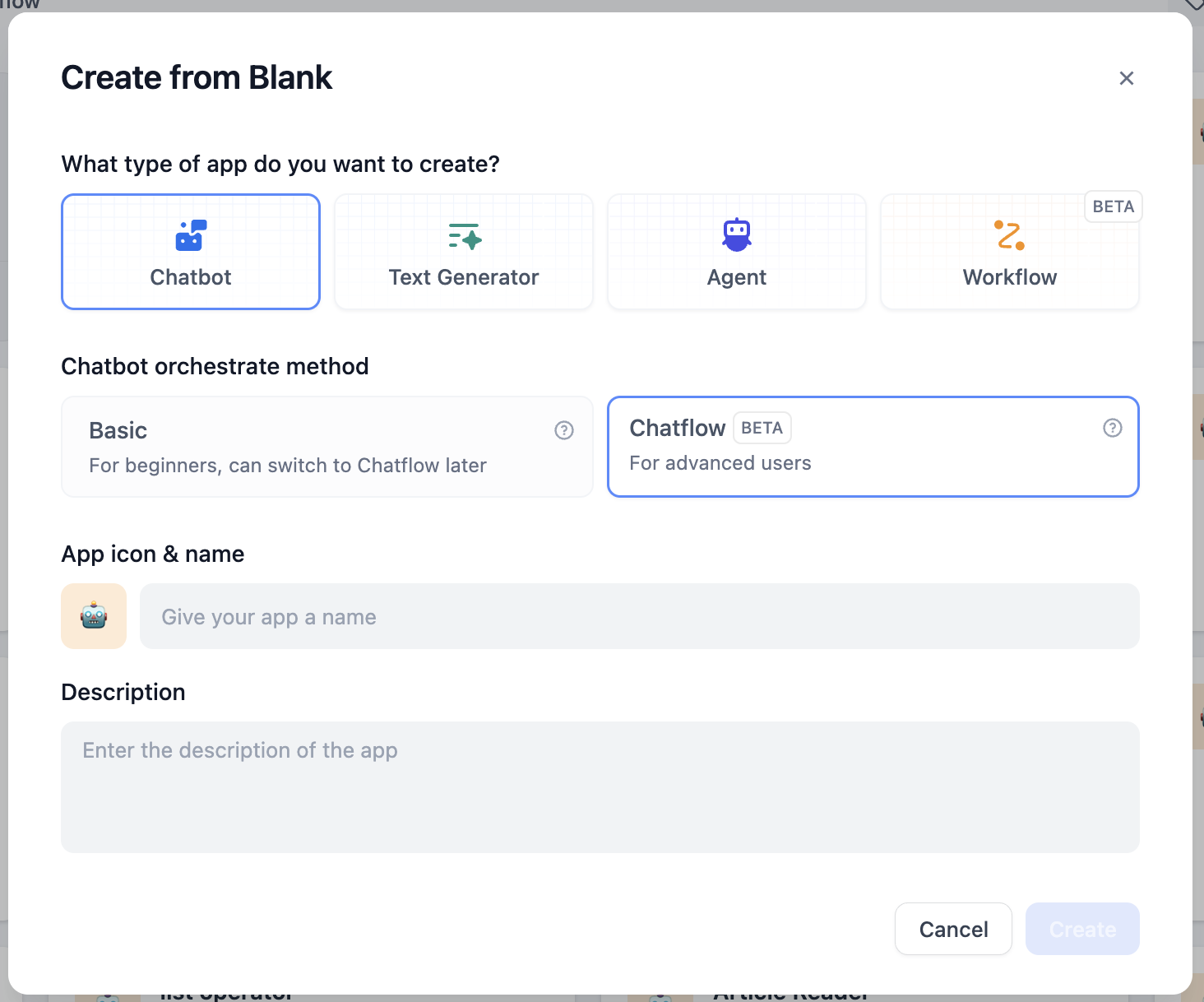

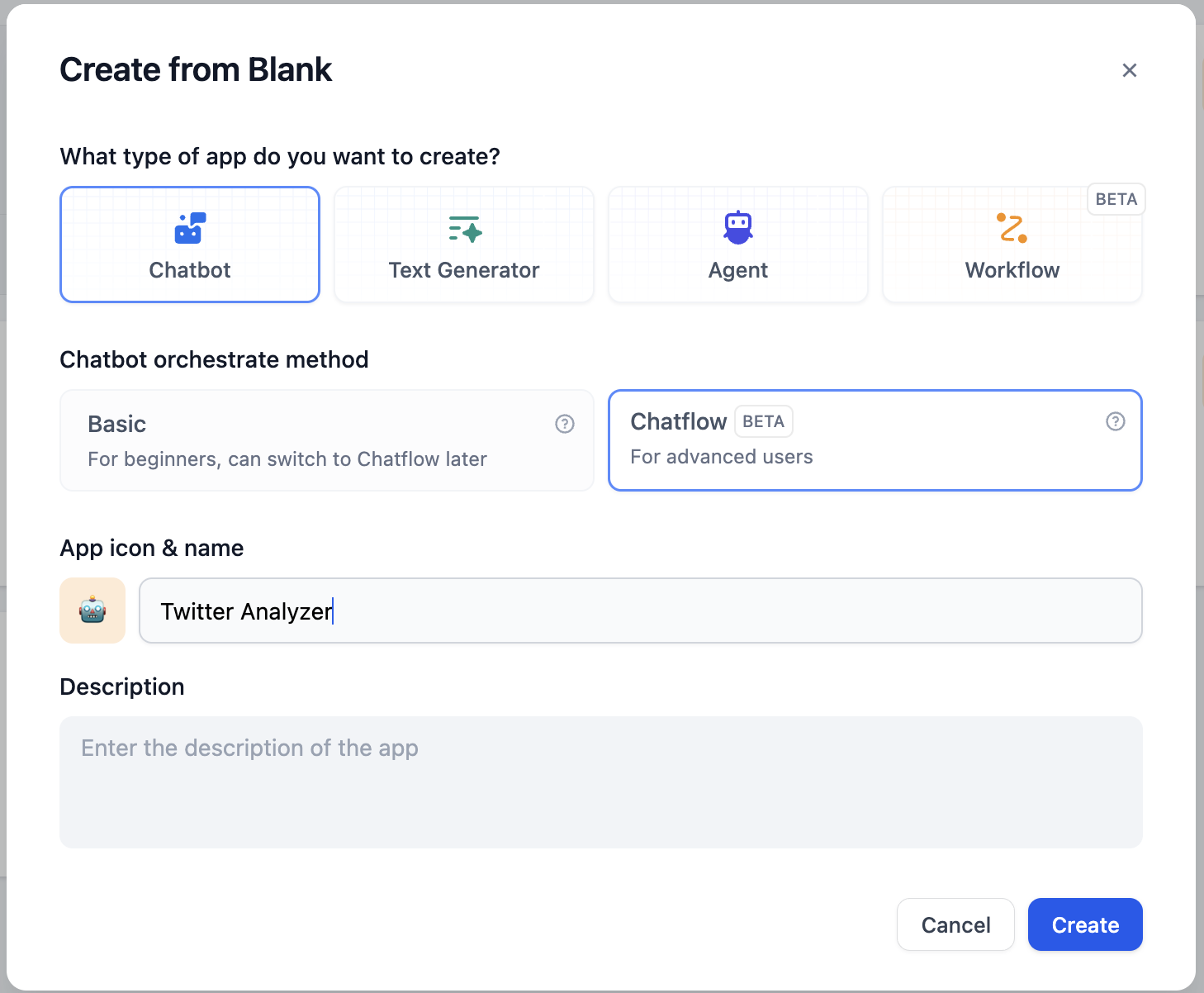

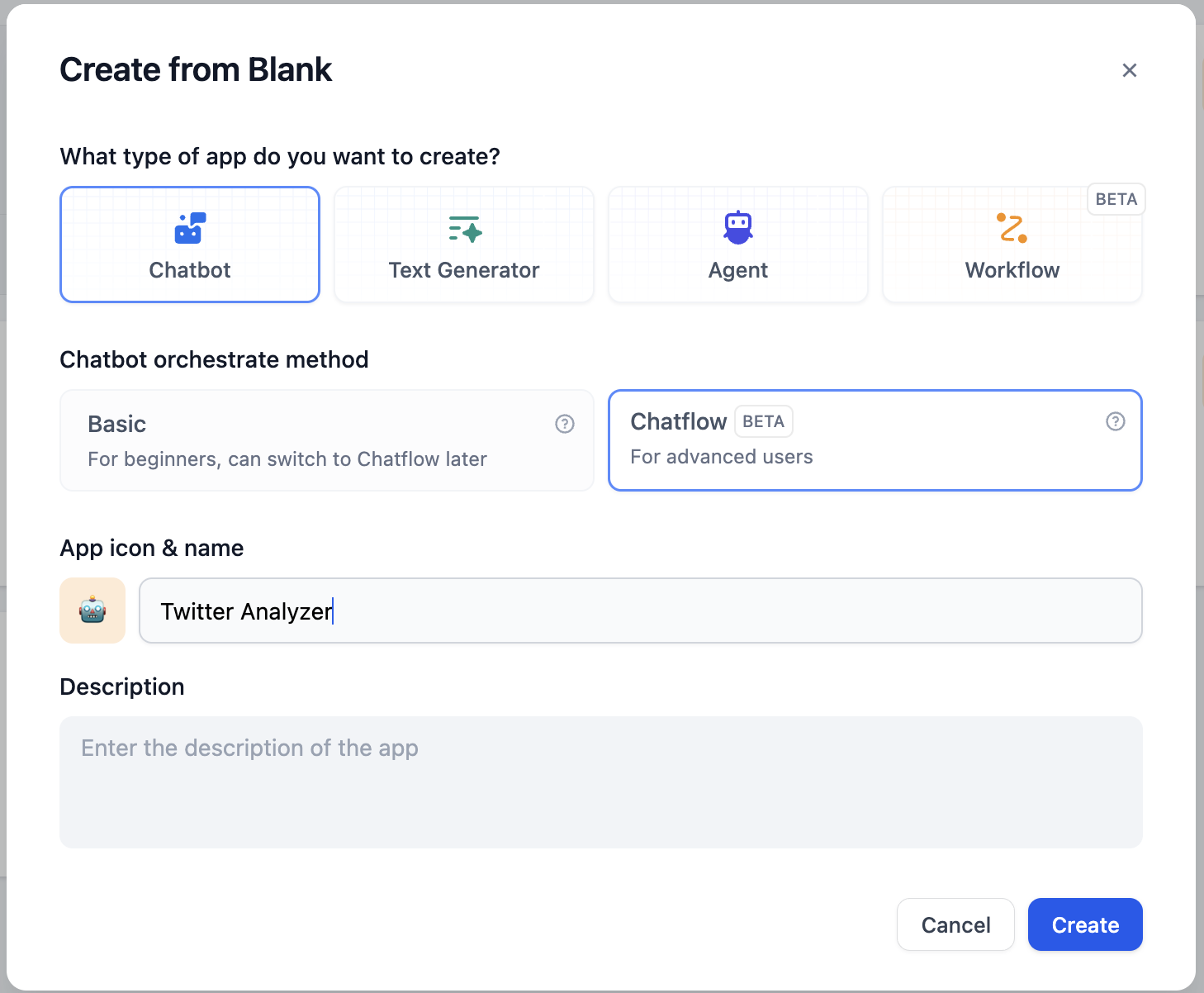

# Chatbot

Source: https://docs.dify.ai/en/use-dify/build/chatbot

The simplest way to build a conversational app with a model and a prompt

Chatbots are conversational apps where users interact with the model through a chat interface.

Use it for tasks that benefit from back-and-forth interaction but don't require tool calls or a multi-step workflow—for example, building an internal Q\&A assistant grounded in your team's knowledge base.

Chatbots keep up to 500 messages or 2,000 tokens of history per conversation. If either limit is exceeded, the oldest messages will be removed to make room for new ones.

Chatbots also support optional features like conversation openers, follow-up suggestions, and more. See [App Toolkit](/en/use-dify/build/additional-features) for details.

## Configure

### Write the Prompt

The prompt tells the model what to do, how to respond, and what constraints to follow. It shapes how the model behaves throughout the conversation, so think of it as defining a consistent persona rather than describing a one-off task.

Here are some tips for writing effective prompts:

* **Define the persona**: Describe who the model should act as and the tone it should use.

* **Specify the output format**: Describe the structure, length, or style you expect.

* **Set constraints**: Tell the model what to avoid or what rules to follow.

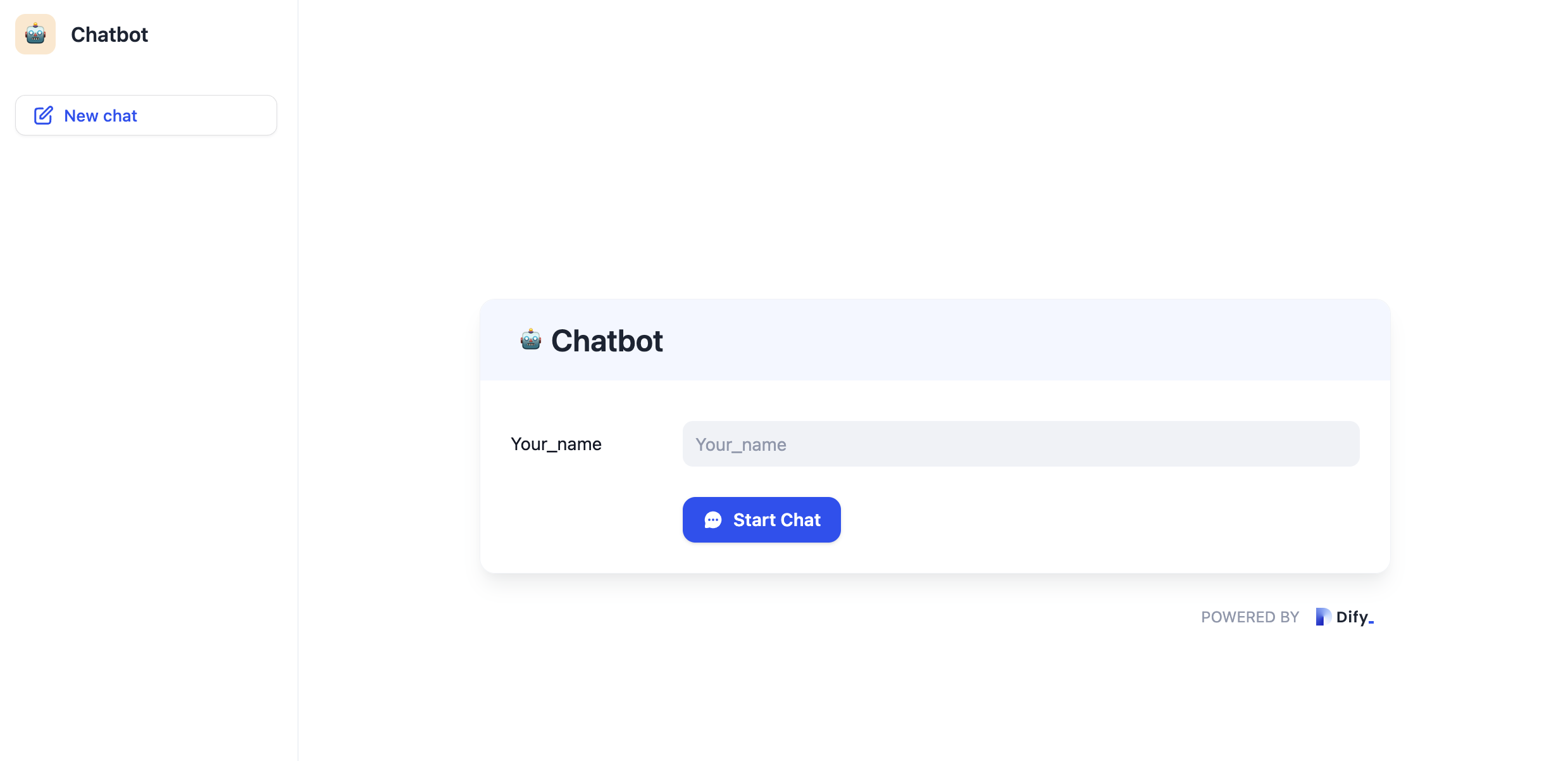

#### Create Dynamic Prompts with Variables

To adapt your chatbot to different users or contexts without rewriting the prompt each time, add variables to collect the necessary information upfront.

Variables are placeholders in the prompt—each one appears as an input field that users fill in before the conversation starts, and their values are injected into the prompt at runtime. Users can also update variable values mid-conversation, and the prompt will adjust accordingly.

For example, an onboarding assistant might use `role` and `language` to tailor its responses:

```text wrap theme={null}

You are an onboarding assistant for new {{role}} hires. Answer questions about company processes and policies. Keep answers friendly and concise, and respond in {{language}}.

```

While drafting the prompt, type `/` > **New Variable** to quickly insert a named placeholder. You can configure its details in the **Variables** section later.

Choose the variable type that matches the input you expect:

Accepts up to 256 characters. Use it for names, email addresses, titles, or any brief text input that fits on a single line.

Allows long-form text without length restrictions. It gives users a multi-line text area for detailed descriptions.

Displays a dropdown menu with predefined options.

Restricts input to numerical values only—ideal for quantities, ratings, IDs, or any data requiring mathematical processing.

Provides a simple yes/no option. When a user checks the box, the output is `true`; otherwise, it's `false`. Use it for confirmations or any case that requires a binary choice.

Fetches variable values from an external API at runtime instead of collecting them from users.

Use it when your prompt needs dynamic data from an external source, such as live weather conditions or database records. See [API Extension](/en/use-dify/workspace/api-extension/api-extension) for details.

**Label Name** is what end users see for each input field.

#### Generate or Improve the Prompt with AI

If you're unsure where to start or want to refine the existing prompt, click **Generate** to let an LLM help you draft it.

Describe what you want from scratch, or reference `current_prompt` and specify what to improve. For more targeted results, add an example in **Ideal Output**.

Each generation is saved as a version, so you can experiment and roll back freely.

### Ground Responses in Your Own Data

To ground the model's responses in your own data rather than general knowledge, add a knowledge base.

Each time a user sends a message, it is used as the search query to retrieve relevant content from the knowledge base, which is then injected into the prompt as context for the model.

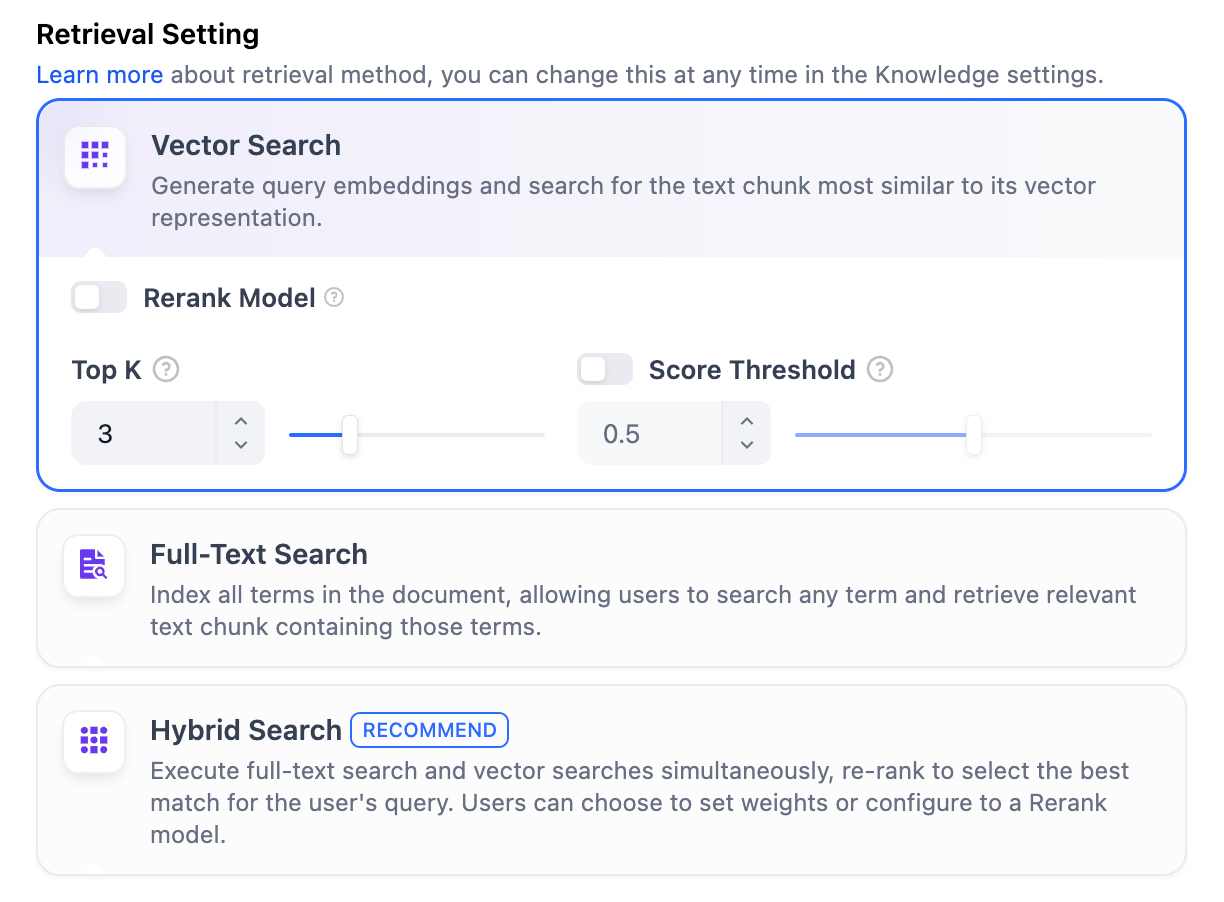

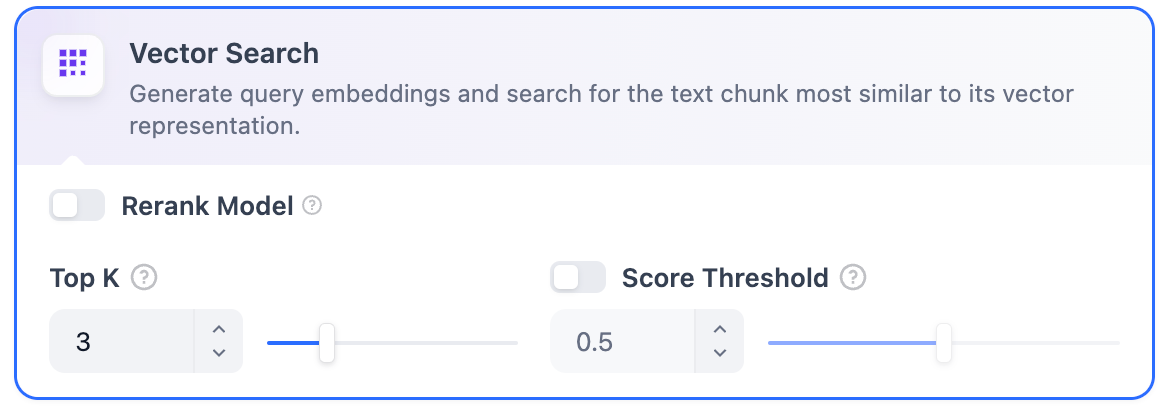

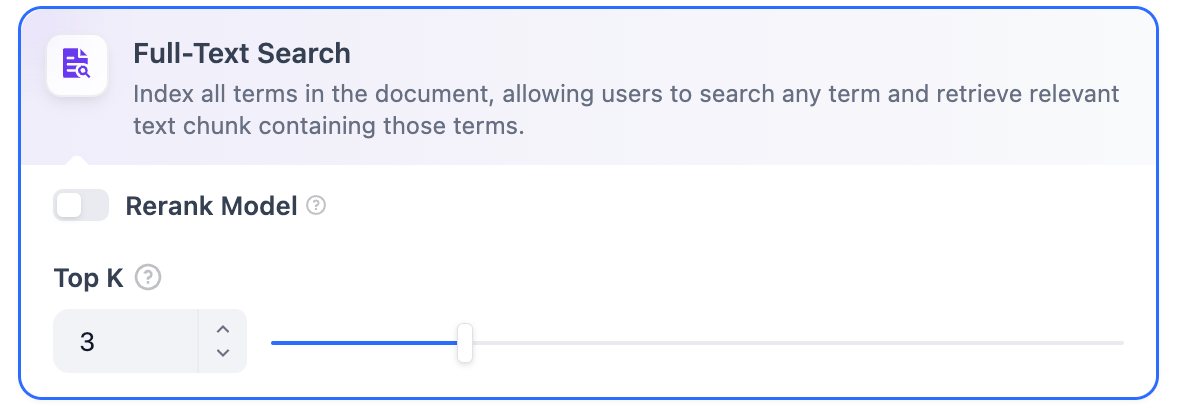

#### Configure App-Level Retrieval Settings

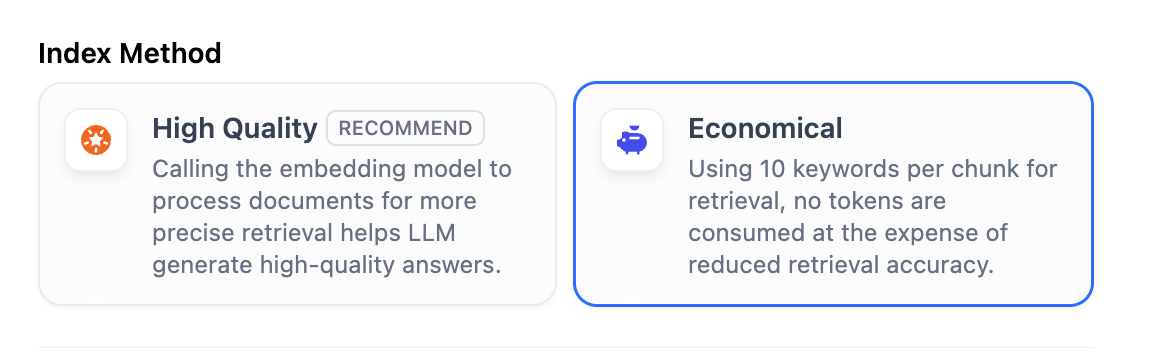

To fine-tune how retrieval results are processed, click **Retrieval Setting**.

There are two layers of retrieval settings—the knowledge base level and the app level.

Think of them as two consecutive filters: the knowledge base settings determine the initial pool of results, and the app settings further rerank the results or narrow down the pool.

* **Rerank Settings**

* **Weighted Score**

The relative weight between semantic similarity and keyword matching during reranking. Higher semantic weight favors meaning relevance, while higher keyword weight favors exact matches.

Weighted Score is available only when all added knowledge bases are indexed with **High Quality** mode.

* **Rerank Model**

The rerank model to re-score and reorder all the results based on their relevance to the query.

If any multimodal knowledge bases are added, select a multimodal rerank model (marked with a **Vision** tag) as well. Otherwise, retrieved images will be excluded from reranking and the final output.

* **Top K**

The maximum number of top results to return after reranking.

When a rerank model is selected, this value will be automatically adjusted based on the model's maximum input capacity (how much text the model can process at once).

* **Score Threshold**

The minimum similarity score for returned results. Results scoring below this threshold are excluded. Use higher thresholds for stricter relevance or lower thresholds to include broader matches.

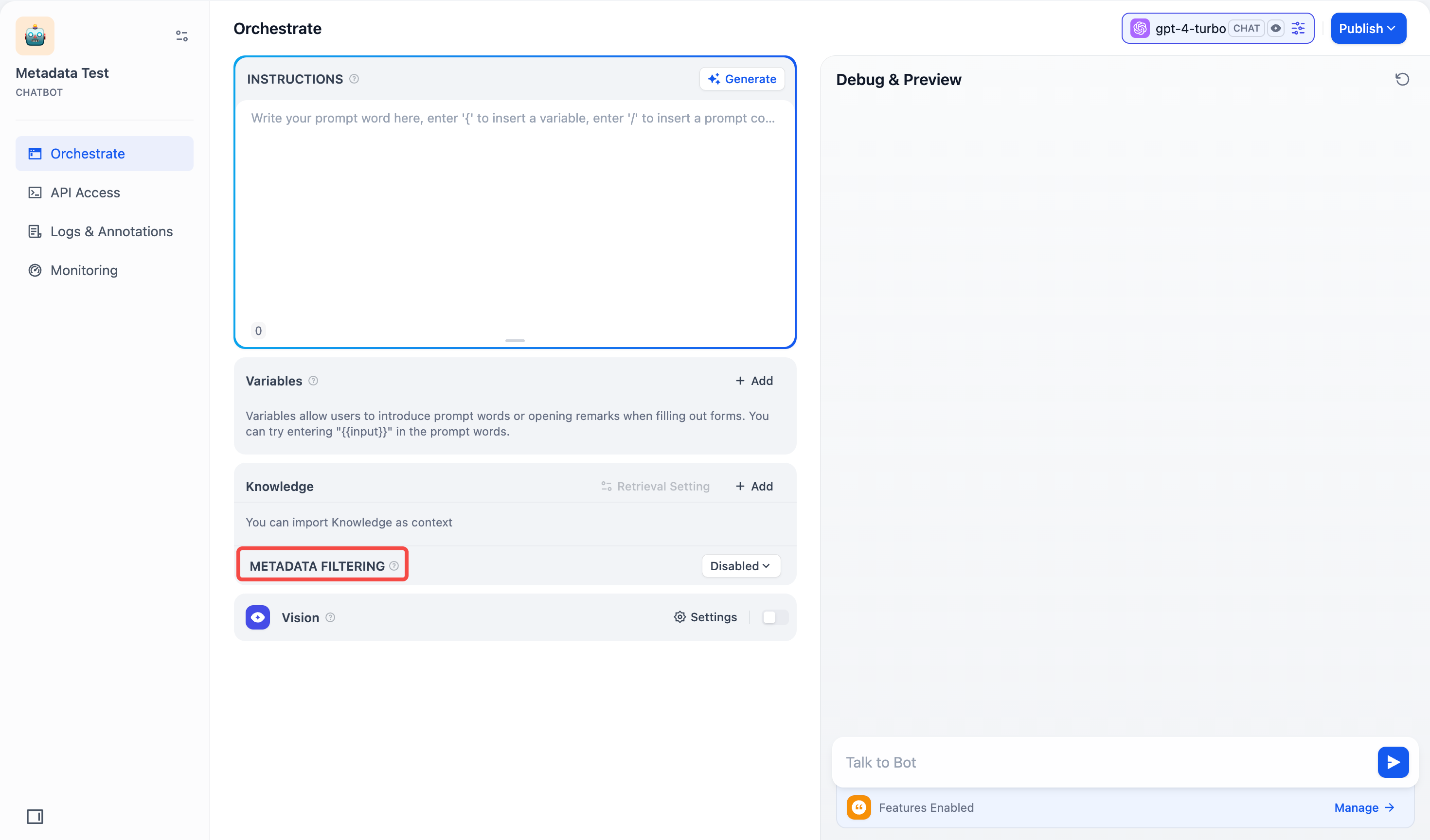

#### Search Within Specific Documents

By default, retrieval searches across the entire knowledge base. To restrict retrieval to specific documents, enable manual or automatic metadata filtering.

This improves retrieval precision, especially when your knowledge base is large or contains content for different contexts.

For creating and managing document metadata, see [Metadata](/en/use-dify/knowledge/metadata).

### Process Multimodal Inputs

To allow end users to upload files, select a model with the corresponding multimodal capabilities. The relevant file type toggles—**Vision**, **Audio**, or **Document**—appear once the model supports them, and you can enable each as needed.

You can quickly identify a model's supported modalities by its tags.

We recommend selecting models that are strong at **reasoning** and **natively support tool calling**.

An agent needs to judge *when* to use a tool, *which tool* fits the task, and *how* to interpret the result—this depends on the model's reasoning ability. Models with built-in tool-call support also execute these decisions more reliably.

You can verify your model's tool-call support in **Agent Settings**, where the system automatically displays the agent mode:

* **Function Calling** for models with native support, meaning they can call tools directly.

* **ReAct** for others, so Dify guides them to use tools through a prompting strategy.

## Publish

When you're happy with the results, click **Publish** to make your app available. See [Publish](/en/use-dify/publish/README) for the full list of publishing options.

# Chatbot

Source: https://docs.dify.ai/en/use-dify/build/chatbot

The simplest way to build a conversational app with a model and a prompt

Chatbots are conversational apps where users interact with the model through a chat interface.

Use it for tasks that benefit from back-and-forth interaction but don't require tool calls or a multi-step workflow—for example, building an internal Q\&A assistant grounded in your team's knowledge base.

Chatbots keep up to 500 messages or 2,000 tokens of history per conversation. If either limit is exceeded, the oldest messages will be removed to make room for new ones.

Chatbots also support optional features like conversation openers, follow-up suggestions, and more. See [App Toolkit](/en/use-dify/build/additional-features) for details.

## Configure

### Write the Prompt

The prompt tells the model what to do, how to respond, and what constraints to follow. It shapes how the model behaves throughout the conversation, so think of it as defining a consistent persona rather than describing a one-off task.

Here are some tips for writing effective prompts:

* **Define the persona**: Describe who the model should act as and the tone it should use.

* **Specify the output format**: Describe the structure, length, or style you expect.

* **Set constraints**: Tell the model what to avoid or what rules to follow.

#### Create Dynamic Prompts with Variables

To adapt your chatbot to different users or contexts without rewriting the prompt each time, add variables to collect the necessary information upfront.

Variables are placeholders in the prompt—each one appears as an input field that users fill in before the conversation starts, and their values are injected into the prompt at runtime. Users can also update variable values mid-conversation, and the prompt will adjust accordingly.

For example, an onboarding assistant might use `role` and `language` to tailor its responses:

```text wrap theme={null}

You are an onboarding assistant for new {{role}} hires. Answer questions about company processes and policies. Keep answers friendly and concise, and respond in {{language}}.

```

While drafting the prompt, type `/` > **New Variable** to quickly insert a named placeholder. You can configure its details in the **Variables** section later.

Choose the variable type that matches the input you expect:

Accepts up to 256 characters. Use it for names, email addresses, titles, or any brief text input that fits on a single line.

Allows long-form text without length restrictions. It gives users a multi-line text area for detailed descriptions.

Displays a dropdown menu with predefined options.

Restricts input to numerical values only—ideal for quantities, ratings, IDs, or any data requiring mathematical processing.

Provides a simple yes/no option. When a user checks the box, the output is `true`; otherwise, it's `false`. Use it for confirmations or any case that requires a binary choice.

Fetches variable values from an external API at runtime instead of collecting them from users.

Use it when your prompt needs dynamic data from an external source, such as live weather conditions or database records. See [API Extension](/en/use-dify/workspace/api-extension/api-extension) for details.

**Label Name** is what end users see for each input field.

#### Generate or Improve the Prompt with AI

If you're unsure where to start or want to refine the existing prompt, click **Generate** to let an LLM help you draft it.

Describe what you want from scratch, or reference `current_prompt` and specify what to improve. For more targeted results, add an example in **Ideal Output**.

Each generation is saved as a version, so you can experiment and roll back freely.

### Ground Responses in Your Own Data

To ground the model's responses in your own data rather than general knowledge, add a knowledge base.

Each time a user sends a message, it is used as the search query to retrieve relevant content from the knowledge base, which is then injected into the prompt as context for the model.

#### Configure App-Level Retrieval Settings

To fine-tune how retrieval results are processed, click **Retrieval Setting**.

There are two layers of retrieval settings—the knowledge base level and the app level.

Think of them as two consecutive filters: the knowledge base settings determine the initial pool of results, and the app settings further rerank the results or narrow down the pool.

* **Rerank Settings**

* **Weighted Score**

The relative weight between semantic similarity and keyword matching during reranking. Higher semantic weight favors meaning relevance, while higher keyword weight favors exact matches.

Weighted Score is available only when all added knowledge bases are indexed with **High Quality** mode.

* **Rerank Model**

The rerank model to re-score and reorder all the results based on their relevance to the query.

If any multimodal knowledge bases are added, select a multimodal rerank model (marked with a **Vision** tag) as well. Otherwise, retrieved images will be excluded from reranking and the final output.

* **Top K**

The maximum number of top results to return after reranking.

When a rerank model is selected, this value will be automatically adjusted based on the model's maximum input capacity (how much text the model can process at once).

* **Score Threshold**

The minimum similarity score for returned results. Results scoring below this threshold are excluded. Use higher thresholds for stricter relevance or lower thresholds to include broader matches.

#### Search Within Specific Documents

By default, retrieval searches across the entire knowledge base. To restrict retrieval to specific documents, enable manual or automatic metadata filtering.

This improves retrieval precision, especially when your knowledge base is large or contains content for different contexts.

For creating and managing document metadata, see [Metadata](/en/use-dify/knowledge/metadata).

### Process Multimodal Inputs

To allow end users to upload files, select a model with the corresponding multimodal capabilities. The relevant file type toggles—**Vision**, **Audio**, or **Document**—appear once the model supports them, and you can enable each as needed.

You can quickly identify a model's supported modalities by its tags.

![Model Tags]() Click **Settings** under **Vision** to configure how files are accepted and processed. Upload settings apply across all enabled file types.

* **Resolution**: Controls the detail level for **image** processing only.

* **High**: Better accuracy for complex images but uses more tokens

* **Low**: Faster processing with fewer tokens for simple images

* **Upload Method**: Choose whether users can upload from their device, paste a URL, or both.

* **Upload Limit**: The maximum number of files a user can upload per message.

For self-hosted deployments, you can adjust file size limits via the following environment variables:

* `UPLOAD_IMAGE_FILE_SIZE_LIMIT` (default: 10 MB)

* `UPLOAD_FILE_SIZE_LIMIT` (default: 15 MB)

* `UPLOAD_AUDIO_FILE_SIZE_LIMIT` (default: 50 MB)

See [Environment Variables](/en/self-host/configuration/environments) for details.

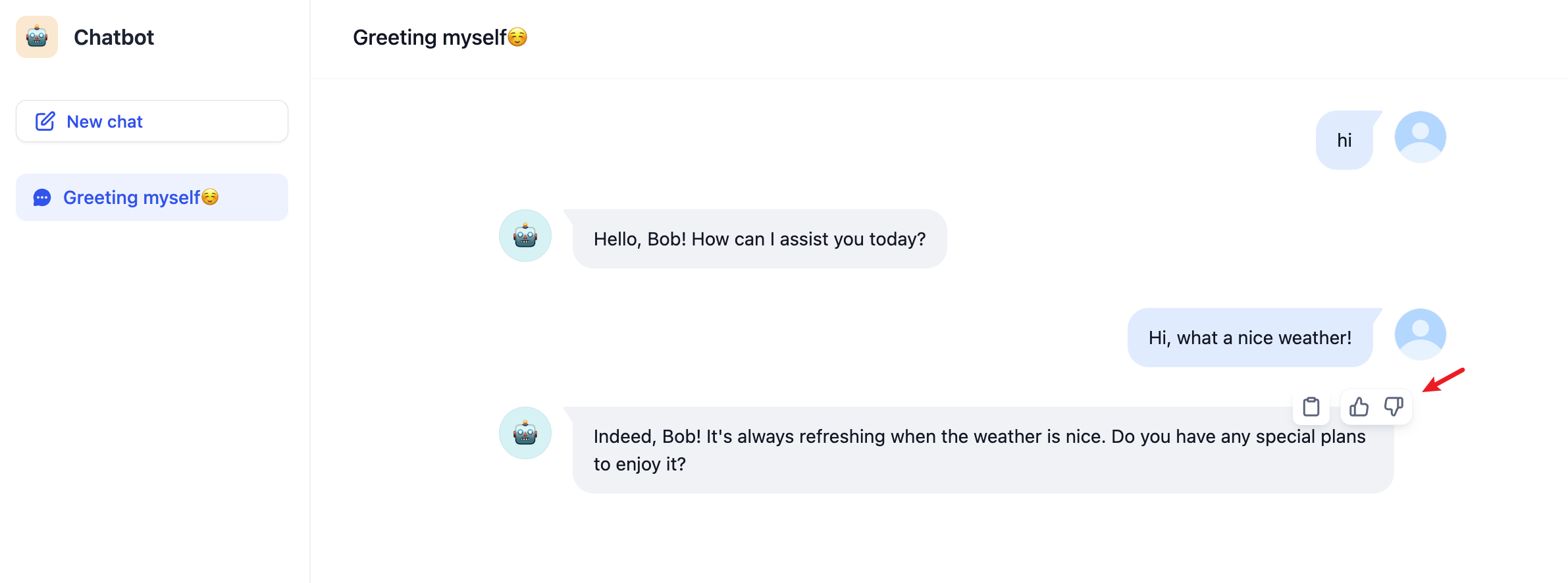

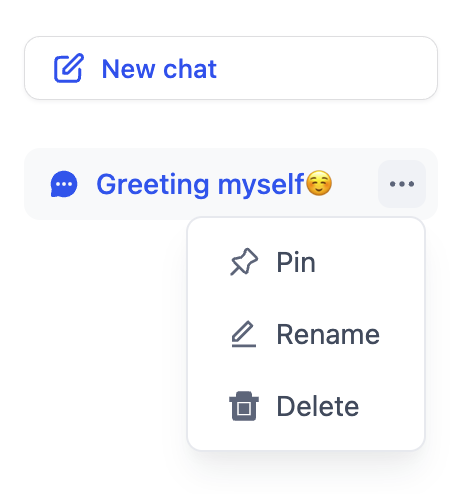

## Debug & Preview

In the preview panel on the right, test your chatbot in real time. Select a model that best fits your task, type a message, and send it to see how the model responds.

After selecting a model, you can adjust its parameters to control how it generates responses. Available parameters and presets vary by model.

To compare outputs across different models, click **Debug as Multiple Models** to run up to 4 models simultaneously.

Click **Settings** under **Vision** to configure how files are accepted and processed. Upload settings apply across all enabled file types.

* **Resolution**: Controls the detail level for **image** processing only.

* **High**: Better accuracy for complex images but uses more tokens

* **Low**: Faster processing with fewer tokens for simple images

* **Upload Method**: Choose whether users can upload from their device, paste a URL, or both.

* **Upload Limit**: The maximum number of files a user can upload per message.

For self-hosted deployments, you can adjust file size limits via the following environment variables:

* `UPLOAD_IMAGE_FILE_SIZE_LIMIT` (default: 10 MB)

* `UPLOAD_FILE_SIZE_LIMIT` (default: 15 MB)

* `UPLOAD_AUDIO_FILE_SIZE_LIMIT` (default: 50 MB)

See [Environment Variables](/en/self-host/configuration/environments) for details.

## Debug & Preview

In the preview panel on the right, test your chatbot in real time. Select a model that best fits your task, type a message, and send it to see how the model responds.

After selecting a model, you can adjust its parameters to control how it generates responses. Available parameters and presets vary by model.

To compare outputs across different models, click **Debug as Multiple Models** to run up to 4 models simultaneously.

![Debug with Multiple Models]() ## Publish

When you're happy with the results, click **Publish** to make your app available. See [Publish](/en/use-dify/publish/README) for the full list of publishing options.

# Using MCP Tools

Source: https://docs.dify.ai/en/use-dify/build/mcp

Connect external tools from [MCP servers](https://modelcontextprotocol.io/docs/getting-started/intro) to your Dify apps. Instead of just built-in tools, you can use tools from the growing [MCP ecosystem](https://mcpservers.org/).

This covers using MCP tools in Dify. To publish Dify apps as MCP servers, see [here](/en/use-dify/publish/publish-mcp).

Only supports MCP servers with [HTTP transport](https://modelcontextprotocol.io/docs/learn/architecture#transport-layer) right now.

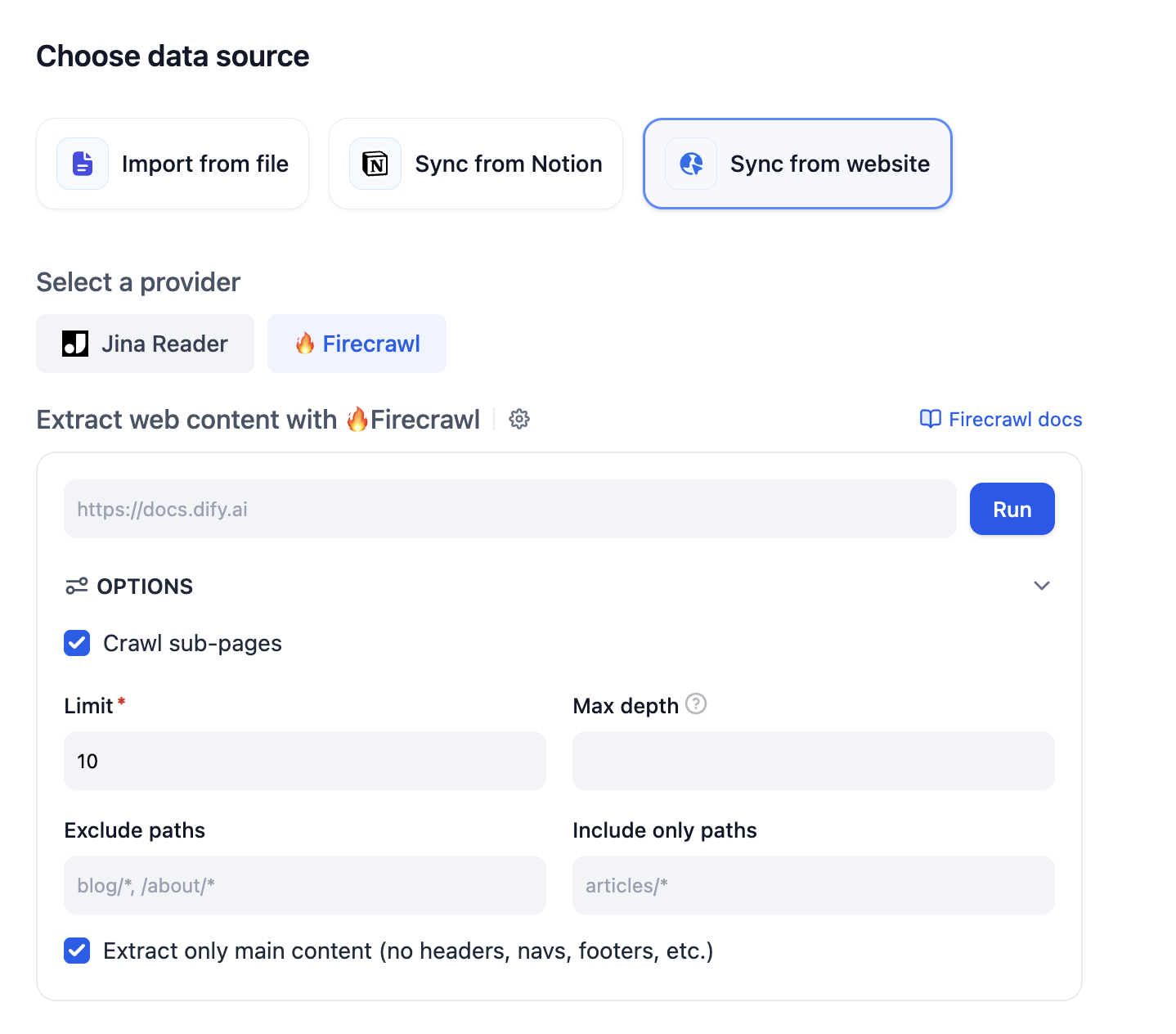

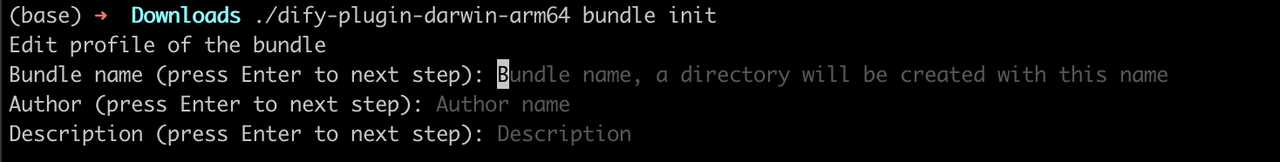

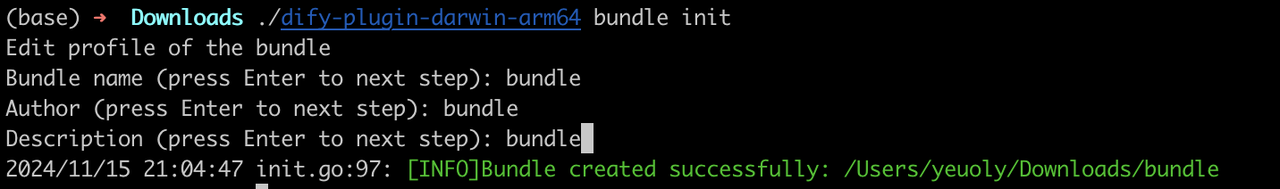

## Adding MCP servers

Go to **Tools** → **MCP** in your workspace.

## Publish

When you're happy with the results, click **Publish** to make your app available. See [Publish](/en/use-dify/publish/README) for the full list of publishing options.

# Using MCP Tools

Source: https://docs.dify.ai/en/use-dify/build/mcp

Connect external tools from [MCP servers](https://modelcontextprotocol.io/docs/getting-started/intro) to your Dify apps. Instead of just built-in tools, you can use tools from the growing [MCP ecosystem](https://mcpservers.org/).

This covers using MCP tools in Dify. To publish Dify apps as MCP servers, see [here](/en/use-dify/publish/publish-mcp).

Only supports MCP servers with [HTTP transport](https://modelcontextprotocol.io/docs/learn/architecture#transport-layer) right now.

## Adding MCP servers

Go to **Tools** → **MCP** in your workspace.

![MCP Server List]() Click **Add MCP Server (HTTP)**:

Click **Add MCP Server (HTTP)**:

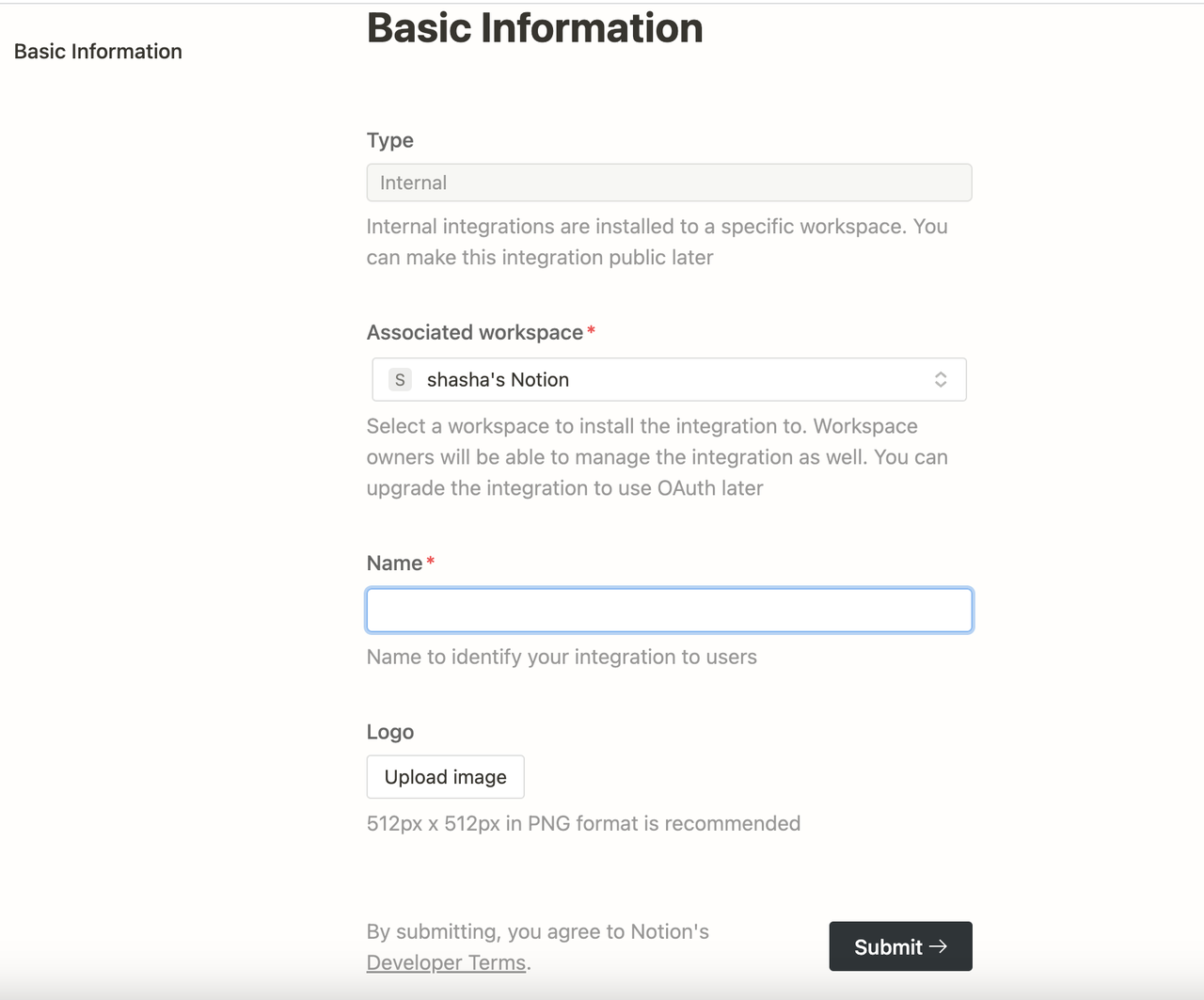

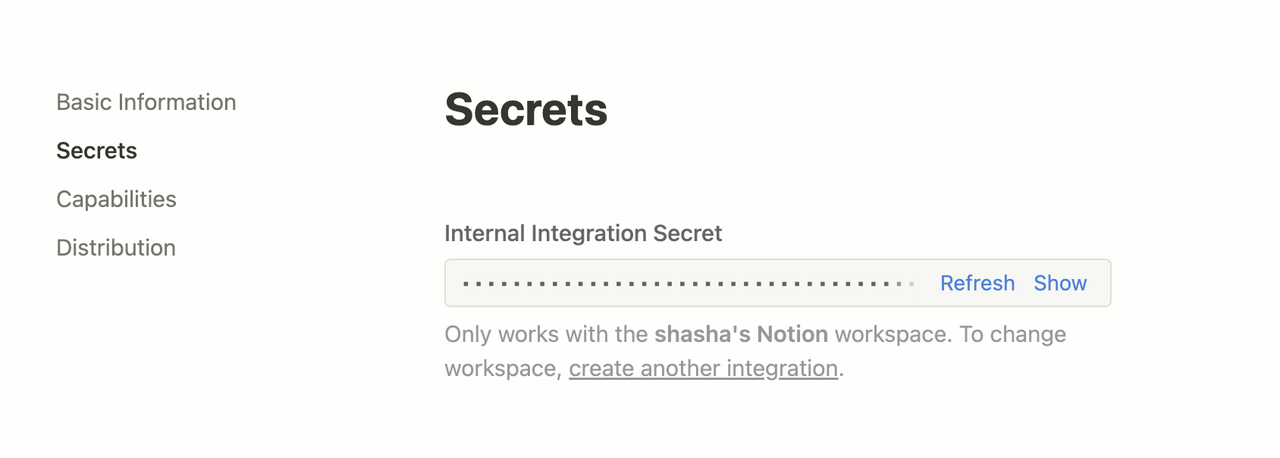

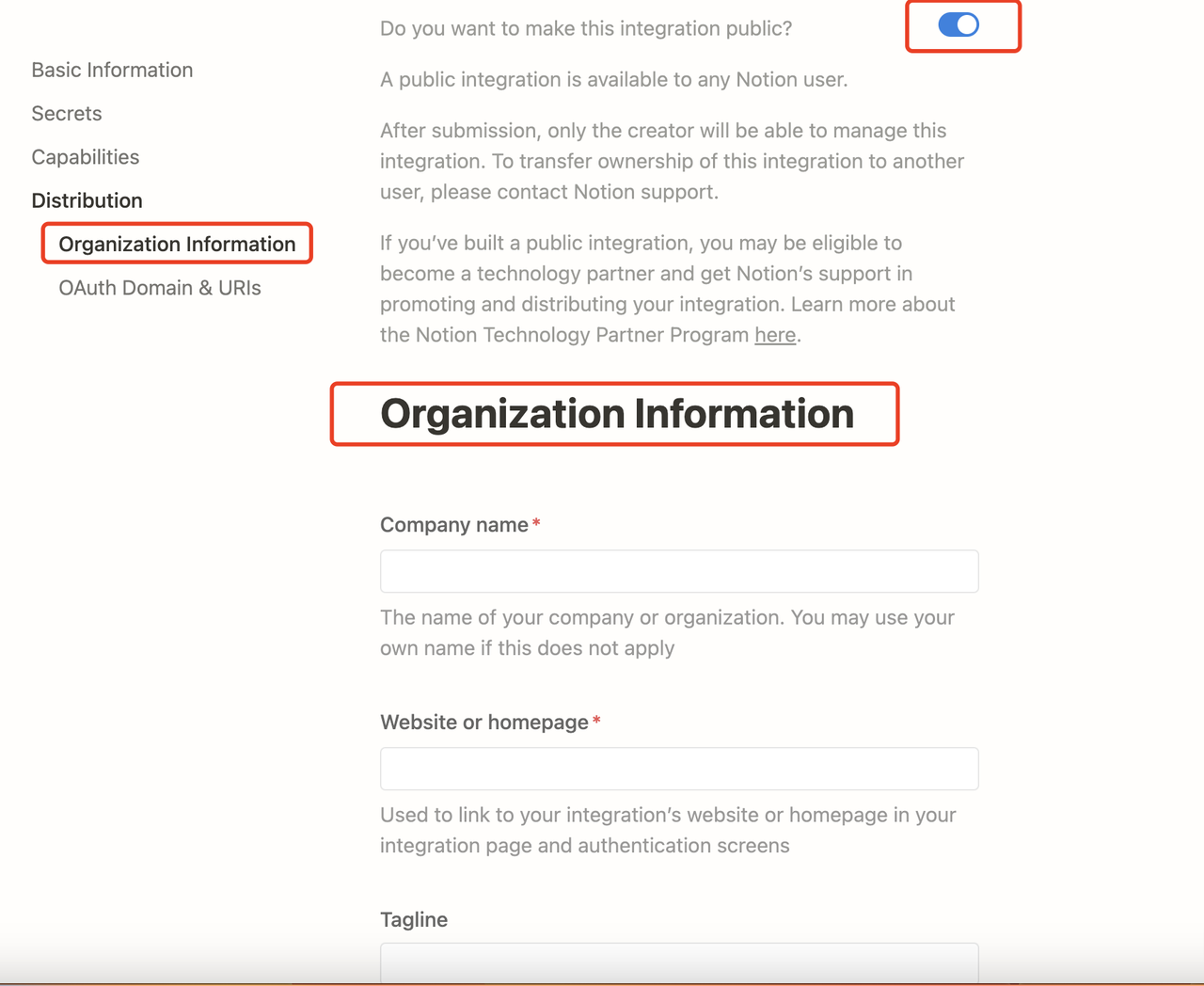

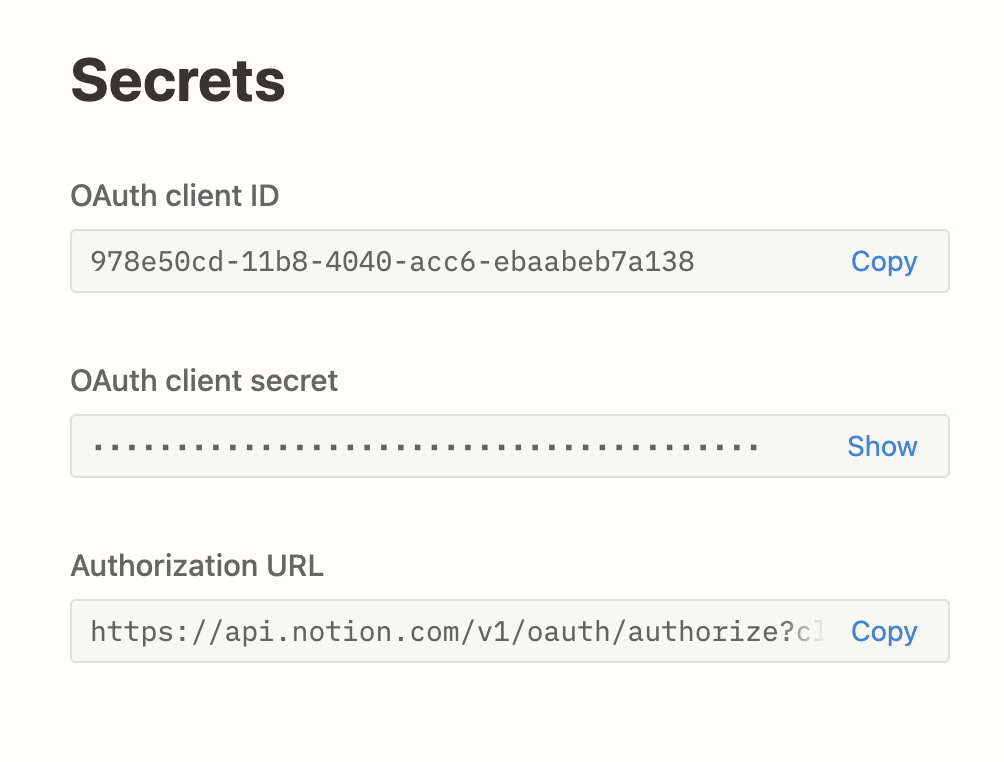

![MCP Add Server Dialog]() **Server URL**: Where the MCP server lives (like `https://api.notion.com/mcp`)

**Name & Icon**: Call it something useful. Dify tries to grab icons automatically.

**Server ID**: Unique identifier (lowercase, numbers, underscores, hyphens, max 24 chars)

Never change the server ID once you start using it. This will break any apps that use tools from this server.

## What happens next

Dify automatically:

1. Connects to the server

2. Handles any OAuth stuff

3. Gets the list of available tools

4. Makes them available in your app builder

You'll see a server card once it finds tools:

**Server URL**: Where the MCP server lives (like `https://api.notion.com/mcp`)

**Name & Icon**: Call it something useful. Dify tries to grab icons automatically.

**Server ID**: Unique identifier (lowercase, numbers, underscores, hyphens, max 24 chars)

Never change the server ID once you start using it. This will break any apps that use tools from this server.

## What happens next

Dify automatically:

1. Connects to the server

2. Handles any OAuth stuff

3. Gets the list of available tools

4. Makes them available in your app builder

You'll see a server card once it finds tools:

![MCP Server Card]() ## Managing servers

Click any server card to:

**Update Tools**: Refresh when the external service adds new tools

## Managing servers

Click any server card to:

**Update Tools**: Refresh when the external service adds new tools

![MCP Server Tools List]() **Re-authorize**: Fix auth when tokens expire

**Edit Settings**: Change server details (but not the ID!)

**Remove**: Disconnect the server (this breaks apps using its tools)

## Using MCP tools

Once connected, MCP tools show up everywhere you'd expect:

**In agents**: Tools appear grouped by server ("Notion MCP » Create Page")

**In workflows**: MCP tools become available as nodes

**In agent nodes**: Same as regular agents

## Customizing tools

When you add an MCP tool, you can customize it:

**Re-authorize**: Fix auth when tokens expire

**Edit Settings**: Change server details (but not the ID!)

**Remove**: Disconnect the server (this breaks apps using its tools)

## Using MCP tools

Once connected, MCP tools show up everywhere you'd expect:

**In agents**: Tools appear grouped by server ("Notion MCP » Create Page")

**In workflows**: MCP tools become available as nodes

**In agent nodes**: Same as regular agents

## Customizing tools

When you add an MCP tool, you can customize it:

![MCP Tool Settings]() **Description**: Override the default description to be more specific

**Parameters**: For each tool parameter, choose:

* **Auto**: Let the AI decide the value

* **Fixed**: Set a specific value that never changes

**Example**: For a search tool, set `numResults` to 5 (fixed) but keep `query` on auto.

## Sharing apps

When you export apps that use MCP tools:

* The export includes server IDs

* To use the app elsewhere, add the same servers with identical IDs

* Document which MCP servers your app needs

## Troubleshooting

**"Unconfigured Server"**: Check the URL and re-authorize

**Missing tools**: Hit "Update Tools"

**Broken apps**: You probably changed a server ID. Add it back with the original ID.

## Tips

* Use permanent, descriptive server IDs like `github-prod` or `crm-system`

* Keep the same MCP setup across dev/staging/production

* Set fixed values for config stuff, auto for dynamic inputs

* Test MCP integrations before deploying

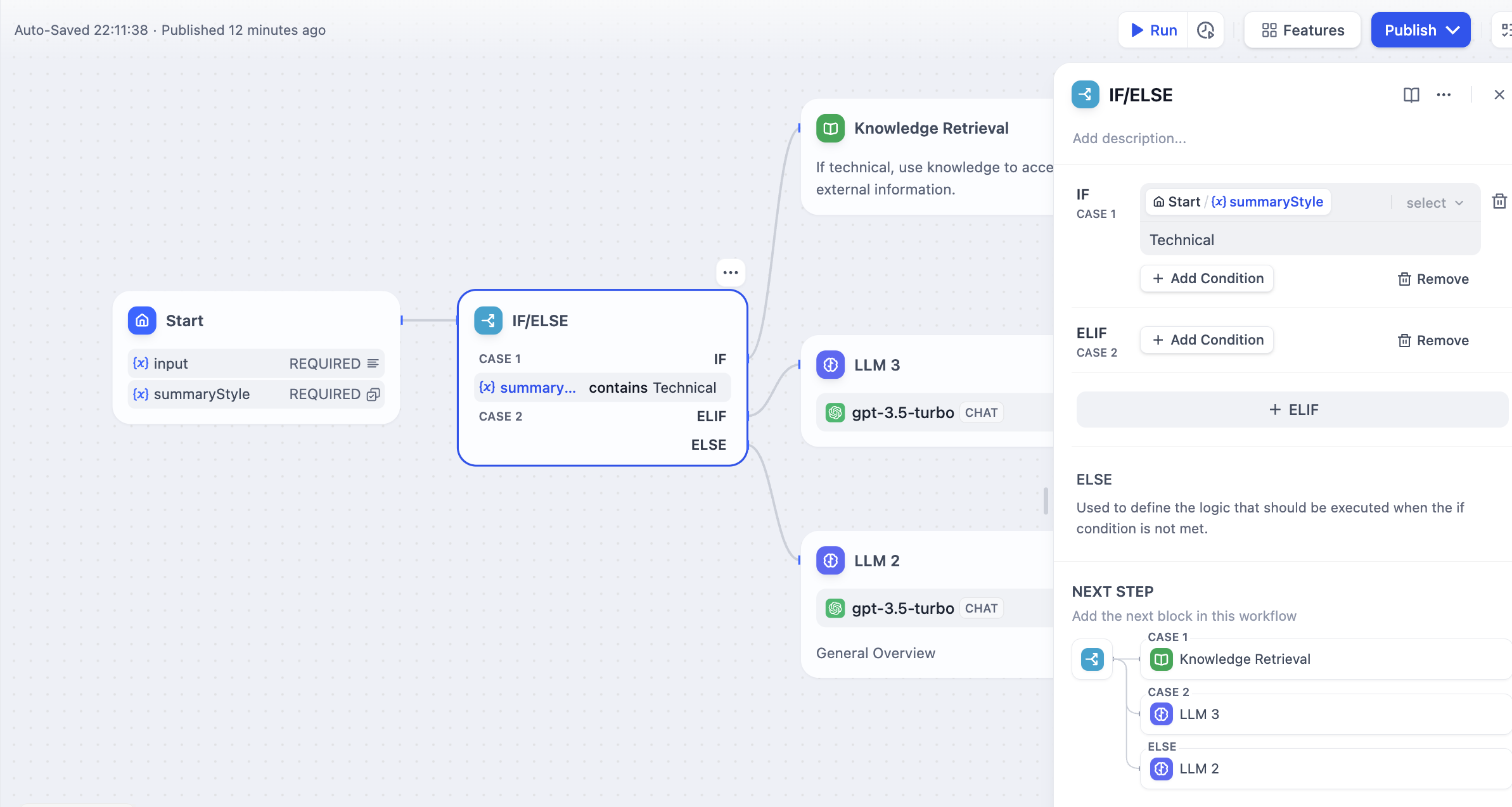

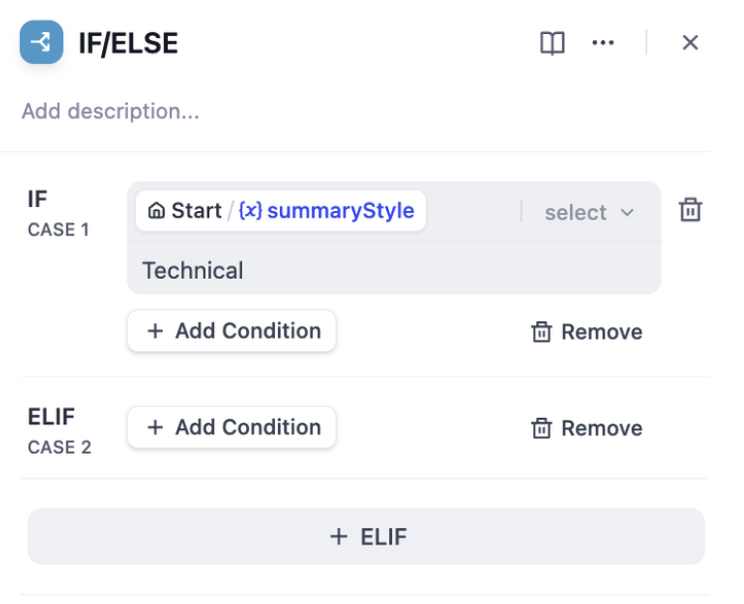

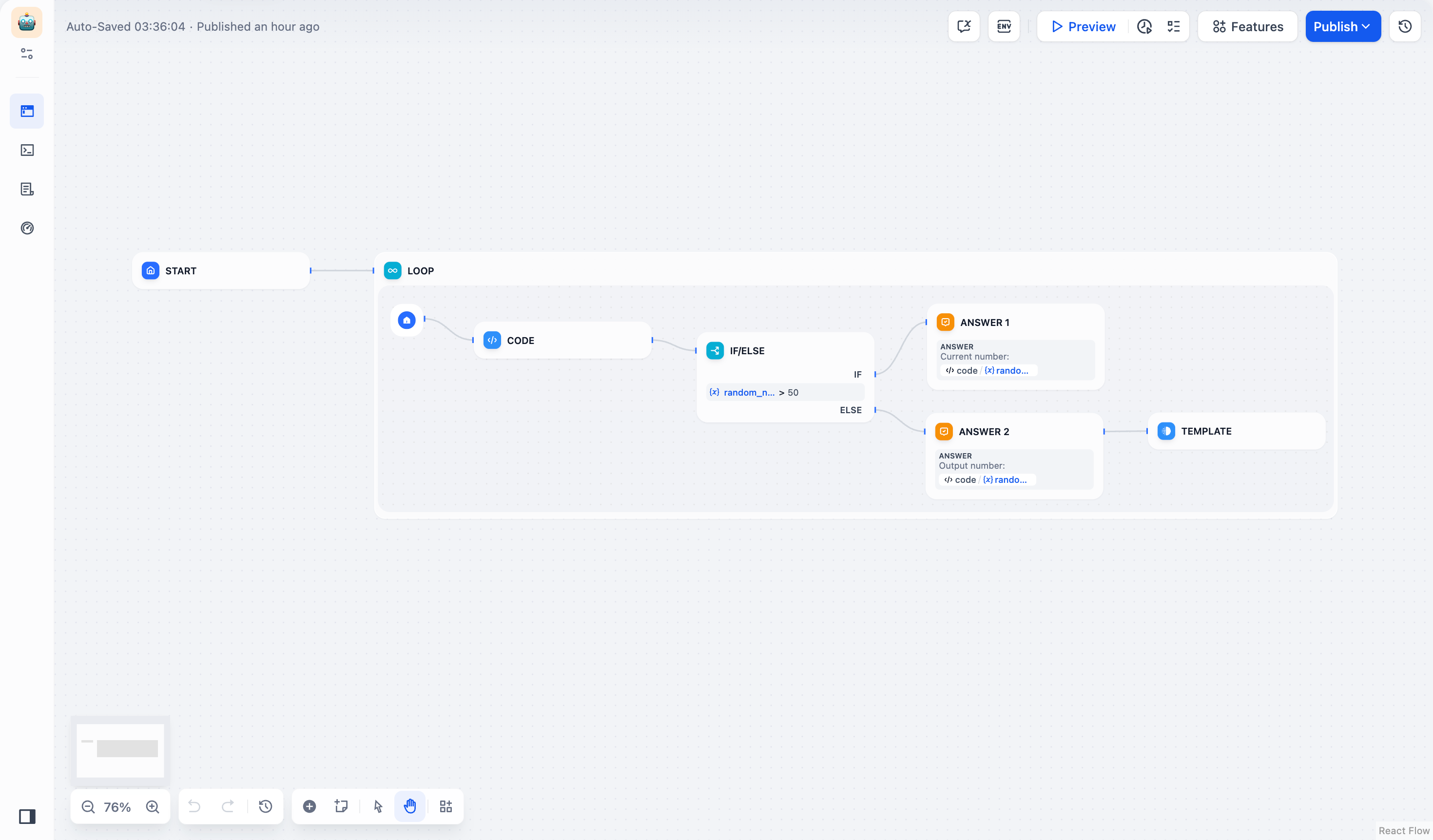

# Flow Logic

Source: https://docs.dify.ai/en/use-dify/build/orchestrate-node

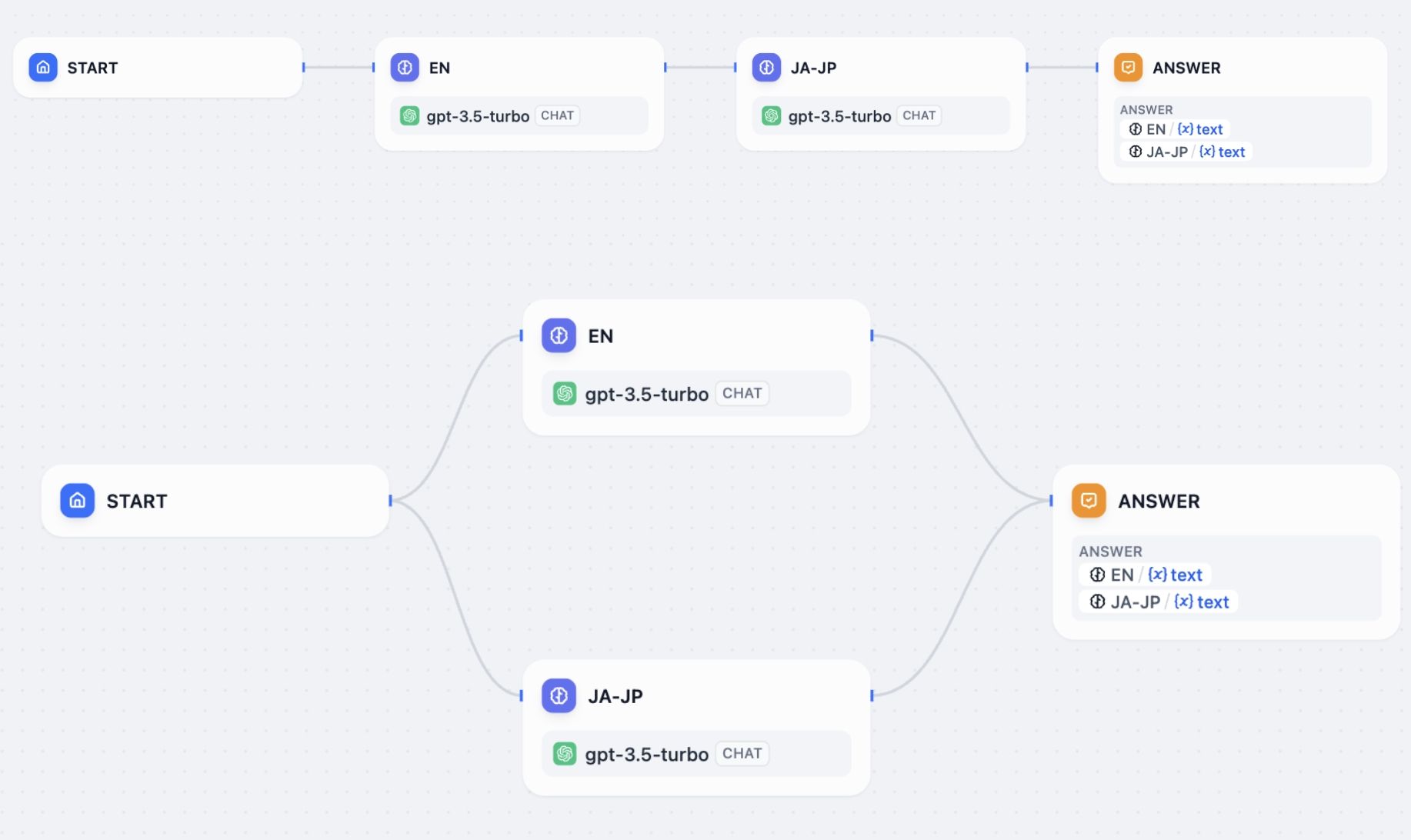

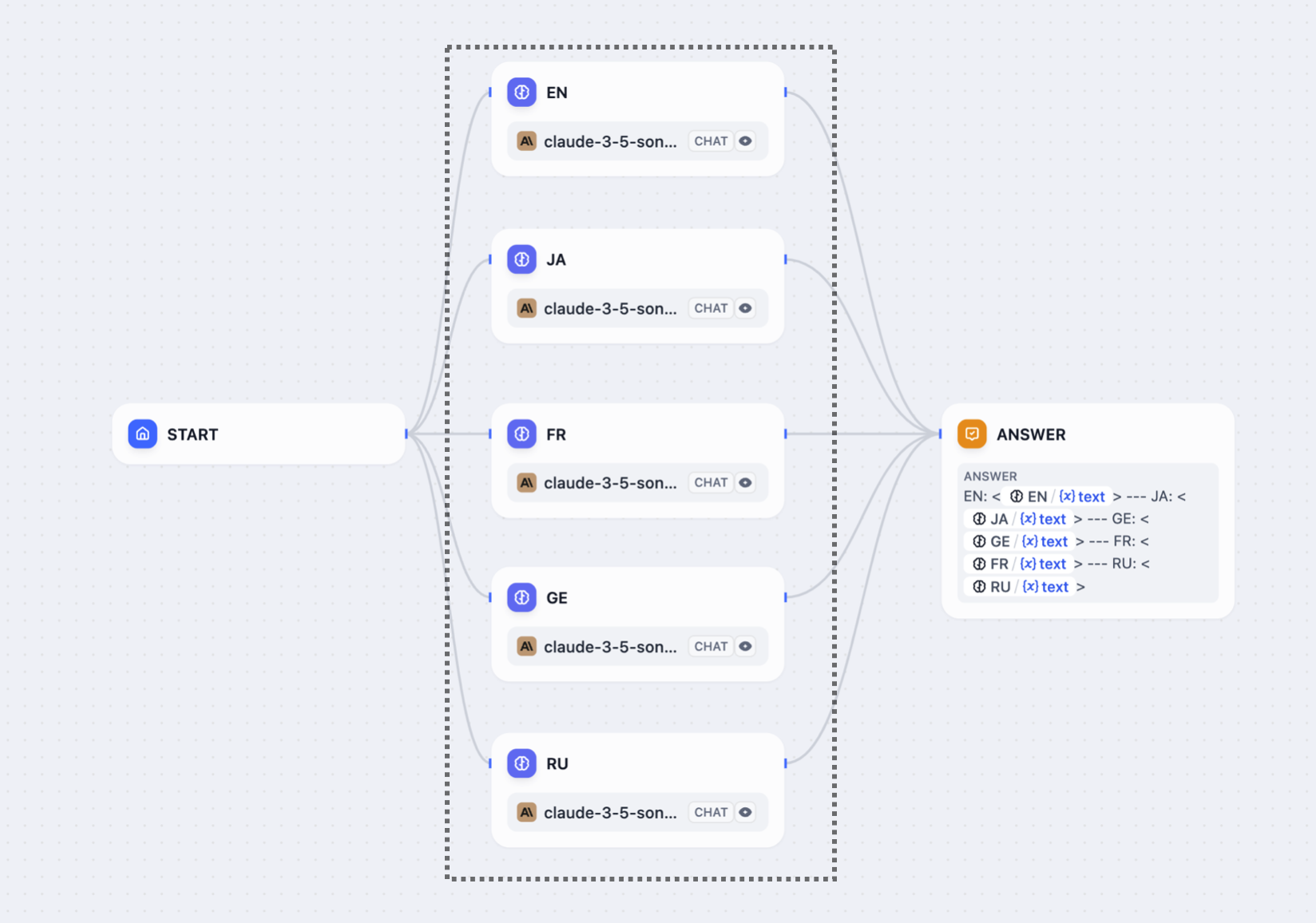

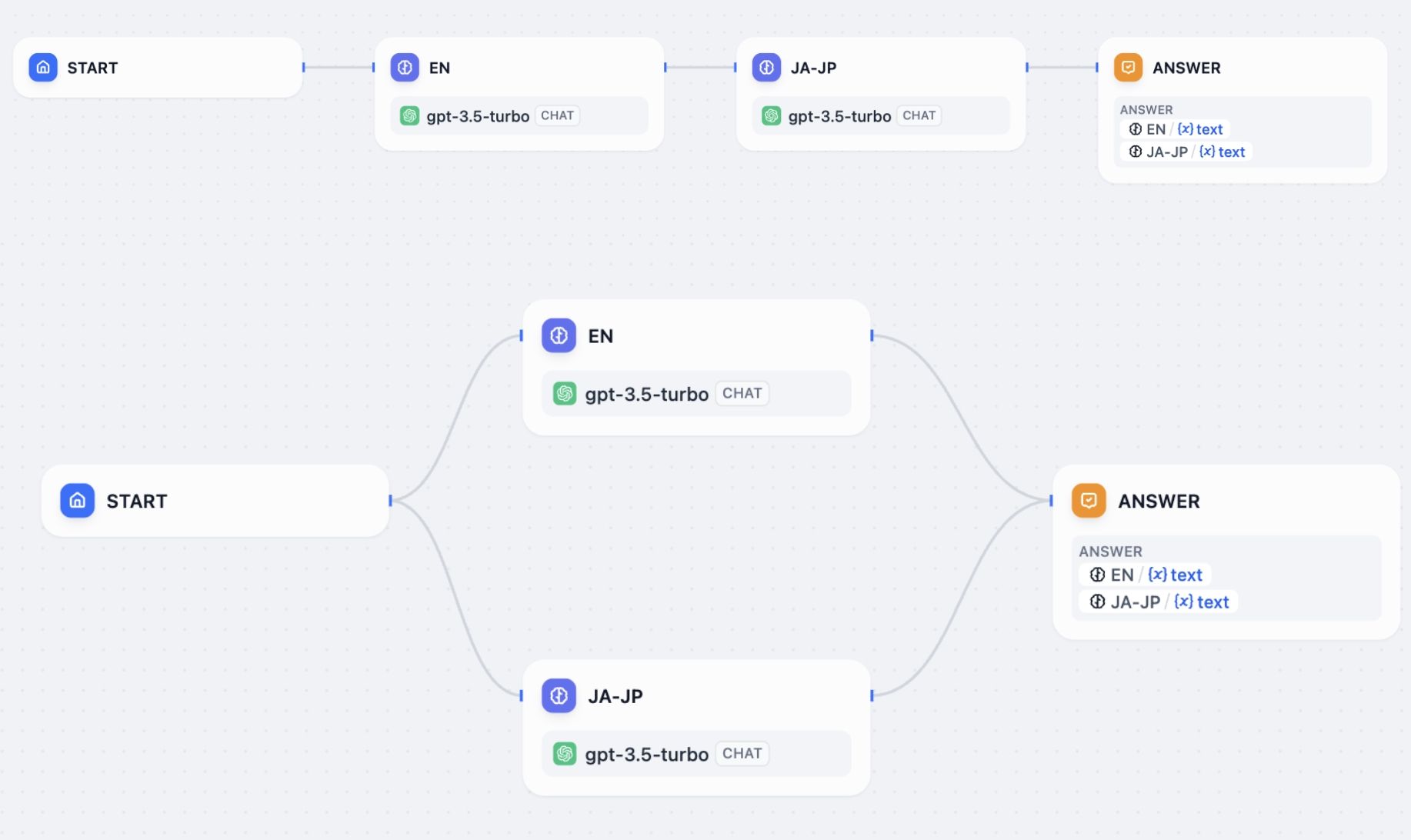

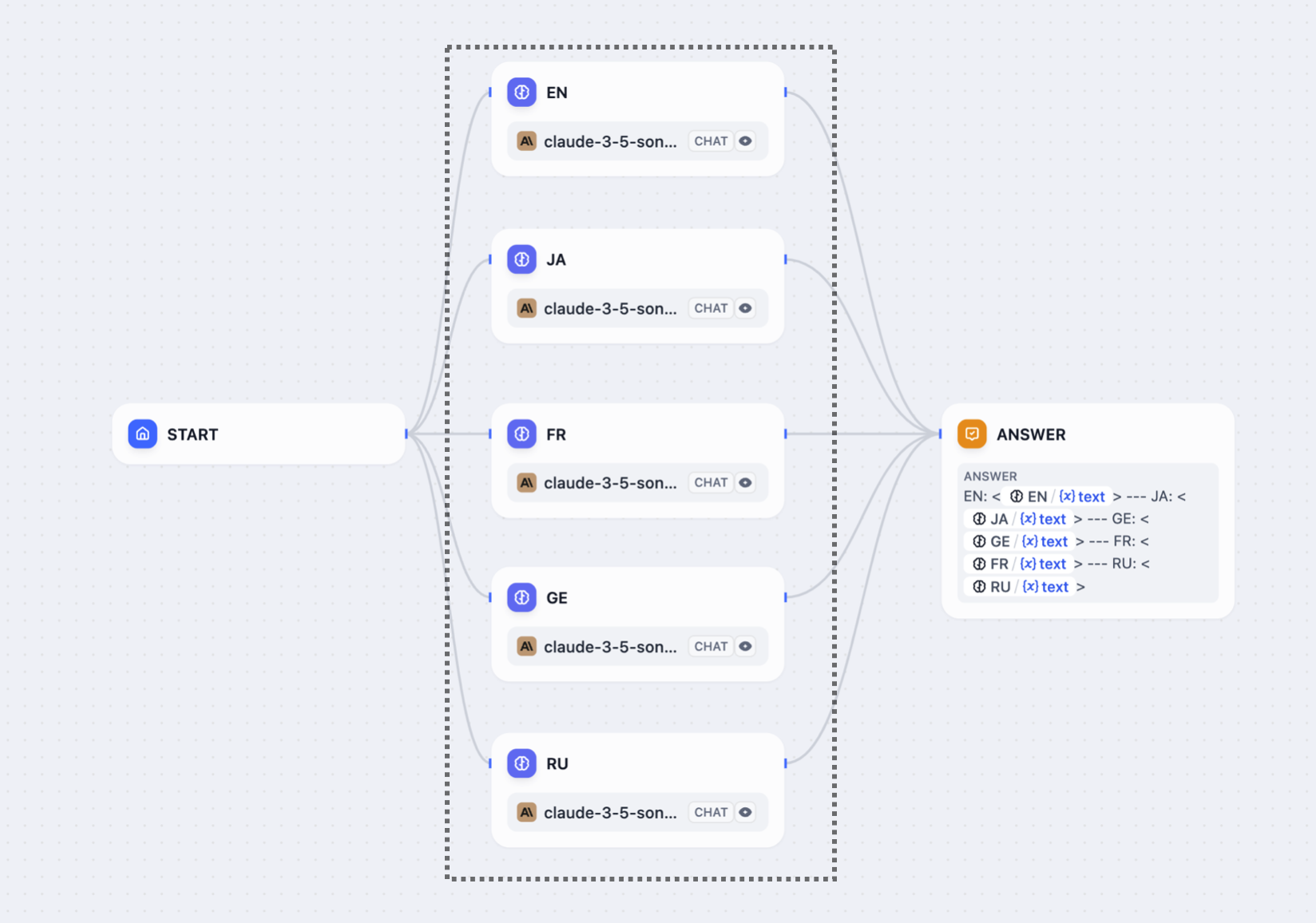

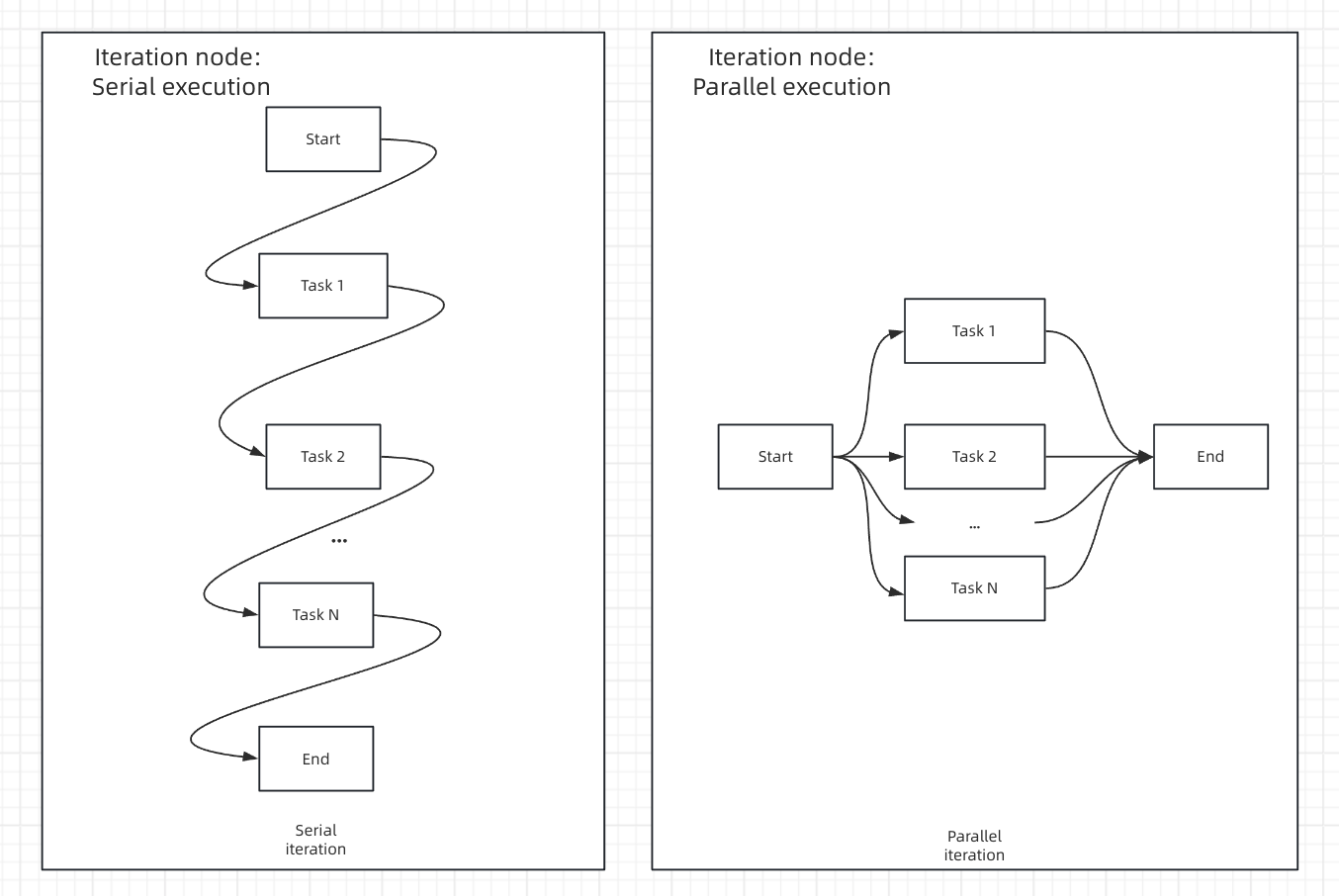

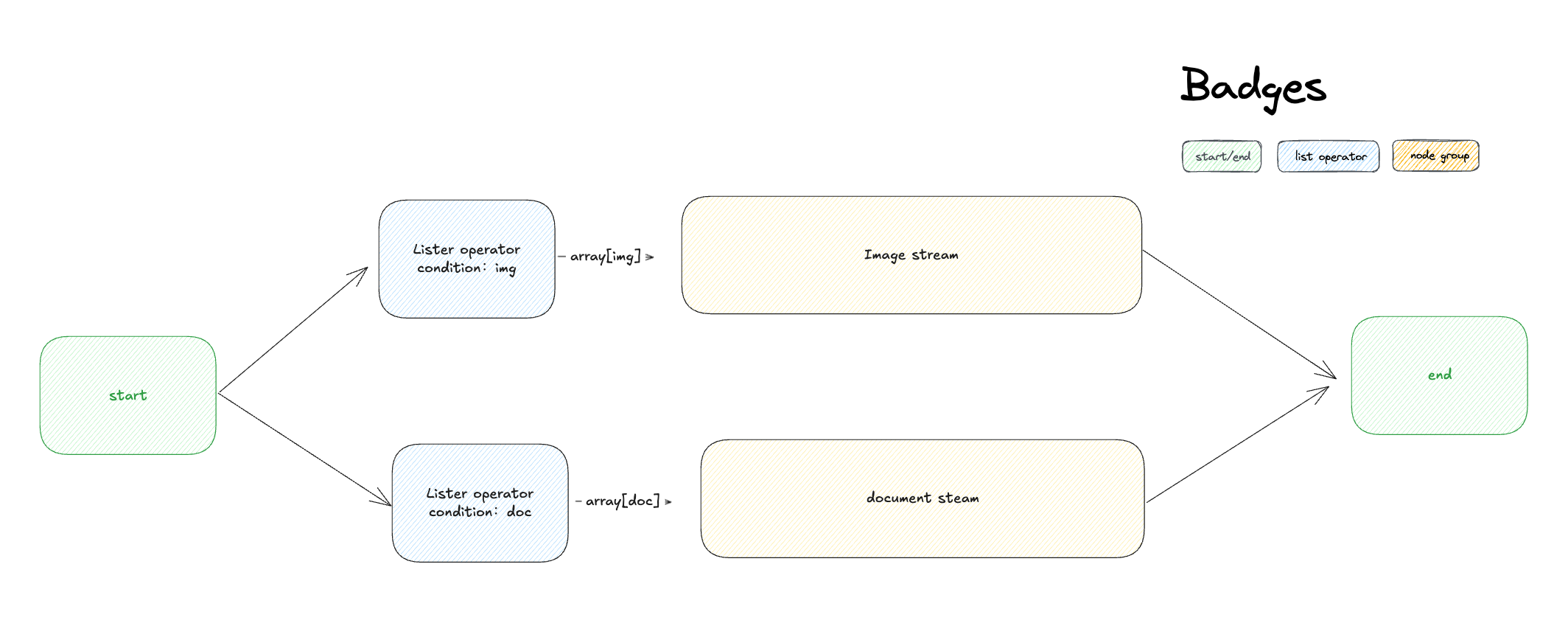

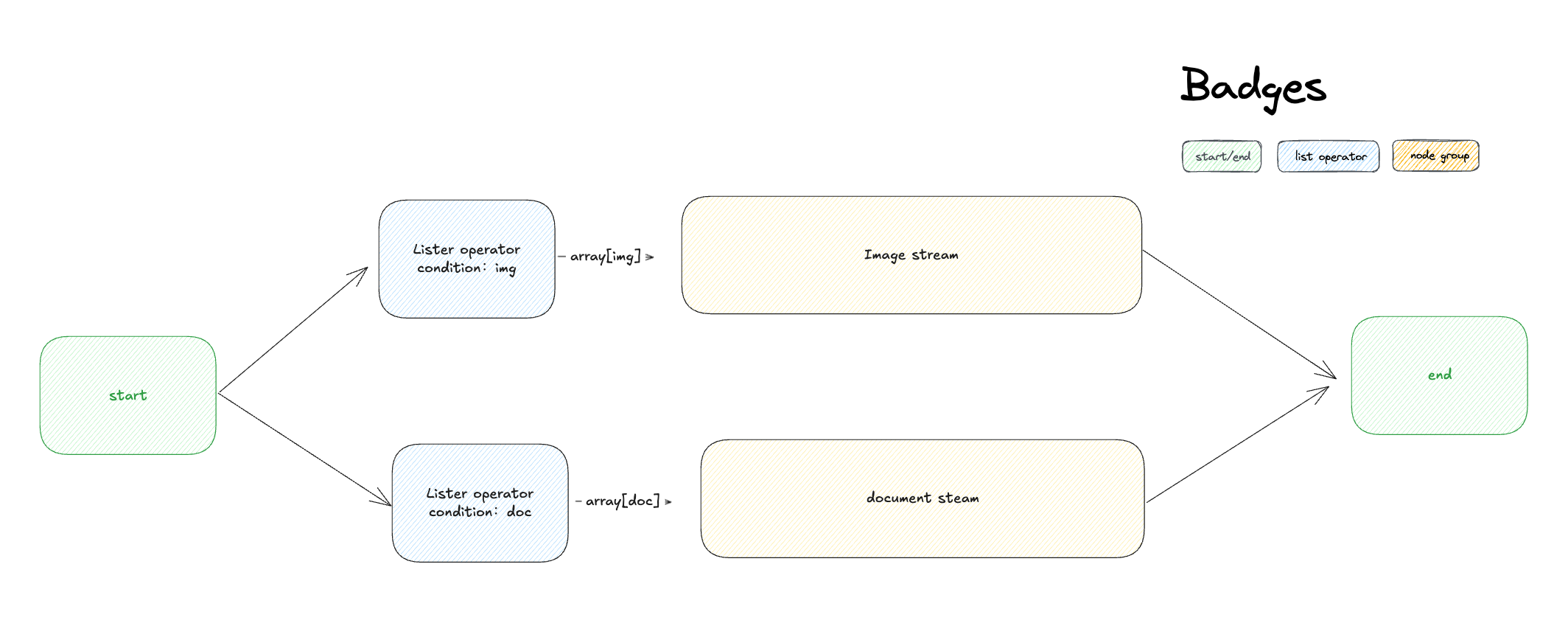

## Serial vs. Parallel execution

Flows execute differently depending on how you connect the nodes.

When you connect nodes one after another, they execute in sequence. Each node waits for the previous one to finish before starting. Each node may use variables from any node that ran before it in the chain.

When you connect multiple nodes to the same starting node, they all run at the same time. Nodes may not reference parallel node outputs.

You can have a maximum of 10 parallel branches from one node, and up to 3 levels of nested parallel structures.

## Variable access

In serial flows, nodes can access variables from any previous node in the chain.

In parallel flows, nodes can access variables from nodes that ran before the parallel split, but they cannot access variables from other parallel nodes since they're running simultaneously.

After parallel branches finish, downstream nodes can access variables from all the parallel outputs.

## Answer node streaming

Answer nodes handle parallel outputs differently. When an Answer node references variables from multiple parallel branches, it streams content progressively:

* Content streams up to the first unresolved variable

* Once that variable's node completes, streaming continues to the next unresolved variable

* The order of variables in the Answer node determines the streaming sequence, not the node execution order

For example, in a flow where `Node A -> Node B -> Answer` with the Answer containing `{{B}}` then `{{A}}`, the Answer will wait for Node B before streaming any content, even if Node A completes first.

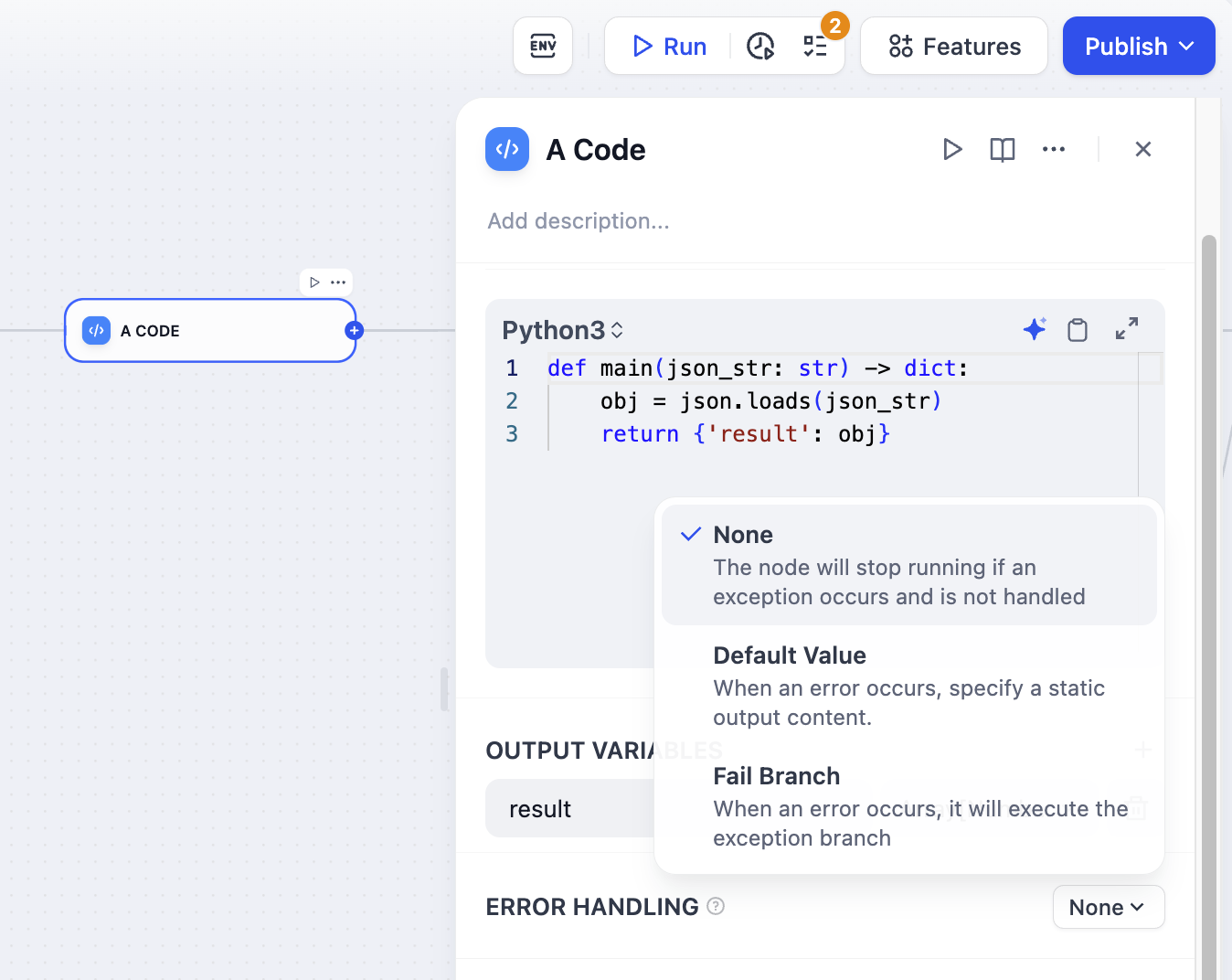

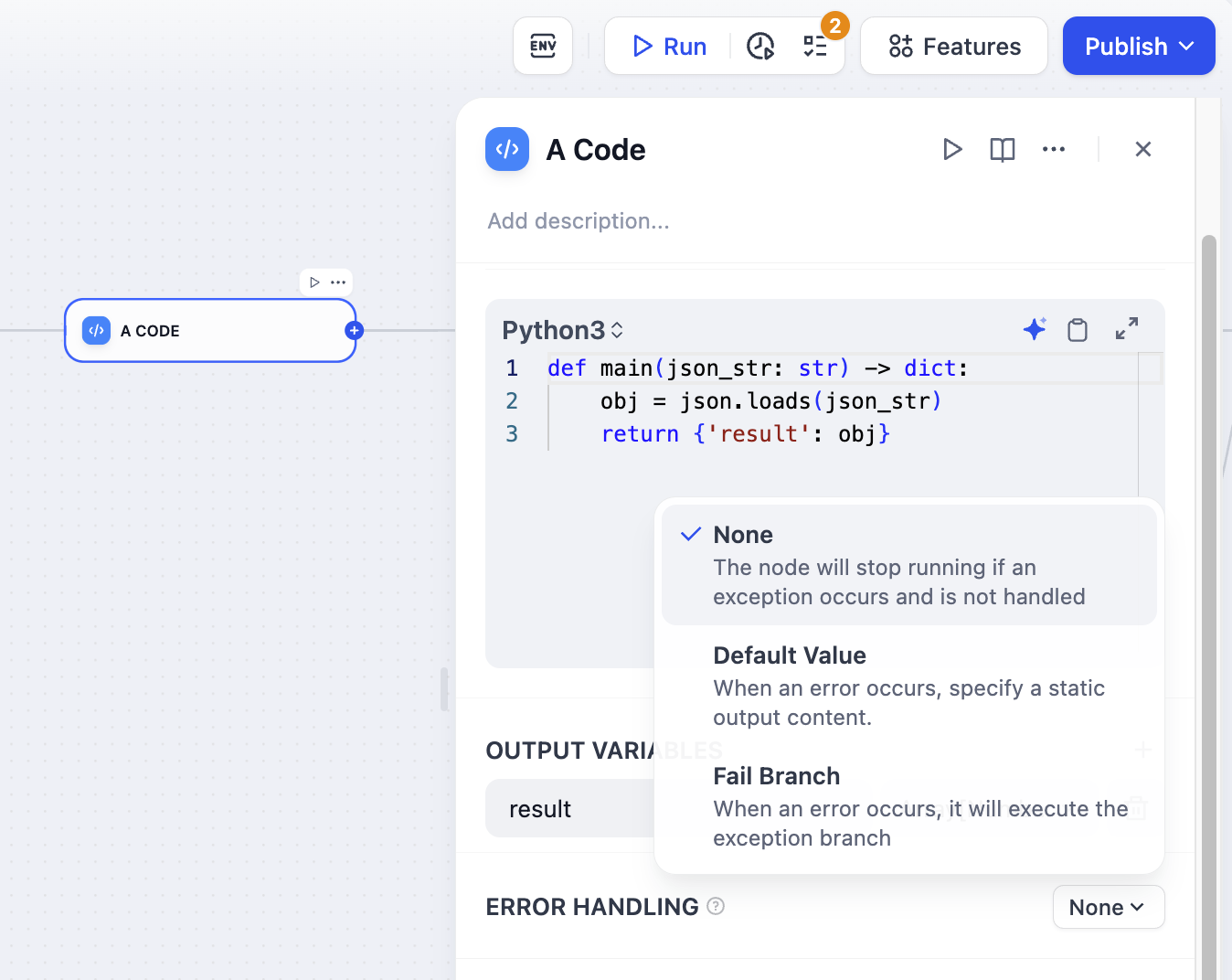

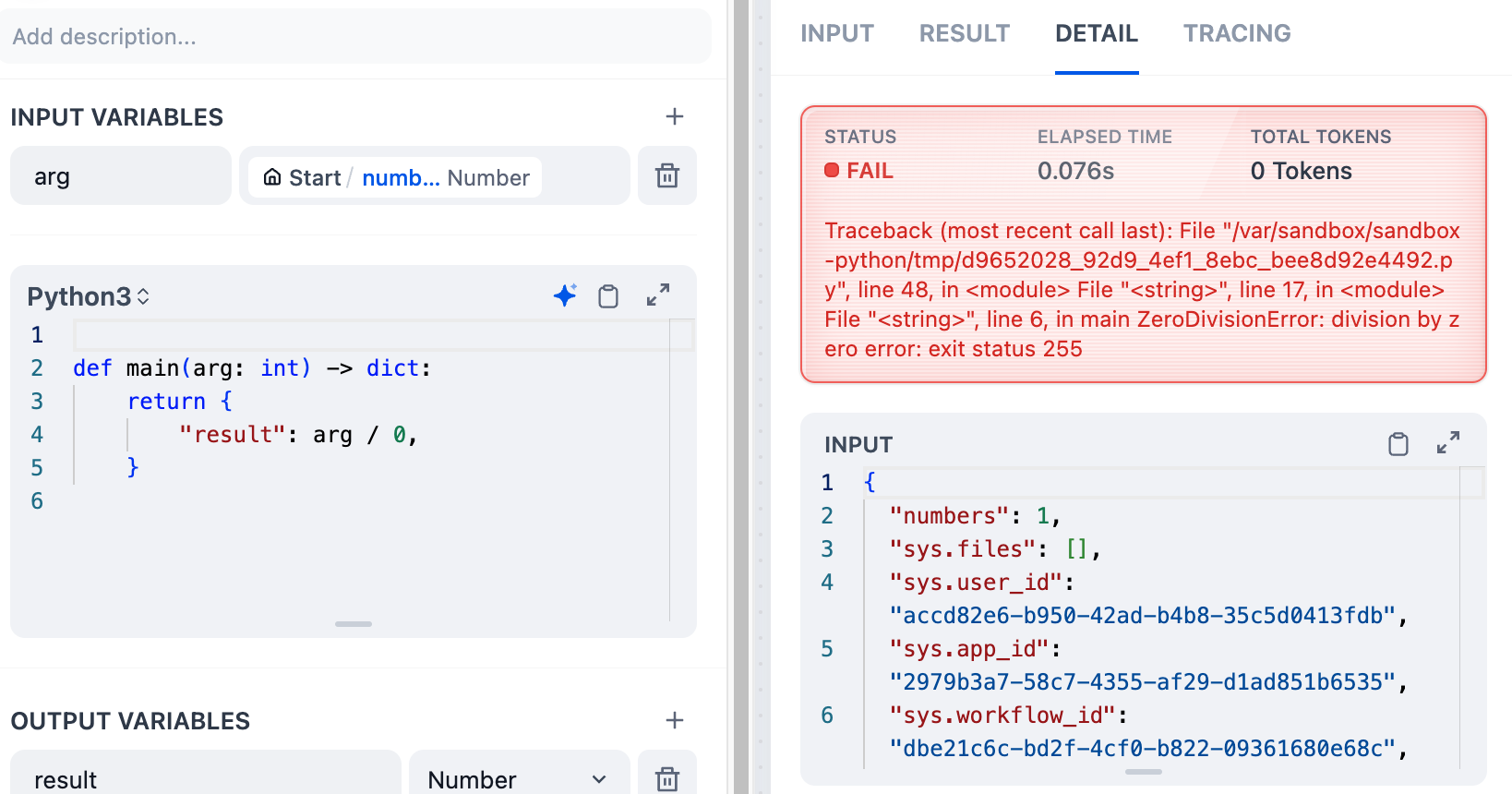

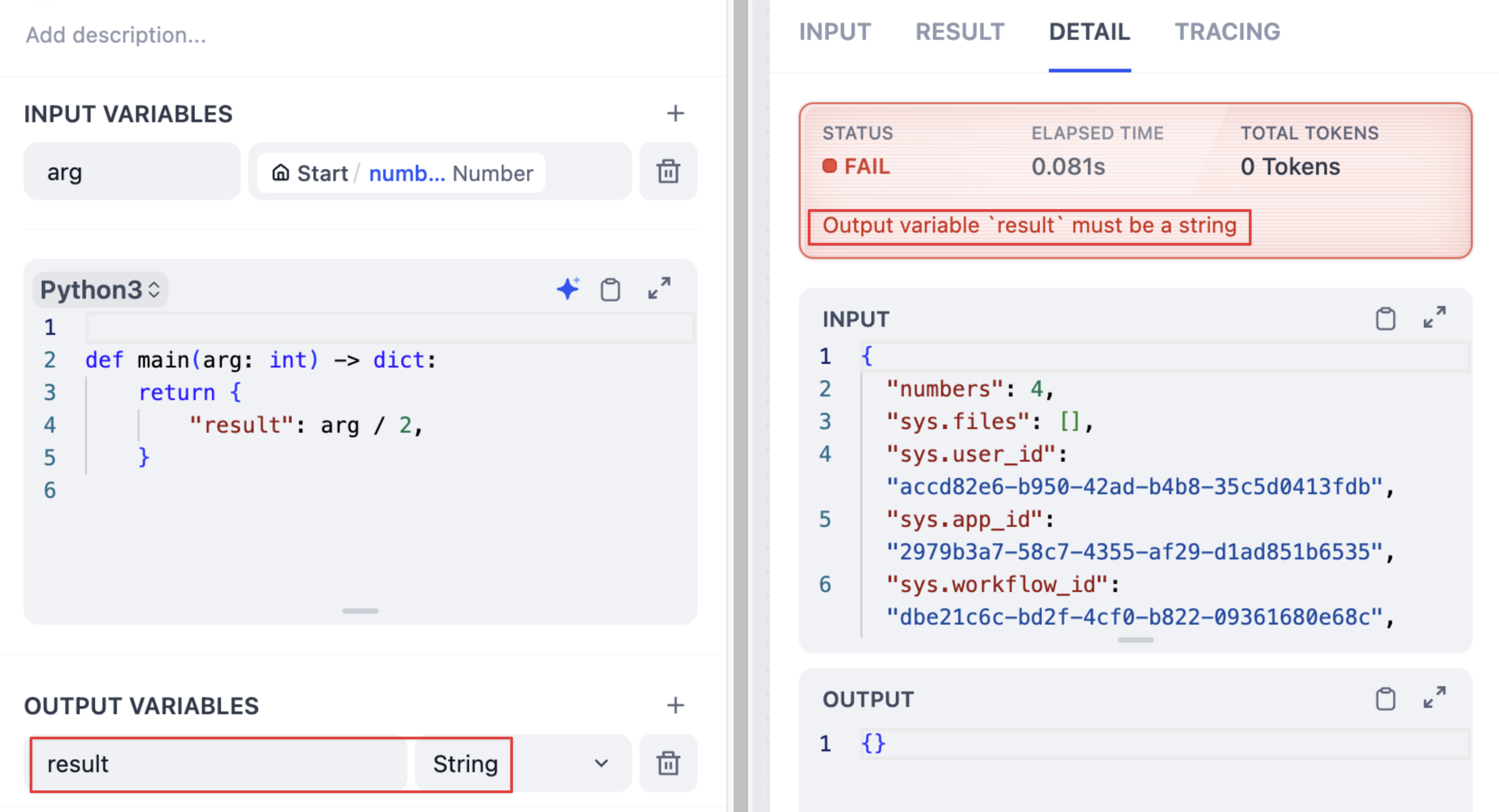

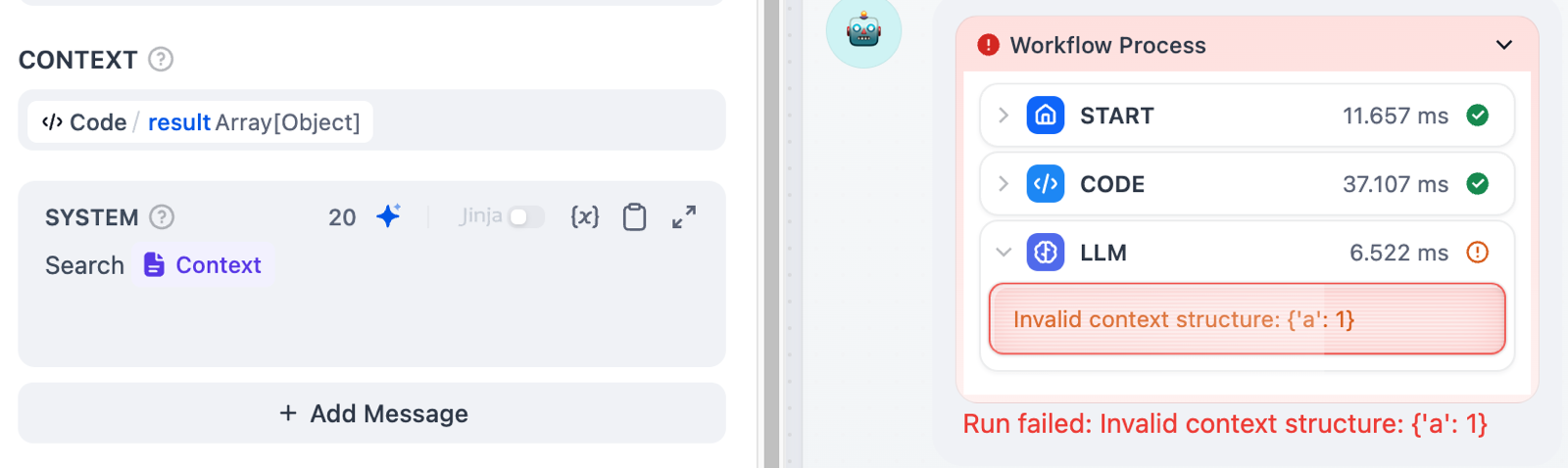

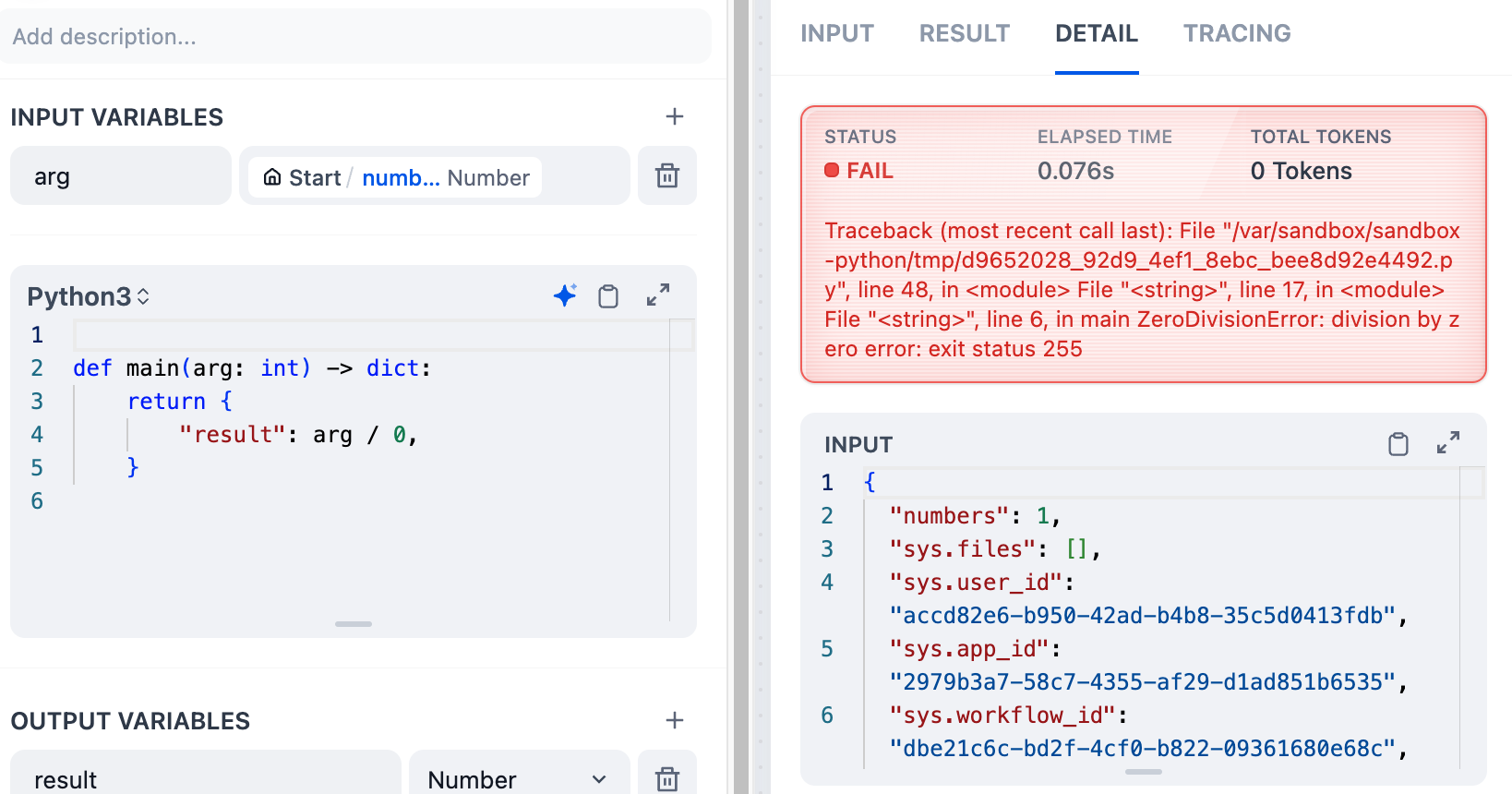

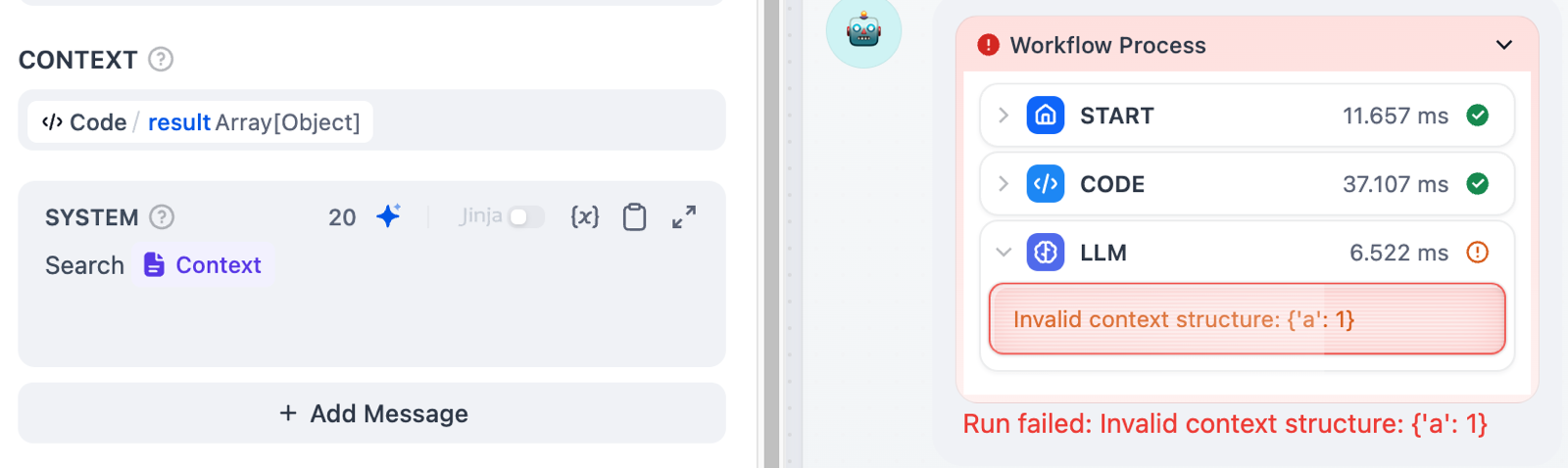

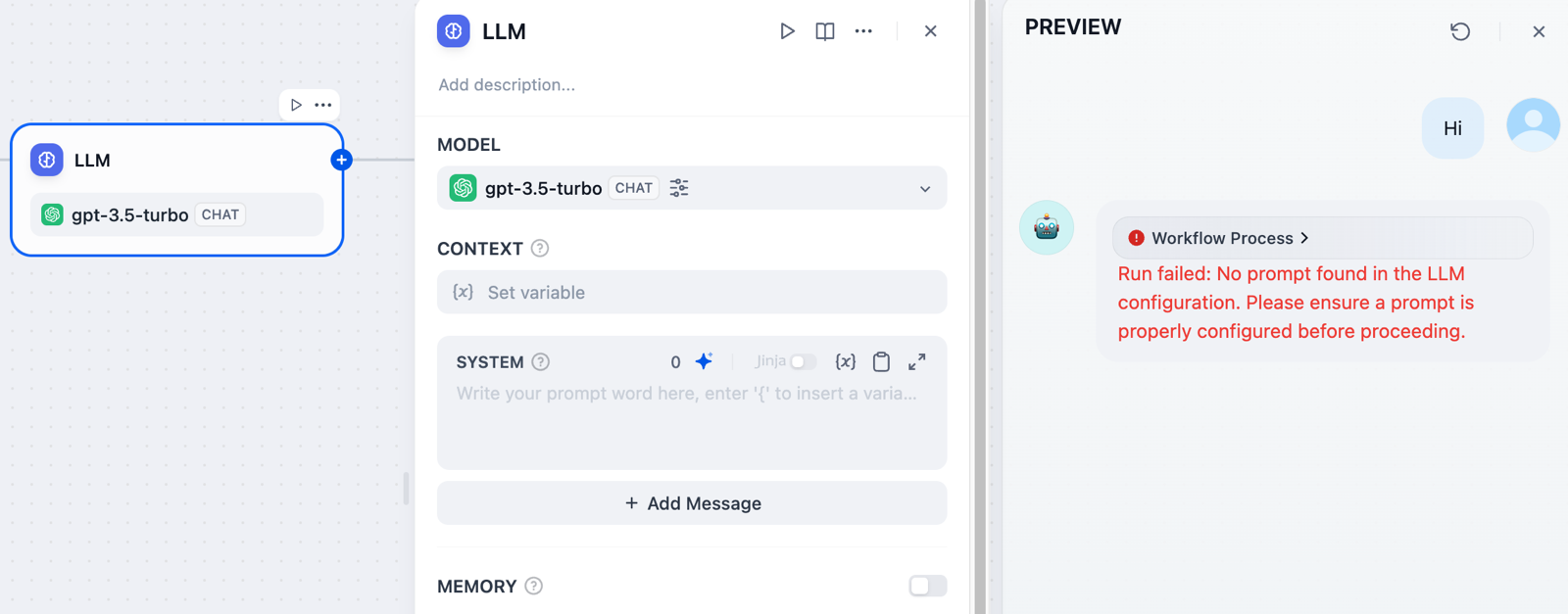

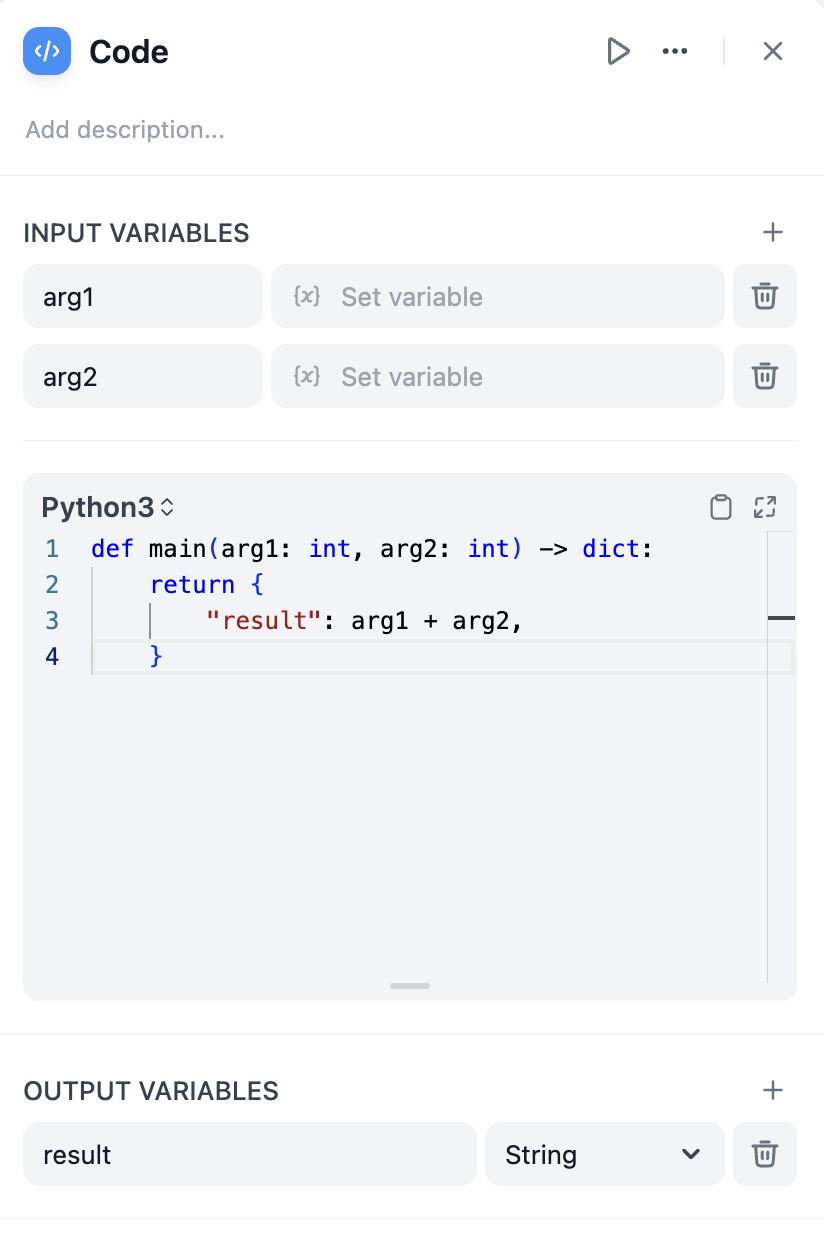

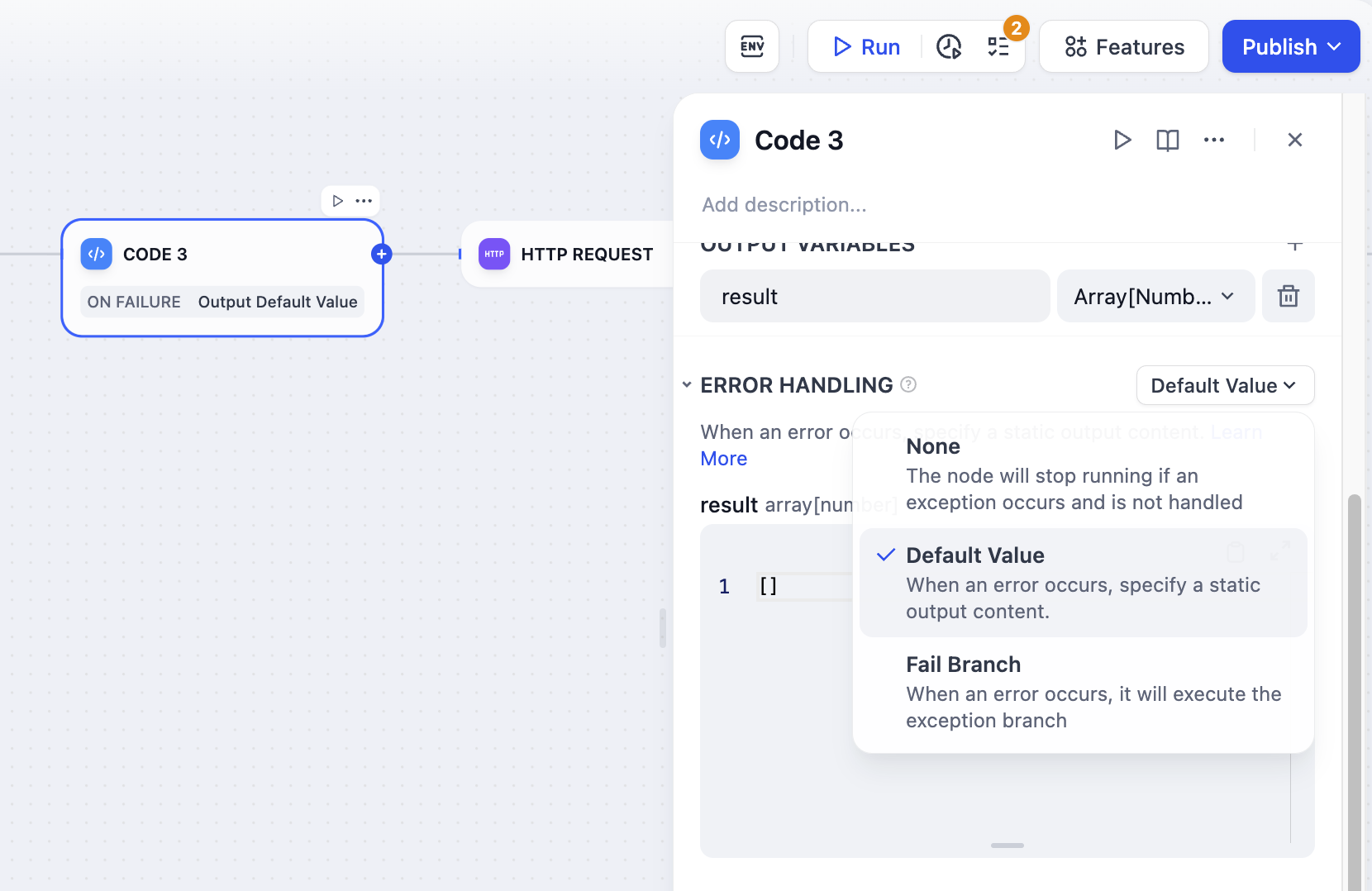

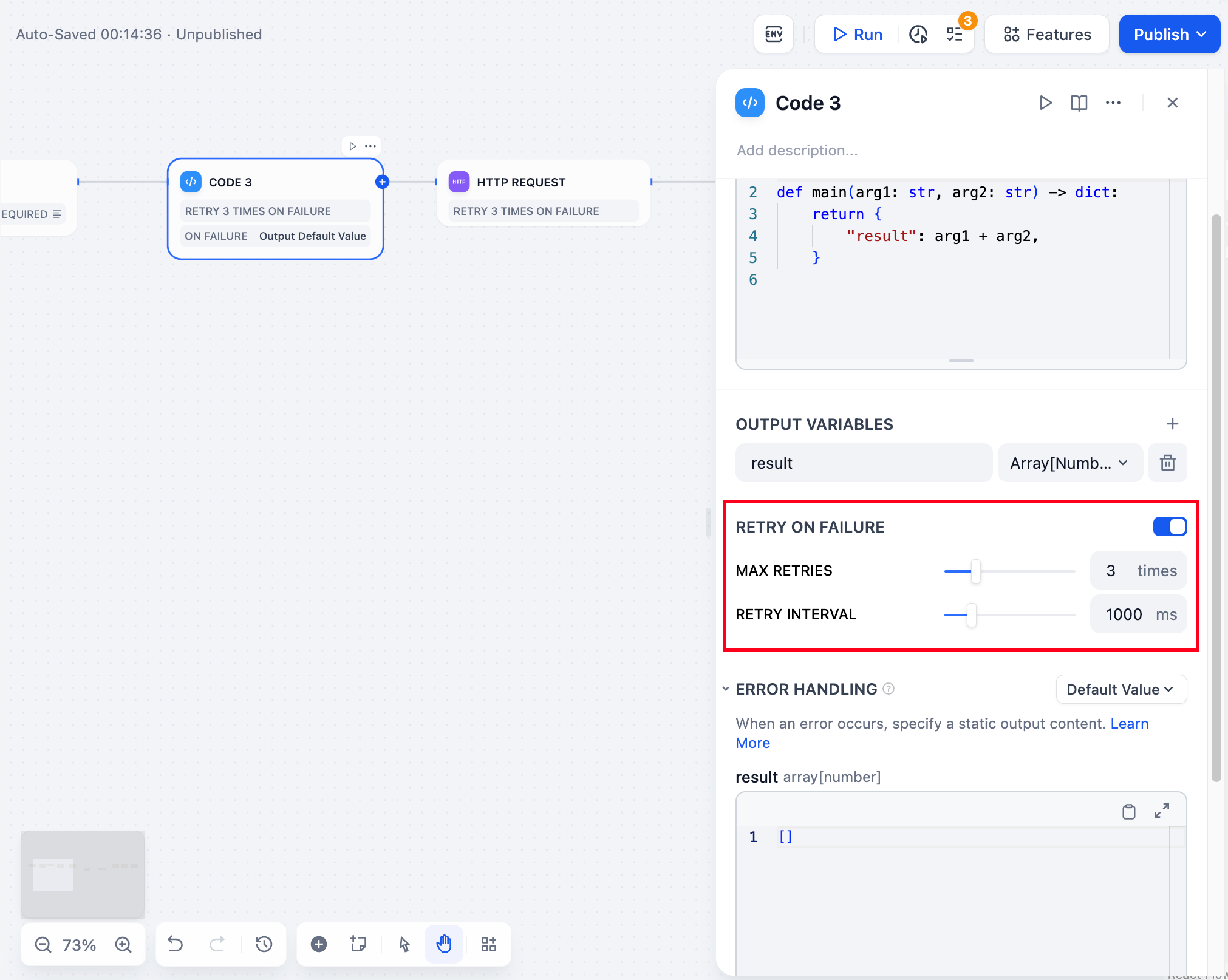

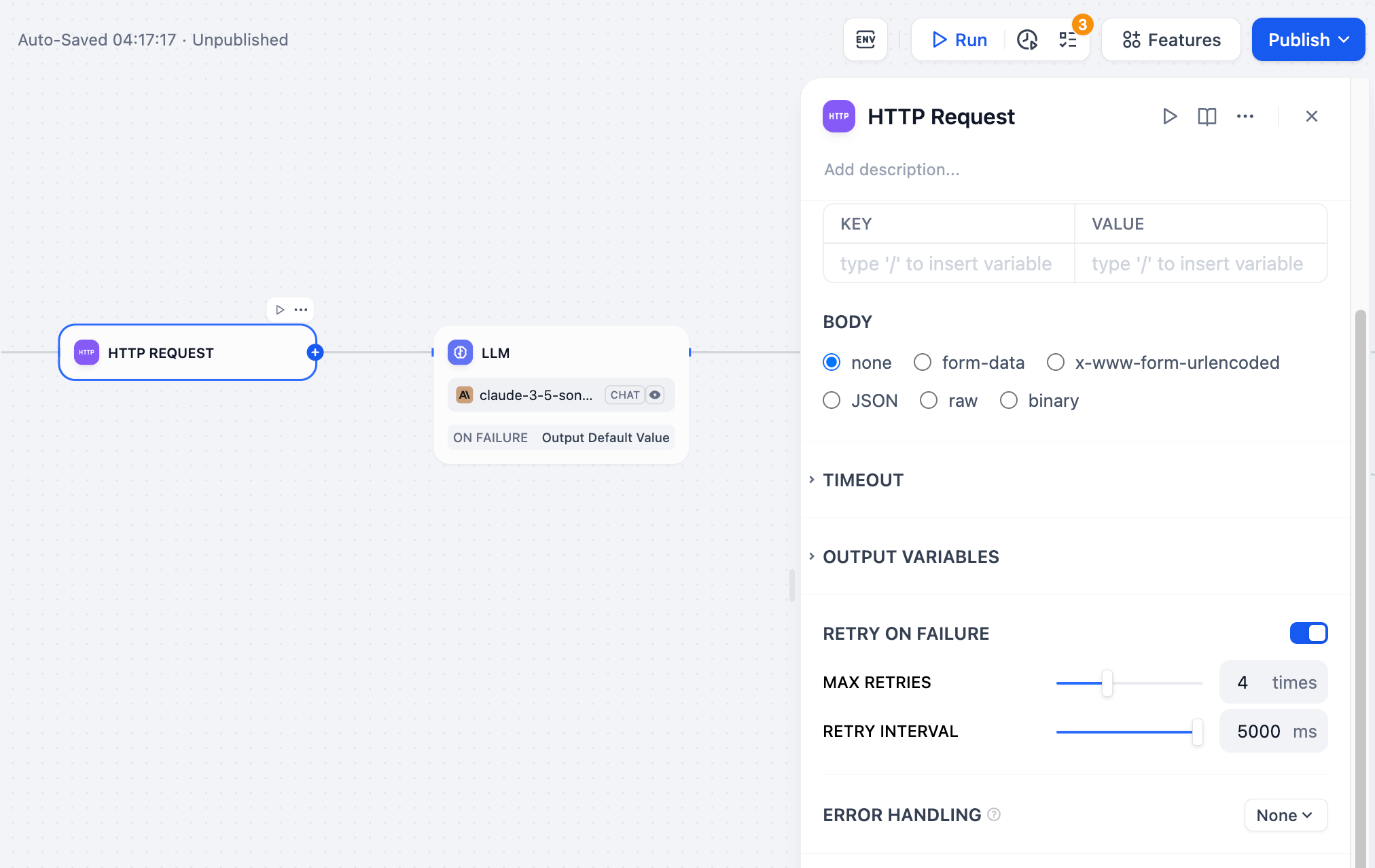

# Handling Errors

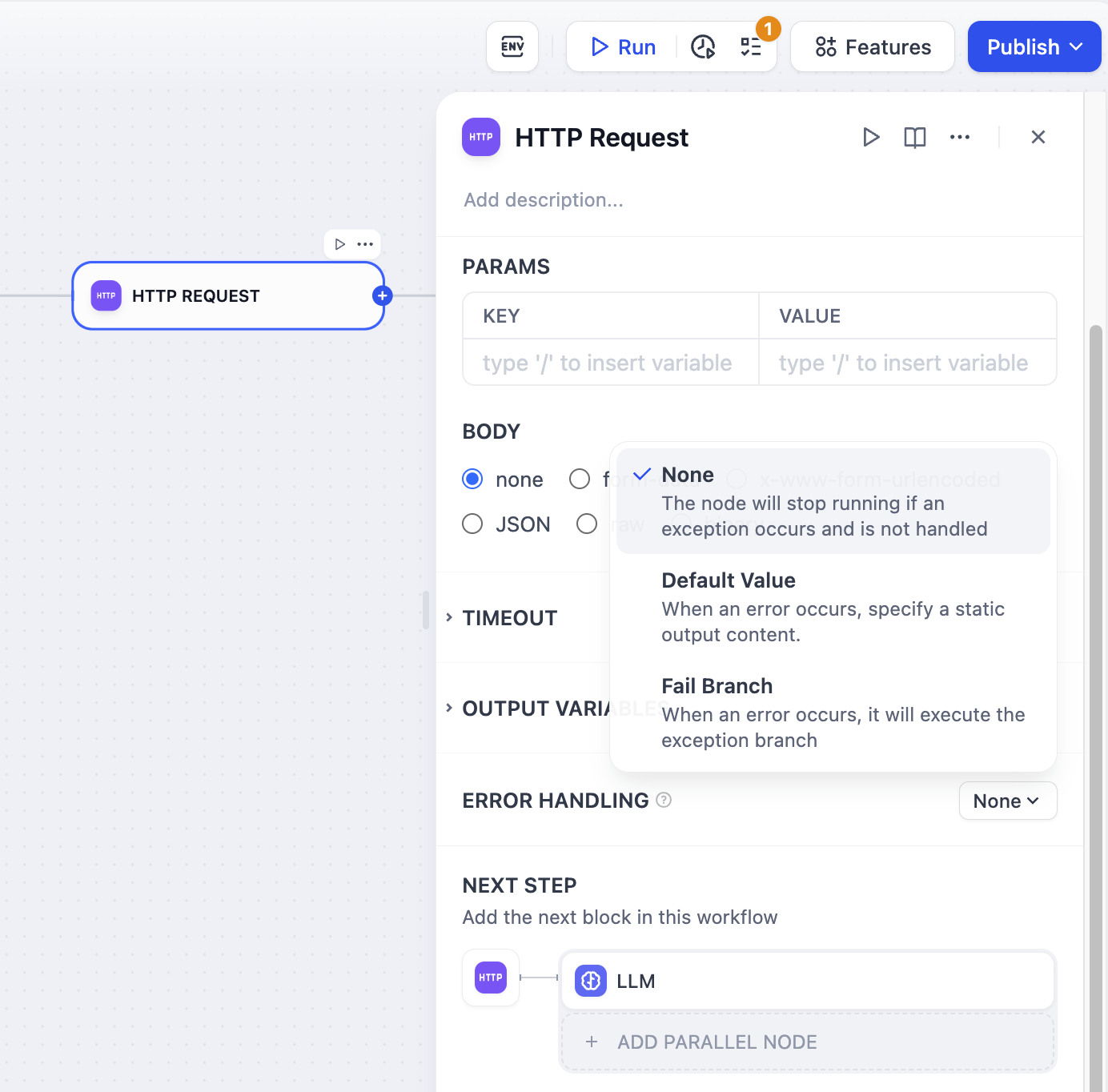

Source: https://docs.dify.ai/en/use-dify/build/predefined-error-handling-logic

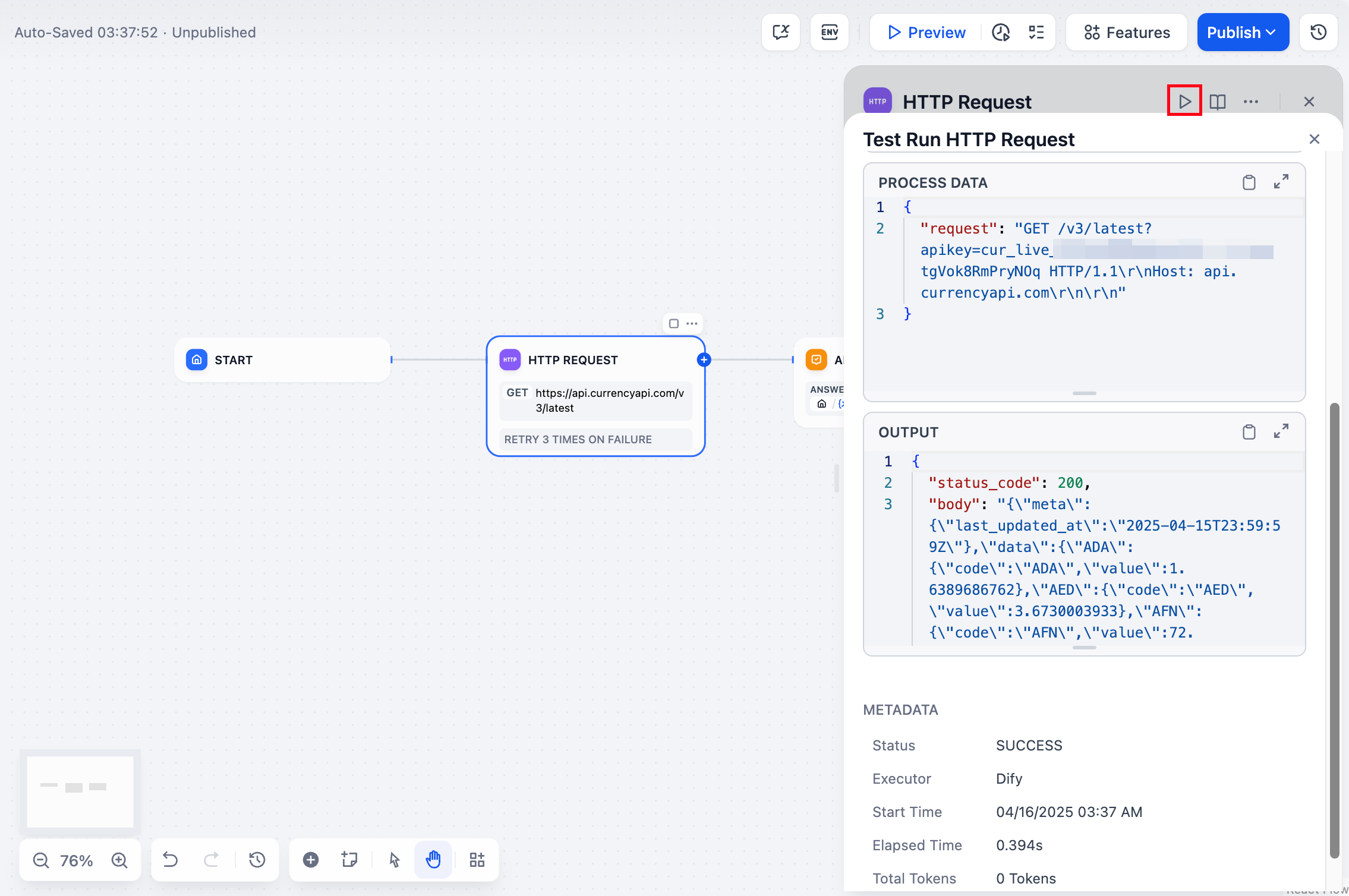

[LLM](/en/use-dify/nodes/llm), [HTTP](/en/use-dify/nodes/http-request), [Code](/en/use-dify/nodes/code), and [Tool](/en/use-dify/nodes/tools)

nodes support error handling out-of-box. When a node fails, it can take one of the three behaviors below:

The default behavior. When a node fails, the whole workflow stops. You get the original error message.

Use this when:

* You're testing and want to see what broke

* The workflow can't continue without this step

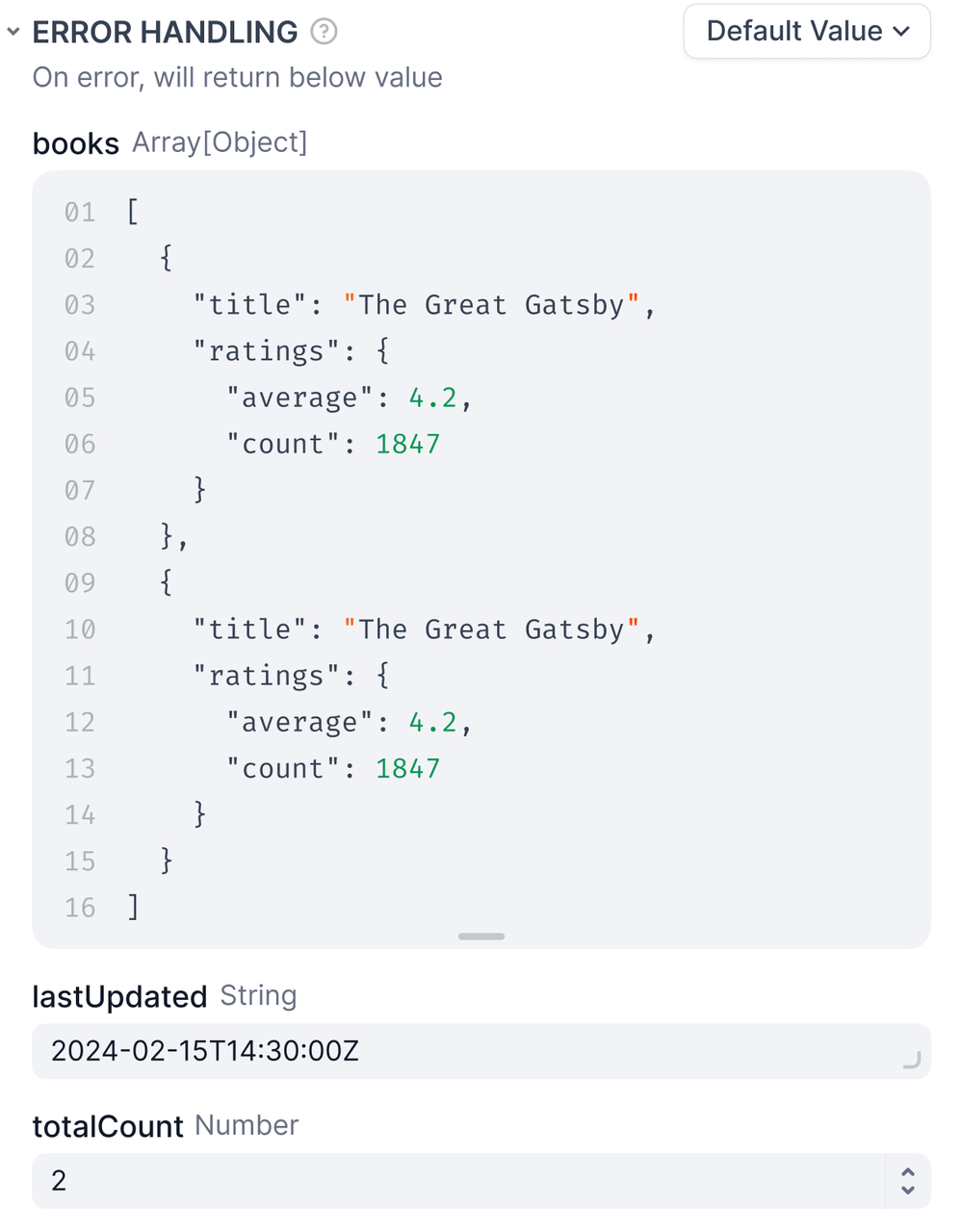

When a node fails, use a backup value instead. The workflow keeps running.

**Requirements**

* The default value must match the node's output type -- if it outputs a string, your default must be a string.

**Example**

Your LLM node normally returns analysis, but sometimes it fails due to rate limits. Set a default value like:

```

"Sorry, I'm temporarily unavailable. Please try again in a few minutes."

```

Now users get a helpful message instead of a broken workflow.

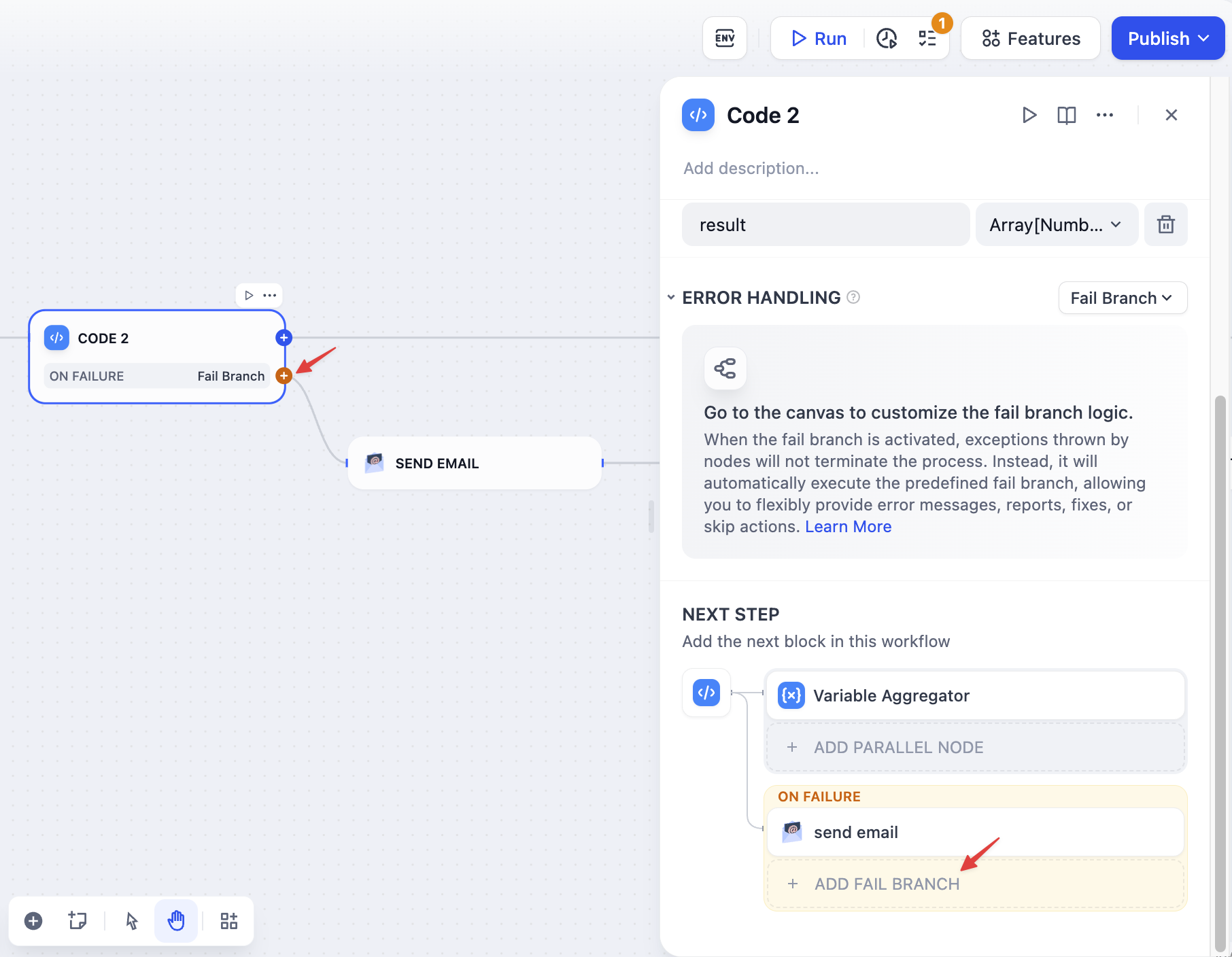

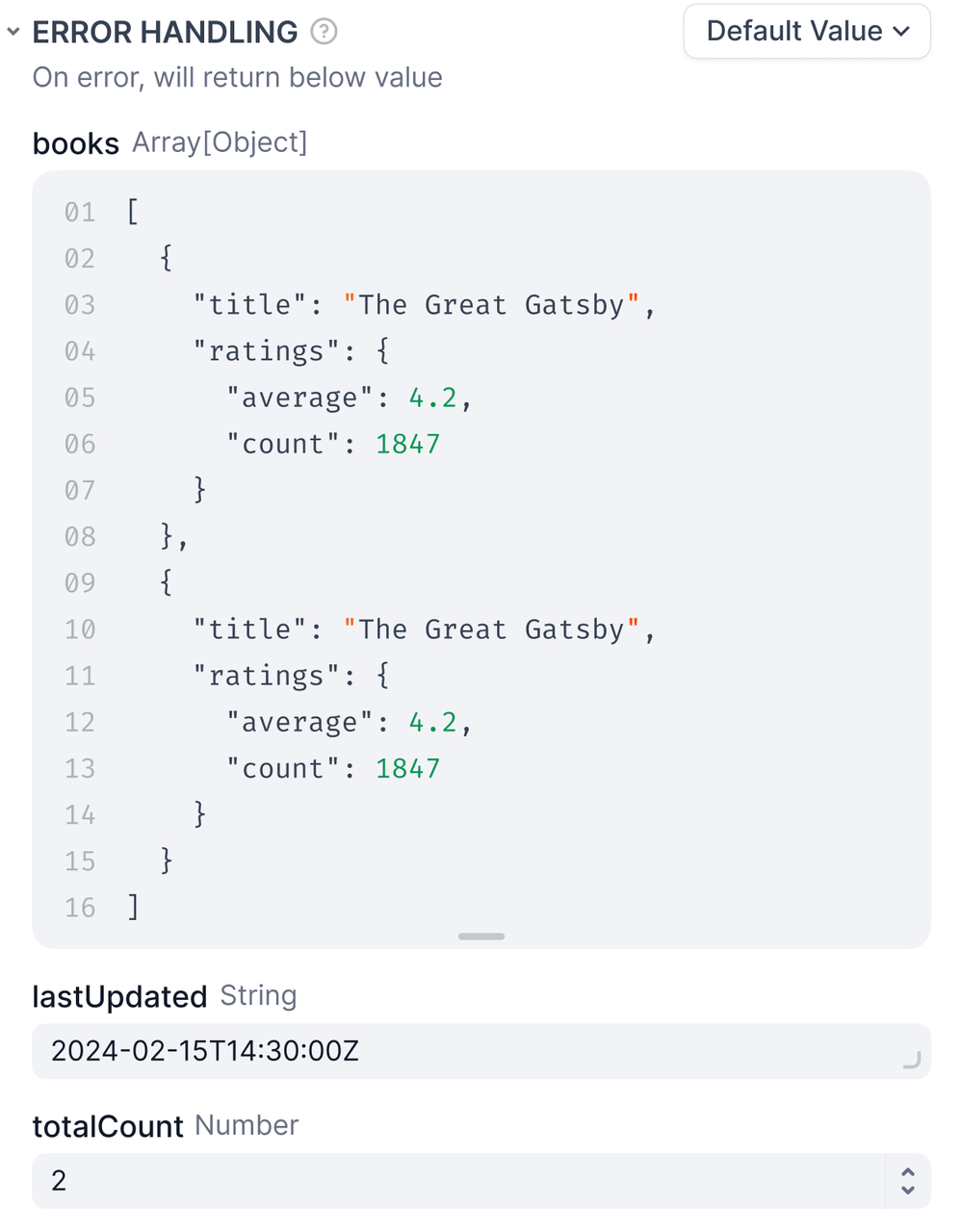

When a node fails, trigger a separate flow to handle the error.

The fail branch is highlighted in orange. You can:

* Send error notifications

* Try a different approach

* Log the error for debugging

* Use a backup service

**Example**

Your main API fails, so the fail branch calls a backup API instead. Users never know there was a problem.

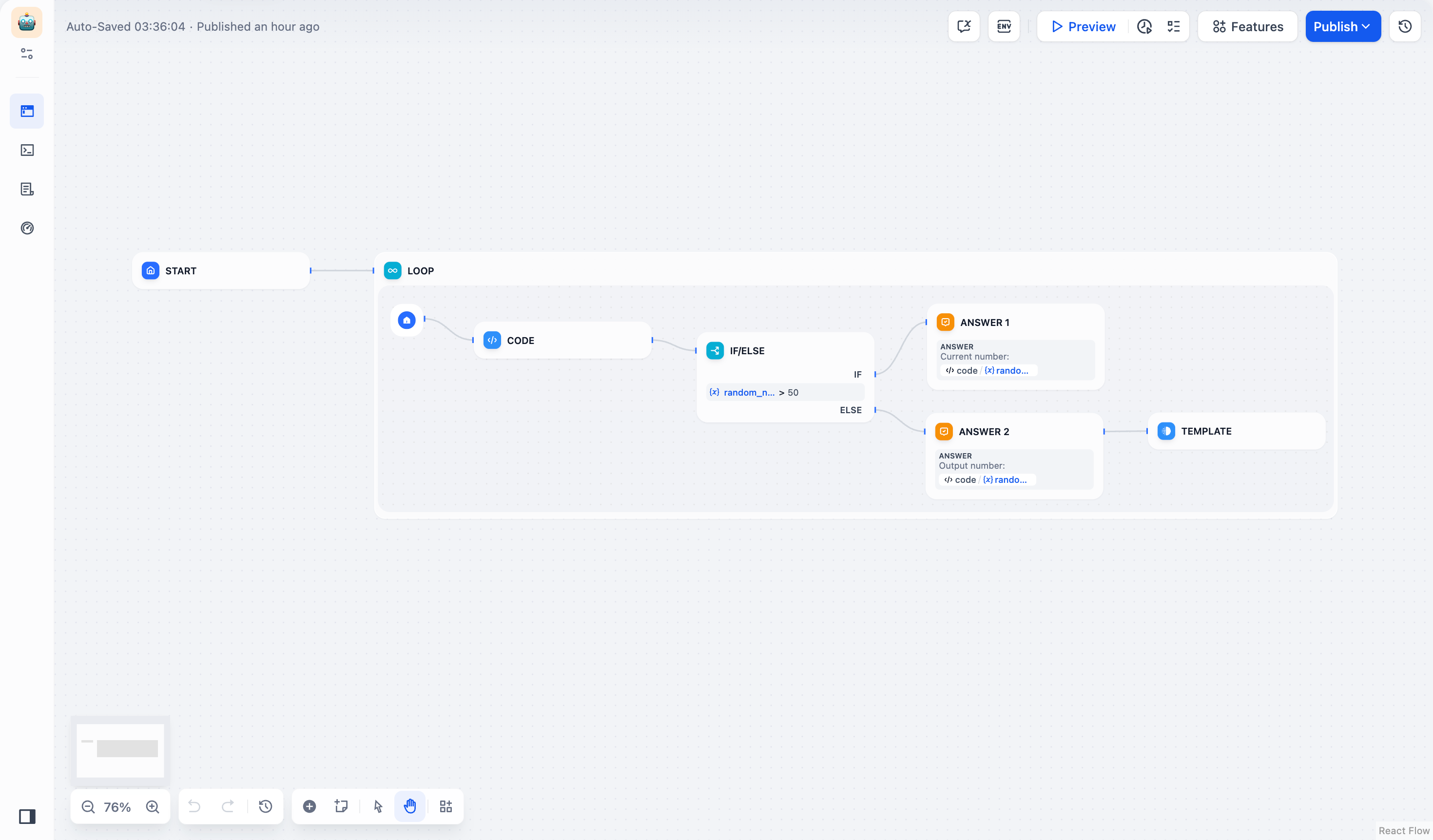

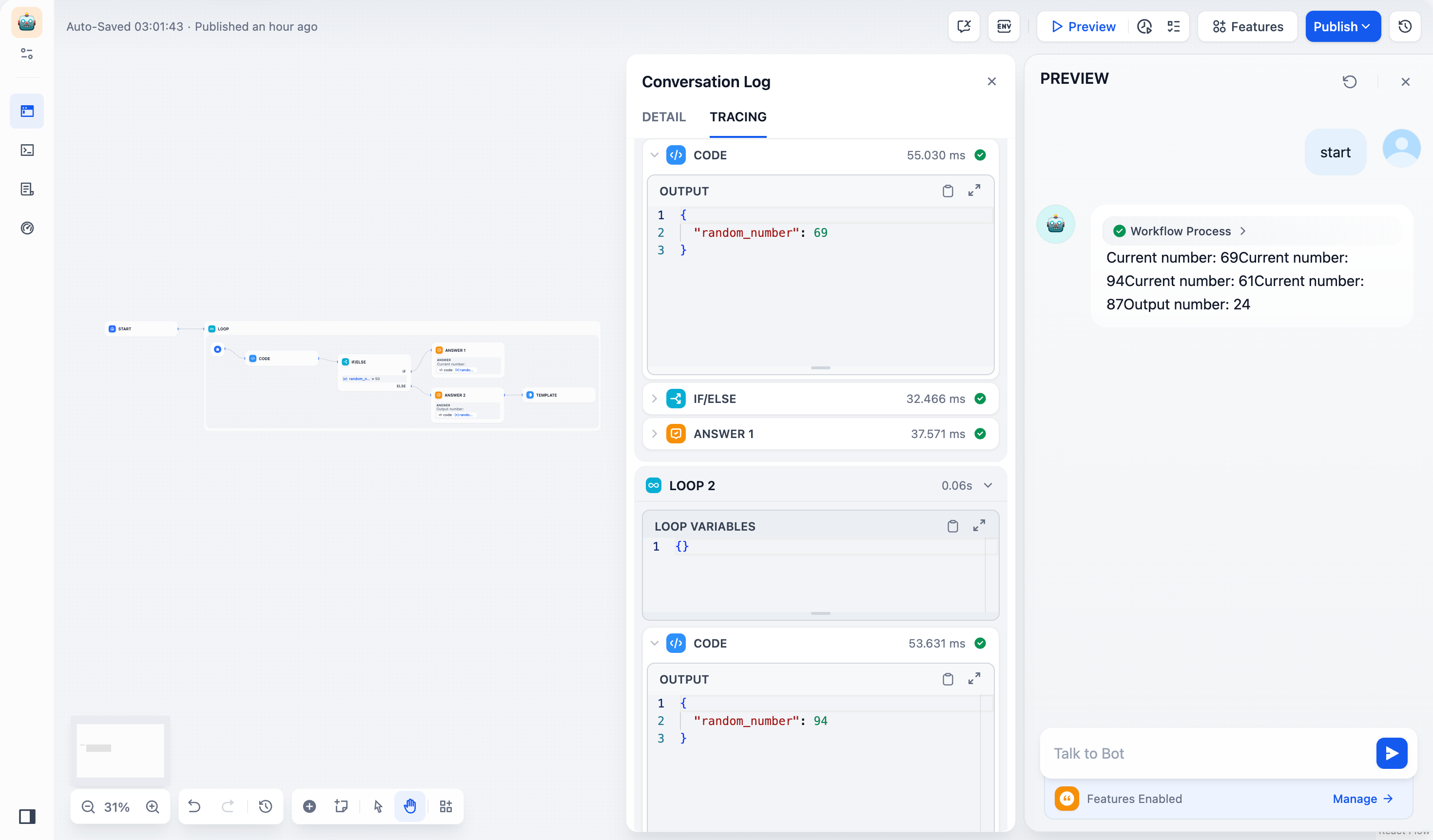

## Error in Loop/Iteration Nodes

When child nodes fail inside loops and iterations, these control flow nodes have their own error behaviors.

**Loop nodes** always stop immediately when any child node fails. The entire loop terminates and returns the error, preventing any further iterations from running.

**Iteration nodes** let you choose how to handle child node failures through the error handling mode setting:

* `terminated` - Stops processing immediately when any item fails (default)

* `continue-on-error` - Skips the failed item and continues with the next one

* `remove-abnormal-output` - Continues processing but filters out failed items from the final output

When you set an iteration to `continue-on-error`, failed items return `null` in the output array. When you use `remove-abnormal-output`, the output array only contains successful results, making it shorter than the input array.

## Error variables

When using default value or fail branch, you get two special variables:

* `error_type` - What kind of error happened (see [Error Types](/en/use-dify/debug/error-type))

* `error_message` - The actual error details

Use these to:

* Show users helpful messages

* Send alerts to your team

* Choose different recovery strategies

* Log errors for debugging

**Example**

```

{% if error_type == "rate_limit" %}

Too many requests. Please wait a moment and try again.

{% else %}

Something went wrong. Our team has been notified.

{% endif %}

```

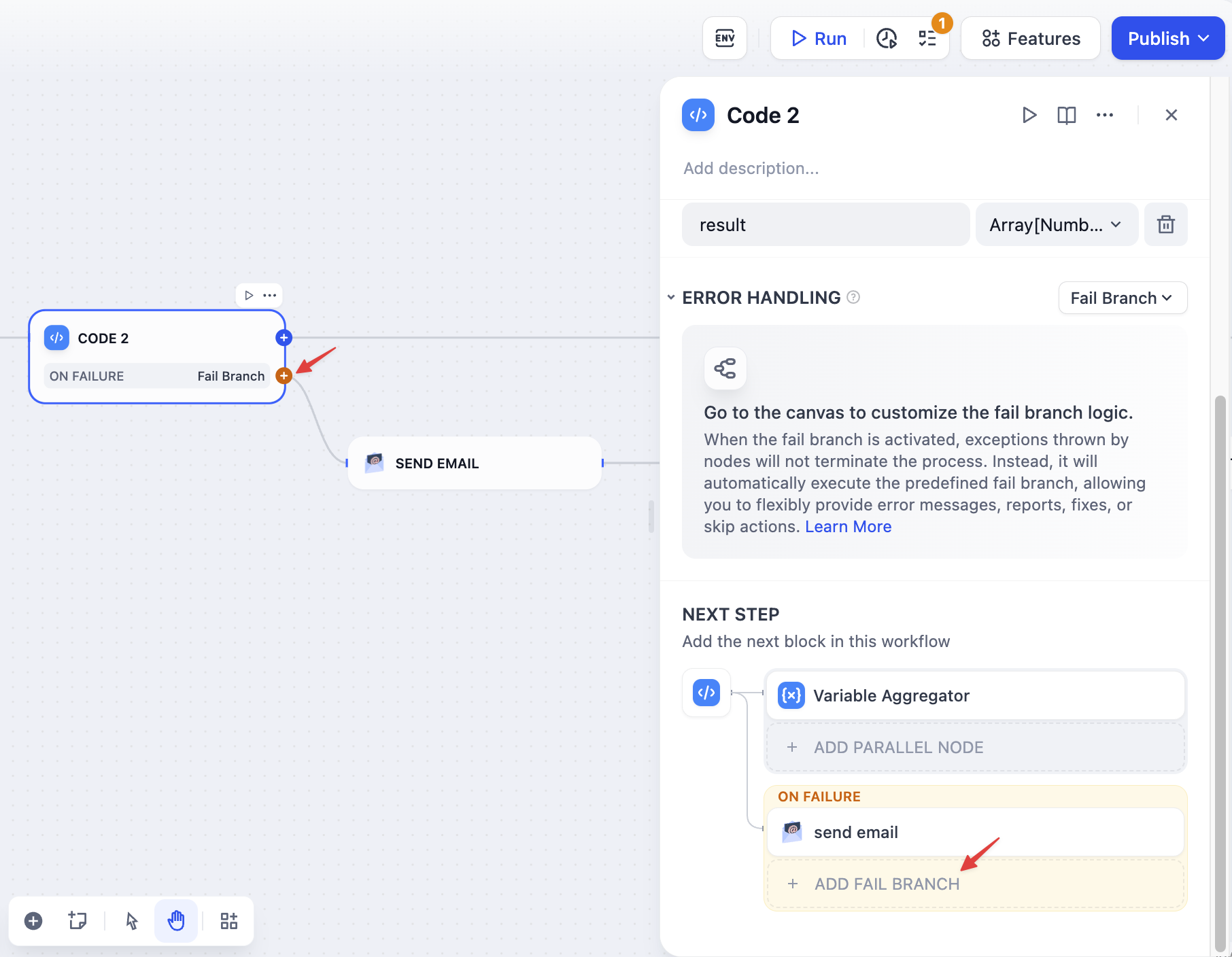

# Hotkeys

Source: https://docs.dify.ai/en/use-dify/build/shortcut-key

Speed up your workflow building with keyboard shortcuts.

**[Go to Anything](/en/use-dify/build/goto-anything)**: Press `Cmd+K` (macOS) or `Ctrl+K` (Windows) anywhere in Dify to search and jump to everything—apps, plugins, knowledge bases, even workflow nodes. Use slash commands like `/theme` to change appearance, `/language` to switch languages, or `/help` to access documentation.

**Description**: Override the default description to be more specific

**Parameters**: For each tool parameter, choose:

* **Auto**: Let the AI decide the value

* **Fixed**: Set a specific value that never changes

**Example**: For a search tool, set `numResults` to 5 (fixed) but keep `query` on auto.

## Sharing apps

When you export apps that use MCP tools:

* The export includes server IDs

* To use the app elsewhere, add the same servers with identical IDs

* Document which MCP servers your app needs

## Troubleshooting

**"Unconfigured Server"**: Check the URL and re-authorize

**Missing tools**: Hit "Update Tools"

**Broken apps**: You probably changed a server ID. Add it back with the original ID.

## Tips

* Use permanent, descriptive server IDs like `github-prod` or `crm-system`

* Keep the same MCP setup across dev/staging/production

* Set fixed values for config stuff, auto for dynamic inputs

* Test MCP integrations before deploying

# Flow Logic

Source: https://docs.dify.ai/en/use-dify/build/orchestrate-node

## Serial vs. Parallel execution

Flows execute differently depending on how you connect the nodes.

When you connect nodes one after another, they execute in sequence. Each node waits for the previous one to finish before starting. Each node may use variables from any node that ran before it in the chain.

When you connect multiple nodes to the same starting node, they all run at the same time. Nodes may not reference parallel node outputs.

You can have a maximum of 10 parallel branches from one node, and up to 3 levels of nested parallel structures.

## Variable access

In serial flows, nodes can access variables from any previous node in the chain.

In parallel flows, nodes can access variables from nodes that ran before the parallel split, but they cannot access variables from other parallel nodes since they're running simultaneously.

After parallel branches finish, downstream nodes can access variables from all the parallel outputs.

## Answer node streaming

Answer nodes handle parallel outputs differently. When an Answer node references variables from multiple parallel branches, it streams content progressively:

* Content streams up to the first unresolved variable

* Once that variable's node completes, streaming continues to the next unresolved variable

* The order of variables in the Answer node determines the streaming sequence, not the node execution order

For example, in a flow where `Node A -> Node B -> Answer` with the Answer containing `{{B}}` then `{{A}}`, the Answer will wait for Node B before streaming any content, even if Node A completes first.

# Handling Errors

Source: https://docs.dify.ai/en/use-dify/build/predefined-error-handling-logic

[LLM](/en/use-dify/nodes/llm), [HTTP](/en/use-dify/nodes/http-request), [Code](/en/use-dify/nodes/code), and [Tool](/en/use-dify/nodes/tools)

nodes support error handling out-of-box. When a node fails, it can take one of the three behaviors below:

The default behavior. When a node fails, the whole workflow stops. You get the original error message.

Use this when:

* You're testing and want to see what broke

* The workflow can't continue without this step

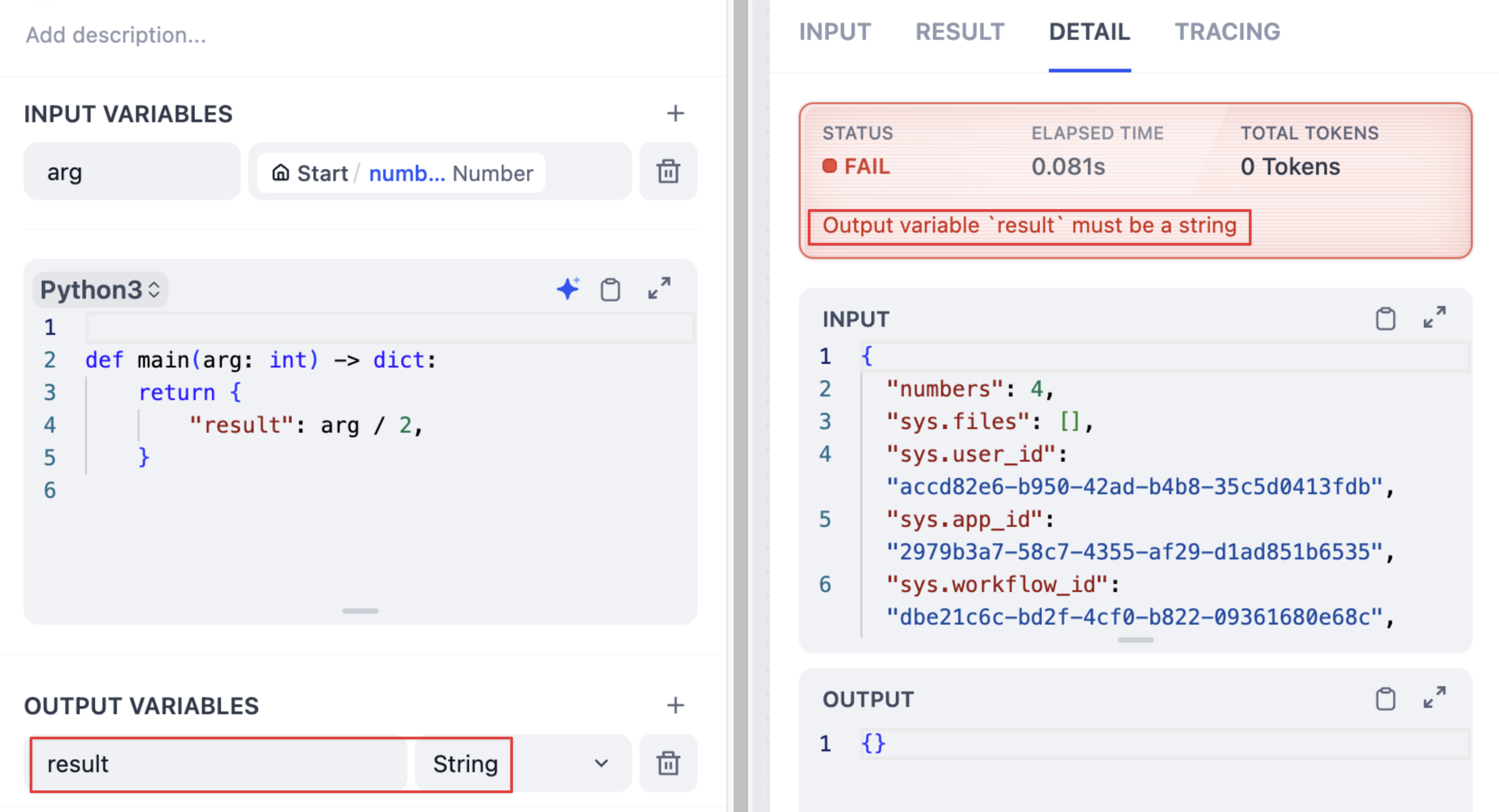

When a node fails, use a backup value instead. The workflow keeps running.

**Requirements**

* The default value must match the node's output type -- if it outputs a string, your default must be a string.

**Example**

Your LLM node normally returns analysis, but sometimes it fails due to rate limits. Set a default value like:

```

"Sorry, I'm temporarily unavailable. Please try again in a few minutes."

```

Now users get a helpful message instead of a broken workflow.

When a node fails, trigger a separate flow to handle the error.

The fail branch is highlighted in orange. You can:

* Send error notifications

* Try a different approach

* Log the error for debugging

* Use a backup service

**Example**

Your main API fails, so the fail branch calls a backup API instead. Users never know there was a problem.

## Error in Loop/Iteration Nodes

When child nodes fail inside loops and iterations, these control flow nodes have their own error behaviors.

**Loop nodes** always stop immediately when any child node fails. The entire loop terminates and returns the error, preventing any further iterations from running.

**Iteration nodes** let you choose how to handle child node failures through the error handling mode setting:

* `terminated` - Stops processing immediately when any item fails (default)

* `continue-on-error` - Skips the failed item and continues with the next one

* `remove-abnormal-output` - Continues processing but filters out failed items from the final output

When you set an iteration to `continue-on-error`, failed items return `null` in the output array. When you use `remove-abnormal-output`, the output array only contains successful results, making it shorter than the input array.

## Error variables

When using default value or fail branch, you get two special variables:

* `error_type` - What kind of error happened (see [Error Types](/en/use-dify/debug/error-type))

* `error_message` - The actual error details

Use these to:

* Show users helpful messages

* Send alerts to your team

* Choose different recovery strategies

* Log errors for debugging

**Example**

```

{% if error_type == "rate_limit" %}

Too many requests. Please wait a moment and try again.

{% else %}

Something went wrong. Our team has been notified.

{% endif %}

```

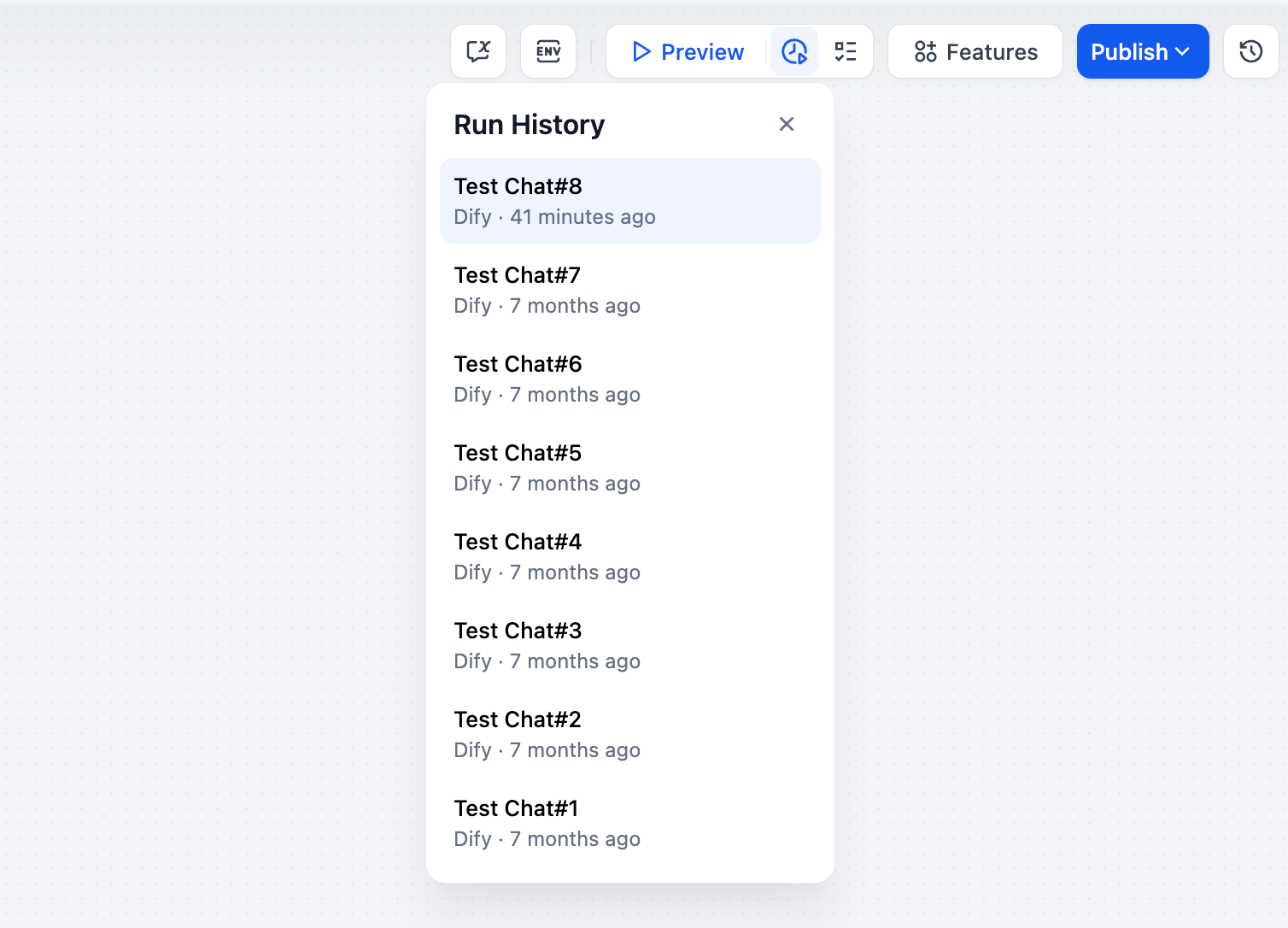

# Hotkeys

Source: https://docs.dify.ai/en/use-dify/build/shortcut-key

Speed up your workflow building with keyboard shortcuts.

**[Go to Anything](/en/use-dify/build/goto-anything)**: Press `Cmd+K` (macOS) or `Ctrl+K` (Windows) anywhere in Dify to search and jump to everything—apps, plugins, knowledge bases, even workflow nodes. Use slash commands like `/theme` to change appearance, `/language` to switch languages, or `/help` to access documentation.

![Command+K Search Interface]() ## Node operations

With any selected node(s) on canvas:

## Node operations

With any selected node(s) on canvas:

| Windows |

macOS |

Action |

Ctrl + C |

Cmd + C |

Copy nodes |

Ctrl + V |

Cmd + V |

Paste nodes |

Ctrl + D |

Cmd + D |

Duplicate nodes |

Delete |

Delete |

Delete selected nodes |

Ctrl + O |

Cmd + O |

Auto-arrange nodes |

Shift |

Shift |

Visualize variable dependencies (single node only) |

## Canvas navigation

| Windows |

macOS |

Action |

Ctrl + 1 |

Cmd + 1 |

Fit to view |

Ctrl + - |

Cmd + - |

Zoom out |

Ctrl + = |

Cmd + = |

Zoom in |

Shift + 1 |

Shift + 1 |

Reset to 100% |

Shift + 5 |

Shift + 5 |

Set to 50% |

H |

H |

Hand tool (pan) |

V |

V |

Select tool |

## History

| Windows |

macOS |

Action |

Ctrl + Z |

Cmd + Z |

Undo |

Ctrl + Y |

Cmd + Y |

Redo |

Ctrl + Shift + Z |

Cmd + Shift + Z |

Redo |

## Testing

| Windows |

macOS |

Action |

Alt + R |

Option + R |

Run workflow |

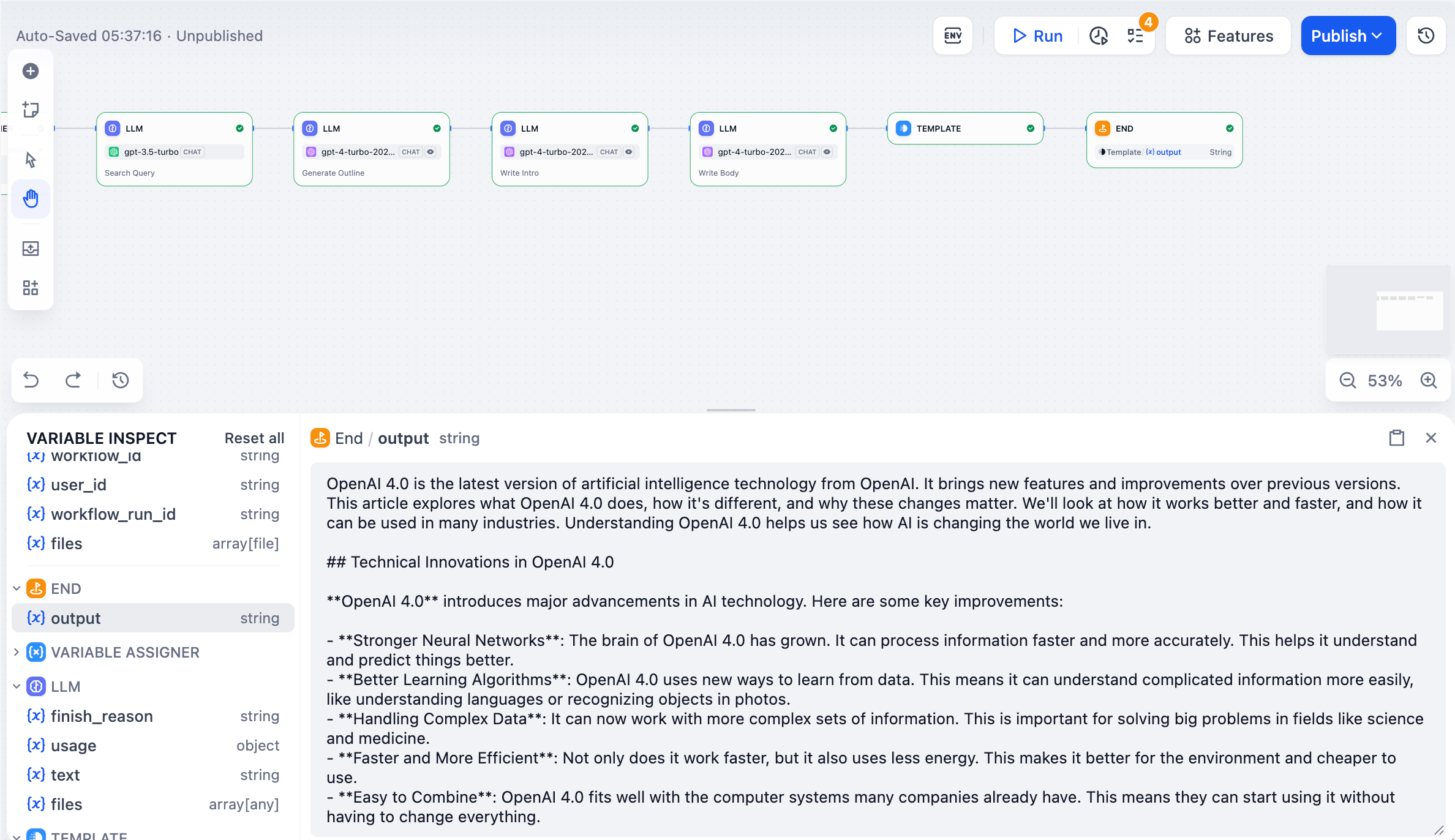

# Text Generator

Source: https://docs.dify.ai/en/use-dify/build/text-generator

Simple single-turn apps for generating text from a prompt and user inputs

Text Generators are simple single-turn apps: you write a prompt, provide inputs, and the model generates a response.

It's a good fit for tasks that don't require multi-turn conversation, tool calls, or a multi-step workflow. Just a clear input, one model call, and a ready-to-use output.

Text Generators support optional features like generating multiple outputs at once, text to speech, and content moderation. See [App Toolkit](/en/use-dify/build/additional-features) for details.

## Configure

### Write the Prompt

The prompt tells the model what to do, how to respond, and what constraints to follow.

Since a Text Generator runs in a single turn with no conversation history, the prompt is the model's only source of context—include everything it needs to produce the right output in one pass.

Here are some tips for writing effective prompts:

* **Define the task clearly**: State what the model should produce (e.g., a translation, a summary, a SQL statement).

* **Specify the output format**: Describe the structure, length, or style you expect.

* **Set constraints**: Tell the model what to avoid or what rules to follow.

Because a Text Generator always requires user input to run, a paragraph-type `query` variable is automatically inserted into the prompt when you create a new app. You can rename `query` or change its type.

Variables are placeholders—each one becomes an input field that users fill in before running the app, and their values are substituted into the prompt at runtime. For example:

```text wrap theme={null}

You are a professional editor. Summarize the following text into 3 concise bullet points. Use neutral tone and avoid adding information not present in the original text.

{{query}}

```

While drafting the prompt, type `/` > **New Variable** to quickly insert a named placeholder. You can configure its details in the **Variables** section later.

Choose the variable type that matches the input you expect:

Accepts up to 256 characters. Use it for names, email addresses, titles, or any brief text input that fits on a single line.

Allows long-form text without length restrictions. It gives users a multi-line text area for detailed descriptions.

Displays a dropdown menu with predefined options.

Restricts input to numerical values only—ideal for quantities, ratings, IDs, or any data requiring mathematical processing.

Provides a simple yes/no option. When a user checks the box, the output is `true`; otherwise, it's `false`. Use it for confirmations or any case that requires a binary choice.

Fetches variable values from an external API at runtime instead of collecting them from users.

Use it when your prompt needs dynamic data from an external source, such as live weather conditions or database records. See [API Extension](/en/use-dify/workspace/api-extension/api-extension) for details.

**Label Name** is what end users see for each input field.

#### Create Dynamic Prompts with Variables

To adapt your app to different users or contexts without rewriting the prompt each time, add more variables.

Each variable collects a specific piece of information upfront and injects it into the prompt at runtime.

For example, an SQL generator might use `database_type` to adapt the output dialect while `query` captures the user's natural language request:

```text wrap theme={null}

You are an SQL generator. Translate the following natural language query into a {{database_type}} SQL statement: {{query}}

```

#### Generate or Improve the Prompt with AI

If you're unsure where to start or want to refine the existing prompt, click **Generate** to let an LLM help you draft it.

Describe what you want from scratch, or reference `current_prompt` and specify what to improve. For more targeted results, add an example in **Ideal Output**.

Each generation is saved as a version, so you can experiment and roll back freely.

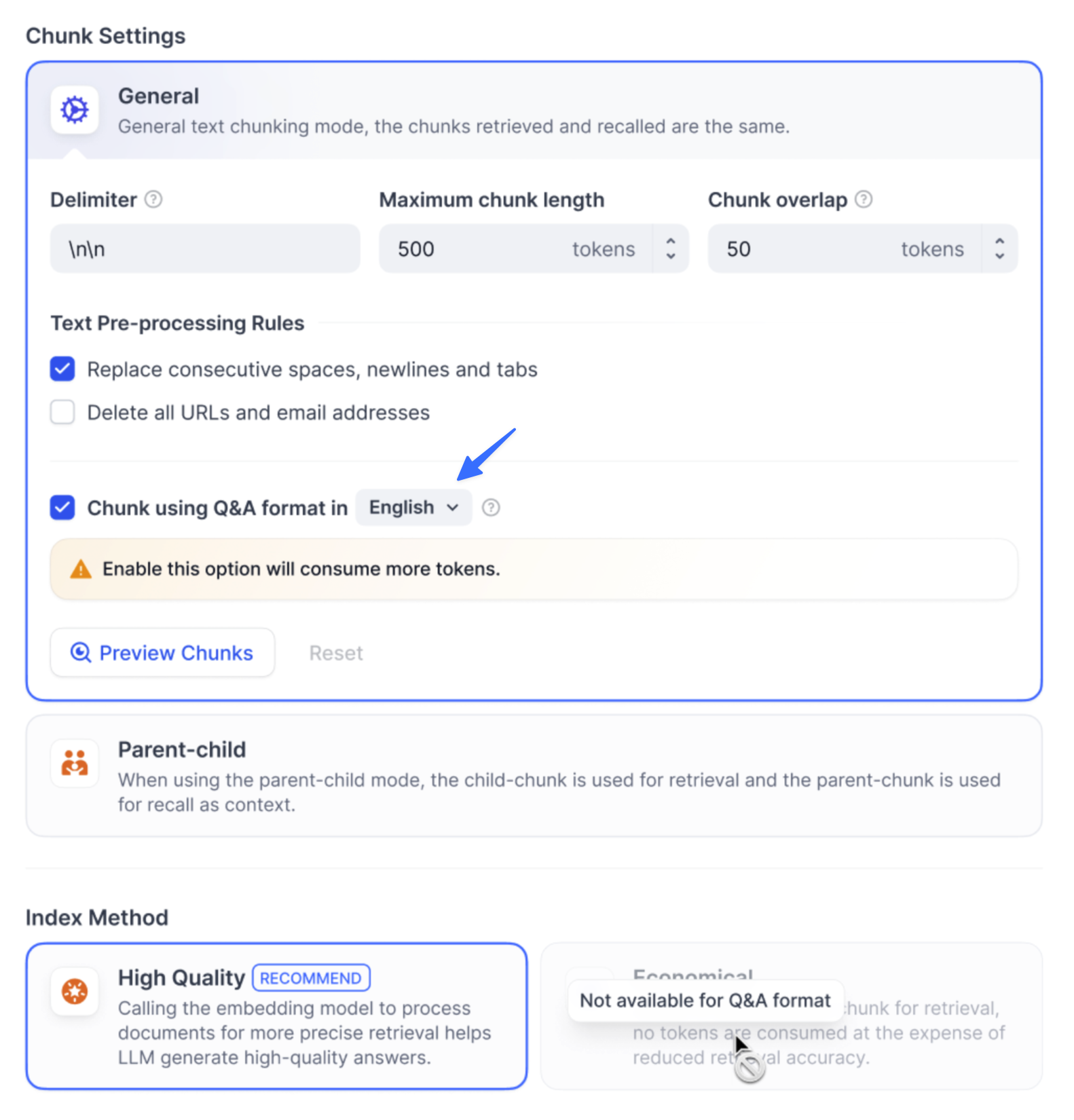

### Ground Responses in Your Own Data

To ground the model's responses in your own data rather than general knowledge, add a knowledge base and select an existing variable as the **Query Variable**.

When a user runs the app and fills in that field, its value is used as the search query to retrieve relevant content from the knowledge base. The retrieved content is then injected into the prompt as context, so the model can generate a more informed response.

For example, suppose your knowledge base contains style guides for different content types—blog posts, social media captions, product descriptions, and so on.

In a content writing app, set `content_type` as the **Query Variable**. When a user selects a content type, the app retrieves the matching style guide and generates copy that follows the corresponding writing standards.

Your prompt might look like this:

```text wrap theme={null}

You are a brand content writer. Write a {{content_type}} based on the following brief: {{brief}}

Follow the style and tone guidelines provided in the context.

```

#### Configure App-Level Retrieval Settings

To fine-tune how retrieval results are processed, click **Retrieval Setting**.

There are two layers of retrieval settings—the knowledge base level and the app level.

Think of them as two consecutive filters: the knowledge base settings determine the initial pool of results, and the app settings further rerank the results or narrow down the pool.

* **Rerank Settings**

* **Weighted Score**

The relative weight between semantic similarity and keyword matching during reranking. Higher semantic weight favors meaning relevance, while higher keyword weight favors exact matches.

Weighted Score is available only when all added knowledge bases are indexed with **High Quality** mode.

* **Rerank Model**

The rerank model to re-score and reorder all the results based on their relevance to the query.

If any multimodal knowledge bases are added, select a multimodal rerank model (marked with a **Vision** tag) as well. Otherwise, retrieved images will be excluded from reranking and the final output.

* **Top K**

The maximum number of top results to return after reranking.

When a rerank model is selected, this value will be automatically adjusted based on the model's maximum input capacity (how much text the model can process at once).

* **Score Threshold**

The minimum similarity score for returned results. Results scoring below this threshold are excluded. Use higher thresholds for stricter relevance or lower thresholds to include broader matches.

#### Search Within Specific Documents

By default, retrieval searches across the entire knowledge base. To restrict retrieval to specific documents, enable manual or automatic metadata filtering.

This improves retrieval precision, especially when your knowledge base is large or contains content for different contexts.

For creating and managing document metadata, see [Metadata](/en/use-dify/knowledge/metadata).

### Process Multimodal Inputs

To allow end users to upload files, select a model with the corresponding multimodal capabilities. The relevant file type toggles—**Vision**, **Audio**, or **Document**—appear once the model supports them, and you can enable each as needed.

You can quickly identify a model's supported modalities by its tags.

![Model Tags]() Click **Settings** under **Vision** to configure how files are accepted and processed. Upload settings apply across all enabled file types.

* **Resolution**: Controls the detail level for **image** processing only.

* **High**: Better accuracy for complex images but uses more tokens

* **Low**: Faster processing with fewer tokens for simple images

* **Upload Method**: Choose whether users can upload from their device, paste a URL, or both.

* **Upload Limit**: The maximum number of files a user can upload per run.

For self-hosted deployments, you can adjust file size limits via the following environment variables:

* `UPLOAD_IMAGE_FILE_SIZE_LIMIT` (default: 10 MB)

* `UPLOAD_FILE_SIZE_LIMIT` (default: 15 MB)

* `UPLOAD_AUDIO_FILE_SIZE_LIMIT` (default: 50 MB)

See [Environment Variables](/en/self-host/configuration/environments) for details.

## Debug & Preview

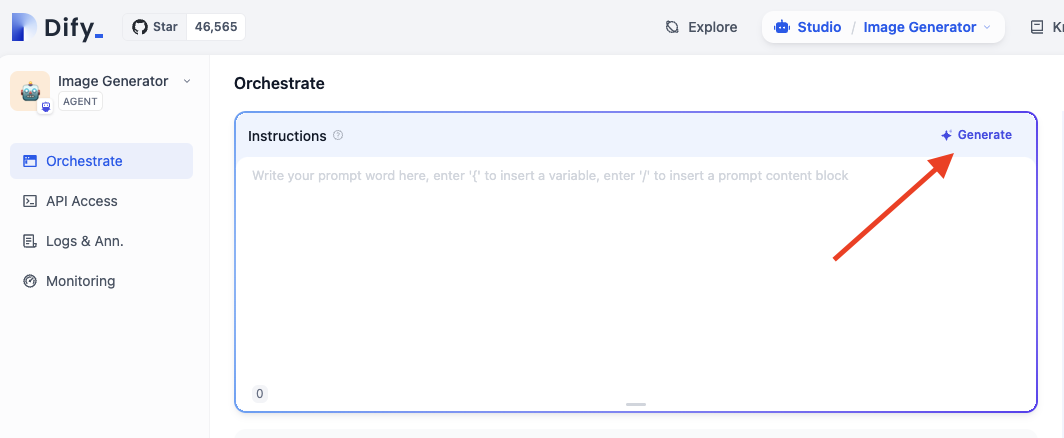

In the preview panel on the right, test your app in real time. Select a model that best fits your task, fill in the input fields, and click **Run** to see the output.

After selecting a model, you can adjust its parameters to control how it generates responses. Available parameters and presets vary by model.

To compare outputs across different models, click **Debug as Multiple Models** to run up to 4 models simultaneously.

Click **Settings** under **Vision** to configure how files are accepted and processed. Upload settings apply across all enabled file types.

* **Resolution**: Controls the detail level for **image** processing only.

* **High**: Better accuracy for complex images but uses more tokens

* **Low**: Faster processing with fewer tokens for simple images

* **Upload Method**: Choose whether users can upload from their device, paste a URL, or both.

* **Upload Limit**: The maximum number of files a user can upload per run.

For self-hosted deployments, you can adjust file size limits via the following environment variables:

* `UPLOAD_IMAGE_FILE_SIZE_LIMIT` (default: 10 MB)

* `UPLOAD_FILE_SIZE_LIMIT` (default: 15 MB)

* `UPLOAD_AUDIO_FILE_SIZE_LIMIT` (default: 50 MB)

See [Environment Variables](/en/self-host/configuration/environments) for details.

## Debug & Preview

In the preview panel on the right, test your app in real time. Select a model that best fits your task, fill in the input fields, and click **Run** to see the output.

After selecting a model, you can adjust its parameters to control how it generates responses. Available parameters and presets vary by model.

To compare outputs across different models, click **Debug as Multiple Models** to run up to 4 models simultaneously.

![Debug with Multiple Models]() ## Publish

When you're happy with the results, click **Publish** to make your app available. See [Publish](/en/use-dify/publish/README) for the full list of publishing options.

When running the web app, end users can save individual outputs for future reference.

## Publish

When you're happy with the results, click **Publish** to make your app available. See [Publish](/en/use-dify/publish/README) for the full list of publishing options.

When running the web app, end users can save individual outputs for future reference.

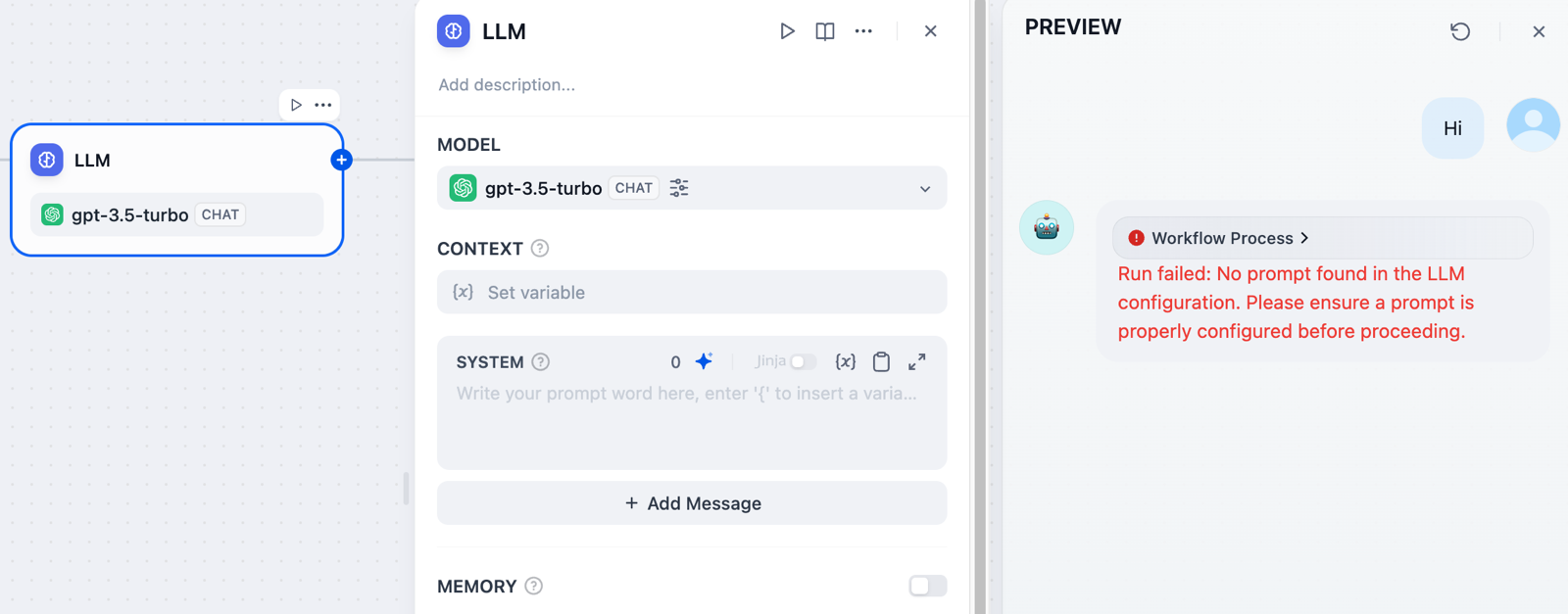

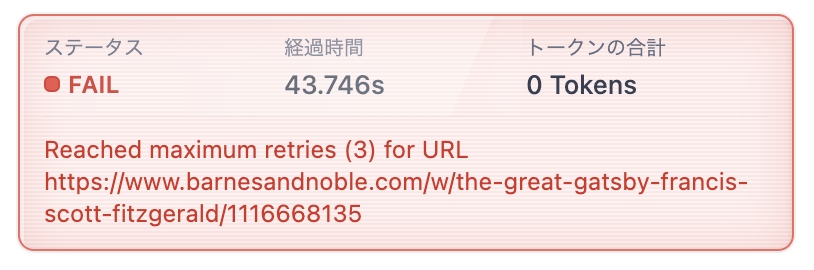

![Save Output]() # Version Control

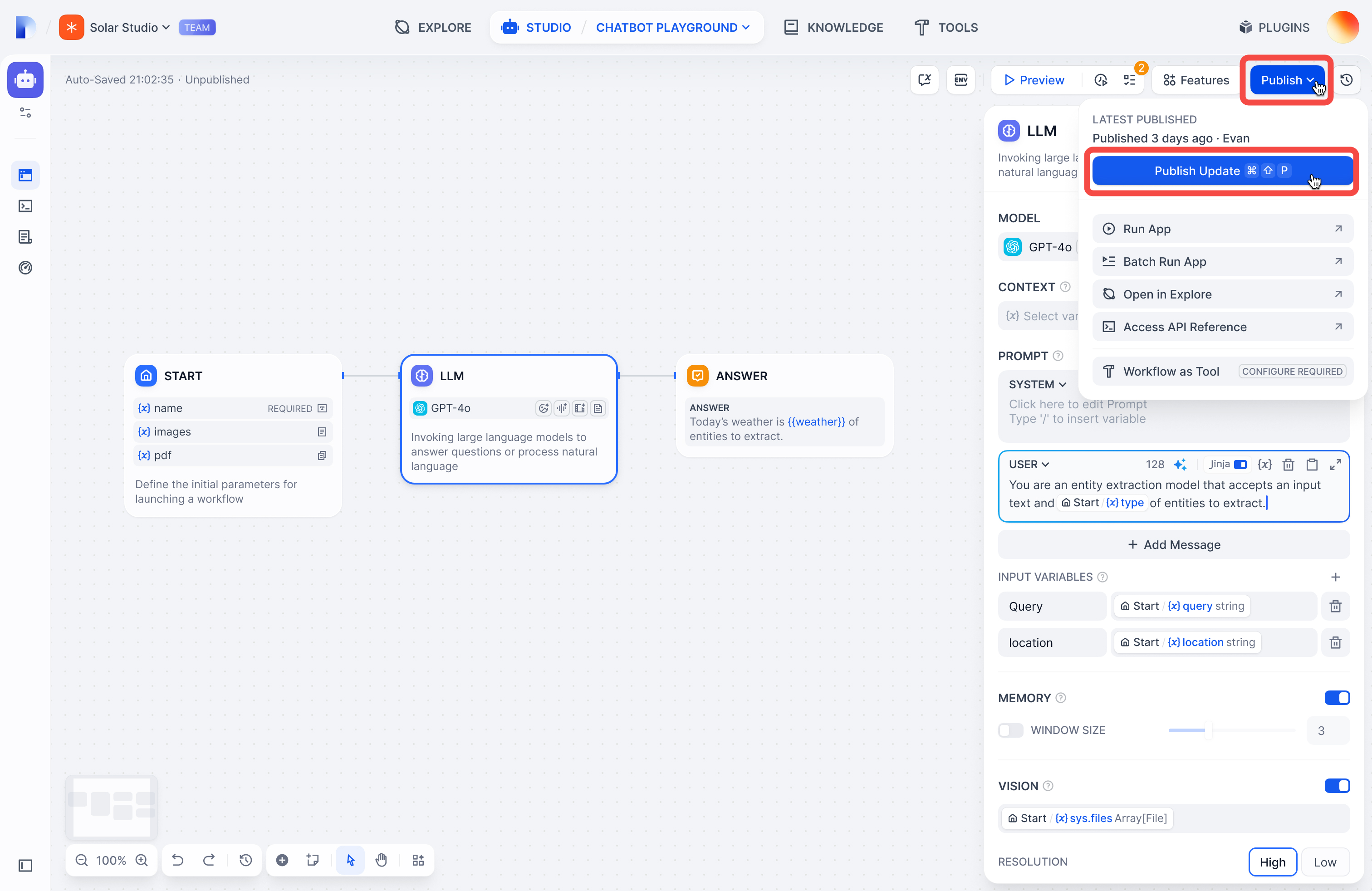

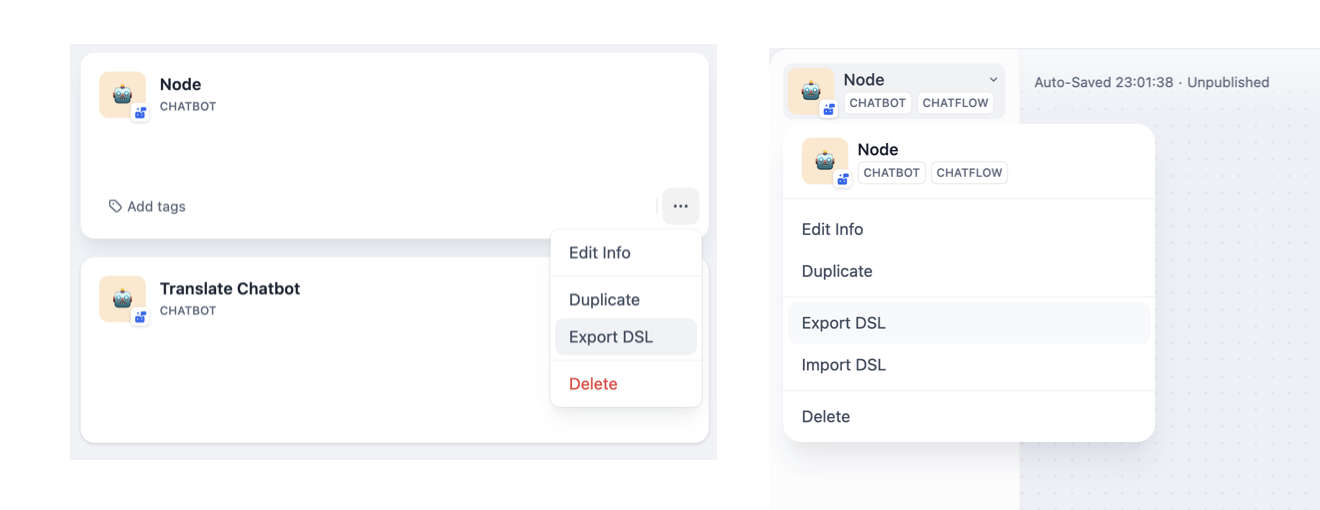

Source: https://docs.dify.ai/en/use-dify/build/version-control

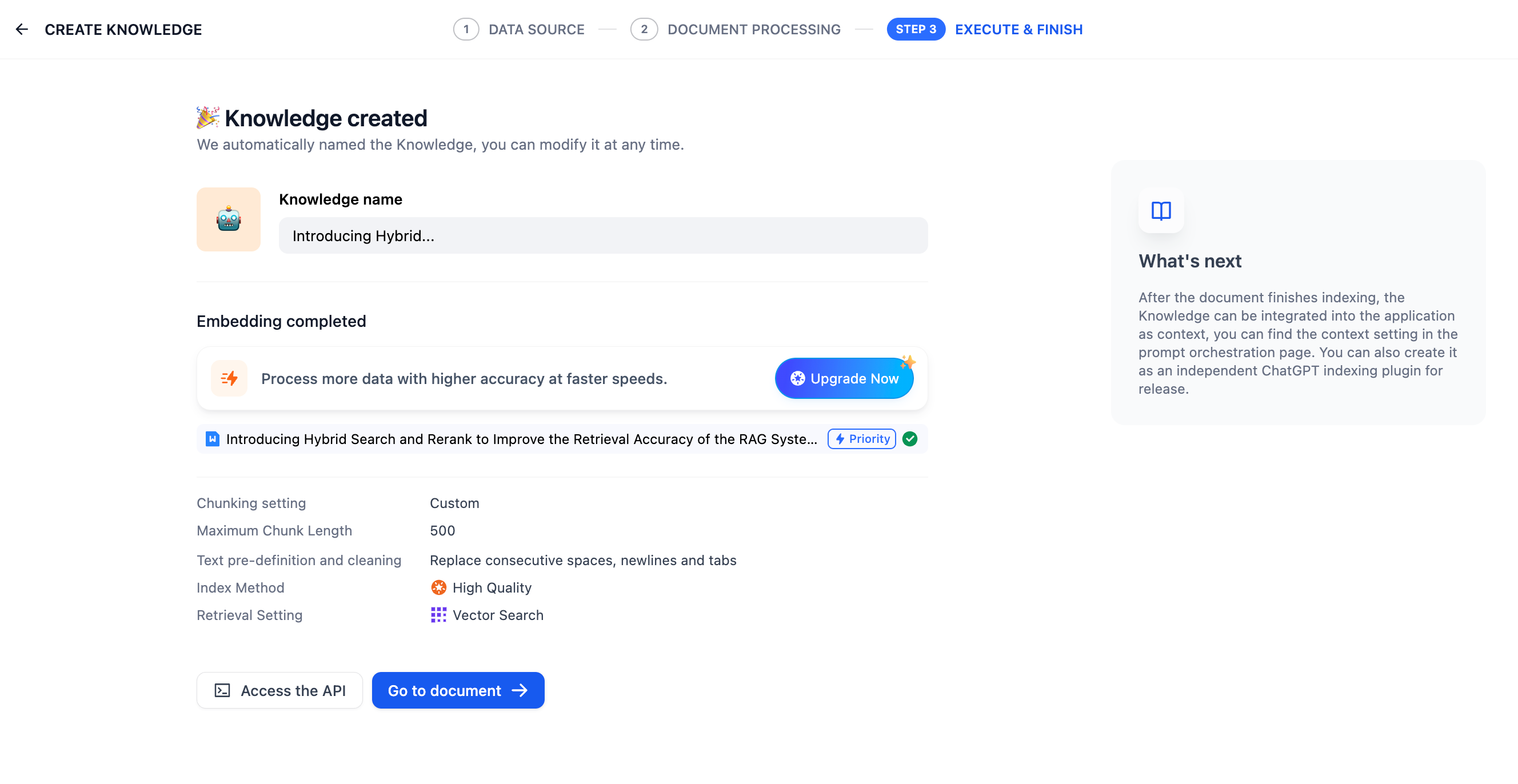

Track changes and manage versions in Chatflow and Workflow apps.

Only available for Chatflow and Workflow apps right now.

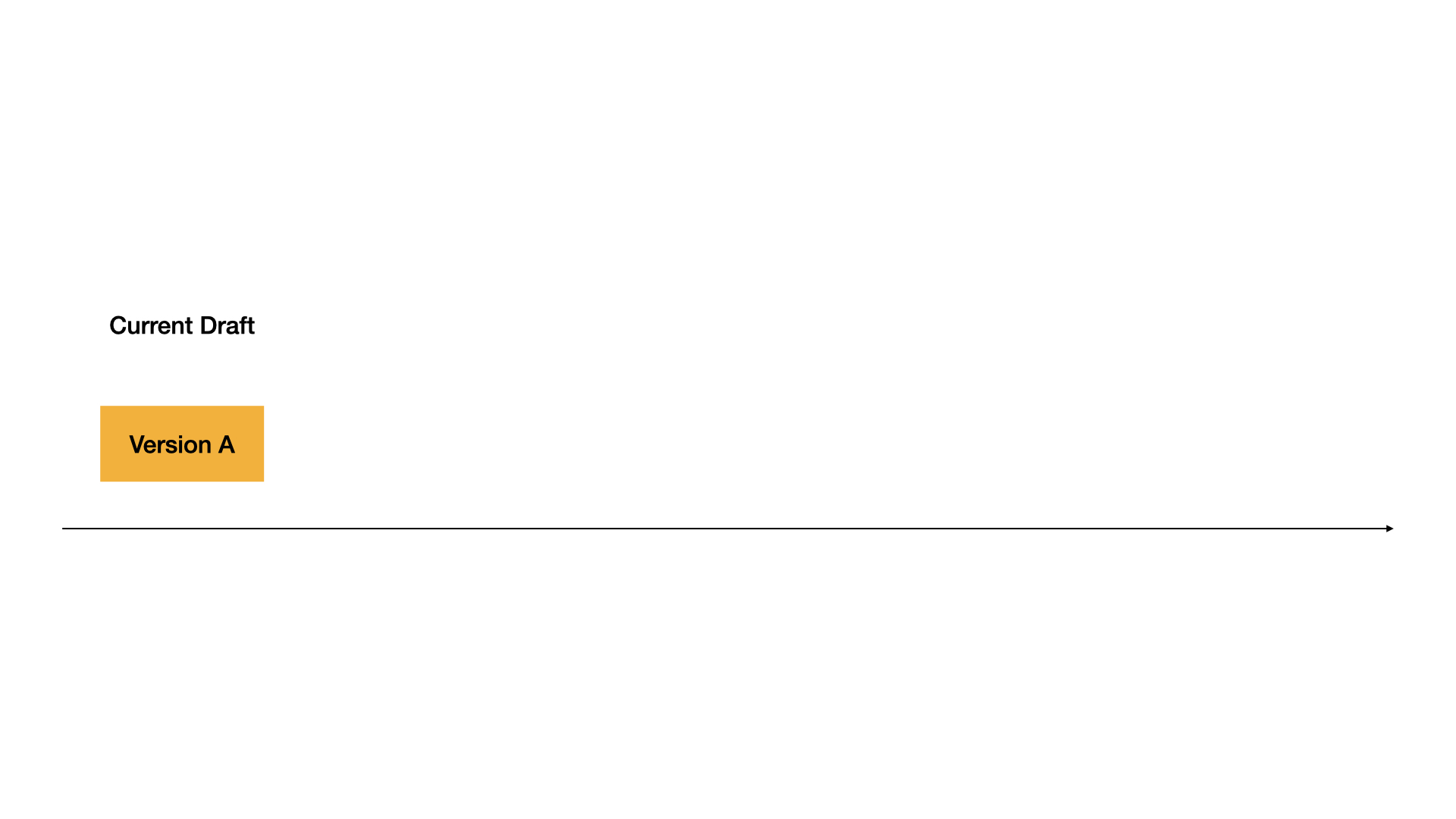

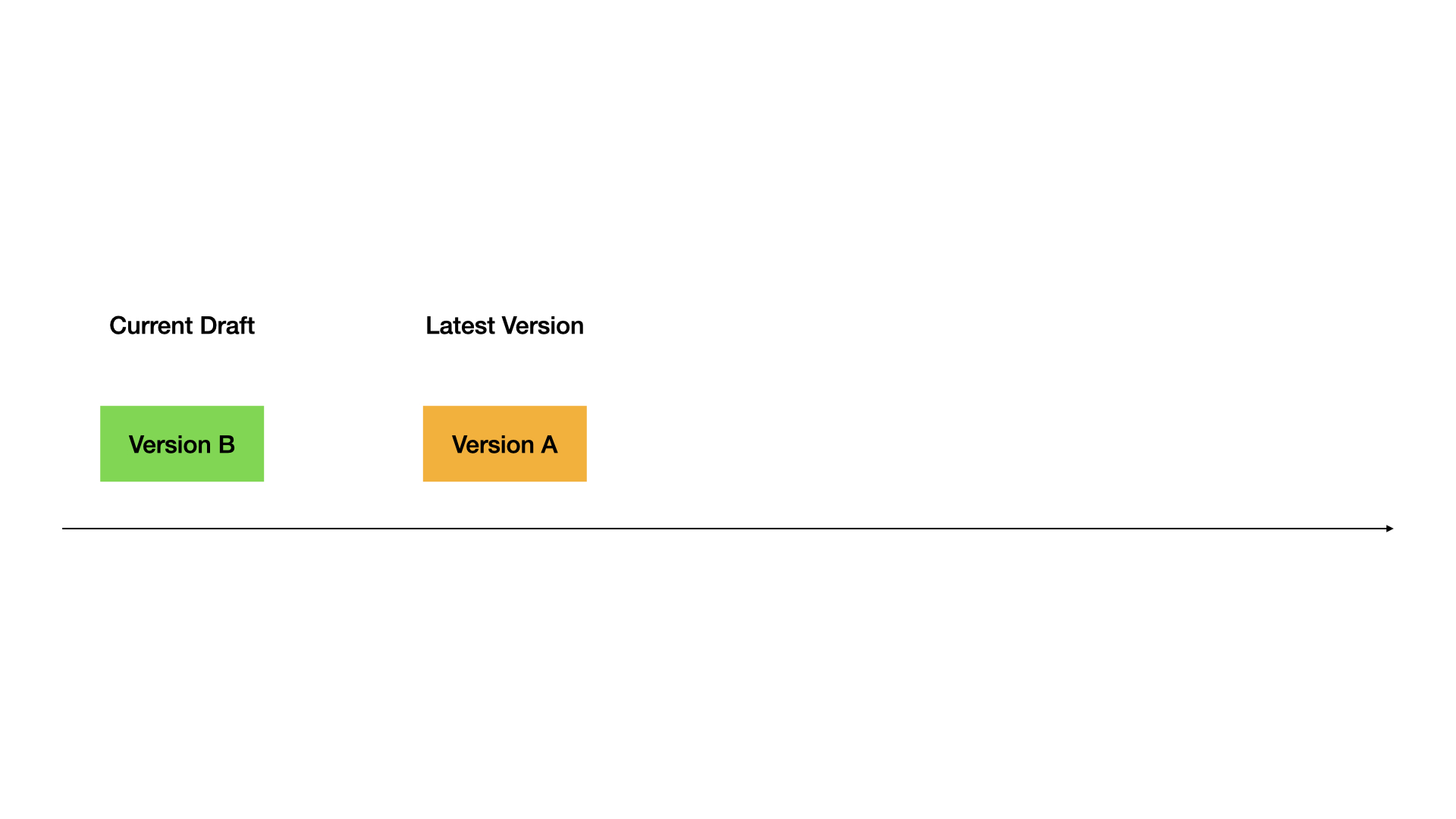

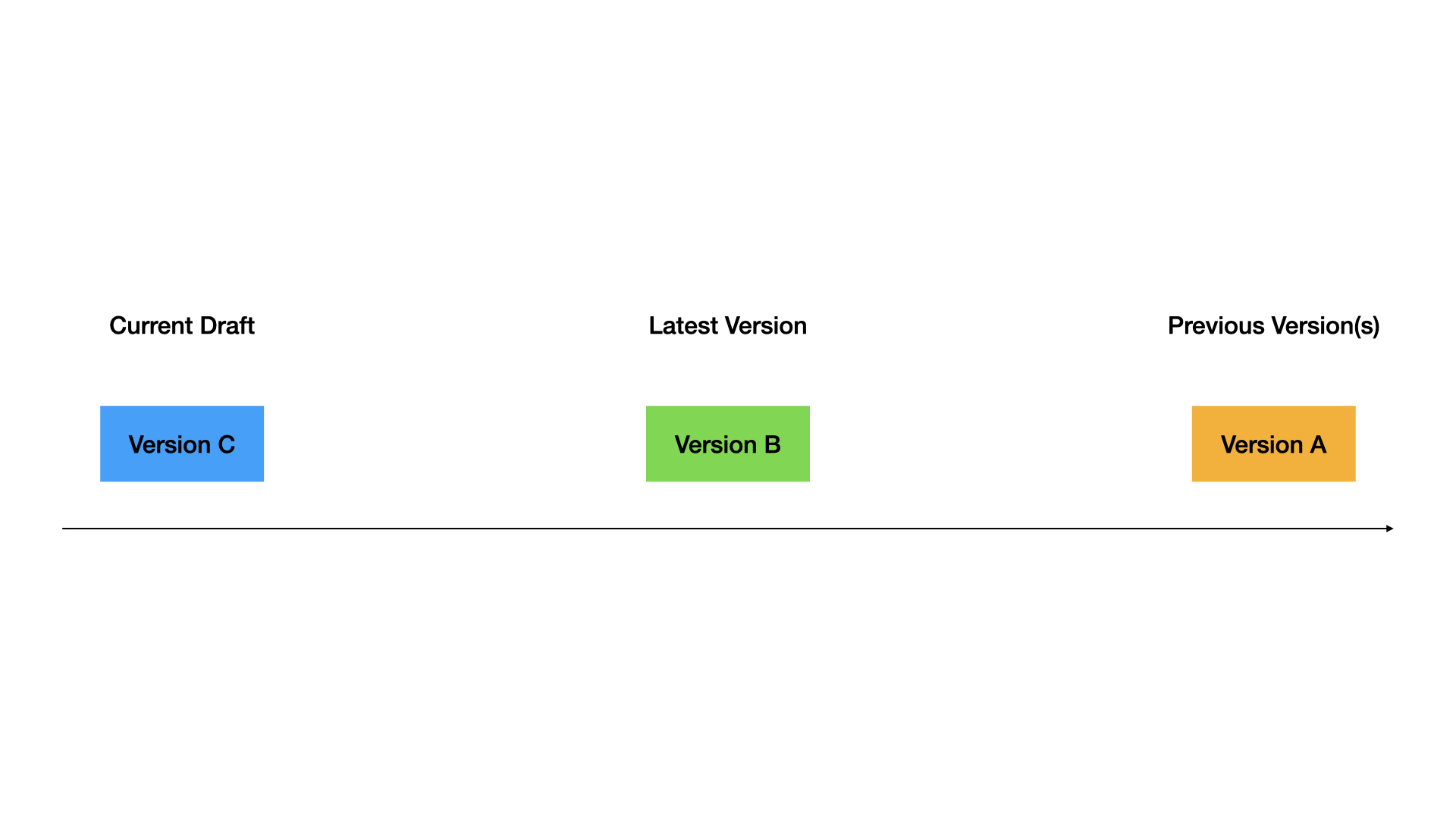

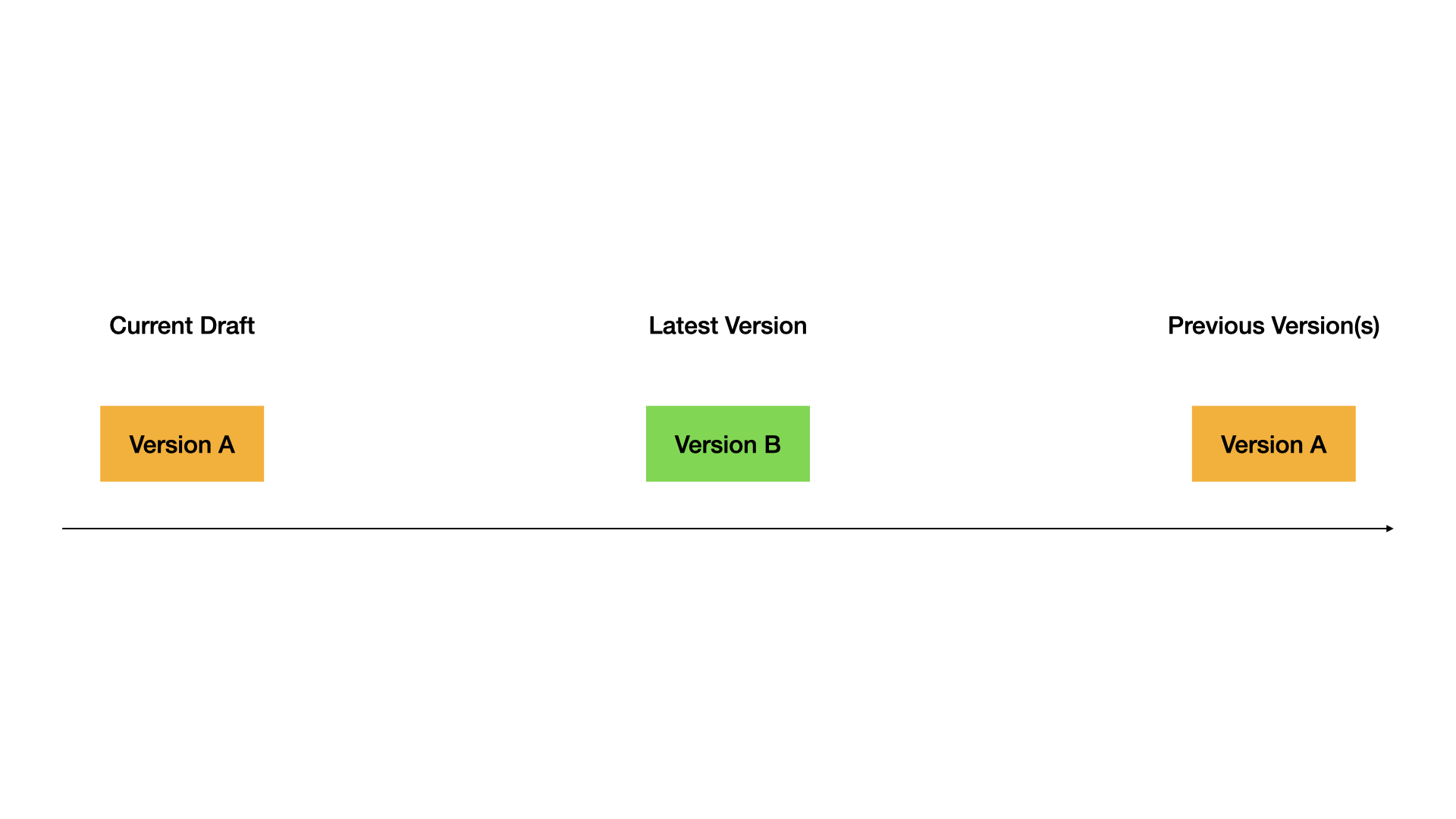

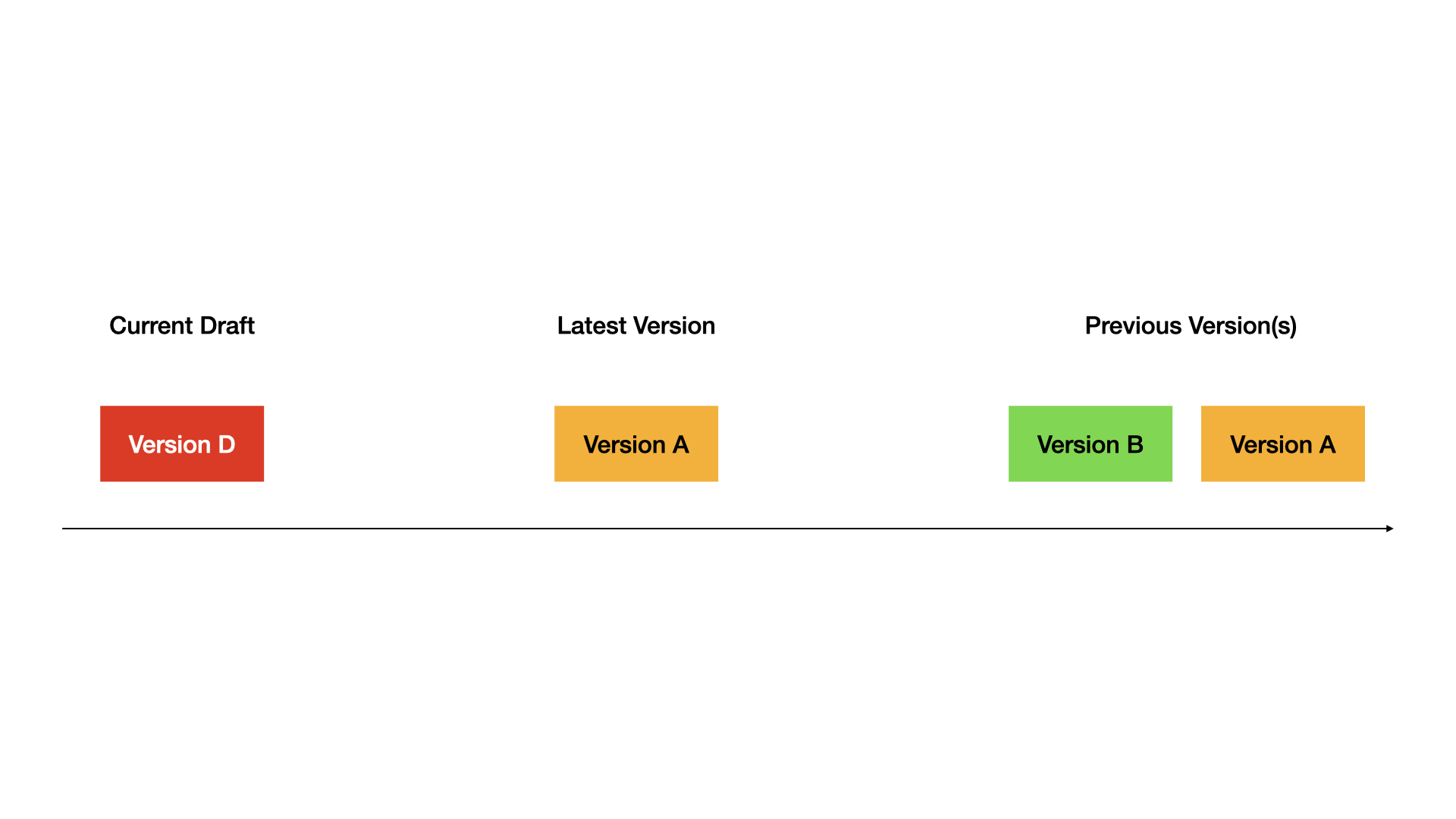

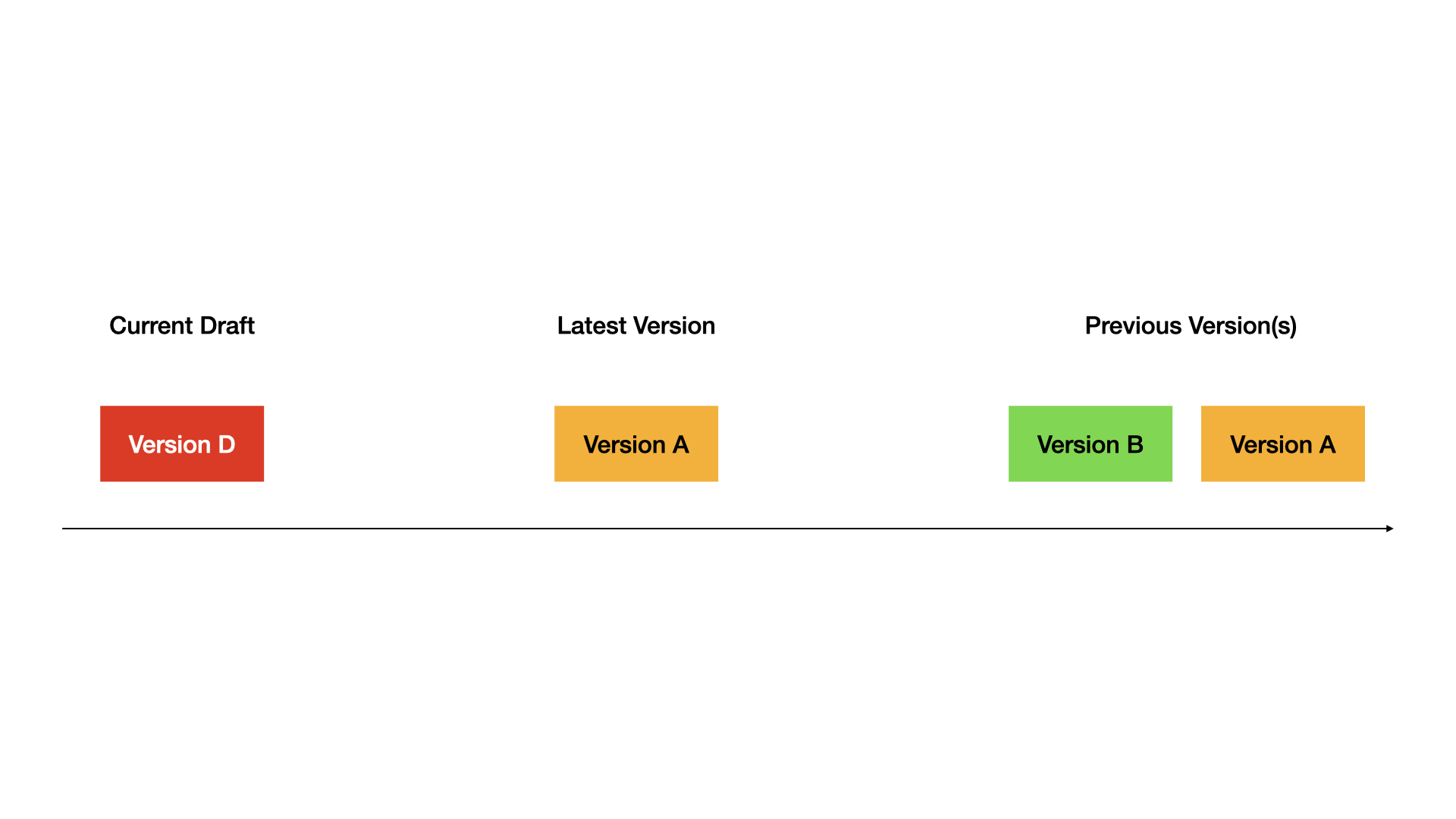

## How it works

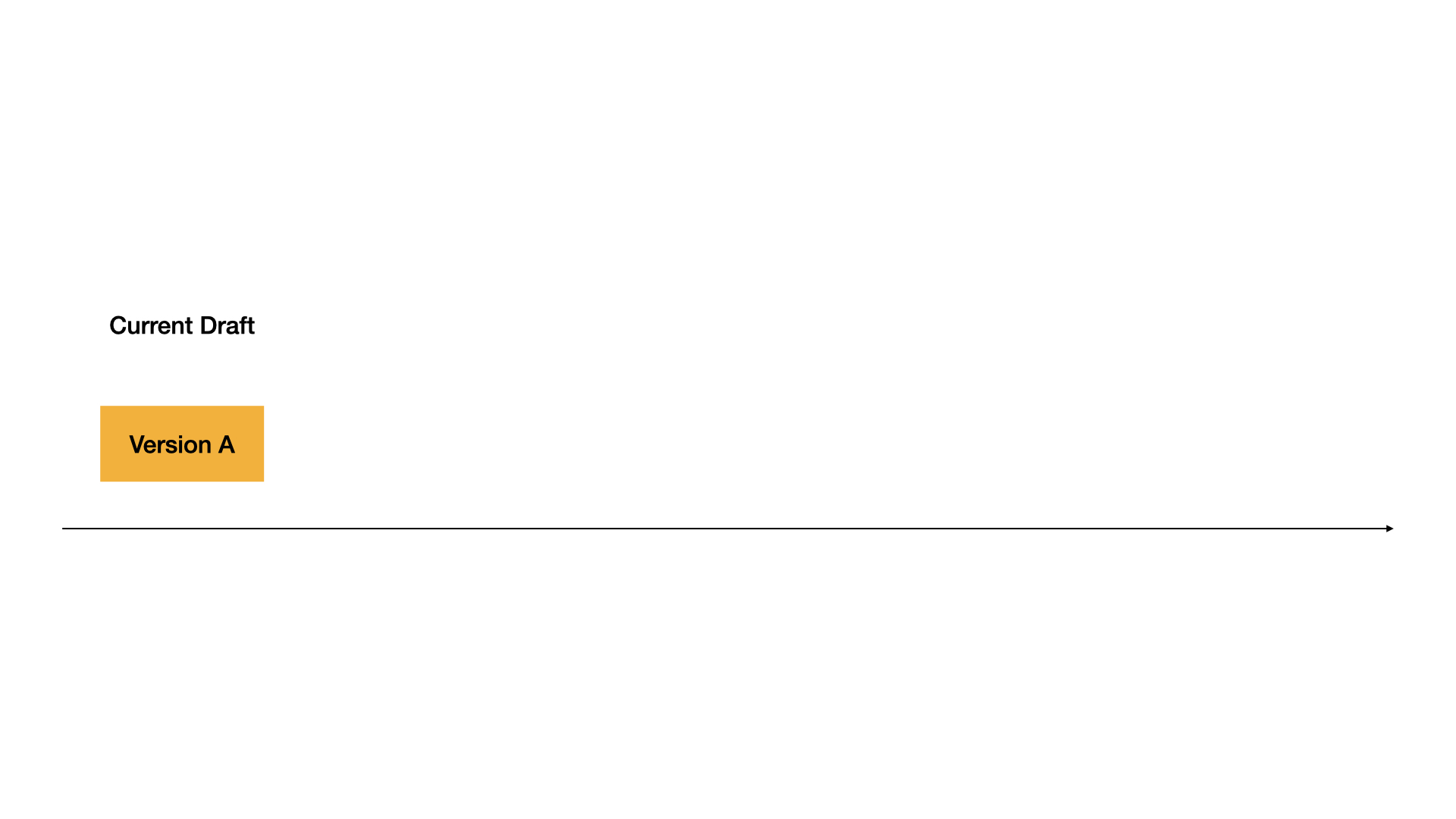

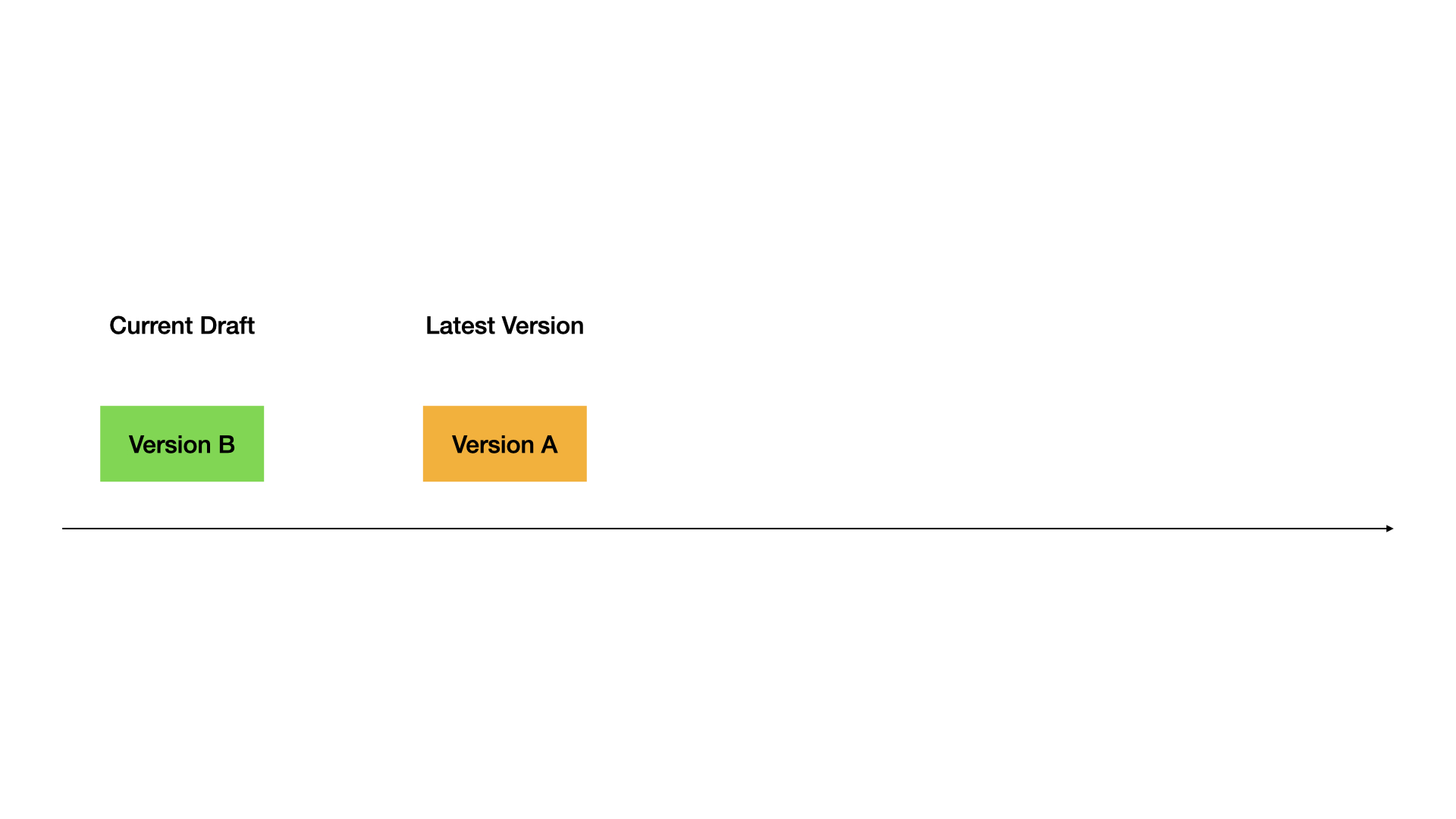

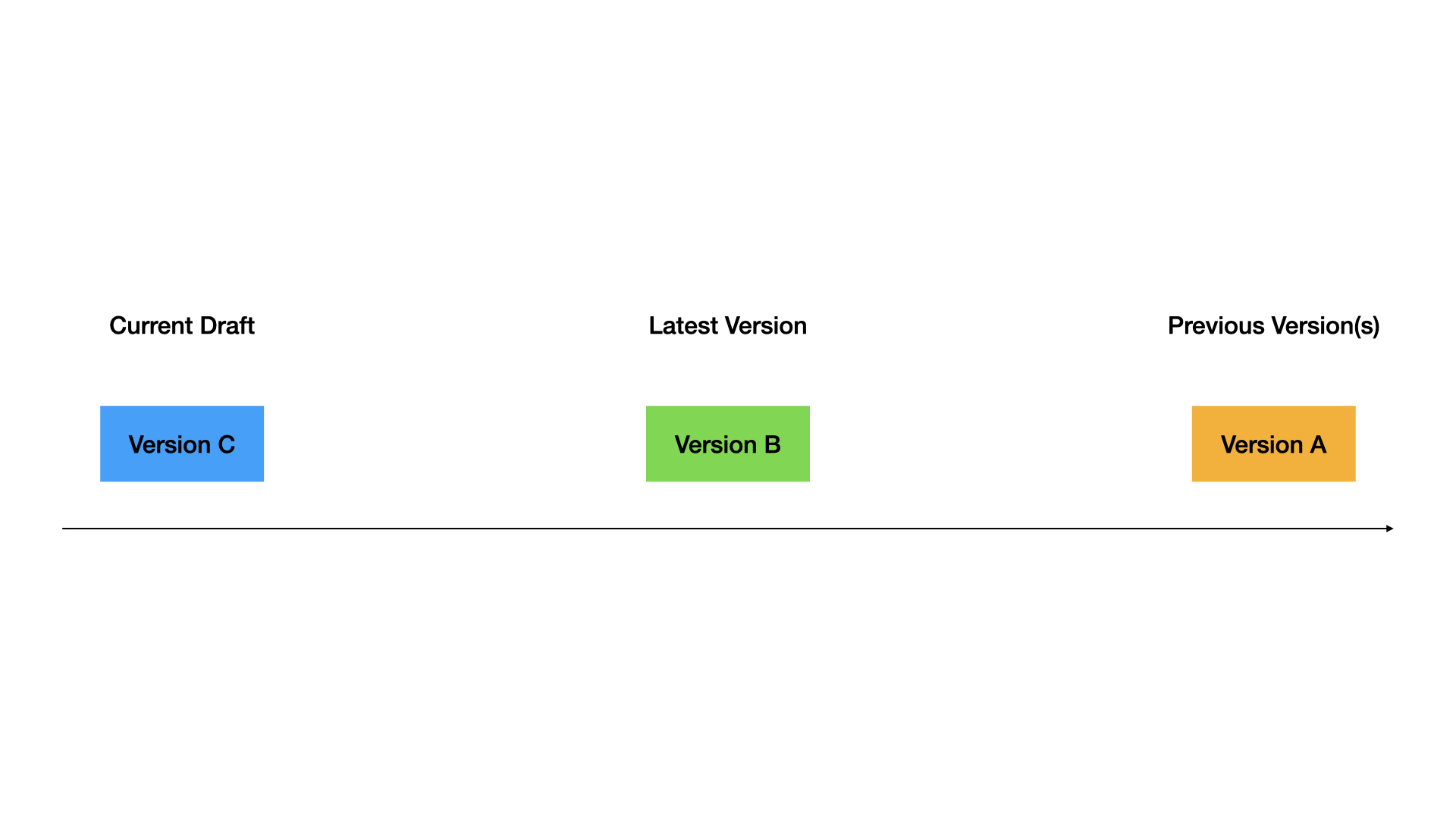

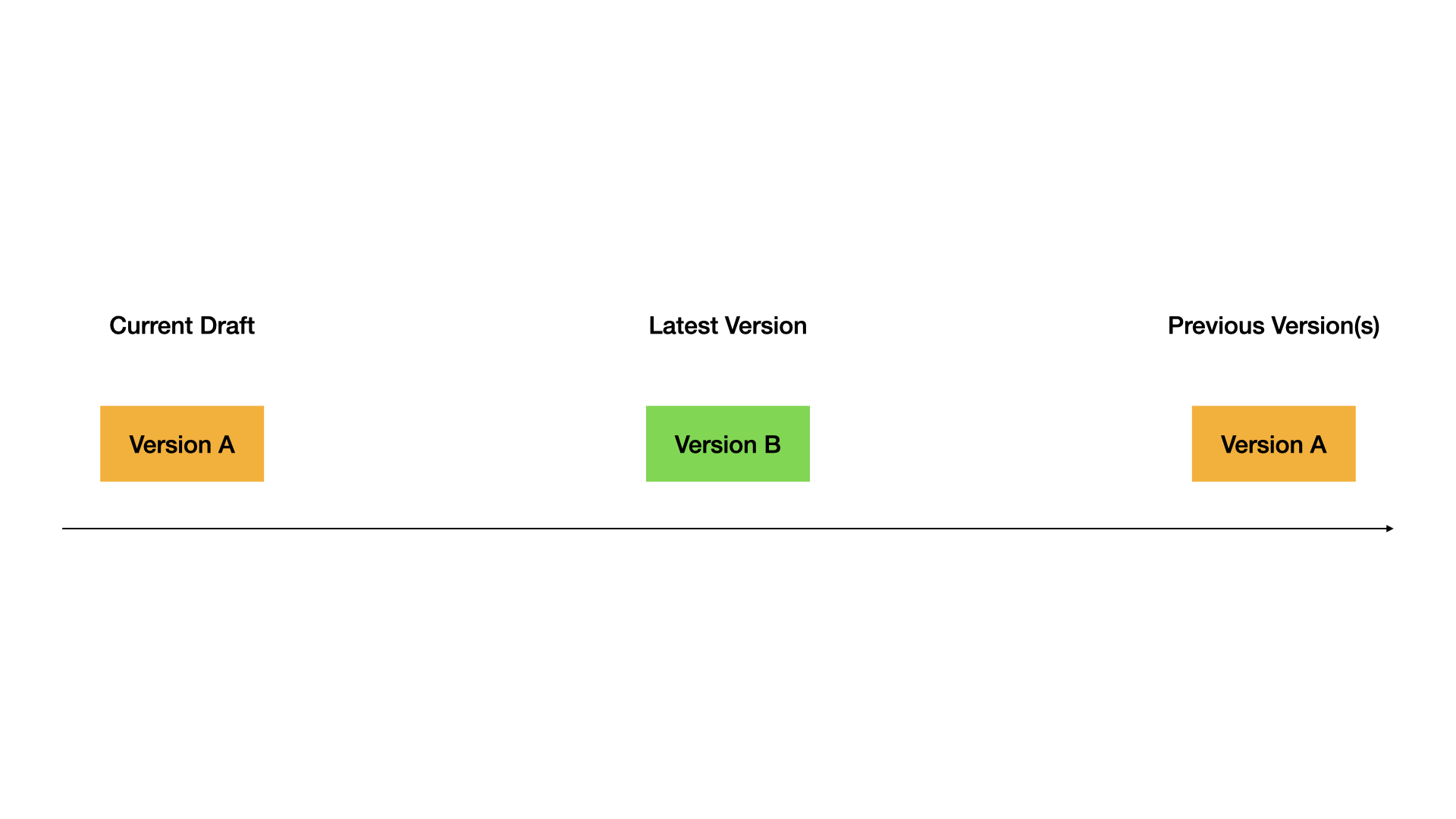

**Current Draft**: Your working version. This is where you make changes. Not live for users.

**Latest Version**: The live version users see.

**Previous Versions**: Older published versions.

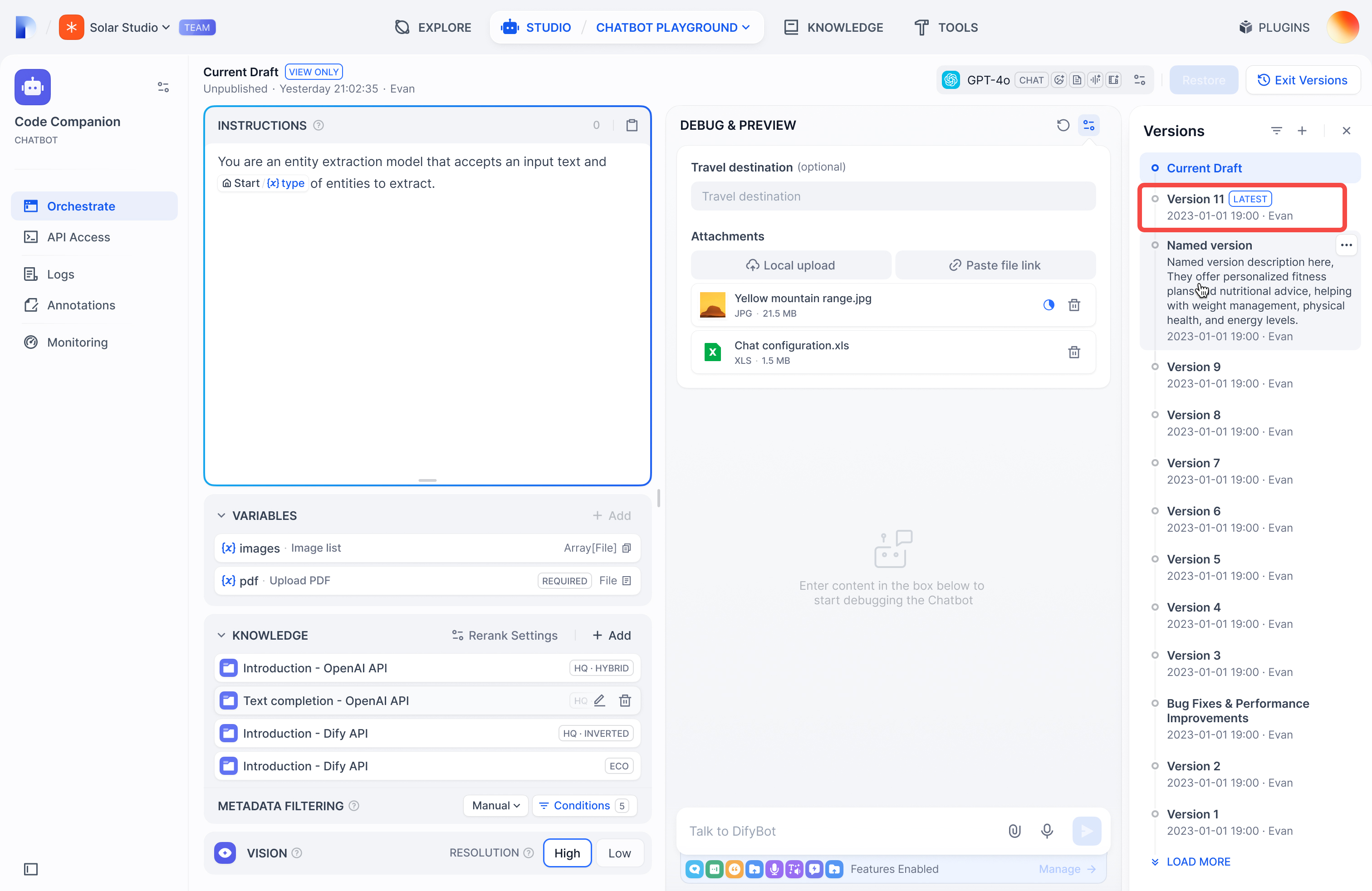

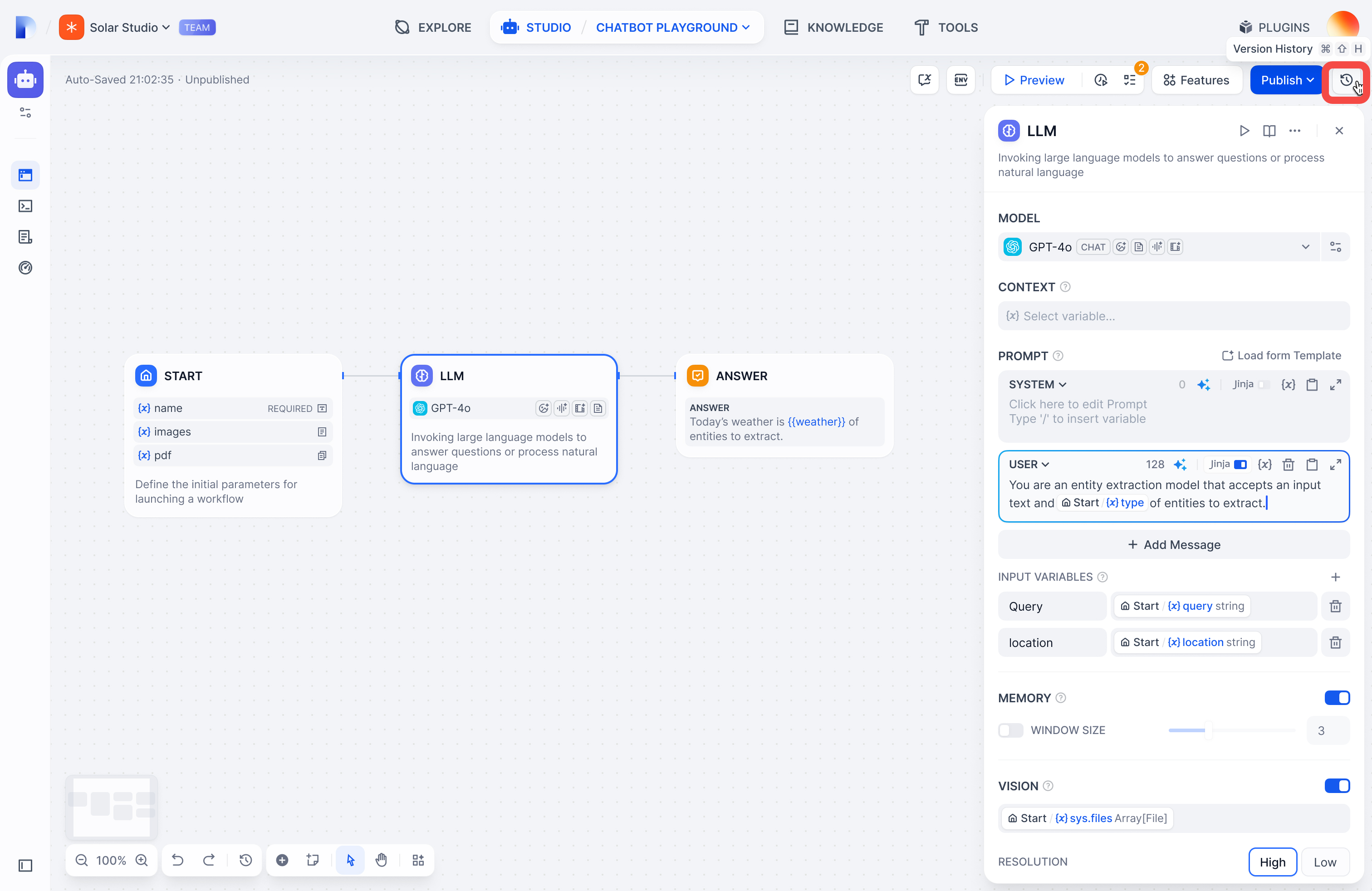

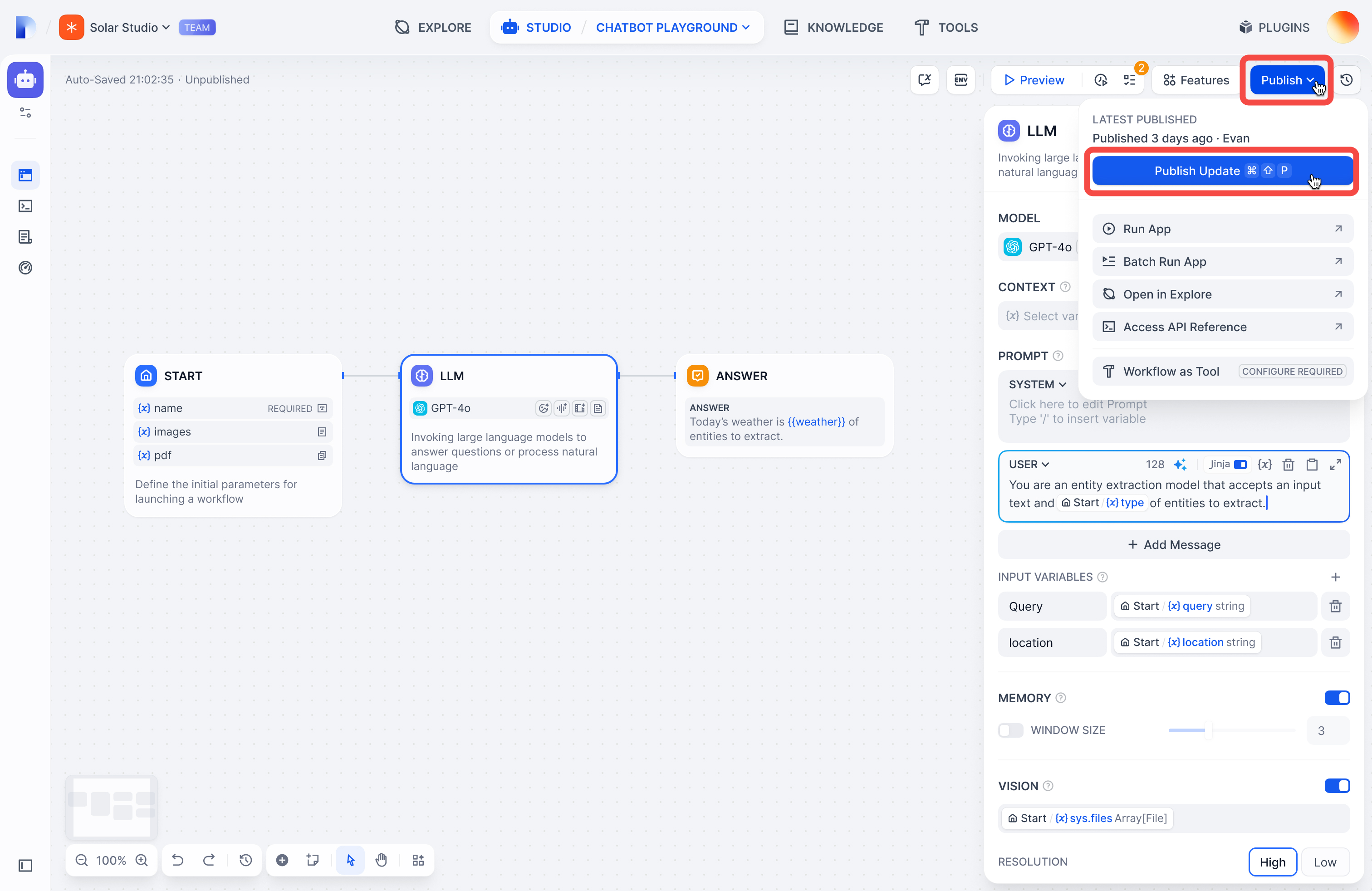

## Publishing versions

Click **Publish** → **Publish Update** to make your draft live.

Your draft becomes the new Latest Version, and you get a fresh draft to work in.

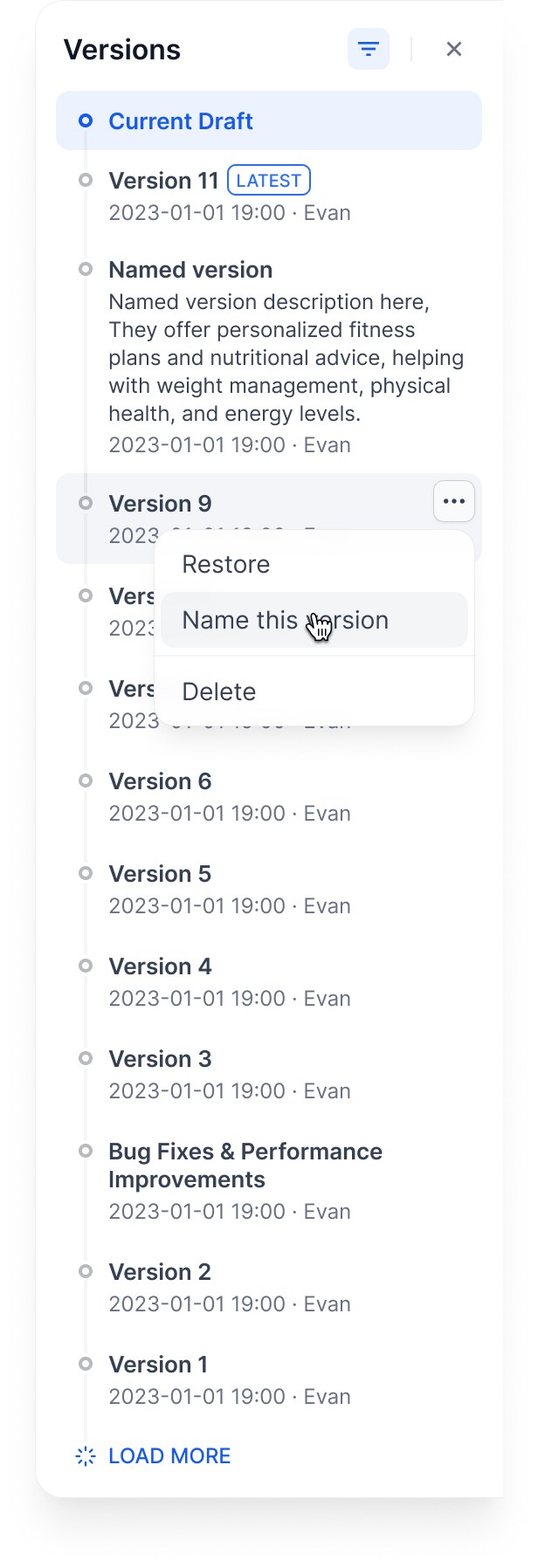

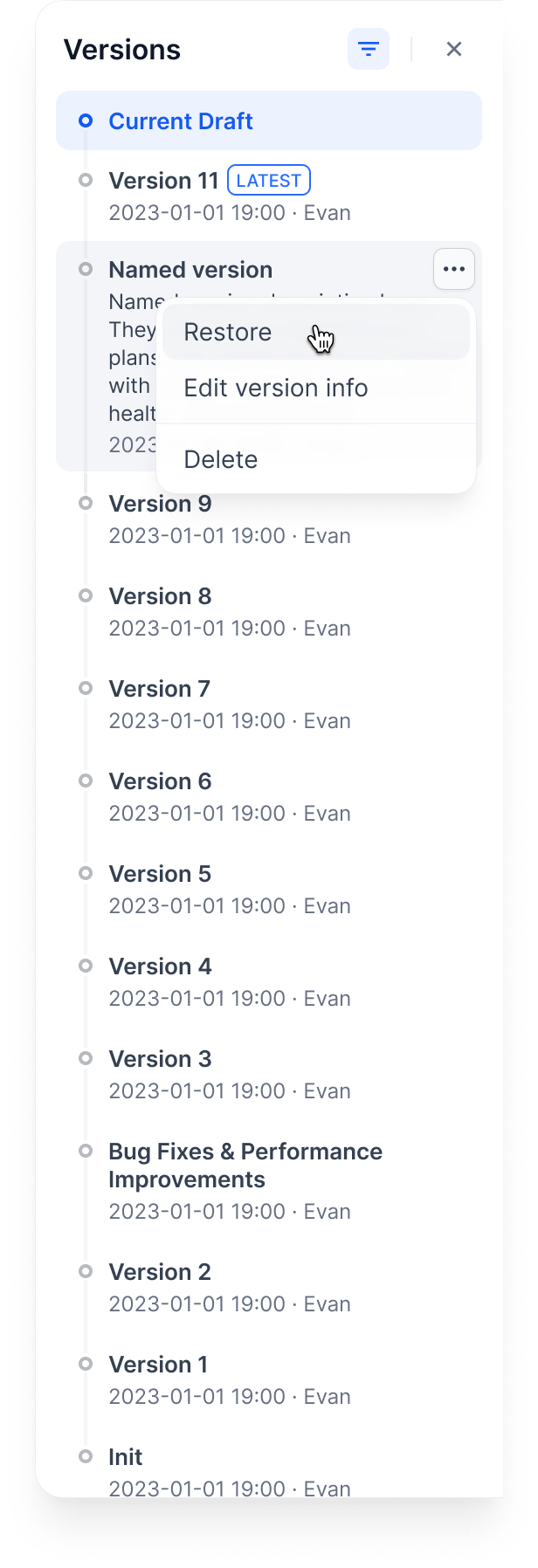

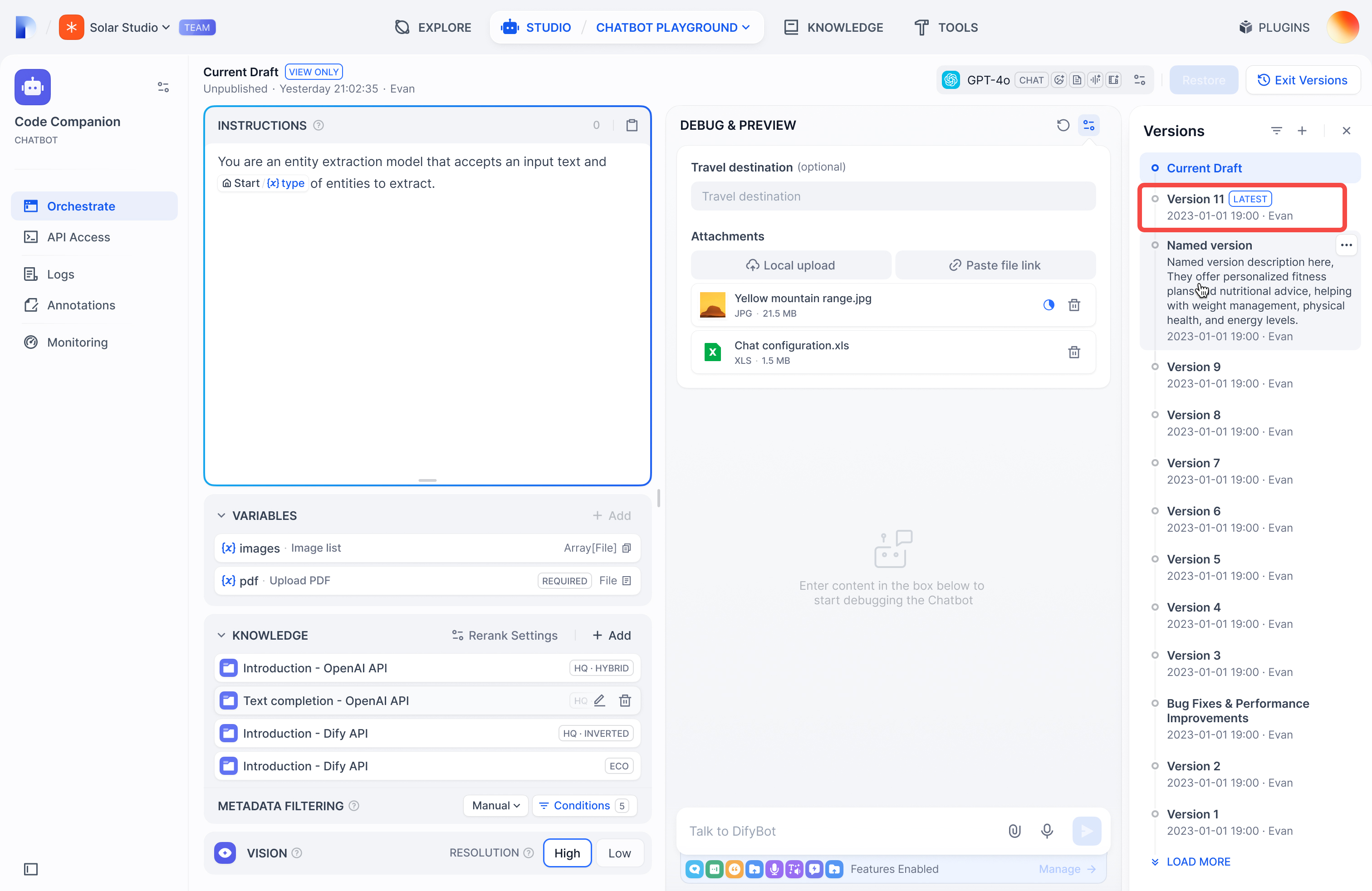

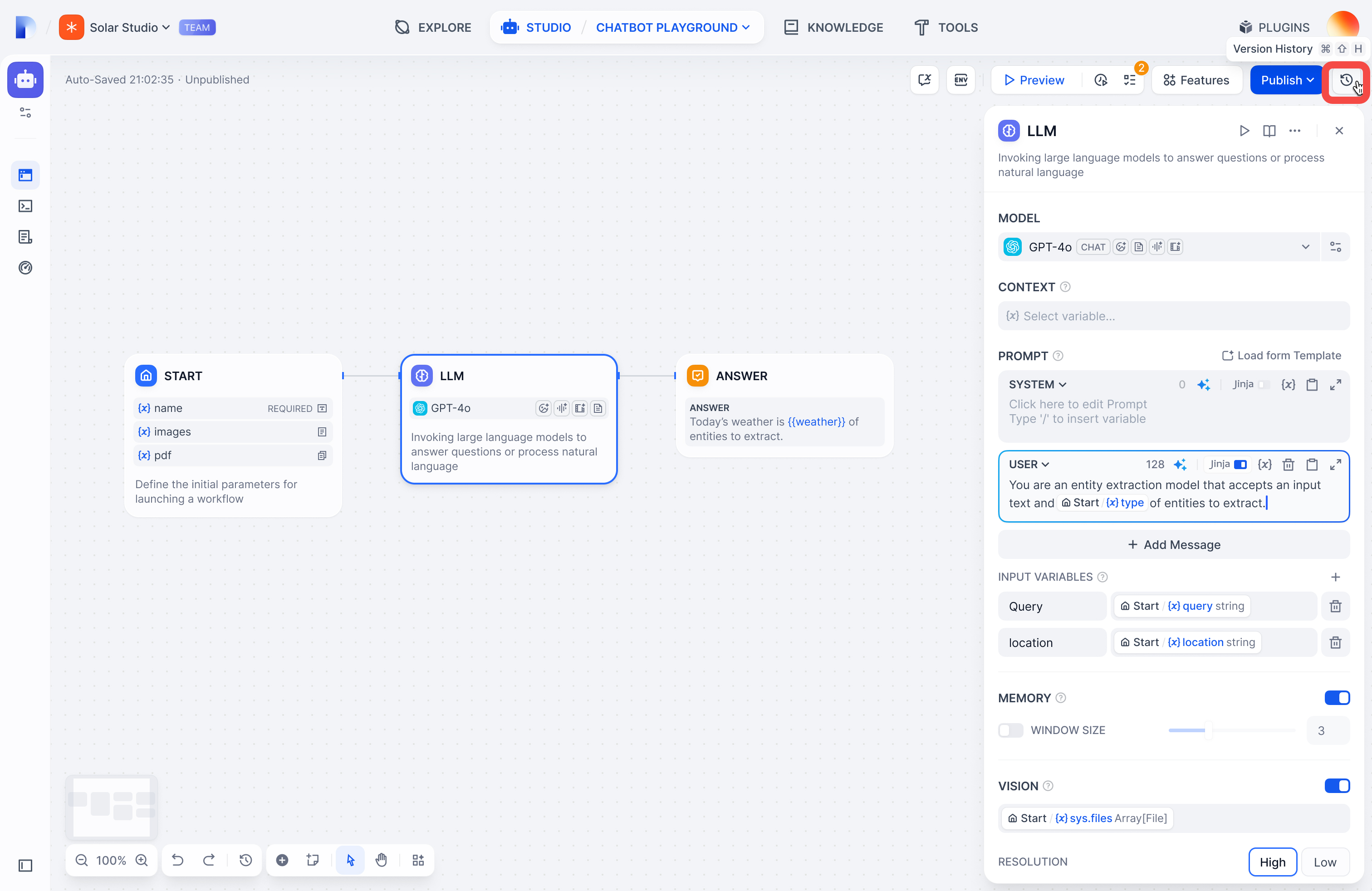

## Viewing versions

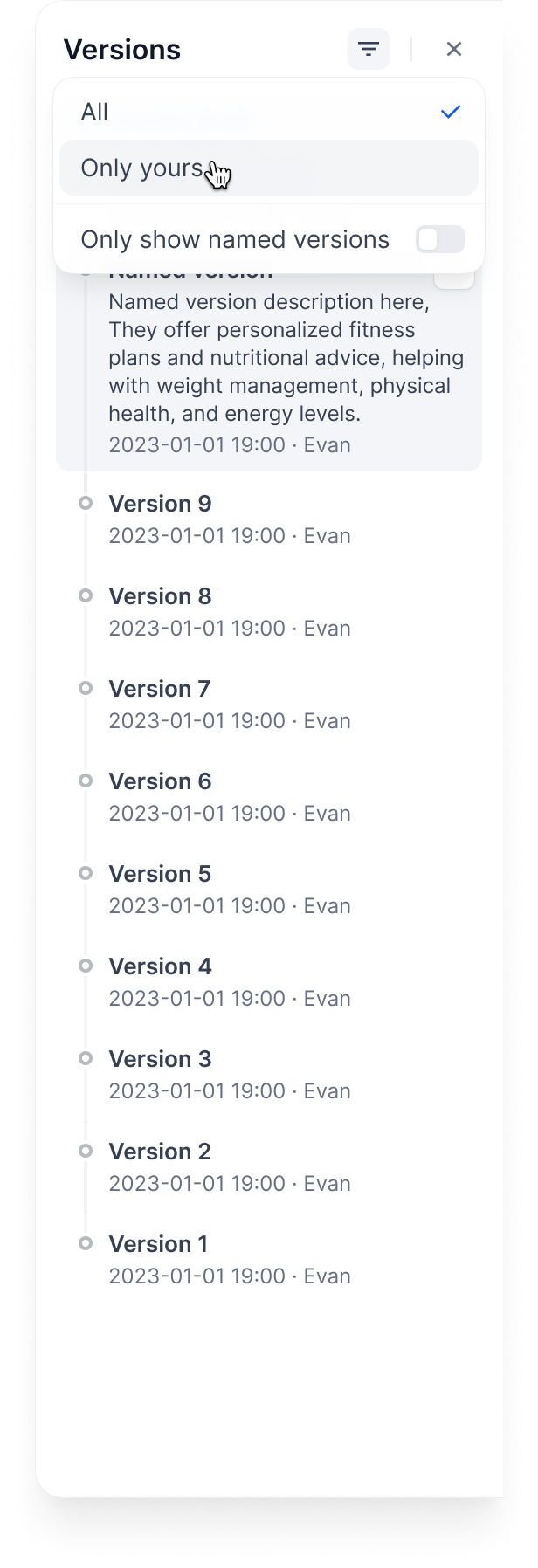

Click the history icon to see all versions:

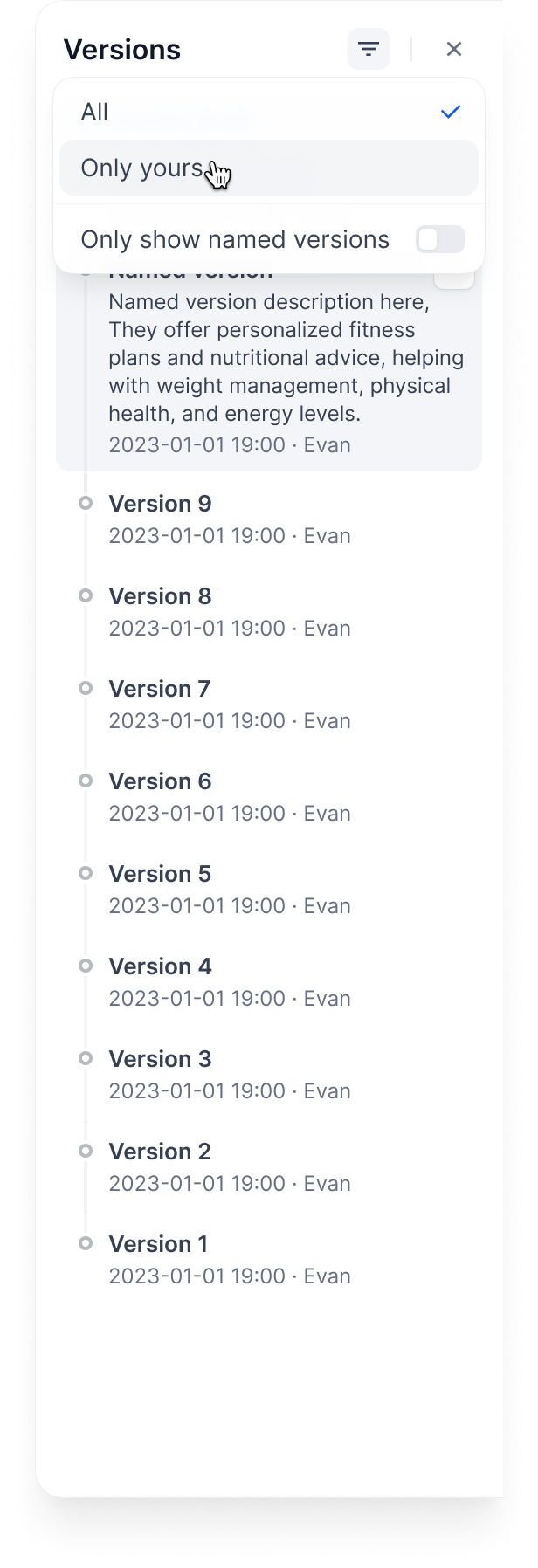

Filter by:

* **All versions** or **only yours**

* **Only named versions** (skip auto-generated names)

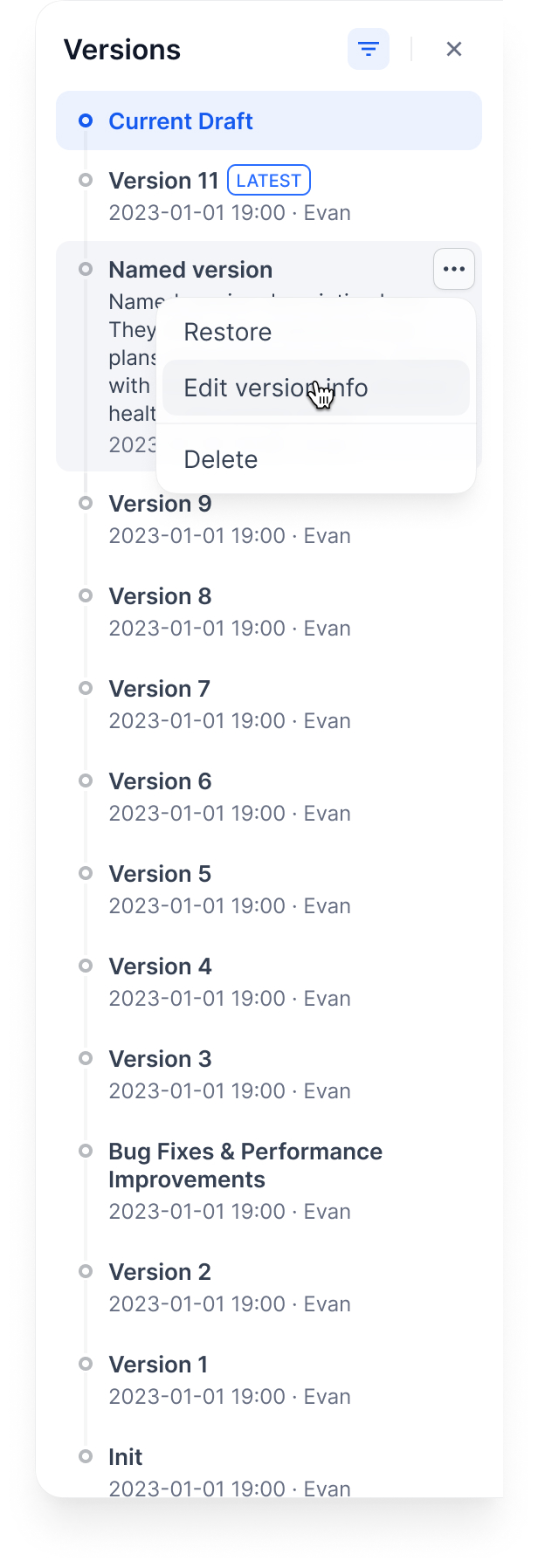

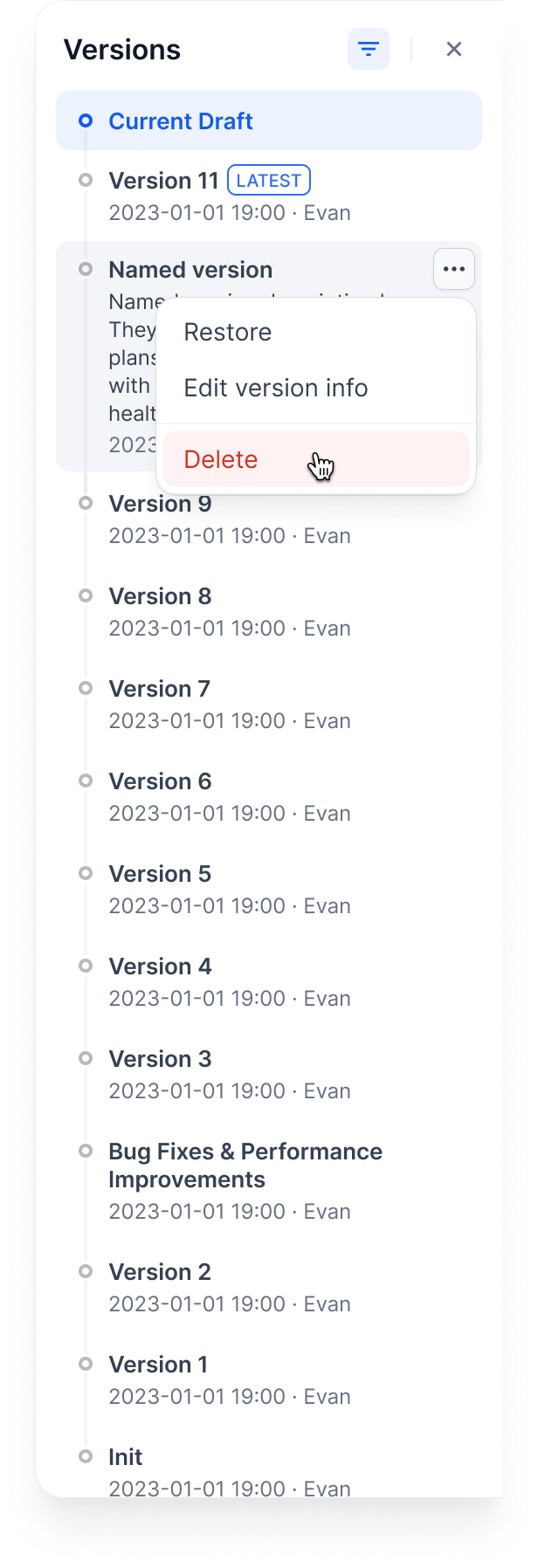

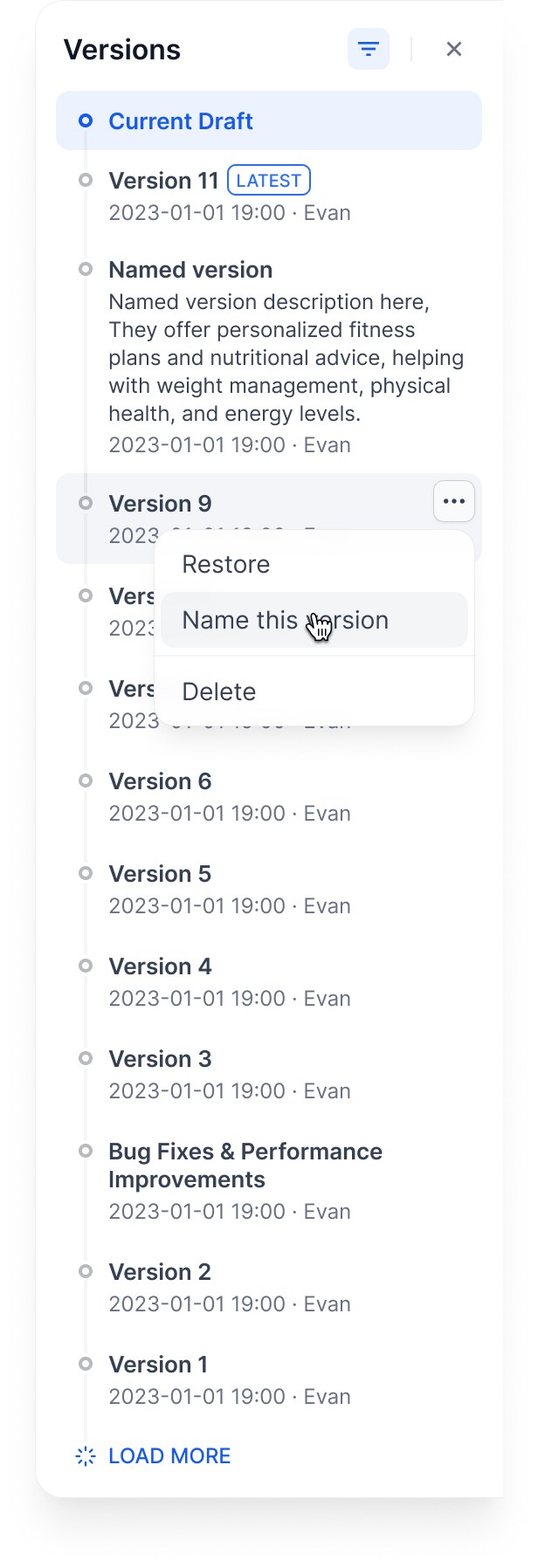

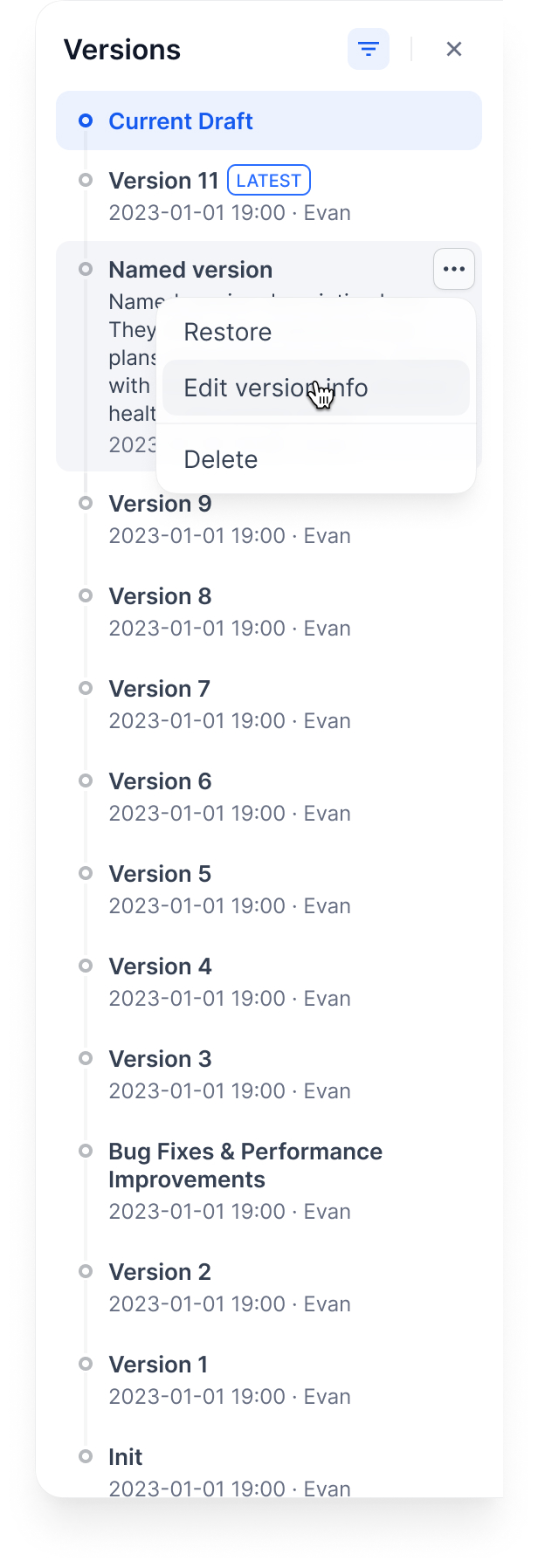

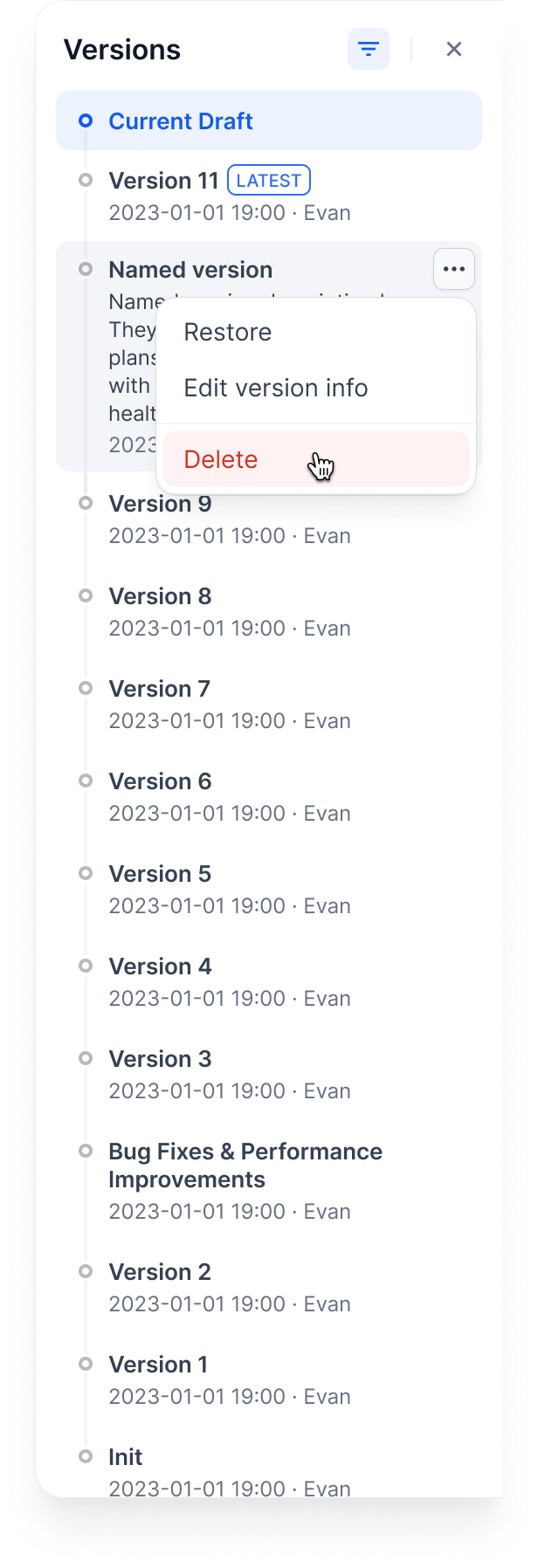

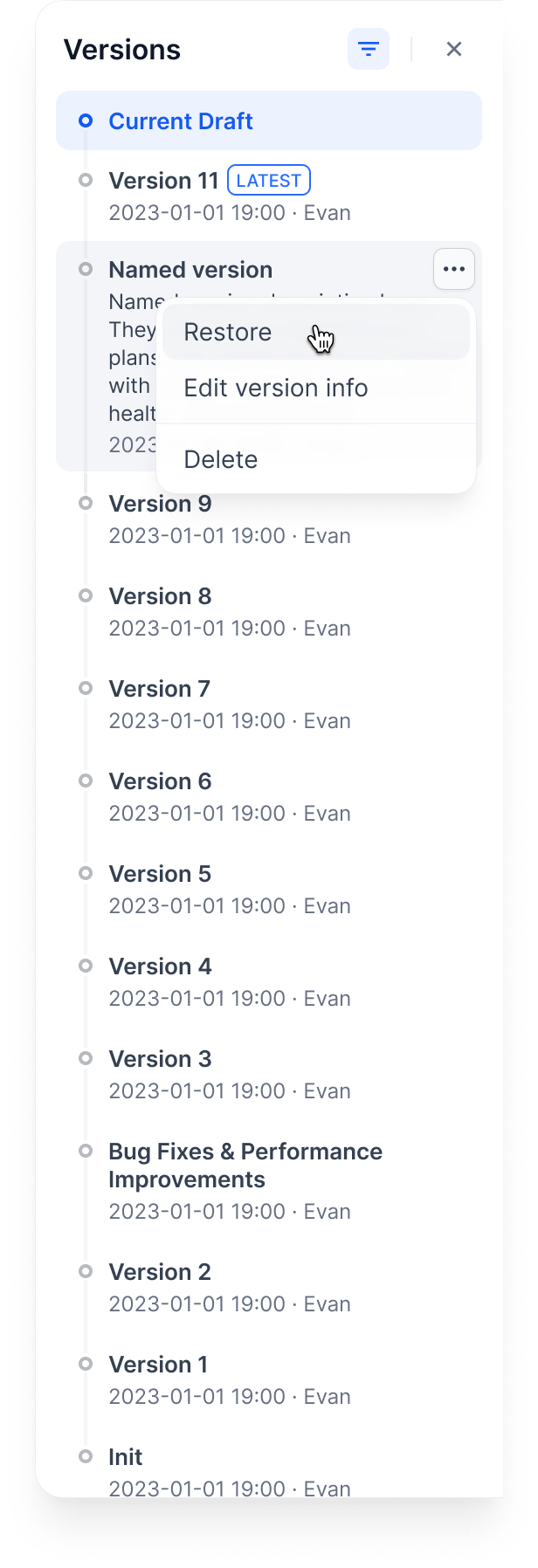

## Managing versions

**Name a version**: Give it a proper name instead of the auto-generated one

**Edit version info**: Change the name and add release notes

**Delete old versions**: Clean up versions you don't need

You can't delete the Current Draft or Latest Version.

**Restore a version**: Load an old version back into your draft

This replaces your current draft completely. Make sure you don't have unsaved work.

## Example workflow

Here's how versions work through a typical development cycle:

### 1. Start with a draft

### 2. Publish first version

### 3. Publish second version

### 4. Restore old version to draft

### 5. Publish the restored version

Complete demo:

## Tips

* Always test in draft before publishing

* Use descriptive version names for important releases

* Restore versions when you need to rollback quickly

* Keep old versions around for reference

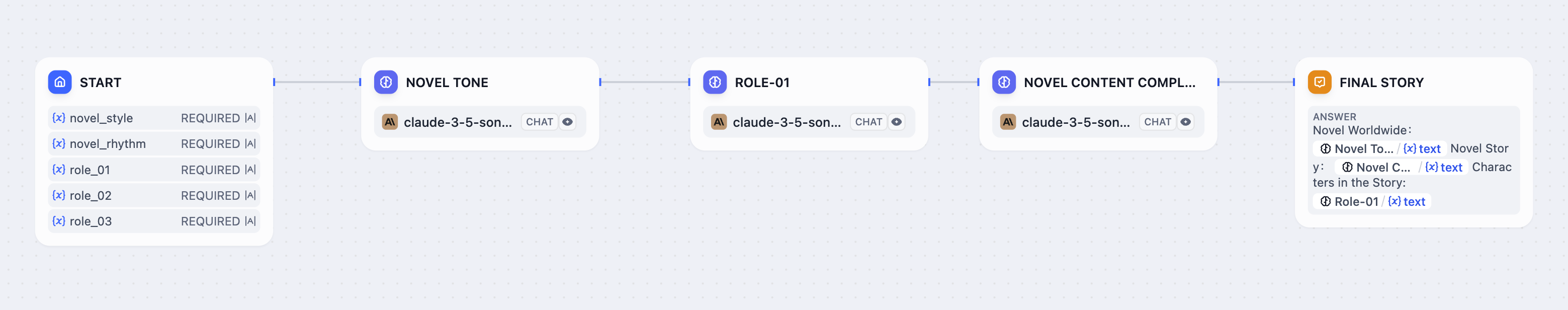

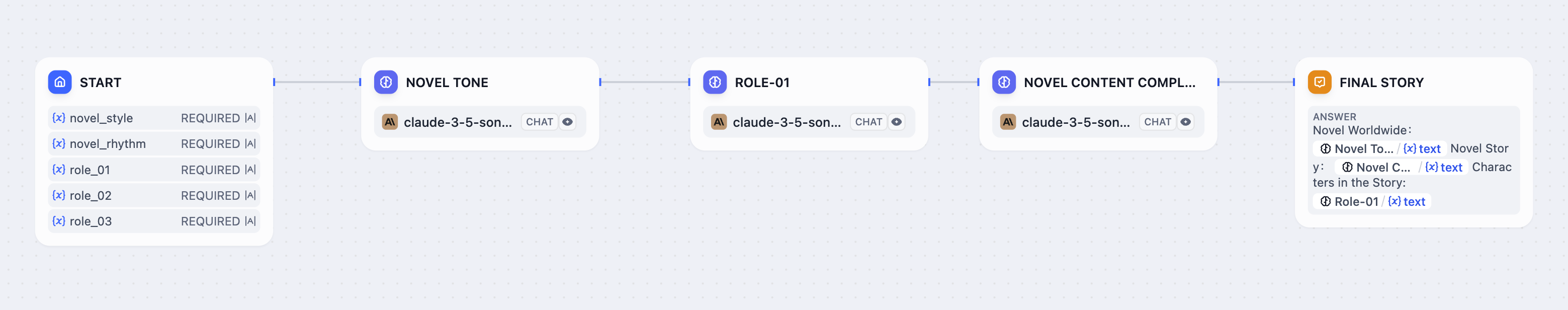

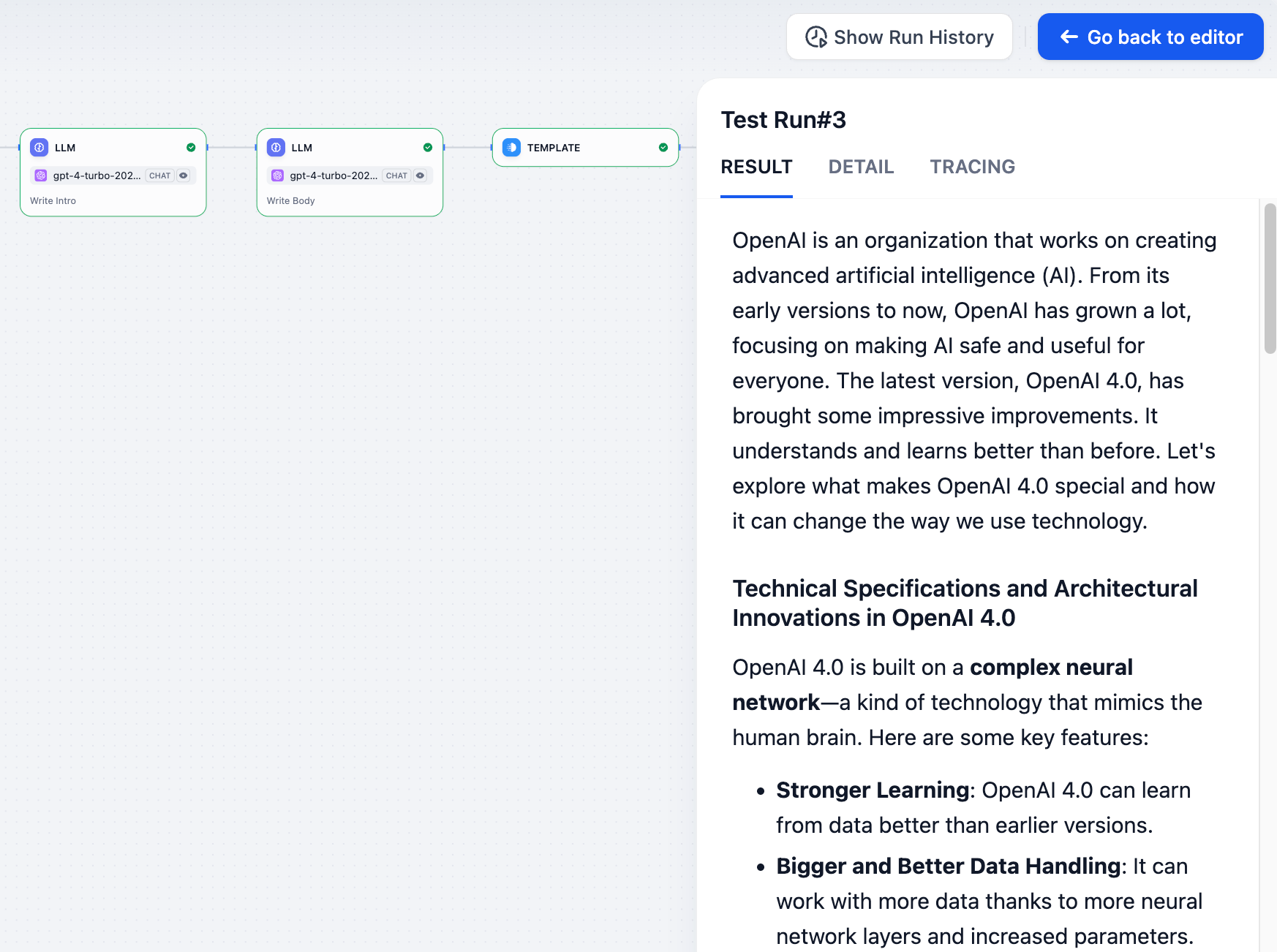

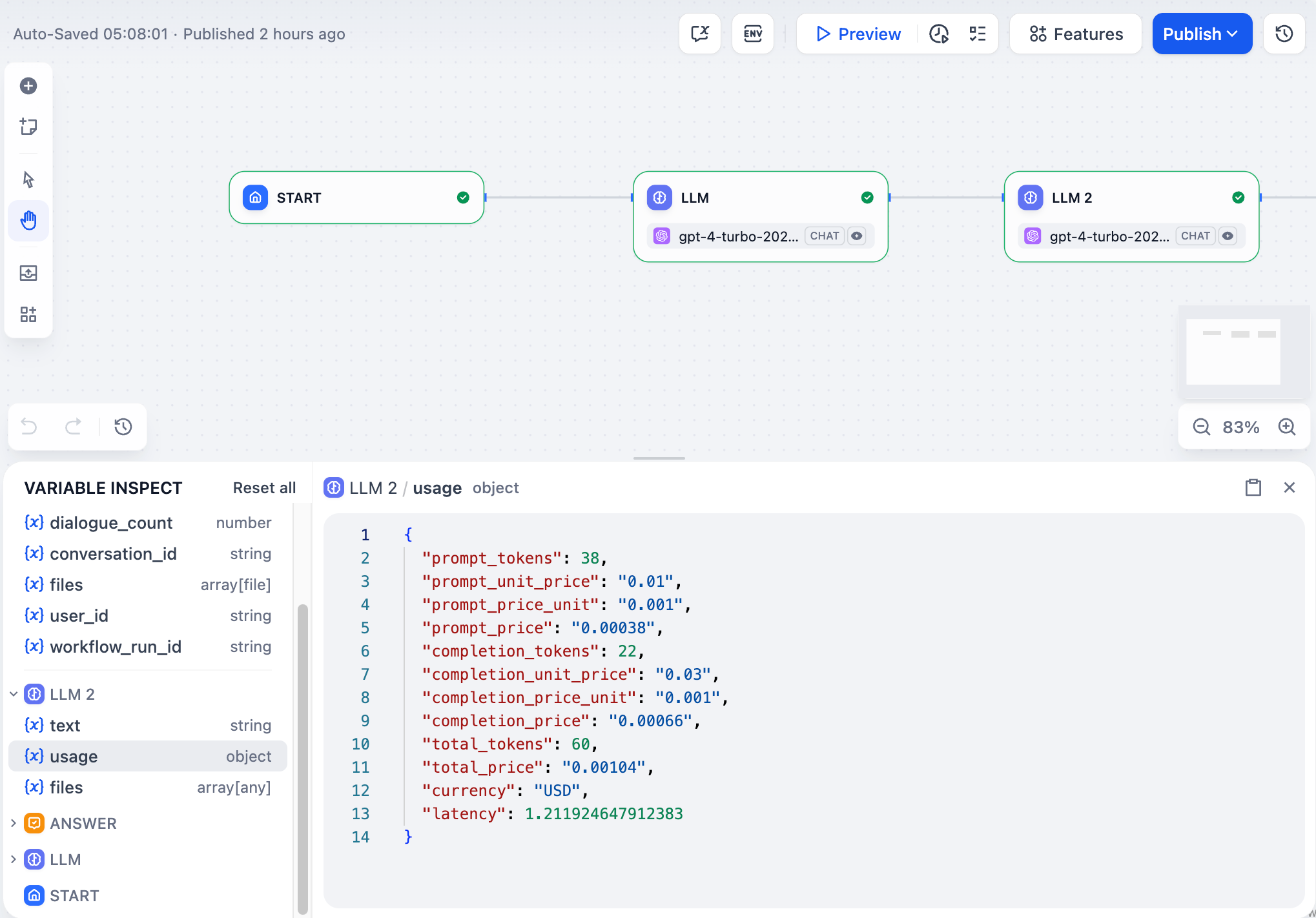

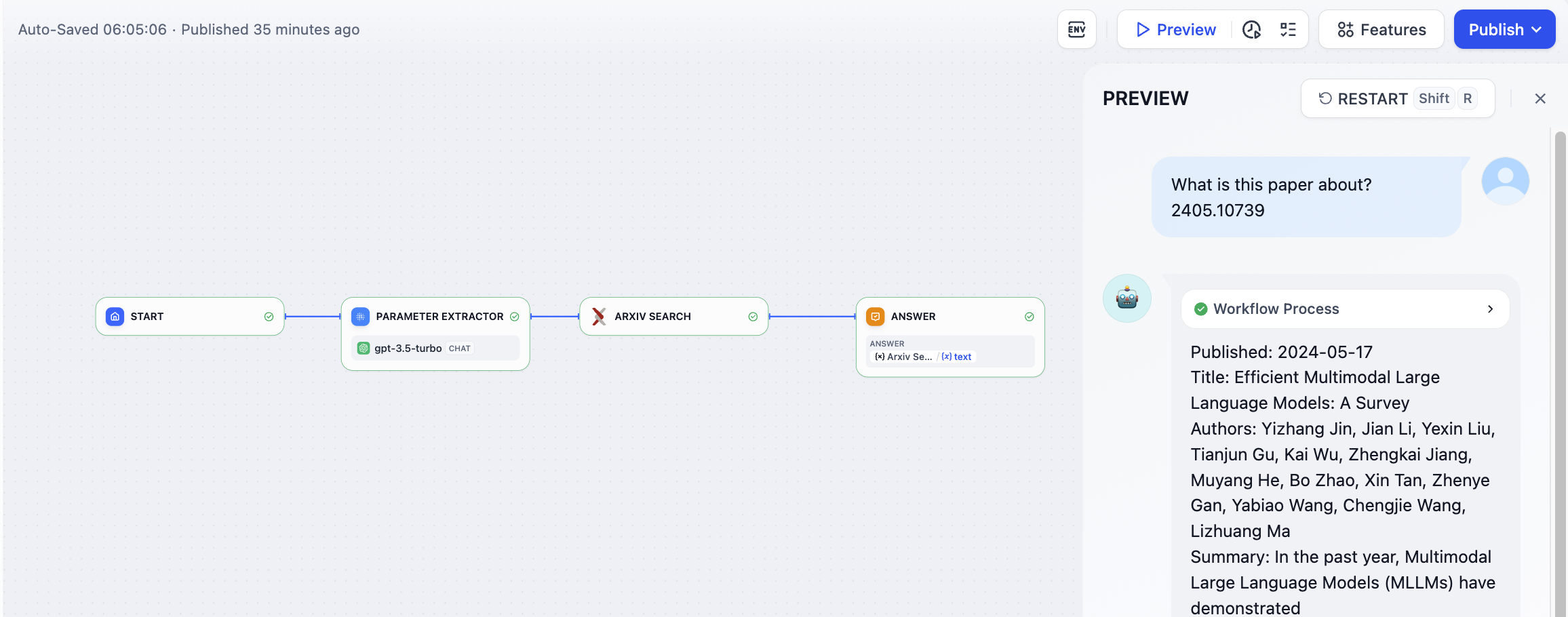

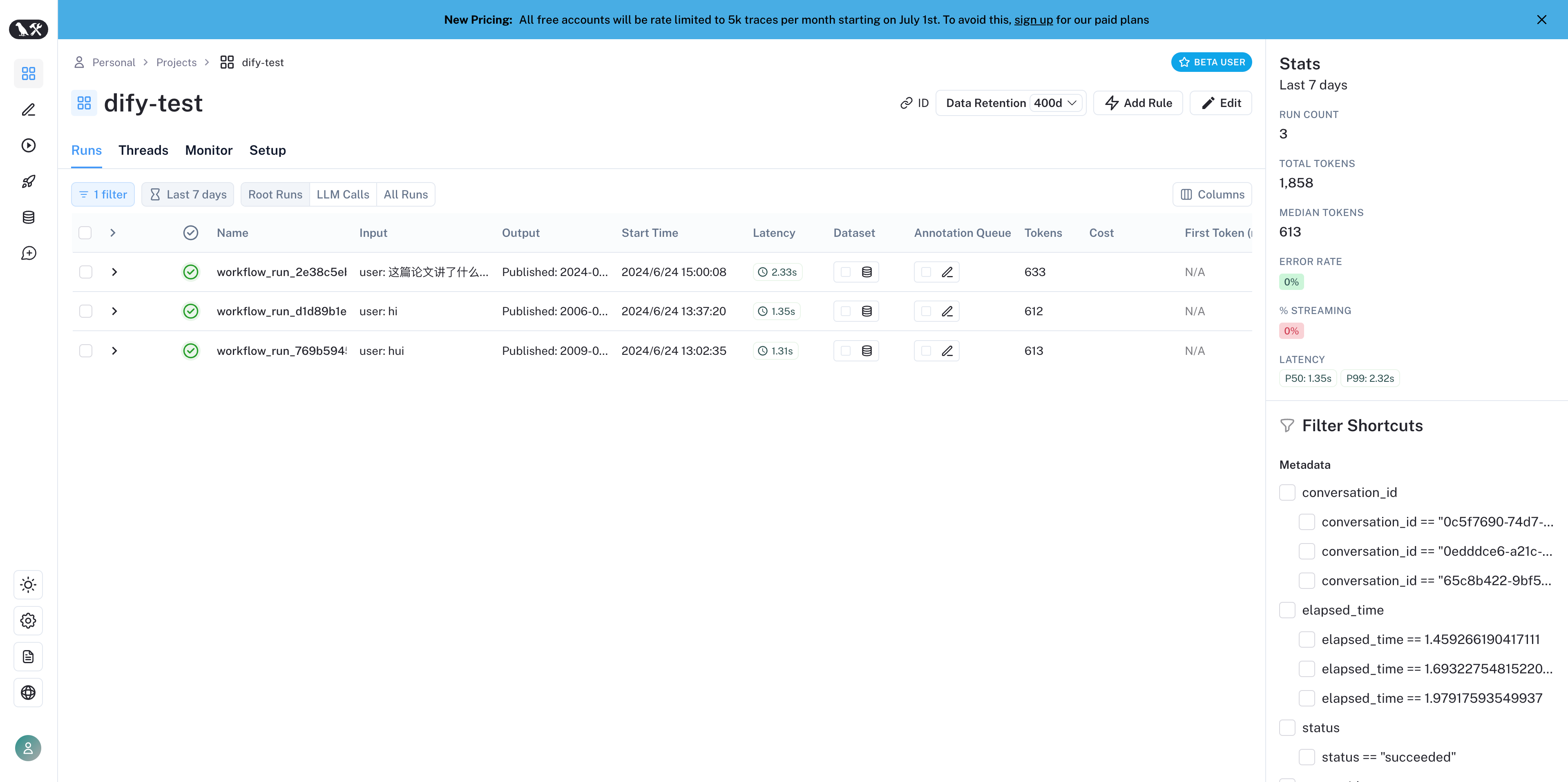

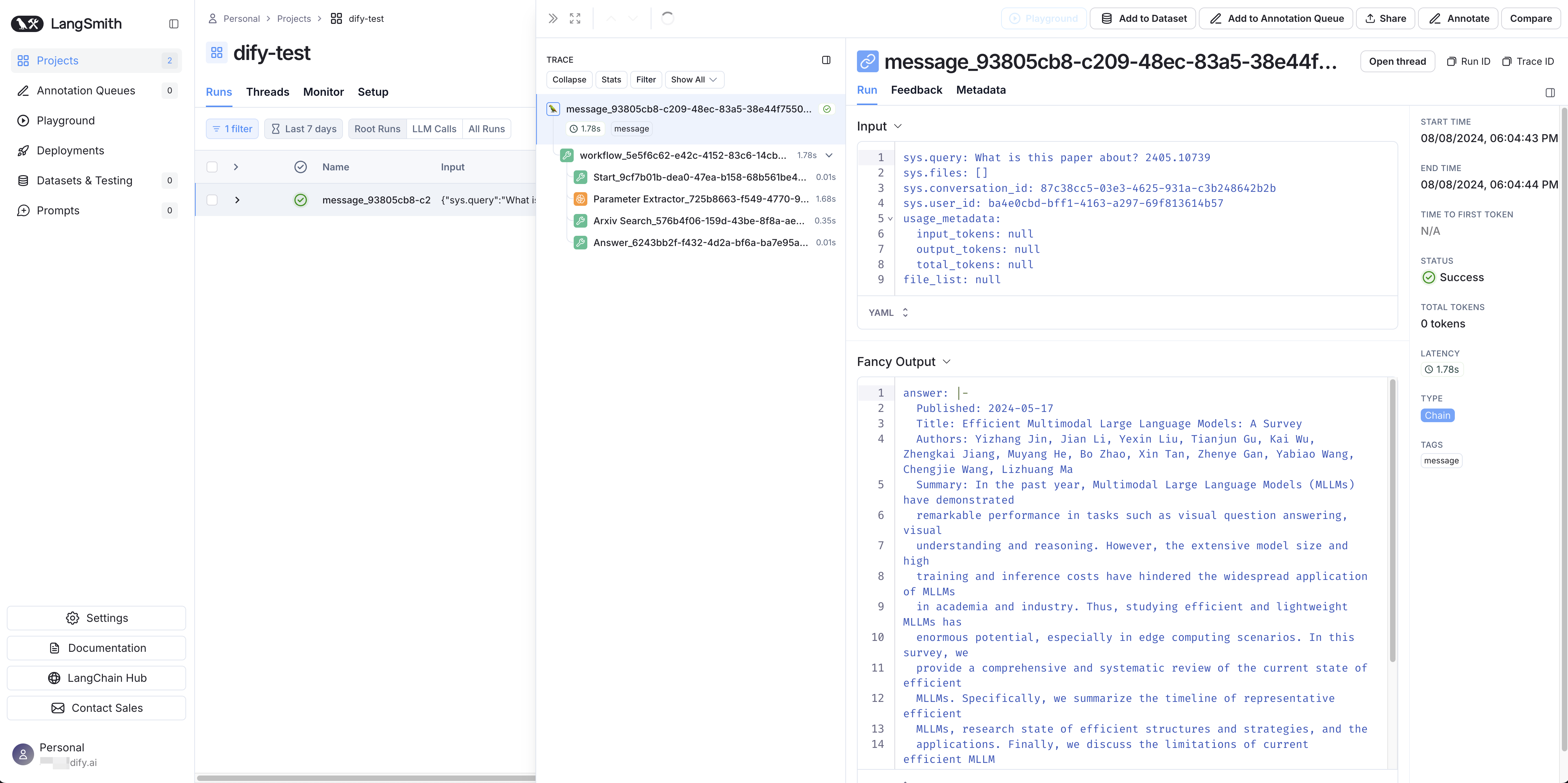

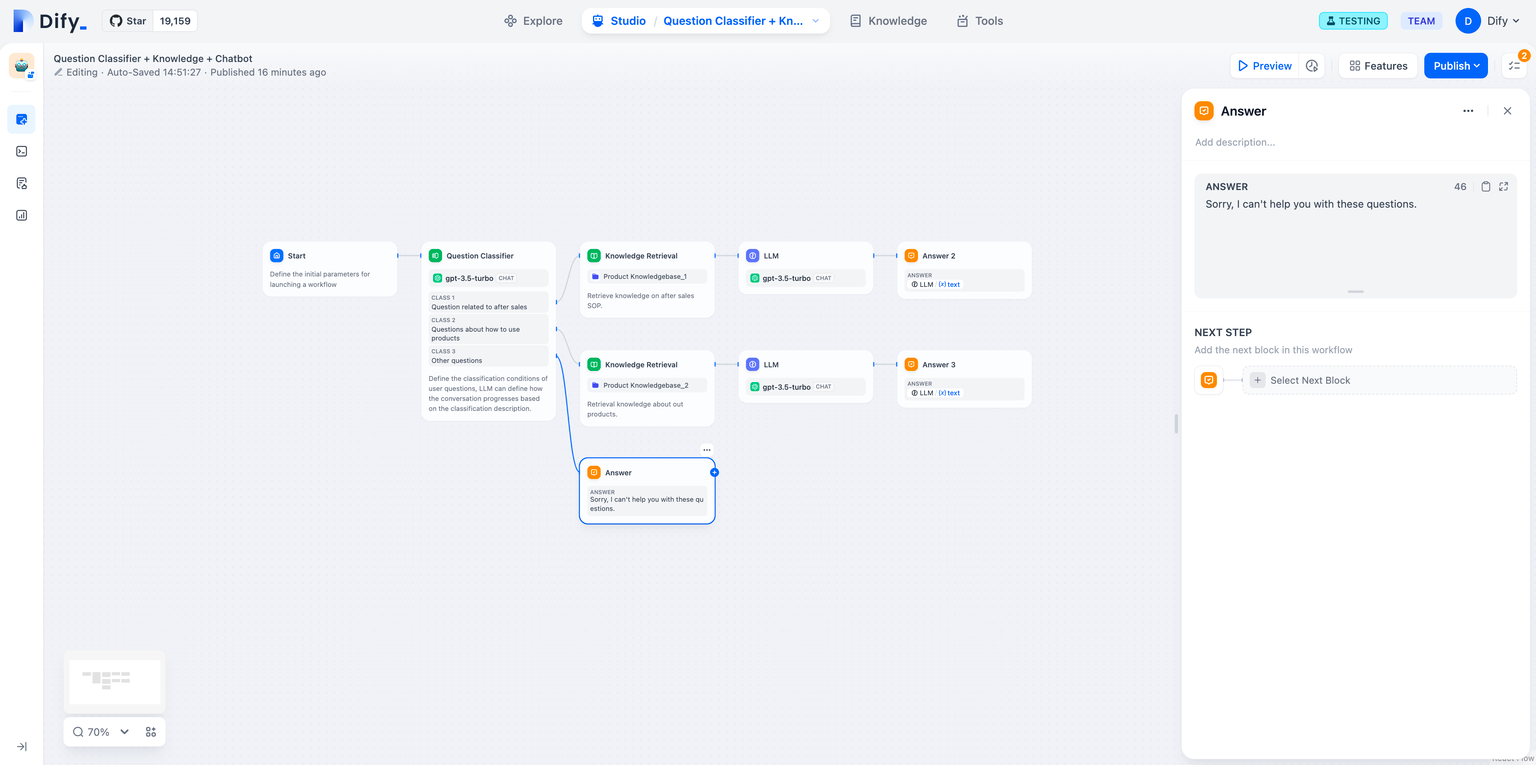

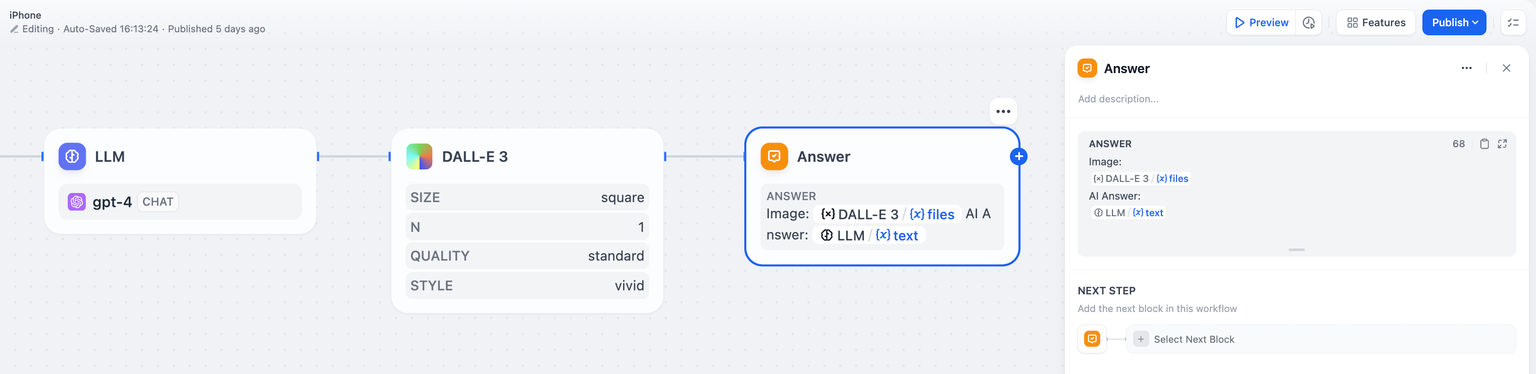

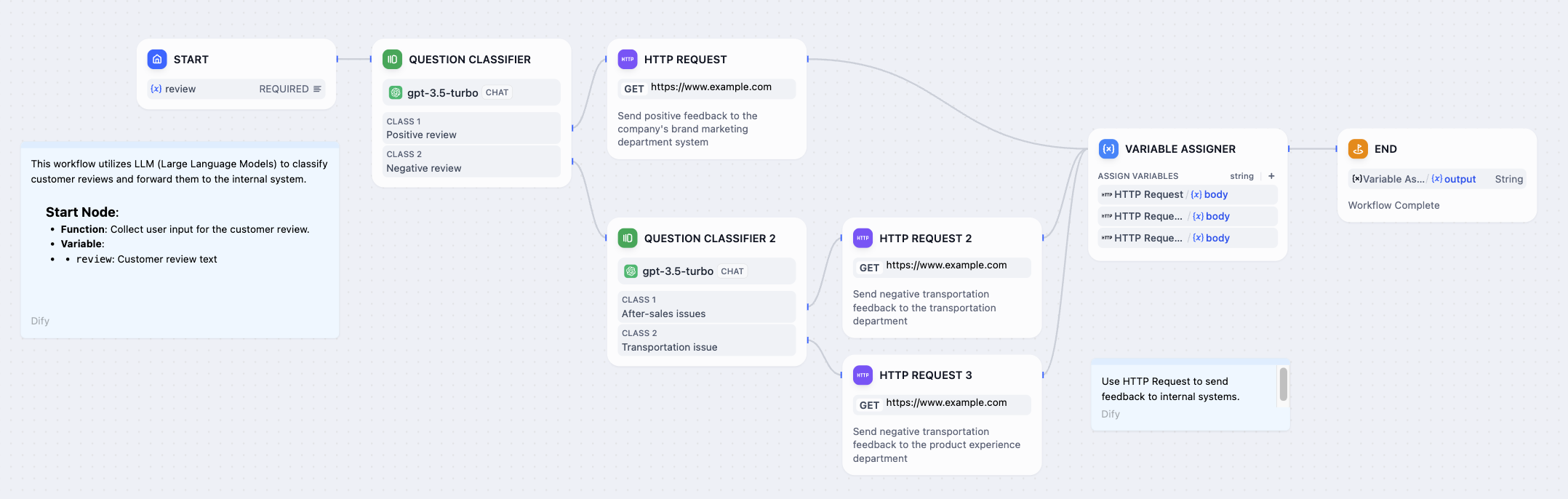

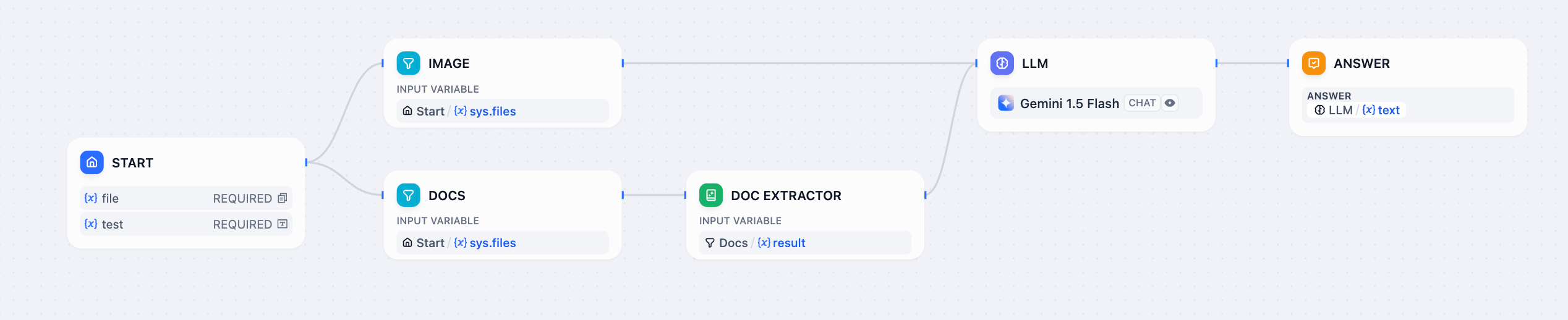

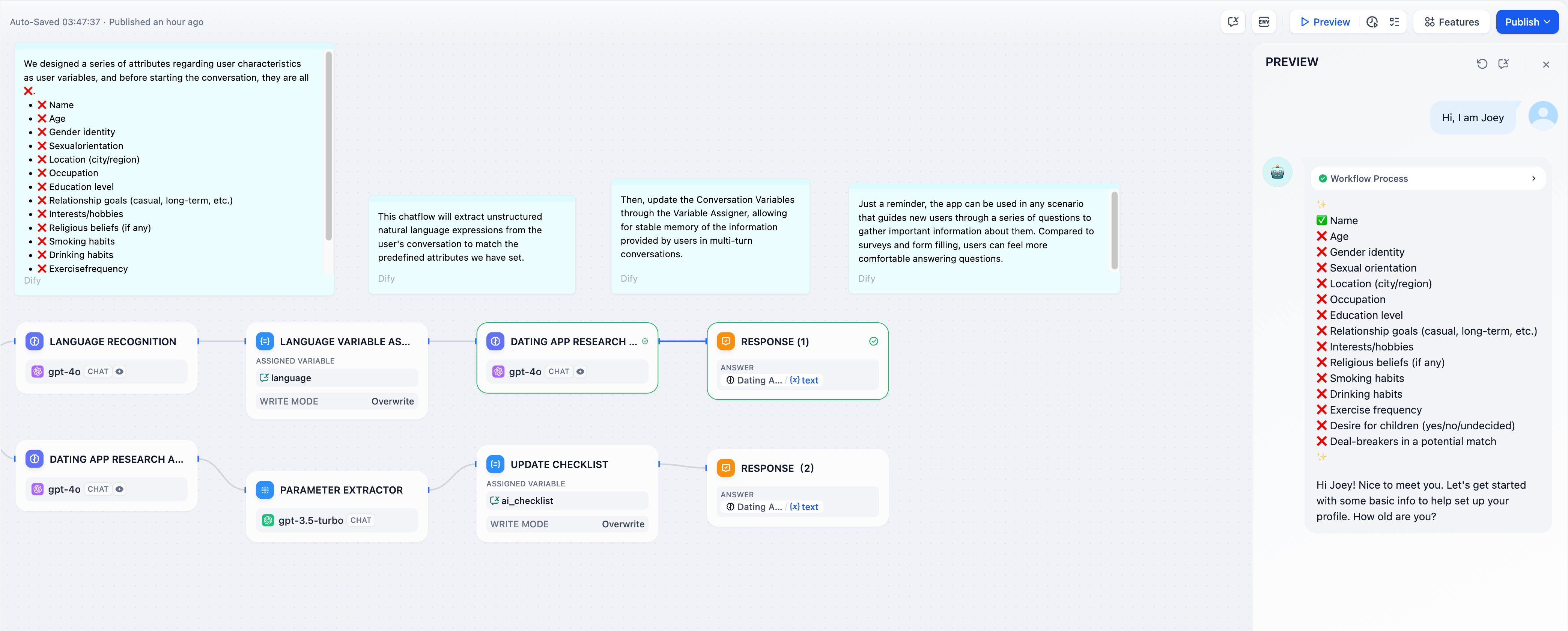

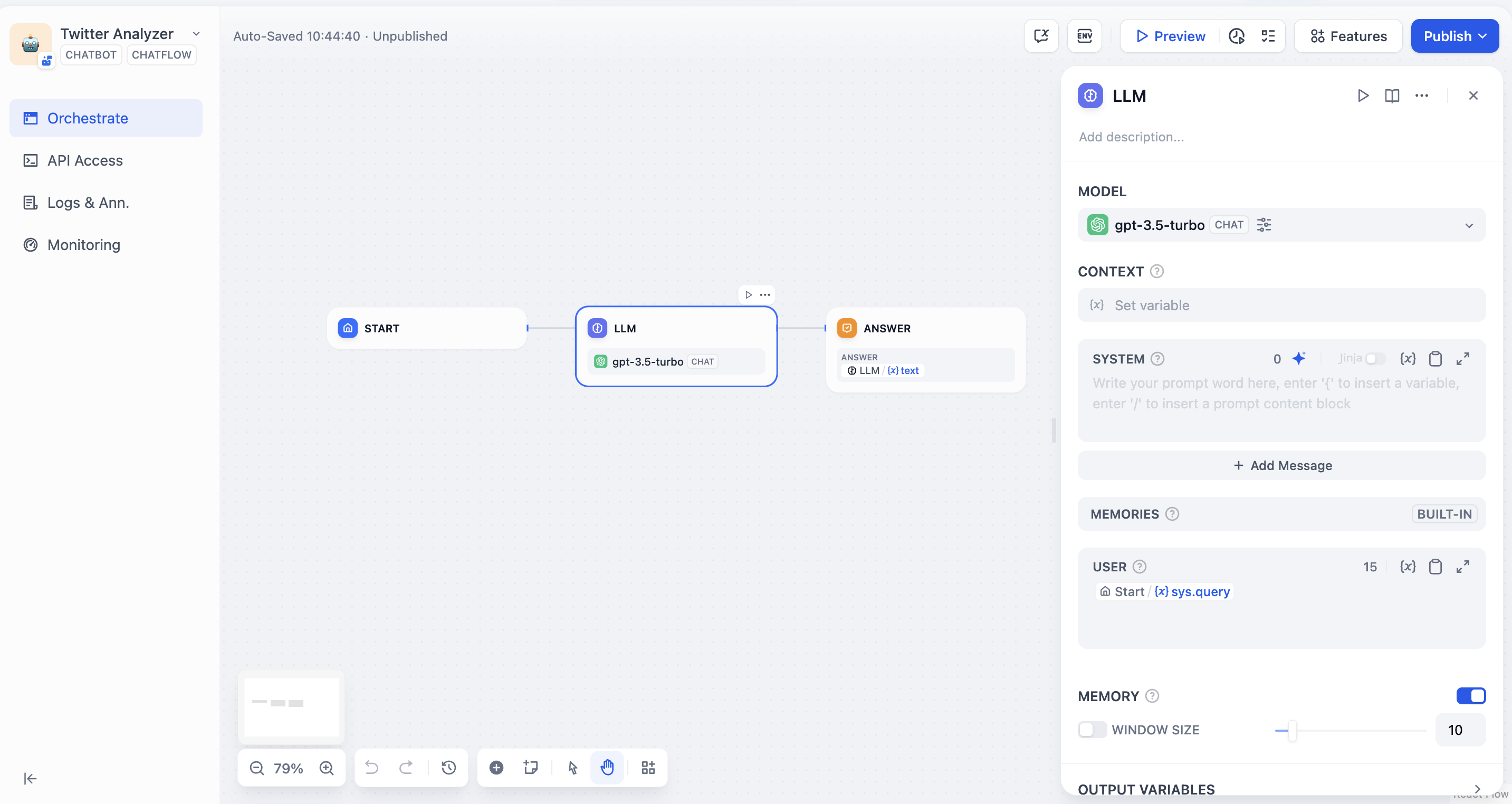

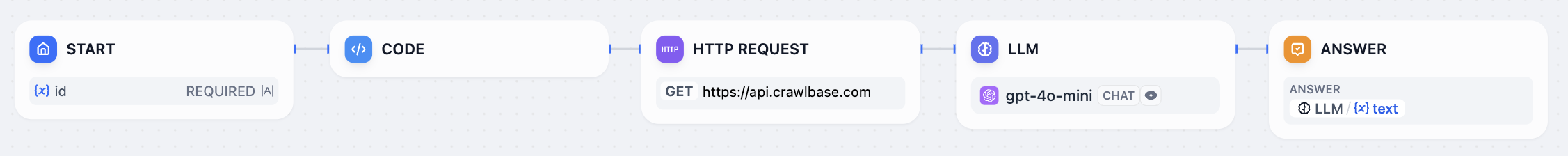

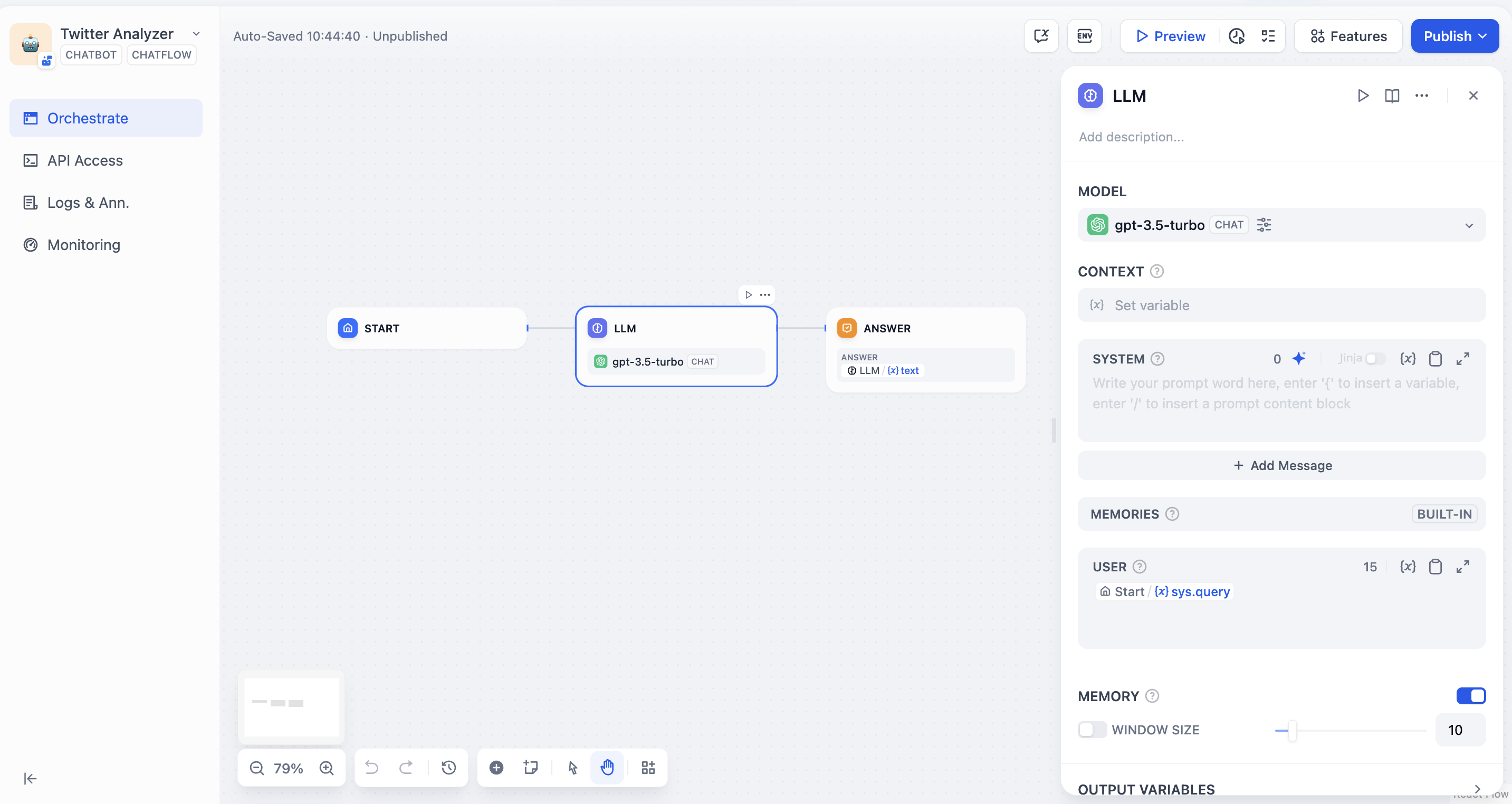

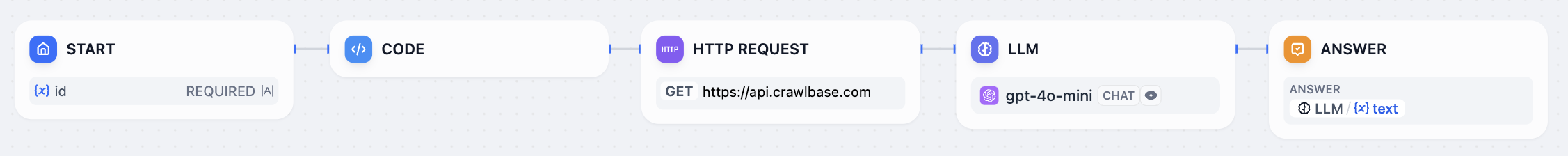

# Workflow & Chatflow

Source: https://docs.dify.ai/en/use-dify/build/workflow-chatflow

Build agentic workflows that combine AI models, tools, and logic into reliable, repeatable processes

## Why Agentic Workflows

AI models are powerful, but on their own they can be unpredictable—they may hallucinate, miss steps, or produce inconsistent outputs. In production environments, especially for teams and enterprises where reliability matters, you need more control over how AI operates.

Agentic workflows solve this by embedding AI capabilities within a structured, repeatable process. Instead of relying on a single model to figure everything out, you design a flow that orchestrates models, tools, and logic step by step—with clear conditions, checkpoints, and fallback paths.

The AI is still doing the heavy lifting, but within boundaries you define.

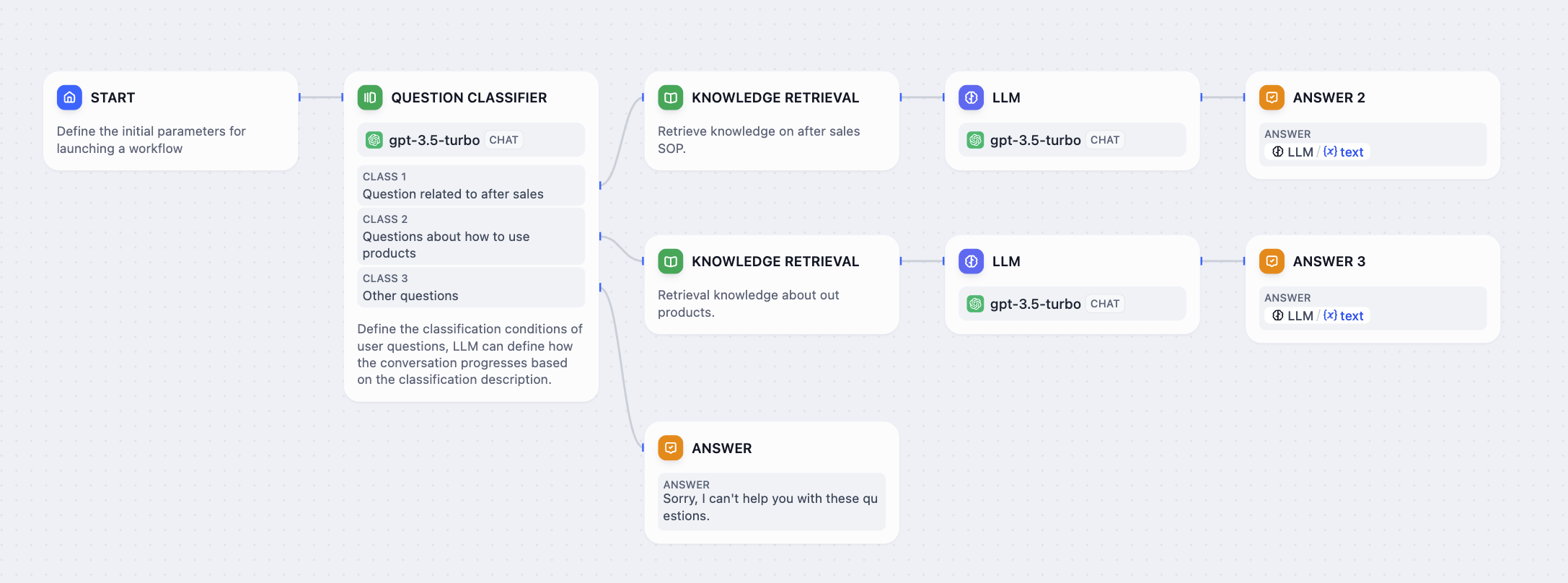

## Workflow vs. Chatflow

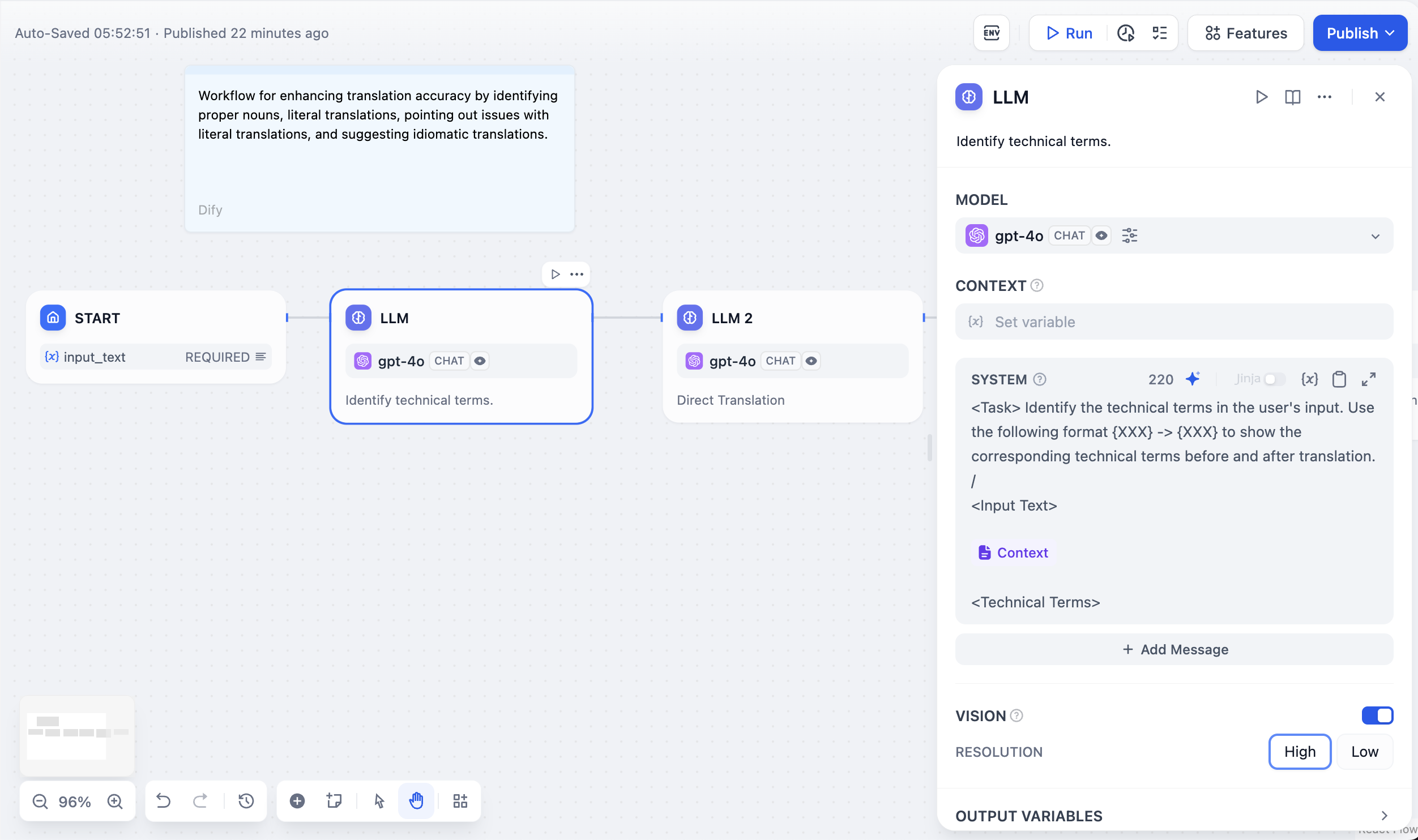

Dify offers two app types for building agentic workflows: **Workflow** and **Chatflow**. Both are built on a shared visual canvas and node system.

To build a flow, connect nodes that each handle a specific step, such as calling a model, retrieving knowledge, running code, or branching on conditions. Most of the work is **drag, connect, and configure**—code is only needed when your logic calls for it.

Their core difference is how users interact with the app:

* A **Workflow** runs once from start to finish.

It takes an input, processes it through the flow, and returns a result. Use it for tasks like automated report generation, data processing pipelines, or batch processing.

* A **Chatflow** adds a conversation layer.